Volume 27, Number 2

İrem Sağ¹ and Buket Kip-Kayabaş²*

¹Graduate School of Education, Open and Distance Learning Department, Anadolu University, Eskişehir, Türkiye; ²Lifelong Learning and Adult Education Department, Faculty of Education, Anadolu University, Eskişehir, Türkiye; *Corresponding Author

This study investigated the impact of generative artificial intelligence (GenAI)-supported blended instruction on the argumentative writing skills of first-year students in an English as a foreign language (EFL) teacher education program at a state university in Türkiye. The study was designed as a qualitative case study supported by quantitative data. It involved nine English language teaching students who had initially received traditional academic writing instruction. They completed a pre-test and then participated in a 4-week online writing course integrating GenAI tools within a blended learning environment. Data were collected through pre- and post-tests as well as semi-structured interviews and analyzed using thematic analysis. Findings indicate that GenAI contributed to key stages of the writing process, particularly in idea generation, text organization, argument development, and critical thinking. Participants reported increased confidence and engagement, benefiting from immediate, personalized feedback and flexible learning opportunities. However, concerns regarding reliability and overdependence also emerged. The study suggests that with proper teacher guidance, GenAI can function as a pedagogical scaffold in blended academic writing instruction, supporting learners’ higher-order thinking and autonomy. These insights contribute to understanding how emerging AI technologies can be effectively integrated into EFL contexts to enhance complex writing skills.

Keywords: generative artificial intelligence, blended learning, argumentative writing, critical thinking, English as a foreign language, academic writing instruction, open and distance learning

The rapid advancement of generative artificial intelligence (GenAI) technologies has created new opportunities and challenges in language education. Large language models such as ChatGPT are transforming teaching and learning practices by providing personalized, flexible, and interactive learning experiences for students (Holmes et al., 2019; Luckin et al., 2016). These technologies facilitate rapid access to linguistic input and provide instant feedback, adaptive support, and personalized learning pathways, transforming how students engage with complex skills such as academic writing (Kasneci et al., 2023). These innovations are particularly significant for English as a foreign language (EFL) learners, who often struggle with generating new ideas, text organization, argument development, and revision when producing extended written discourse (Hyland, 2003; Weigle, 2002). While traditional approaches to writing instruction may not provide timely or individualized guidance (Lee & Tajino, 2008), GenAI tools can potentially scaffold EFL learners’ higher-order thinking and reduce cognitive load.

At the same time, integrating GenAI into formal instruction raises important pedagogical and ethical considerations. Overreliance on AI-generated content can lead to superficial learning, academic integrity concerns, and decreased critical engagement with texts (Tlili et al., 2023; Zhai & Wibowo, 2023). Teachers, thus, play a crucial role in mentoring AI use to ensure that students critically evaluate, adapt, and improve outputs rather than copy them (Ertmer & Ottenbreit-Leftwich, 2010). In blended learning environments, where face-to-face and online instruction are combined, GenAI can be positioned as a dynamic support, offering immediate feedback and personalized recommendations while instructors facilitate reflective and interactive learning (Bozkurt & Sharma, 2020; Graham, 2013).

Argumentative writing represents one of the most cognitively demanding genres in academic contexts, requiring learners to construct logical claims, provide evidence, and address counterarguments (Nussbaum, 2008; Wingate, 2012). Despite the growing interest in digital writing tools, empirical research exploring how GenAI influences students’ experiences and development of argumentative writing skills remains limited (Sun, 2023). Understanding this integration is particularly critical in higher education programs preparing future English teachers, who will themselves mediate technology-integrated writing instruction.

To fill this gap, the study investigated the impact of GenAI-supported blended instruction on first-year English language teaching (ELT) students’ argumentative writing skills. Specifically, it explored students’ perceptions, experiences, and skill development when guided to use GenAI critically and reflectively during a structured 4-week online writing program. By examining both qualitative and quantitative outcomes, this research contributes to understanding how AI-integrated pedagogy can support complex writing and critical thinking in EFL contexts while preserving academic standards and learner autonomy.

This study adopted the Community of Inquiry framework (Garrison, Anderson, & Archer, 2000), widely recognized as the foundational model for blended and flipped learning environments. The Community of Inquiry framework posits that deep and meaningful learning occurs through the interaction of three core elements or presences: cognitive, social, and teaching. However, given the integration of generative AI, this study proposed an AI-enhanced Community of Inquiry perspective.

In this adapted framework, the role of teaching presence, traditionally held solely by the instructor, is distributed between the human teacher and the GenAI agents. While the human instructor designs the flipped learning pathway and facilitates high-level discourse, GenAI tools assume the role of intelligent agents that provide immediate feedback, error correction, and structural guidance. Concurrently, cognitive presence is amplified as students engage in dialectic interactions with AI to trigger exploration, integration, and resolution phases of critical thinking. This framework allows for analyzing how the synergy between human guidance and AI support orchestrates the development of argumentative writing skills within a distributed learning environment.

GenAI has recently gained importance as a transformative tool for second language writing instruction. Large language models such as ChatGPT can assist learners by generating ideas, refining vocabulary and syntax, and providing immediate feedback (Godwin-Jones, 2022; Law, 2024). Research has highlighted GenAI’s capacity to analyze learner profiles, offer adaptive support, and inform pedagogical decision-making (Holmes et al., 2019; Hu et al., 2025). It can also foster linguistic awareness by encouraging self-directed correction and metacognitive engagement (Kasneci et al., 2023). Although GenAI provides personalized feedback, creates content rapidly, and reduces teacher workload (Bozkurt, 2023), challenges remain regarding fair access, ethical use, and information reliability. In EFL contexts, GenAI is reported to support content development and text cohesion, helping students overcome cognitive barriers during drafting and revision (Guo et al., 2022; Xu & Jumaat, 2025). Nevertheless, researchers have cautioned against uncritical adoption, pointing to potential factual inaccuracies, overreliance, and reduced critical engagement (Tlili et al., 2023). These concerns emphasize the importance of teacher mentoring and explicit instruction in prompting and evaluating AI-generated output.

Digitalization has advanced blended learning, integrating face-to-face and online elements to combine flexibility with structured support (Graham, 2013). Its rapid adoption during the COVID-19 pandemic highlighted the need for teachers’ digital competence and pedagogical design to prevent poor usage of technology (Bozkurt & Sharma, 2020; Ertmer & Ottenbreit-Leftwich, 2010; Hattie, 2012). Learners must self-direct, while instructors guide inquiry and critical thinking (Anderson, 2008).

Flipped learning extends this shift by moving content exploration outside class and dedicating class time to collaboration and problem solving, strengthening deep understanding and 21st-century skills (Demirer & Aydın, 2017; Sun et al., 2023).

Blended learning, which integrates digital and face-to-face formats, is recognized for its flexibility, learner autonomy, and capacity to deliver timely, personalized feedback (Bozkurt & Sharma, 2020; Graham, 2013). Integrating GenAI into such environments creates new affordances for writing pedagogy. AI-driven tools can support brainstorming, outline generation, and revision cycles, while teachers provide higher-order feedback and maintain academic integrity (Han et al., 2023; Park & Doo, 2024). In writing instruction, studies show that AI-supported blended tasks can improve coherence and structure of argument by enabling immediate, personalized scaffolding (Guo et al., 2023; Suh et al., 2025). However, the degree of benefit depends on structured guidance, as unguided AI use may result in poor text generation and diminished reasoning.

Argumentative writing is among the most demanding genres for EFL learners due to its cognitive and rhetorical complexity: students must formulate clear claims, provide evidence, and anticipate counterarguments (Qin, 2013; Wingate, 2012). Traditional writing instruction often lacks individualized, timely feedback, limiting opportunities for repeated correction and critical thought (Hyland, 2003; Lee & Tajino, 2008). Emerging evidence suggests that, when critically integrated, GenAI can foster argument quality, text coherence, and metacognitive awareness in EFL writing (Sun, 2023; Wang, 2024). However, the current research base is fragmented and has rarely focused on the experiences of ELT students, a group that will influence future AI-mentored pedagogy.

Despite increasing interest in AI-supported academic writing, there remains a lack of empirical work exploring how GenAI-enhanced blended instruction shapes both skill development and perceptions among ELT students. This study sought to fill this gap by examining the impact of GenAI-supported blended learning on ELT students’ argumentative writing performance and their views on AI as a pedagogical scaffold. This study also assessed how digital applications supported by GenAI contribute to key stages of writing, such as idea generation, organization, developing strong arguments, and critical thinking. Accordingly, this paper sought answers to the following research questions:

This study employed a single holistic case study design (Yin, 2018) to provide an in-depth understanding of how GenAI-integrated blended instruction influences the argumentative writing processes, skill development, and perceptions of EFL learners within a real-life context. The case was defined as the first-year ELT writing cohort using GenAI tools within a blended learning environment over a 4-week period. This approach was selected to allow for a comprehensive examination of the bounded system, focusing on participants’ lived experiences and the pedagogical implications of the intervention. While the research was fundamentally qualitative, descriptive data from pre- and post-writing assessments were used to triangulate findings and provide evidence of performance changes, thereby enhancing the trustworthiness of the study.

Participants were nine first-year students enrolled in the English Language Teaching (ELT) program at a state university in Türkiye. All participants had previously completed an academic writing course using traditional instruction methods. They voluntarily joined a 4-week GenAI-supported blended writing course designed specifically for this study. The instruction was delivered via a learning management system (LMS) complemented by synchronous online sessions (Zoom), allowing participants to access instructional materials, submit assignments, and interact with AI tools while receiving real-time teacher support. The participants’ ages ranged from 18 to 23. The group included both female and male participants, with a majority being female. An examination of the participants’ birthplaces revealed that each student came from a different city.

The instructional procedures were grounded in a flipped learning model (Bergmann & Sams, 2012), used here as a specific modality of blended learning. This design necessitated a distinct division of labor between AI and human agents: students first engaged with GenAI tools (e.g., Argumate, QuillBot) and instructional content asynchronously via the Canvas LMS to generate initial ideas and drafts. Subsequently, synchronous Zoom sessions were dedicated to human scaffolding, where the instructor guided critical reflection, peer discussion, and the evaluation of AI-generated outputs, ensuring a transition from passive reception to active reasoning.

The weekly procedure was designed to progressively build participants’ argumentative writing skills.

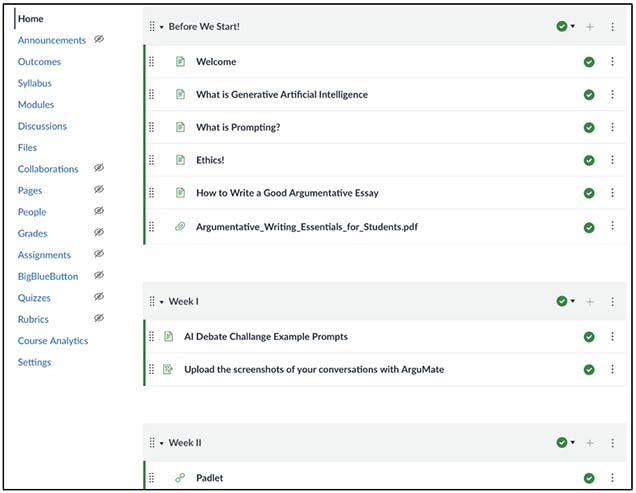

Before the lessons, participants were given short reading texts and informative videos, delivered through the LMS, on what GenAI is, what prompting is, ethics, and how to write a good argumentative essay. Figure 1 shows a screen from the learning management system (Canvas) used to deliver instructional materials.

Figure 1

Screenshot Showing Introductory Course Materials Offered on the Learning Management System

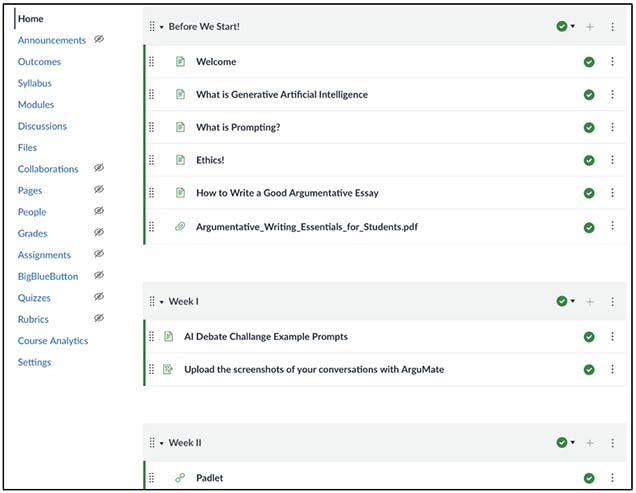

In the first week, participants carried out activities in which they generated arguments and counterarguments in debate with AI. They used the platform Argumate (https://argu-mate.com/), a GenAI system specifically designed for debate, to engage in debates with an AI agent, with the aim of developing critical thinking and the ability to form counterarguments. While producing counterarguments to participants’ claims, Argumate also provided feedback on their sentences. For the activity, three topics and example prompts were presented. Subsequently, participants were asked to debate with the AI agent on the topic they chose. The screenshot in Figure 2 shows the instructions students were given to complete the activity and the three topics they could choose from.

Figure 2

Screenshot of Student Instructions for the Argumate Activity

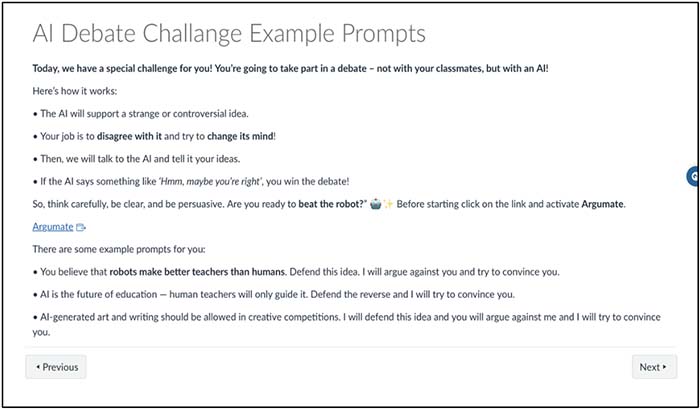

An example from one interaction is shown in Figure 3. It illustrates how a participant debated with the AI and how the AI responded.

Figure 3

Screenshot of One Partial Argumate Debate From This Study

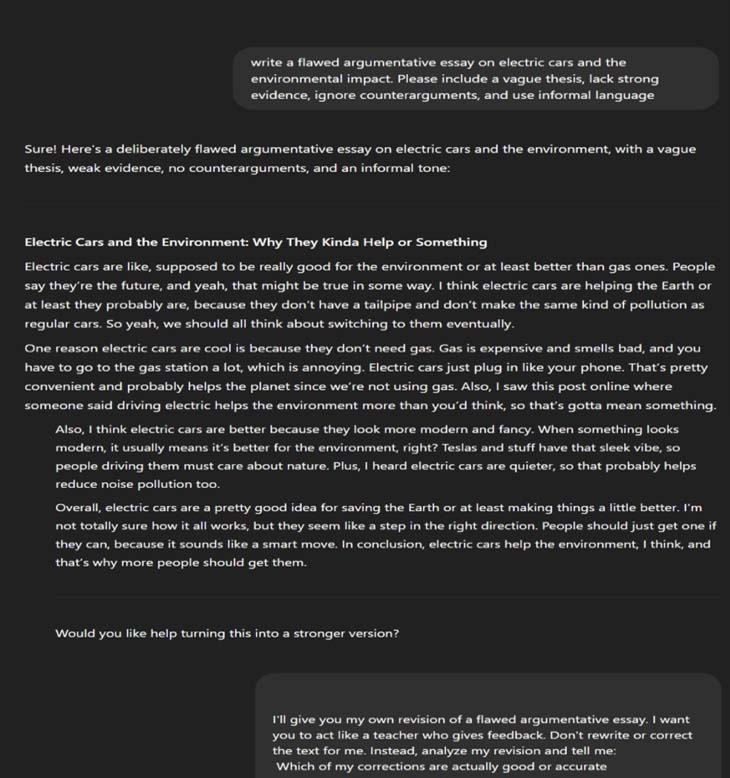

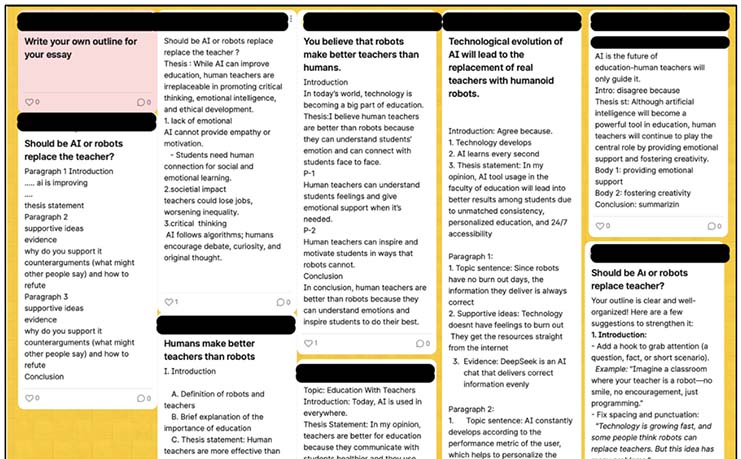

During the second week, participants created essay outlines on Padlet (https://padlet.com/) based on their Argumate discussions and used the GenAI tool QuillBot (https://quillbot.com/) to refine their drafts and receive feedback on structure and language. Figure 4 presents an example of the various essay outlines created on Padlet.

Figure 4

Screenshot of Sample Essay Outlines Created on Padlet

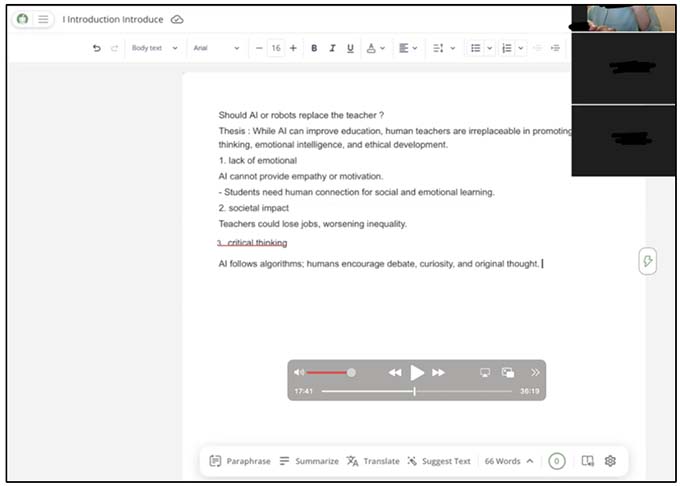

After the outlines were created, they were discussed. Based on the outlines, participants wrote their own argumentative essays on the QuillBot platform, where the writing and feedback processes were carried out. During the process, the GenAI tool corrected participants’ grammar mistakes and suggested alternative ideas through its feedback. After completing the process, participants engaged in an online discussion on the LMS, reviewing their experiences, what they wrote, and how the GenAI tool provided feedback. Figure 5 illustrates one participant’s work completed in QuillBot.

Figure 5

Screenshot of a QuillBot Activity

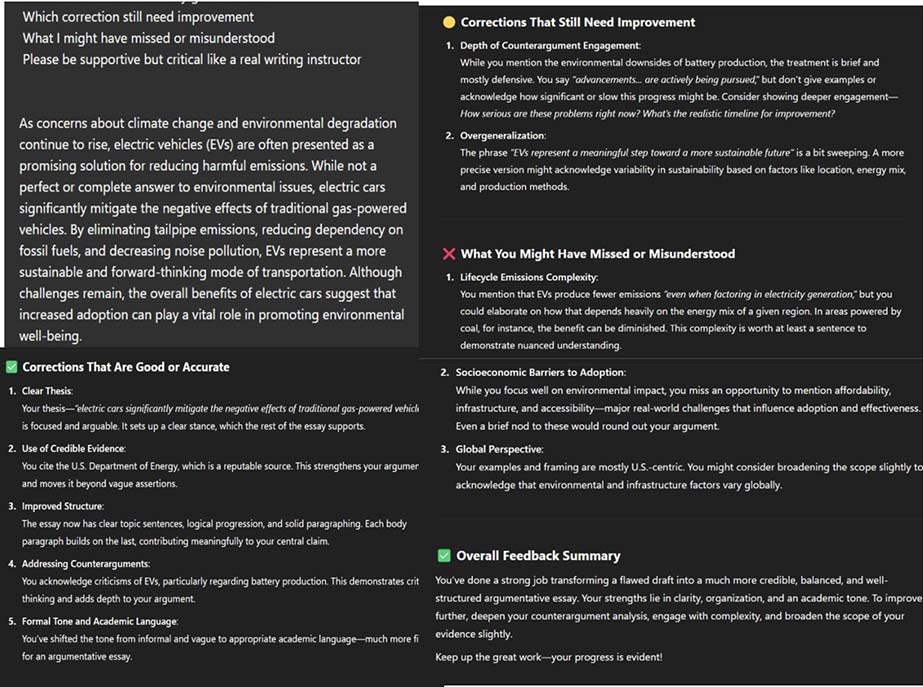

The third week focused on enhancing critical evaluation skills. Participants prompted ChatGPT (Version 4o) to generate a flawed argumentative essay and were then tasked with identifying and correcting its weaknesses. Afterward, participants were expected to give feedback to ChatGPT and provide alternative sentence suggestions. In response to participants’ feedback and corrections, ChatGPT gave the participants feedback as a mentor teacher. Figure 6 presents an example of one participant’s work from this activity.

Figure 6

Screenshot Example of the Flawed Argumentative Essay Activity

In the final week, a worksheet was provided on Google Docs, and an in-class discussion was carried out on a flawed sample essay embedded in the worksheet. After discussing, participants answered questions about the flawed essay, and they revised a poorly written sample essay, using AI for information-gathering as needed. In this way, they made the flawed essay more coherent and effective. Their final versions were checked on the platform Grammarly (https://www.grammarly.com/) for grammatical accuracy and then discussed in class.

Several data sources were used: pre- and post-writing test assessments, semi-structured interviews, and participants’ drafts from the argumentative writing activity. First, a pre-writing test was administered to participants, all of whom had already received academic writing instruction. Participants then took the GenAI-integrated blended lessons, which lasted four weeks, followed by the post-writing test. The pre- and post-writing tests required participants to produce argumentative essays on comparable topics. The essays were scored using an analytic rubric based on the International English Language Testing System (IELTS) writing exam, focusing on organization, argument strength, language accuracy, and coherence. In addition, the results were evaluated by two field experts. The results of the two tests were compared, and mean scores were calculated. Lastly, semi-structured interviews were conducted individually to explore participants’ perceptions of GenAI’s usefulness, limitations, and other implications (see Appendix for sample interview responses).

The qualitative data obtained from semi-structured interviews and reflective journals were analyzed using Braun and Clarke’s (2006) six-phase framework for thematic analysis. MAXQDA software (Version 24.9.1; https://www.maxqda.com) was used to facilitate the transcription, coding, and data management processes. This recursive analysis involved: (a) familiarizing ourselves with the data through repeated reading; (b) generating initial codes; (c) searching for themes; (d) reviewing themes against the dataset; (e) defining and naming themes; and (f) producing the final report. To ensure trustworthiness, two of us independently coded the data, and discrepancies were resolved through negotiation until consensus was reached.

Regarding the quantitative component, descriptive statistics were calculated for the pre- and post-test argumentative writing scores. In line with the single-case study design, these quantitative results were not used for statistical generalization but served as a method of data triangulation. They provided objective descriptive evidence to corroborate participants’ subjective perceptions of their skill development.
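As an illustration only, the descriptive comparison described above could be computed along the following lines. This is a minimal sketch, not the procedure actually used in the study: the scores are invented placeholders rather than study data, and the assumption that each essay receives one total rubric score from each of two raters, which are then averaged, is ours.

```python
from statistics import mean, stdev

# HYPOTHETICAL placeholder scores (NOT data from this study). Each tuple holds
# the two raters' total rubric scores for one participant (assumed layout).
pre_ratings = [(5.0, 5.5), (6.0, 6.0), (5.5, 6.0), (6.5, 6.0)]
post_ratings = [(6.0, 6.5), (6.5, 7.0), (6.0, 6.5), (7.0, 7.0)]


def rater_average(ratings):
    """Average the two raters' scores for each participant."""
    return [mean(pair) for pair in ratings]


def describe(scores):
    """Return basic descriptive statistics for one test occasion."""
    return {
        "n": len(scores),
        "mean": round(mean(scores), 2),
        "sd": round(stdev(scores), 2),  # sample standard deviation
        "min": min(scores),
        "max": max(scores),
    }


pre = rater_average(pre_ratings)
post = rater_average(post_ratings)

# Per-participant change from pre-test to post-test.
gains = [after - before for before, after in zip(pre, post)]

summary = {
    "pre": describe(pre),
    "post": describe(post),
    "mean_gain": round(mean(gains), 2),
}
print(summary)
```

Because the figures are used for triangulation rather than inference, the sketch stops at descriptive values and applies no significance test.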

This study was conducted in accordance with institutional and international ethical research standards. Ethical approval was obtained from the Ethics Committee of Anadolu University prior to data collection. All participants were informed about the purpose, scope, and voluntary nature of the study. Written consent was obtained from each participant, and anonymity and confidentiality were strictly maintained throughout the research process. Participants were assured their data would be used solely for academic purposes and that they could withdraw from the study at any stage without consequence.

The research aimed to examine whether GenAI tools make a difference in the writing skills of first-year ELT students, how GenAI contributes to improving their argumentative writing skills, and how it can be used to support the language learning process. The research findings are categorized under three main themes: (a) participants’ perceptions of the GenAI-supported writing process, (b) participants’ experiences of using GenAI in argumentative essay writing, and (c) the perceived role of GenAI in the language learning process. Thematic analysis revealed that participants most frequently emphasized the supportive and facilitative aspects of GenAI in the writing process. Key themes included feedback, vocabulary development, self-paced learning, and learner-centered approaches. Overall, participants expressed predominantly positive attitudes, noting that GenAI made writing easier and more efficient. However, a few participants expressed concerns about potential overreliance and the risk of reduced effort or laziness.

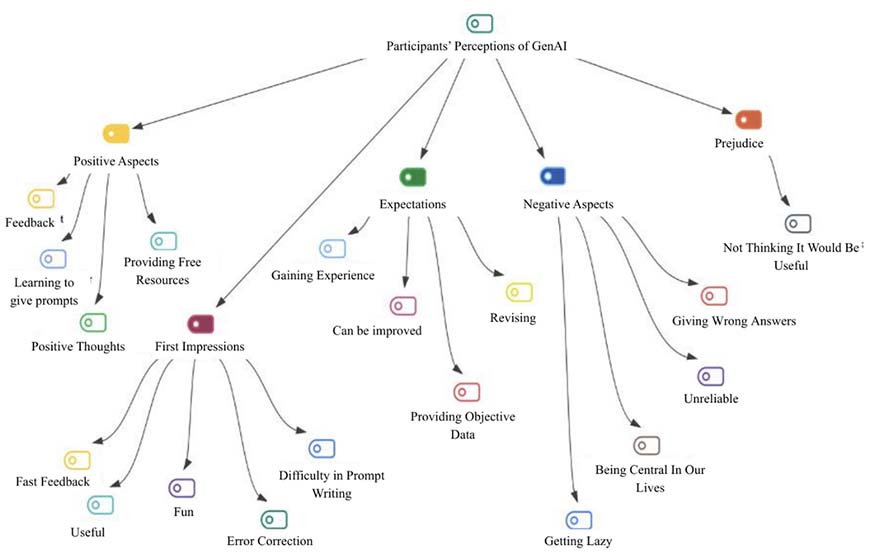

The analysis of semi-structured interviews and pre- and post-writing test results revealed participants’ perceptions of the GenAI-integrated writing process under five main themes: positive aspects, first impressions, expectations, negative aspects, and prejudices. Overall, participants displayed a positive attitude toward GenAI, emphasizing its supportive role in providing immediate feedback, correcting errors, and generating ideas.

Many participants found GenAI particularly useful for improving their writing through detailed, objective, and fast feedback. Participant 4 highlighted, “After writing a text, I send it to AI and ask it to correct my mistakes. This helps me learn from them.” Similarly, Participant 9 reported that tools such as Grammarly improved their confidence and made them more aware of their errors. Several participants also appreciated the accessibility of AI-based learning resources. Participant 8 mentioned that AI could generate customized materials and provide recommendations when learning other languages, saving time and cost.

Participants acknowledged the importance of effective prompting for meaningful interaction with AI. While some initially struggled with it, they later found that mastering prompts enhanced their productivity and understanding. Early experiences were often marked by excitement and surprise, which evolved into greater confidence and enjoyment as they became familiar with the tools.

Despite overall positive attitudes, a few participants expressed concerns about overreliance on AI, potential inaccuracies, and reduced effort. Some also reflected on AI’s increasing presence in daily life as a possible disadvantage. Interestingly, several participants who were initially skeptical later reported finding GenAI highly beneficial after direct experience. Figure 7 shows a thematic map of participants’ perceptions of GenAI.

Figure 7

Thematic Map of Participants’ Perceptions of Generative Artificial Intelligence

In conclusion, participants viewed generative AI as a facilitative, motivating, and pedagogically valuable support tool that enhanced both their confidence and engagement in the writing process.

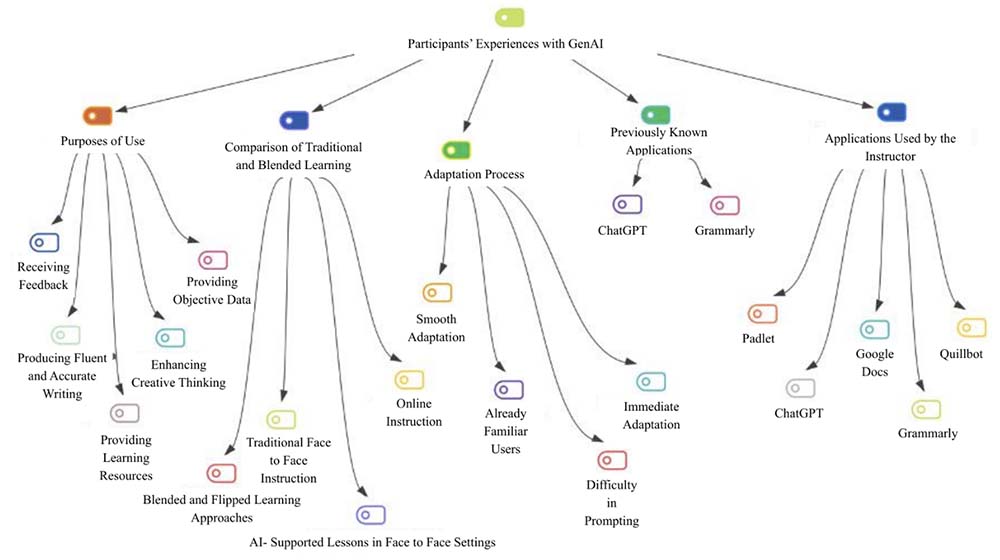

The analysis of semi-structured interviews, pre- and post-writing tests, and classroom data revealed five main themes related to participants’ experiences with GenAI: (a) purposes of use, (b) comparison with traditional/blended learning and learning-environment preferences, (c) adaptation process, (d) previously known tools, and (e) AI tools used by the instructor. The thematic analysis produced 20 codes in total.

Participants reported using GenAI tools for diverse purposes, most commonly to receive feedback, generate ideas, facilitate the writing process, and access resources. Participant 9 noted, “I wasn’t very confident, but after using tools like Grammarly, my confidence returned, and I started paying more attention to my mistakes.” Participant 4 stated that AI feedback helped identify errors, while Participant 1 emphasized AI’s contribution to creativity: “I usually struggle to find ideas.... Its examples helped me generate better ones.” Others described writing more fluent and academic texts through vocabulary enrichment and grammar correction.

Many participants perceived GenAI feedback as faster, more objective, and less time-consuming than traditional methods. They also highlighted the advantages of blended and flipped-learning models, where AI tools complemented classroom instruction. Several found these combinations more effective than conventional teaching, describing AI as explanatory and responsive.

Most participants adapted quickly to GenAI, particularly those already familiar with digital tools. A few initially struggled with prompting but improved with teacher support and preparatory materials. Participants also recognized their prior exposure to applications such as ChatGPT, Grammarly, QuillBot, Google Docs, and Padlet, noting that these tools served distinct pedagogical purposes. Guided classroom use of these platforms enhanced writing structure, collaboration, and confidence. As Participant 9 remarked, “Padlet helped me see my peers’ ideas, which expanded my perspective,” while Participant 3 shared, “QuillBot made my writing more academic and refined my sentences.” The map of participants’ experiences with GenAI-supported instruction is illustrated in Figure 8.

Figure 8

A Map of Participants’ Experiences with GenAI-Supported Instruction

To conclude, participants viewed GenAI as an efficient, supportive, and versatile resource that enriched their writing and blended-learning experiences.

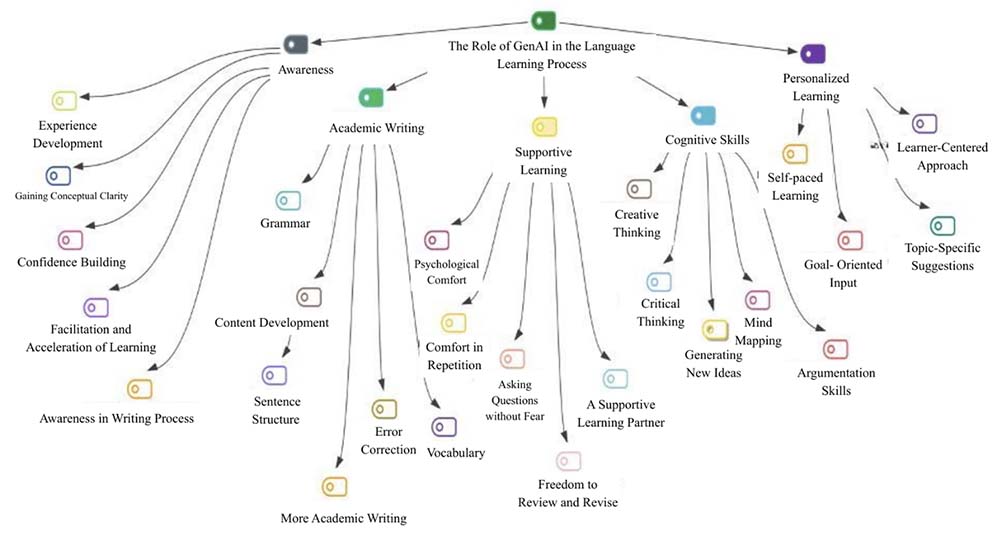

The analysis of semi-structured interviews, pre- and post-writing tests, and classroom data revealed five main themes concerning the role of GenAI in the language learning process: (a) awareness, (b) academic writing, (c) supportive learning, (d) cognitive skills, and (e) personalized learning. Across these themes, 25 codes were identified, illustrating the multifaceted contributions of GenAI to participants’ language learning development. Participants reported that using GenAI increased their awareness of their own learning processes, helping them gain confidence, develop self-regulation, and recognize their mistakes more effectively. Participant 4 reflected, “As I gained experience using it, I became more confident, especially in academic writing.” Similarly, Participant 3 noted, “I wasn’t confident before, and my writing scores were low, but after writing essays with AI and checking them, my sentence structures improved, and my grades increased.”

In addition, participants acknowledged that effective prompting enhanced their ability to communicate with AI tools, developing critical digital literacy. As Participant 1 explained, “When it didn’t give the answers I wanted, I realized the issue was in my prompts.” Moreover, AI’s guidance helped clarify conceptual ambiguities, allowing participants to enter lessons more prepared and engaged. As Participant 8 stated, “Thanks to the materials shared before class, we came in already knowing unfamiliar concepts.”

Participants emphasized GenAI’s significant contributions to academic writing, including improved grammatical accuracy, vocabulary range, organization, and idea development. They reported noticeable progress between their pre- and post-writing performances, particularly in grammar and lexical resource. A thematic map showing the role of GenAI in the language learning process is presented in Figure 9.

Figure 9

The Role of GenAI in the Language Learning Process

To summarize, findings indicate that GenAI served as an effective learning partner, promoting greater awareness, linguistic competence, and motivation. It not only enhanced participants’ writing performance but also fostered a more autonomous, reflective, and cognitively engaging learning experience.

The findings of this study indicate that participants perceived GenAI tools as supportive, guiding, and motivating resources for academic writing. They valued GenAI for providing rapid, objective feedback, identifying errors, and assisting in text organization, findings consistent with Sun (2023), who emphasized ChatGPT’s ability to support learners in idea generation, structure building, and academic language use.

A major contribution of this study lies in demonstrating how GenAI reduces writing anxiety and enhances confidence. Participants reported feeling less judged and more willing to experiment with ideas when interacting with AI than in traditional classrooms. This observation aligns with Barrot (2023), who found that ChatGPT fosters emotional support and engagement by reducing fear of making mistakes. When integrated into a pedagogically structured course, GenAI can thus create a psychologically safe learning environment that encourages active participation.

The results also show that participants personalized their interaction with GenAI to meet individual needs, consulting it before, during, and after writing for idea generation, language refinement, and revision. This reflects learner agency and autonomy, supporting previous work by Barrot (2023) and Erdem-Aydın et al. (2025), who highlighted GenAI’s potential to enable personalized, learner-centered instruction. Especially in large or remote classes, GenAI can act as a valuable partner for educators to support individualized learning.

Another important finding concerns the development of critical thinking. In debate-based tasks using the Argumate tool, participants produced counterarguments, questioned AI responses, and reconstructed ideas, shifting from passive reception to active reasoning. This finding also responds to calls in the literature for pedagogically grounded AI applications in higher education (Zawacki-Richter et al., 2019).

Participants also reported noticeable improvement in academic writing competence, particularly in grammar, vocabulary range, organization, and tone. They described GenAI as a virtual tutor and collaborator that scaffolds planning, drafting, and revision, echoing Chan and Lee (2023) and Dwivedi et al. (2023), who found that AI-assisted writing enhances textual quality and efficiency in higher education.

Despite these benefits, participants expressed concerns about ethical issues, misinformation, and overreliance, confirming previous warnings by Erdem-Aydın et al. (2025). Some questioned the accuracy of AI outputs, indicating emerging critical awareness and the need for explicit guidance in evaluating AI-generated content.

Overall, the findings show that GenAI functions not only as a writing tool but as a pedagogical and cognitive scaffold that enhances awareness, reflection, and self-regulation. Interpreted through the lens of the AI-enhanced Community of Inquiry framework, these results illustrate a distributed teaching presence. While GenAI assumed the role of providing hard scaffolding through immediate, structural feedback and error correction, the human instructor maintained the critical soft scaffolding by fostering emotional support and guiding complex discourse. This supports Kasneci et al. (2023), who described GenAI as an interactive system designed to facilitate learning through engagement. When supported by teacher guidance, participants reported greater efficiency and ethical awareness—consistent with Bozkurt (2023) and Erdem-Aydın et al. (2025), who emphasized the effectiveness of blended, teacher-mediated AI integration.

The results demonstrate how GenAI can serve as an innovative tool for learner support, aligning with the theme “Innovations in GenAI for learner support.” Specifically, the study illustrates how generative AI tools such as Argumate and QuillBot can be effectively integrated into blended learning environments to enhance engagement and learning outcomes. By enabling participants to receive immediate and personalized feedback through GenAI applications, the study also resonates with the special issue’s theme of “Personalization and Feedback.” Moreover, the findings highlight that GenAI is not merely a technical tool but a pedagogically meaningful system that reduces learners’ fear of making mistakes and fosters psychological comfort by allowing them to learn through their own experiences. Finally, the 4-week blended course designed for this research provides a practical model for integrating GenAI applications into distributed learning environments, offering both pedagogical and methodological insights for future implementation.

This study examined the impact of GenAI-supported blended instruction on first-year English as a foreign language (EFL) participants’ argumentative writing skills and perceptions. The integration of GenAI tools within a structured blended learning module led to measurable improvements in writing quality, particularly in organization and argument strength, while fostering participants’ confidence and metacognitive engagement. Qualitative findings showed that participants valued personalized support, immediate non-judgmental feedback, and opportunities to critically evaluate AI-generated suggestions. At the same time, concerns regarding reliability, accuracy, and the risk of overreliance highlight the need for careful pedagogical framing.

These results contribute to the growing body of research on AI integration in higher education, especially in teacher education contexts where future educators are learning how to incorporate emerging technologies responsibly. The findings indicate that teacher mediation, explicit AI literacy training, and guided prompt engineering are key to harnessing GenAI’s benefits while mitigating potential drawbacks. Blended learning offers a flexible space for such integration, balancing digital affordances with interactive human guidance.

Several limitations should be acknowledged. The study involved a small sample size and was conducted within a single institutional context, limiting the generalizability of results. In addition, the relatively short instruction period may have influenced the depth of skill transfer. Future research could adopt larger, more diverse samples and longitudinal designs to explore sustained development of writing competence and evolving perceptions of AI use. Investigating how pre-service teachers later integrate GenAI into their own instructional practice also warrants attention.

Despite these limitations, the study provides empirical evidence that thoughtfully designed AI-supported blended learning can strengthen complex writing skills and foster critical digital literacy in EFL education. The findings inform both classroom practice and policy, offering guidance for institutions and teacher educators aiming to integrate GenAI tools in ways that enhance learning while maintaining academic integrity and intellectual autonomy.

The findings of this study carry several pedagogical and institutional implications for the integration of GenAI in language education, especially within open and distance learning contexts. First, the results emphasize that GenAI tools can serve as adaptive and confidence-building companions for learners, supporting not only linguistic development but also emotional engagement and self-efficacy. This suggests that language educators should incorporate GenAI strategically to enhance learner autonomy, reduce writing anxiety, and foster critical reflection.

Second, the study highlights the importance of teacher mediation. Participants reported the most productive outcomes when GenAI was used under guided supervision with structured prompts and preparatory materials. This underscores the need for professional development programs that equip educators with competencies in prompt design, ethical AI use, and data interpretation. Institutions should therefore focus on capacity-building frameworks that enable teachers to integrate AI meaningfully rather than mechanically.

Third, the results reveal that GenAI can help address the personalization gap in large-scale and distance education settings. By offering individualized feedback, differentiated scaffolding, and context-sensitive learning support, AI systems can complement human instruction and provide equitable access to academic writing support.

Finally, future research should explore policy frameworks and ethical guidelines that balance innovation with responsibility. Ensuring transparency, fairness, and academic integrity will be crucial for sustaining trust in AI-mediated learning environments. When embedded within pedagogically sound and ethically aware systems, GenAI has the potential to transform writing instruction into a more inclusive, reflective, and learner-centered process.

The authors acknowledge that, during the preparation of this manuscript, the paper was proofread and edited with the assistance of DeepL and Gemini. The authors reviewed all AI-generated suggestions to ensure accuracy and retain full responsibility for the final content.

Anderson, T. (Ed.). (2008). The theory and practice of online learning (2nd ed.). Athabasca University Press.

Barrot, J. S. (2023). Using ChatGPT for second language writing: Pitfalls and potentials. Assessing Writing, 57, Article 100745. https://doi.org/10.1016/j.asw.2023.100745

Bergmann, J., & Sams, A. (2012). Flip your classroom: Reach every student in every class every day (pp. 120-190). International Society for Technology in Education.

Bozkurt, A. (2023). Generative artificial intelligence (AI) powered conversational educational agents: The inevitable paradigm shift. Asian Journal of Distance Education, 18(1), 198-204. https://www.asianjde.com/ojs/index.php/AsianJDE/article/view/718

Bozkurt, A., & Sharma, R. C. (2020). Emergency remote teaching in a time of global crisis due to coronavirus pandemic. Asian Journal of Distance Education, 15(1), i-vi. https://doi.org/10.5281/zenodo.3778083

Braun, V., & Clarke, V. (2006). Using thematic analysis in psychology. Qualitative Research in Psychology, 3(2), 77-101. https://doi.org/10.1191/1478088706qp063oa

Chan, C. K. Y., & Lee, K. K. W. (2023). The AI generation gap: Are Gen Z students more interested in adopting generative AI such as ChatGPT in teaching and learning than their Gen X and millennial generation teachers? Smart Learning Environments, 10(1). https://doi.org/10.1186/s40561-023-00269-3

Demirer, V., & Aydın, B. (2017). Ters yüz sınıf modeli çerçevesinde gerçekleştirilmiş çalışmalara bir bakış: İçerik analizi [A look at studies carried out within the framework of the flipped classroom model: A content analysis]. Eğitim Teknolojisi Kuram ve Uygulama, 7(1), 57-82. https://doi.org/10.17943/etku.288488

Dwivedi, Y. K., Kshetri, N., Hughes, L., Slade, E. L., Jeyaraj, A., Kar, A. K., Baabdullah, A. M., Koohang, A., Raghavan, V., Ahuja, M., Albanna, H., Albashrawi, M. A., Al-Busaidi, A. S., Balakrishnan, J., Barlette, Y., Basu, S., Bose, I., Brooks, L., Buhalis, D., ... Wright, R. (2023). Opinion paper: "So what if ChatGPT wrote it?" Multidisciplinary perspectives on opportunities, challenges and implications of generative conversational AI for research, practice and policy. International Journal of Information Management, 71, Article 102642. https://doi.org/10.1016/j.ijinfomgt.2023.102642

Erdem-Aydın, İ., Çalışkan, H., & Usta, İ. (2025). Açık ve uzaktan öğrenmede ChatGPT türü yapay zekâ destekli uygulamaların kullanım alanlarının belirlenmesi [Determining the use of ChatGPT-type artificial intelligence supported applications in open and distance learning]. Türk Eğitim Bilimleri Dergisi, 23(1), 666-697. https://doi.org/10.37217/tebd.1576430

Ertmer, P. A., & Ottenbreit-Leftwich, A. T. (2010). Teacher technology change: How knowledge, confidence, beliefs, and culture intersect. Journal of Research on Technology in Education, 42(3), 255-284. https://doi.org/10.1080/15391523.2010.10782551

Garrison, D. R., Anderson, T., & Archer, W. (2000). Critical inquiry in a text-based environment: Computer conferencing in higher education. The Internet and Higher Education, 2(2-3), 87-105. https://doi.org/10.1016/S1096-7516(00)00016-6

Godwin-Jones, R. (2022). Partnering with AI: Intelligent writing assistance and instructed language learning. Language Learning & Technology, 26(2), 5-24. https://doi.org/10.64152/10125/73474

Graham, C. R. (2013). Emerging practice and research in blended learning. In M. G. Moore (Ed.), Handbook of distance education (3rd ed., pp. 333-350). Routledge. https://doi.org/10.4324/9780203803738.ch21

Guo, K., Wang, J., & Chu, S. K. W. (2022). Using chatbots to scaffold EFL students’ argumentative writing. Assessing Writing, 54, Article 100666. https://doi.org/10.1016/j.asw.2022.100666

Guo, K., Zhong, Y., Li, D., & Chu, S. K. W. (2023). Effects of chatbot-assisted in-class debates on students’ argumentation skills and task motivation. Computers & Education, 203, Article 104862. https://doi.org/10.1016/j.compedu.2023.104862

Han, J., Yoo, H., Kim, Y., Myung, J., Kim, M., Lim, H., Kim, J., Lee, T. Y., Hong, H., Ahn, S.-Y., & Oh, A. (2023). RECIPE: How to integrate ChatGPT into EFL writing education. In D. Spikol (Chair), Proceedings of the 10th ACM Conference on Learning @ Scale (pp. 416-420). ACM. https://doi.org/10.1145/3573051.3596200

Hattie, J. (2012). Visible learning for teachers: Maximizing impact on learning. Routledge.

Holmes, W., Bialik, M., & Fadel, C. (2019). Artificial intelligence in education: Promises and implications for teaching and learning. Center for Curriculum Redesign.

Hu, X., Xu, S., Tong, R., & Graesser, A. (2025). Generative AI in education: From foundational insights to the Socratic playground for learning. arXiv. https://doi.org/10.48550/arXiv.2501.06682

Hyland, K. (2003). Second language writing. Cambridge University Press.

Kasneci, E., Sessler, K., Küchemann, S., Bannert, M., Dementieva, D., Fischer, F., Gasser, U., Groh, G., Günnemann, S., Hüllermeier, E., Krusche, S., Kutyniok, G., Michaeli, T., Nerdel, C., Pfeffer, J., Poquet, O., Sailer, M., Schmidt, A., Seidel, T., ... Kasneci, G. (2023). ChatGPT for good? On opportunities and challenges of large language models for education. Learning and Individual Differences, 103, Article 102274. https://doi.org/10.1016/j.lindif.2023.102274

Law, E. L. (2024). Application of generative artificial intelligence (GenAI) in language teaching and learning: A scoping literature review. Computers and Education Open, 6, Article 100174. https://doi.org/10.1016/j.caeo.2024.100174

Lee, S. C. N., & Tajino, A. (2008). Understanding students’ perceptions of difficulty with academic writing for teacher development: A case study of the University of Tokyo writing program. Kyoto University Research Information Repository, 14, 1-11.

Luckin, R., Holmes, W., Griffiths, M., & Forcier, L. B. (2016). Intelligence unleashed: An argument for AI in education. Pearson Education.

Nussbaum, E. M. (2008). Using argumentation vee diagrams (AVDs) for promoting critical thinking and argumentative writing. Journal of Educational Psychology, 100(3), 549-565. https://doi.org/10.1037/0022-0663.100.3.549

Park, Y., & Doo, M. Y. (2024). Role of AI in blended learning: A systematic literature review. The International Review of Research in Open and Distributed Learning, 25(1), 164-196. https://doi.org/10.19173/irrodl.v25i1.7566

Qin, J. (2013). Applying the Toulmin model in teaching L2 argumentative writing. The Journal of Language Teaching and Learning, 3(2), 21-29. https://jltl.com.tr/index.php/jltl/article/view/109

Suh, S., Bang, J., & Han, J. W. (2025). Developing critical thinking in second language learners: Exploring generative AI like ChatGPT as a tool for argumentative essay writing. arXiv. https://doi.org/10.48550/arXiv.2503.17013

Sun, T. (2023). The potential use of generative AI in ESL writing assessment: A case study of IELTS writing tasks. Irish Journal of Technology Enhanced Learning, 7(2), 42-51. https://doi.org/10.22554/ijtel.v7i2.137

Sun, Y., Zhao, X., Li, X., & Yu, F. (2023). Effectiveness of the flipped classroom on self-efficacy among students: A meta-analysis. Cogent Education, 10(2). https://doi.org/10.1080/2331186X.2023.2287886

Tlili, A., Shehata, B., Adarkwah, M. A., Bozkurt, A., Hickey, D. T., Huang, R., & Agyemang, B. (2023). What if the devil is my guardian angel: ChatGPT as a case study of using chatbots in education. Smart Learning Environments, 10, Article 15. https://doi.org/10.1186/s40561-023-00237-x

Wang, C. (2024). Exploring students’ generative AI-assisted writing processes: Perceptions and experiences from native and nonnative English speakers. Technology, Knowledge and Learning, 30, 1825-1846. https://doi.org/10.1007/s10758-024-09744-3

Weigle, S. C. (2002). Assessing writing. Cambridge University Press.

Wingate, U. (2012). “Argument!” Helping students understand what essay writing is about. Journal of English for Academic Purposes, 11(2), 145-154. https://doi.org/10.1016/j.jeap.2011.11.001

Xu, T., & Jumaat, N. F. (2025). Enhancing critical thinking in EFL writing through an AI-supported blended learning model. International Journal of Academic Research in Progressive Education and Development, 14(1), 1975-1994.

Yin, R. K. (2018). Case study research and applications: Design and methods (6th ed.). Sage.

Zawacki-Richter, O., Marín, V. I., Bond, M., & Gouverneur, F. (2019). Systematic review of research on artificial intelligence applications in higher education: Where are the educators? International Journal of Educational Technology in Higher Education, 16, Article 39. https://doi.org/10.1186/s41239-019-0171-0

Zhai, C., & Wibowo, S. (2023). A systematic review on artificial intelligence dialogue systems for enhancing English as foreign language students’ interactional competence in the university. Computers and Education: Artificial Intelligence, 4, Article 100134. https://doi.org/10.1016/j.caeai.2023.100134

Note: The interviews from which the responses below are drawn were conducted in Turkish and audio-recorded. The spoken responses were transcribed and subsequently translated into English by the authors with the assistance of AI translation tools. Minor grammatical and structural adjustments were made during translation to ensure clarity and readability for an English-speaking audience, which accounts for the organized and structured appearance of the responses.

1. What was your experience using generative AI? What were your first impressions?

“I found the experience both fun and interesting. We had to use an AI tool for argumentative writing and debate. It was very impressive to interact with a tool that could recall information instantly, unlike humans. It helped me learn to express my thoughts more clearly, so the AI could also respond with counterarguments. At first, I didn’t think it would be very useful because I already had practice writing essays for IELTS and had attended writing courses. But now, I see that it is truly very useful. I gained new perspectives on how to build structure, create an outline, and generate ideas effectively.”

2. What were the first challenges or positive aspects?

“The biggest challenge was writing prompts. Initially, I hadn’t realized how important detailed prompts were. I was using vague phrases like ‘Write about this.’ Now, when I provide context, for example, ‘I am a first-year English language teaching student, and I am writing an argumentative essay on this topic,’ I get much more relevant and high-quality responses. I had used it for school projects before, but not seriously. I usually got help from Google. However, thanks to this course, I learned to use AI tools more purposefully.”

3. How long did it take to get used to it?

“It didn’t take me long to get used to it because I had used it a little before. But in this course, I learned to use it more systematically.”

4. How confident were you before using these tools? Did your perception change?

“I was already confident. I was one of the best students at my school, and I was getting good grades at university too. Still, AI made my writing more structured and professional.”

5. Which was more beneficial: traditional methods or AI-supported writing?

“AI-supported writing was more beneficial because it explained why it used a certain structure. Traditional methods are based more on inference and experience. In contrast, AI can provide explanations according to your level. You can even ask it to simplify the topic.”

6. How did AI tools contribute to your English writing skills?

“It improved my grammar, vocabulary, and academic writing. My academic vocabulary was particularly lacking. Seeing how AI writes formally gave me the opportunity to learn these expressions and apply them in my own writing.”

7. Did the suggestions from AI make your writing more fluent or accurate?

“Yes, my writing became more fluent and accurate. I improved my paragraphs with the suggestions from the AI. I used more academic and clearer language.”

8. Which was more effective: traditional or AI-supported activities?

“Both are valuable. Traditional activities provide interaction with the teacher and friends, which is important. But AI gives faster and more detailed feedback. The most effective is the combination of the two, the blended model.”

9. Do you think AI feedback was effective?

“Yes, it contributed a lot. I especially improved in structuring and planning my paragraphs. I used to write more intuitively before. Now, I can create a plan like a mind map before writing.”

10. Was there a decrease in your writing mistakes?

“Yes, there was a decrease. I am more conscious about punctuation, transition words, and sentence structures now. My writing has become clearer and more organized.”

11. Would you consider using these tools in the future?

“Yes, I would. It is much more comfortable to proceed with AI, especially when I hesitate to ask questions. It allows me to learn at my own pace.”

12. Do you believe regular use could lead to lasting improvement?

“Yes, I do. AI provides opportunities for repetition and offers continuous feedback, which supports improvement.”

13. Did AI tools speed up or facilitate your writing process?

“Yes. Thanks to AI, I can plan and create my texts faster. It clarifies what I am going to write in advance.”

14. Are you able to write in a more organized way now?

“Definitely. The structures became more distinct. The way AI demonstrated paragraph organization, idea flow, and transitions was very useful.”

15. What is the most important thing you learned about AI?

“AI is very effective not just for writing, but also for supporting the learning process. It doesn’t just show you what it wrote, but also explains why it wrote it that way. This makes it easier to understand and learn the language.”

AI as a Pedagogical Scaffold: Enhancing English as a Foreign Language Argumentative Writing and Critical Thinking in a Distributed Learning Environment by İrem Sağ and Buket Kip-Kayabaş is licensed under a Creative Commons Attribution 4.0 International License.