Volume 27, Number 2

Taoufik Boulhrir1*, Hanan Ghreir2, Mahmoud Hamash3, and Michael Robert4

1Fordham University, USA; 2Universiti Teknologi Malaysia, Malaysia; 3Dublin City University, Ireland; 4American International University, Kuwait; *Corresponding Author

This scoping review examines how artificial intelligence (AI) has been conceptualized and applied in adaptive learning and learning analytics in K–12 online and distance education between 2020 and 2025. Following Arksey and O’Malley’s framework and reported in accordance with PRISMA-ScR, we analyzed 21 empirical studies to explore thematic patterns, methodological trends, and research gaps. Most studies reported gains for learners in engagement, motivation, and self-regulation. However, reported benefits were unevenly distributed and often favored better-resourced learners, particularly in contexts where teacher mediation and institutional support were modest. AI was explicitly integrated in two-thirds of the studies, yet definitional inconsistencies blurred distinctions between genuine intelligence and automated adaptation. Quantitative designs were predominant, largely focusing on performance outcomes derived from system logs and test data. While a small but growing number of mixed-methods studies have focused on learner experience and teacher mediation, the field remains constrained by limited methodological consistency and insufficient clarity regarding AI mechanisms. The findings highlight the importance of clearer conceptual frameworks, research designs that are participatory and context-sensitive, and ethical approaches that center teacher expertise and learner participation. This review argues that the transformative potential of AI for adaptive learning depends less on technological sophistication than on equitable, pedagogically informed integration between human judgment and automated systems.

Keywords: artificial intelligence in education, AIED, adaptive learning, personalized learning, artificial intelligence, K–12 online learning, learning analytics, equity, scoping review

K–12 education has undergone accelerated digital transformation since 2020, particularly following the COVID-19 pandemic, when emergency remote instruction gave way to sustained online, virtual, and distance arrangements. Early syntheses of this period document large-scale shifts to digital platforms alongside uneven capacity, variable access around the world, and concerns about engagement and equity, which continue to shape online learning ecosystems today (Huck & Zhang, 2021). At the same time, international guidance urges governments and educational technology providers to build safe, inclusive, and evidence-informed digital infrastructures while weighing the opportunities and risks of artificial intelligence (AI) in education (Global Education Monitoring Report Team, 2023). Against this backdrop, it is timely to ask how adaptive learning (AL) and learning analytics (LA) are being investigated and deployed in K–12 online and virtual settings, and with what implications for learners, educators, and systems.

Conceptual and pedagogical work highlights that equity, accessibility, and ethics are not peripheral but central to responsible adoption of AI in education. From a pedagogical perspective, human-centered approaches emphasize that AL and LA should be designed with teachers and learners, the main stakeholders, in mind and aligned to classroom realities rather than displacing them (Shum et al., 2019). From an equity and governance perspective, international reviews further show that policy capacity, infrastructure, and privacy safeguards vary widely, shaping whether AI-enabled systems reduce or reproduce inequities across contexts (Aguerrebere et al., 2022). At the level of learner development, particularly in elementary grades, Boulhrir and Ait Bouch (2025) argue that developmental appropriateness, teacher mediation, and data protection are essential conditions for use. Other scholars similarly argue for stronger alignment between adaptive mechanisms and defensible theories of learning (Boulhrir et al., 2026). Although AI systems are often presented as closed loops approximating one-to-one tutoring, their effectiveness depends on explicit connections between learner models, instructional models, and content models (Martin et al., 2020; Wang et al., 2020). Methodologically, measurement choices are especially influential because they shape what learner characteristics are inferred and, in turn, what aspects of instruction are adapted. Theoretically, however, many studies provide limited justification for how particular learner characteristics are linked to specific feedback or institutional pathways (Boulhrir, 2025; Maier & Klotz, 2022; Tretow-Fish & Khalid, 2023).

Several recent reviews provide important foundations for understanding AL, LA, and AI in education, yet they differ in scope and emphasis from the present study. Martin et al. (2020) synthesize AL research across contexts and technologies prior to the widespread normalization of K–12 online schooling, while Rundquist et al. (2024) focus specifically on LA in K–12 mathematics, with attention to teaching and learning impacts within the subject area. More recently, Yim and Su (2025) offer a broad scoping review of AI learning tools across K–12 settings, emphasizing tool types and adoption trends. Building on these syntheses, the present review narrows the analytical lens to AI-enabled AL and LA in K–12 online, virtual, and distance education, with explicit attention to AI mechanisms, methodological patterns, and equity implications.

Accordingly, this scoping review aims to systematically map empirical research on AI-enabled AL and LA in K–12 online, virtual, and distance education published between 2020 and 2025. Specifically, it investigates (a) what research methods have been used, (b) what themes, outcomes, and technological tools are reported, (c) the extent to which AI is explicitly emphasized, and (d) what gaps persist for future inquiry. It adopts a mechanism-aware lens that distinguishes signals, models, decisions, and targets to clarify how AI is embedded in study designs and system architectures (Romero Alonso et al., 2024; Wang et al., 2020). We expect this contribution to provide a consolidated account of current trends and gaps, generate commensurable claims for researchers, offer mechanism-linked guidance for practitioners, and identify governance priorities for policymakers concerned with equitable and responsible adoption (Global Education Monitoring Report Team, 2023; Yim & Su, 2025). Hence, the article proceeds as follows. The next section details Arksey and O’Malley’s (2005) framework, which guided our methods, including sources, eligibility, screening, and charting. The results synthesize patterns by AI mechanism and research design. The discussion considers implications for pedagogy, equity, and responsible data use. The conclusion outlines priorities for future research in AI-enabled K–12 online education.

Research on AL and LA in K–12 online education is grounded in three interconnected foundations: system components, pedagogical alignment, and evaluation frameworks. Together, these perspectives explain how AI-enabled personalization is conceptualized and studied and why a focused mapping of the field is warranted.

A common way among researchers to classify system design is by distinguishing the sources that a system interprets from the targets it adapts. These sources typically include learner knowledge states or preferences inferred by AI models, while targets involve feedback, navigation, or activity selection (Maier & Klotz, 2022; Martin et al., 2020). On the assessment side, item response theory (IRT) models underpin many operational platforms, whereas sequence models such as Bayesian knowledge tracing and deep knowledge tracing are frequently discussed but are less often deployed at scale (Ihichr et al., 2024). In AL, often in conjunction with LA, these architectures aim to approximate one-to-one tutoring by estimating learner state and adjusting instructional sequences, task difficulty, or feedback (Wang et al., 2020). Despite this technical sophistication, many systems are described as closed loops, with limited attention to the pedagogical reasoning that governs when and why specific adaptations occur.
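To make the idea of estimating learner state and then adapting a target more concrete, the following is a minimal sketch of a single Bayesian knowledge tracing update followed by a simple adaptation rule. It is an illustration only: the slip, guess, and learning probabilities, the thresholds, and the names `bkt_update` and `choose_adaptation` are assumptions chosen for this example and are not taken from any of the reviewed systems.

```python
def bkt_update(p_know, correct, p_slip=0.1, p_guess=0.2, p_learn=0.15):
    """One Bayesian knowledge tracing step for a single skill.

    p_know  : prior probability that the learner has mastered the skill
    correct : whether the observed response was correct
    The slip, guess, and learn parameters are illustrative assumptions.
    """
    if correct:
        # A correct answer comes from mastery without a slip, or from a guess.
        known_and_observed = p_know * (1 - p_slip)
        unknown_and_observed = (1 - p_know) * p_guess
    else:
        # An incorrect answer comes from a slip, or from non-mastery without a guess.
        known_and_observed = p_know * p_slip
        unknown_and_observed = (1 - p_know) * (1 - p_guess)
    posterior = known_and_observed / (known_and_observed + unknown_and_observed)
    # Learning transition: an unmastered skill may become mastered after practice.
    return posterior + (1 - posterior) * p_learn


def choose_adaptation(p_know, mastery_threshold=0.95, remediation_threshold=0.4):
    """Hypothetical decision rule mapping the learner-state estimate to a target."""
    if p_know >= mastery_threshold:
        return "advance"
    if p_know < remediation_threshold:
        return "remediate"
    return "practice"


# Trace a short, made-up response sequence for one skill.
estimate = 0.3
for response in [True, True, False, True]:
    estimate = bkt_update(estimate, response)
decision = choose_adaptation(estimate)
```

In the source/target terms used above, the response sequence is the source, the mastery estimate is the inferred learner state, and the returned label is the adaptation target. The pedagogical reasoning behind the thresholds is exactly the part that the reviewed literature often leaves implicit.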

Across the literature, design is treated more as a people-first process than a purely technical activity. Human-centered approaches to LA emphasize that systems must be fit for purpose, usable, and aligned with teacher and student needs rather than simply demonstrating algorithmic novelty (Shum et al., 2019). Effective K–12 online instruction builds on pillars such as organization and design, connectedness, accessibility, individualization, active learning, and real-time assessment (Johnson et al., 2023). For younger learners, such as those at elementary levels, developmental appropriateness and teacher mediation are non-negotiable when AI enters early grades. International perspectives keep reminding us that policy capacity and infrastructure vary widely, raising the possibility that AI systems may reproduce rather than reduce inequities if safeguards are absent. These tensions make coordinated governance and professional learning integral to responsible adoption.

According to recent studies, measurement choices are not neutral; they shape what is inferred and, by extension, what is adapted. While adaptive assessment research documents widespread use of IRT, sequence models and reinforcement-inspired approaches are gaining traction (Ihichr et al., 2024). Studies of personalized feedback show adaptation at the micro-, meso-, and macro-levels, but rationales that connect learner characteristics to specific interventions are often thin (Maier & Klotz, 2022). Evaluations of dashboards and analytics environments typically emphasize usability and perceived value, but very few probe multimodal evidence or the deeper structures of pedagogy in classroom use (Tretow-Fish & Khalid, 2023). Claims about learning in this respect would be stronger if instrumentation, validity evidence, and teacher practices were examined together within authentic K–12 contexts. These conceptual, pedagogical, and evaluative strands imply that research on AI-enabled AL and LA is fragmented, with unresolved tensions around definition, purpose, and impact. These unresolved tensions underscore the value of a scoping review that systematically maps research designs, outcomes, and persistent gaps.

This review followed the five-stage framework proposed by Arksey and O’Malley (2005) and was reported in accordance with the PRISMA extension for scoping reviews (PRISMA-ScR; Hamash et al., 2025; Tricco et al., 2018). The purpose was to map rather than appraise study quality, identifying research trends, methodological approaches, technological tools, and persistent gaps related to AI in AL and LA in K–12 online, virtual, and distance education environments.

The review was guided by four research questions:

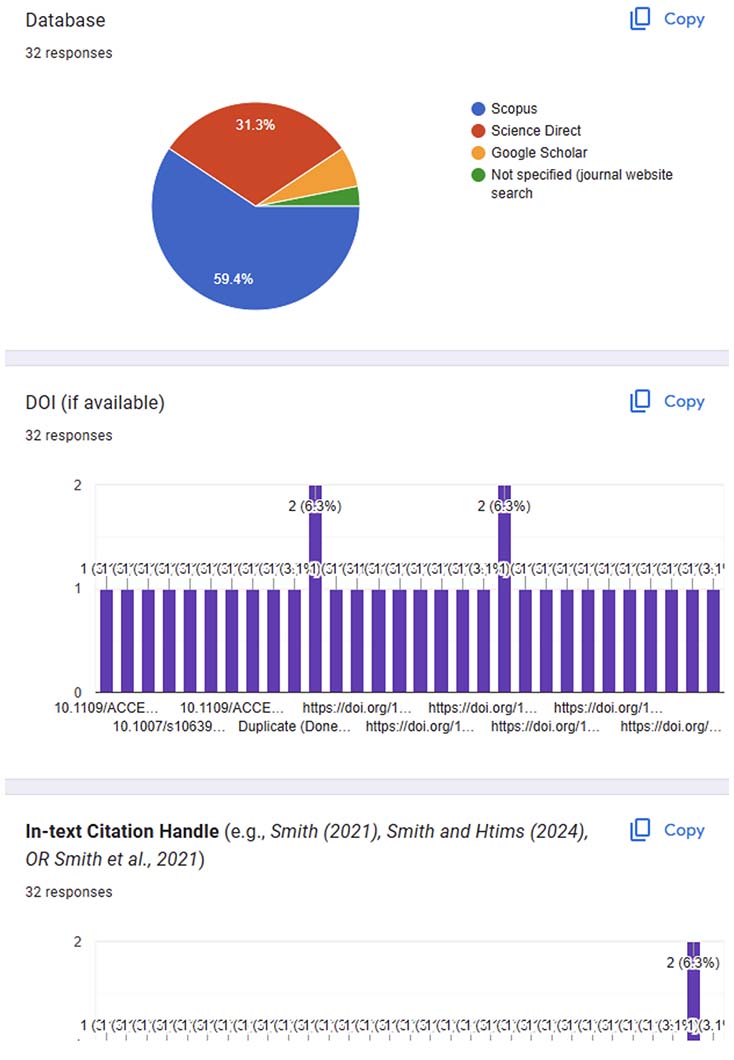

Searches were conducted in Scopus and ScienceDirect in August 2025, covering publications from January 1, 2020, to August 31, 2025. To supplement coverage, Google Scholar was searched with targeted title queries, and reference lists of included articles were screened. Search strings combined terms for population, intervention, and context:

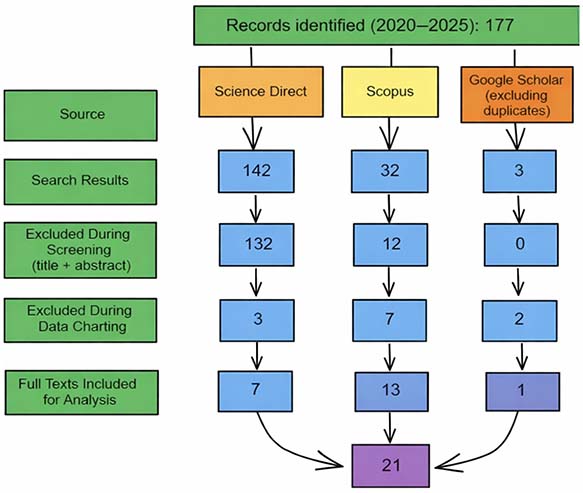

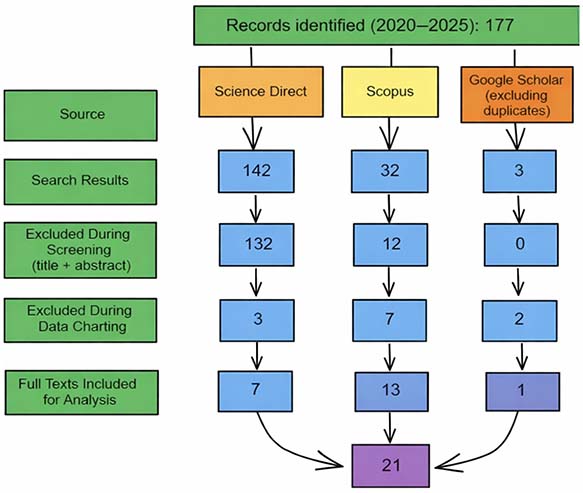

The review was limited to English-language publications due to resource constraints and the language coverage of the selected databases; no geographic restrictions were intentionally applied, although regional representation reflects indexing and publishing patterns within these sources. All search strings were subsequently validated manually by the research team. As detailed in Figure 1, the search yielded 177 records (142 from ScienceDirect, 32 from Scopus, and 3 from Google Scholar).

Screening occurred in two rounds: title/abstract screening, followed by full-text screening and data charting. The inclusion and exclusion criteria are summarized in Table 1.

Table 1

Inclusion and Exclusion Criteria

| Criterion type | Included | Excluded |
| --- | --- | --- |
| Population | K–12 learners (primary, elementary, secondary, high school) | Higher education or adult learners |
| Intervention | AL and LA as central features | Conceptual/theoretical work without empirical data |
| Context | Online, virtual, or distance education | Face-to-face or blended if online not primary |
| Study type | Peer-reviewed empirical studies (quantitative, qualitative, or mixed methods) | Non-peer-reviewed (e.g., book chapters, dissertations) |
| Language | English | Non-English |
| Publication years | 2020–2025 | Outside 2020–2025 |

Note. AL = adaptive learning; LA = learning analytics.

Studies employing AR/VR environments or neurocognitive measures (e.g., electroencephalogram [EEG] or functional near-infrared spectroscopy [fNIRS]) were included when these tools informed adaptive decision-making, learner modeling, or analytics-driven instructional adjustments within online or virtual K–12 learning contexts. Of the 177 records, 144 were excluded during title/abstract screening. Additional studies were excluded during full-text screening and data charting for reasons such as wrong population, wrong context, or lack of empirical evidence. The final dataset included 21 studies. The selection process is summarized in the PRISMA flow diagram in Figure 1. Title/abstract and full-text screening, as well as data extraction, were conducted independently by two reviewers. Disagreements regarding study inclusion or data charting were resolved through discussion and consensus. Inter-rater reliability was calculated during screening, yielding a Cohen’s kappa of 0.789, indicating substantial agreement.
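For readers less familiar with the statistic, the sketch below shows how Cohen’s kappa is computed from two reviewers’ screening decisions: observed agreement is corrected for the agreement expected by chance. The ten decisions listed are hypothetical and serve only to illustrate the calculation; they are not the review’s screening data and are not intended to reproduce the reported value of 0.789.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters labelling the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("Both raters must label the same non-empty set of items.")
    n = len(rater_a)
    # Proportion of items on which the two raters agree.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement from each rater's marginal label frequencies.
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    labels = set(counts_a) | set(counts_b)
    expected = sum(counts_a[label] * counts_b[label] for label in labels) / (n * n)
    if expected == 1.0:
        return 1.0  # Both raters used a single identical label throughout.
    return (observed - expected) / (1 - expected)


# Hypothetical screening decisions for ten records (illustration only).
reviewer_1 = ["include"] * 4 + ["exclude"] * 6
reviewer_2 = ["include"] * 3 + ["exclude"] * 7
kappa = cohens_kappa(reviewer_1, reviewer_2)
```

Under the commonly used Landis and Koch benchmarks, values between 0.61 and 0.80 are read as substantial agreement, which is the interpretation applied to the value reported above.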

Figure 1

PRISMA Flow Diagram for Selected Studies

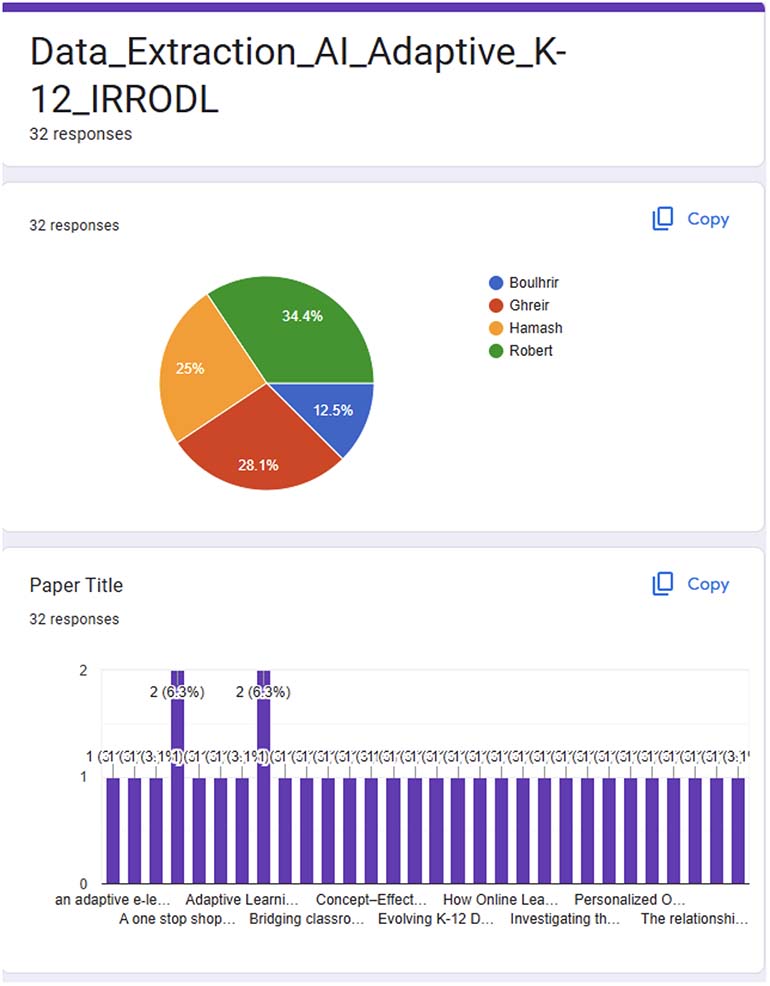

Data from included studies were extracted into a standardized charting tool using Google Forms (see Appendix for an illustrative sample). Fields included citation, country or region, study design, educational level, platform or tool, learning context, AL/LA focus, data sources, outcomes, findings, reported limitations, and thematic tags. We also coded for AI mechanism type, target of adaptation, and locus of use (teacher- vs. learner-facing).
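The charting fields listed above can be pictured as one structured record per study. The sketch below is an assumed representation, not the Google Forms instrument itself: the class name `ChartingRecord`, the field names, the default values, and the example entry are all hypothetical and are introduced only to show how the coded fields could be tallied.

```python
from collections import Counter
from dataclasses import dataclass, field
from typing import List


@dataclass
class ChartingRecord:
    """One charted study; fields mirror the charting categories described above."""
    citation: str
    country_or_region: str
    study_design: str
    educational_level: str
    platform_or_tool: str
    learning_context: str
    al_la_focus: str
    data_sources: List[str]
    outcomes: List[str]
    findings: str
    reported_limitations: str
    thematic_tags: List[str] = field(default_factory=list)
    # Additional codes applied during charting.
    ai_mechanism_type: str = "not reported"
    adaptation_target: str = "not reported"
    locus_of_use: str = "not reported"  # e.g., "teacher-facing" or "learner-facing"


def tally(records, attribute):
    """Count how often each value of one single-valued field occurs."""
    return Counter(getattr(record, attribute) for record in records)


# A made-up placeholder entry, not one of the 21 included studies.
example = ChartingRecord(
    citation="Placeholder study (hypothetical)",
    country_or_region="Unspecified",
    study_design="Quantitative",
    educational_level="Secondary",
    platform_or_tool="Hypothetical adaptive platform",
    learning_context="Online",
    al_la_focus="AL",
    data_sources=["System logs", "Assessment scores"],
    outcomes=["Academic achievement"],
    findings="Placeholder summary",
    reported_limitations="Placeholder limitation",
    locus_of_use="learner-facing",
)
design_counts = tally([example], "study_design")
```

Keeping the mechanism, target, and locus codes as explicit fields is what allows the later synthesis to be grouped by AI mechanism and by who the system is facing.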

The descriptive analysis summarized publication trends, methodological approaches, and technological tools across the included studies. An iterative descriptive–thematic synthesis was then used to group outcomes, AI mechanisms, and pedagogical purposes, identifying recurring patterns and gaps. Given the heterogeneity of study designs and outcome measures, the synthesis focused on mapping trends rather than aggregating effects or making causal claims, consistent with the aims of a scoping review.

To answer the first research question, the analysis of study design shows a dominant reliance on quantitative methods, which account for 13 of the 21 studies. This suggests that research on AI-enabled adaptivity in K–12 contexts is predominantly based on performance metrics and controlled evaluations, with fewer studies employing exploratory classroom-based approaches. Mixed-methods studies, although fewer in number, show an upward trend in the later years of the review period, indicating a growing interest in combining statistical rigor with contextual depth. Qualitative research, which focuses on learner and teacher perspectives, remains limited. The distribution of methodologies by year is detailed in Table 2.

Table 2

Methodologies Implemented in Publications

| Study design | 2020 | 2021 | 2022 | 2023 | 2024 | 2025 | Total |
| --- | --- | --- | --- | --- | --- | --- | --- |
| Quantitative | 2 | 0 | 4 | 2 | 2 | 3 | 13 |
| Mixed methods | 0 | 0 | 1 | 0 | 1 | 3 | 5 |
| Qualitative | 0 | 1 | 0 | 1 | 1 | 0 | 3 |
| Total | 2 | 1 | 5 | 3 | 4 | 6 | 21 |

To examine how these approaches were operationalized, Table 3 summarizes the specific research designs used. Descriptive and experimental designs were most common (each 26.1%), followed by quasi-experimental designs (21.7%). Comparative, design-based, and qualitative components appeared infrequently, each representing less than 10%. The dominance of descriptive and experimental work underscores the field’s reliance on conventional empirical approaches; the scarcity of design-based or participatory research indicates limited methodological innovation.

Table 3

Research Design

| Study type | Count | % |
| --- | --- | --- |
| Descriptive | 6 | 26.1 |
| Experimental | 6 | 26.1 |
| Quasi-experimental | 5 | 21.7 |
| Comparative | 2 | 8.7 |
| Qualitative component (surveys, interviews, observations) | 1 | 4.3 |
| Design-based research with evaluation | 1 | 4.3 |
| Quantitative (observational/modeling) | 1 | 4.3 |
| Explanatory sequential approach | 1 | 4.3 |
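The percentages in Table 3 can be reproduced directly from the counts, as the short sketch below shows. Note that the counts sum to 23 rather than 21, so the shares are computed over coded design occurrences rather than over studies, presumably because some studies were coded under more than one design; that reading is an inference from the table, and the function name `percentage_shares` is an assumption of this example.

```python
# Counts as reported in Table 3.
design_counts = {
    "Descriptive": 6,
    "Experimental": 6,
    "Quasi-experimental": 5,
    "Comparative": 2,
    "Qualitative component": 1,
    "Design-based research with evaluation": 1,
    "Quantitative (observational/modeling)": 1,
    "Explanatory sequential approach": 1,
}


def percentage_shares(counts):
    """Return each category's share of all coded occurrences, to one decimal."""
    total = sum(counts.values())
    return {name: round(100 * value / total, 1) for name, value in counts.items()}


total_occurrences = sum(design_counts.values())
shares = percentage_shares(design_counts)
```

The same calculation applies to the other count tables, provided the denominator is the total number of coded occurrences stated or implied for that table.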

Regarding the data sources in the studies, Table 4 shows a strong preference for platform-generated and test-based evidence. System logs and assessment scores were the most frequent sources (each 21.7%), followed by surveys (15.2%), with interviews and clickstream data each accounting for 6.5%. Observation appeared twice, and all remaining sources, including neurocognitive recordings (EEG and fNIRS) and teacher orchestration data, appeared only once, which suggests limited diversity in the evidence base.

Table 4

Data Sources

| Data source | Count | % |
| --- | --- | --- |
| System logs | 10 | 21.7 |
| Assessment scores | 10 | 21.7 |
| Surveys | 7 | 15.2 |
| Interviews | 3 | 6.5 |
| Clickstream data | 3 | 6.5 |
| Observation | 2 | 4.3 |
| Explicit ratings (fun, difficulty) | 1 | 2.2 |
| Simulated student interactions | 1 | 2.2 |
| Public policy documents | 1 | 2.2 |
| Systematic review | 1 | 2.2 |
| Teacher orchestration data | 1 | 2.2 |
| Pilot evaluation responses | 1 | 2.2 |
| fNIRS logs | 1 | 2.2 |
| Pre-/post-tests, standardized scores, attendance logs, and classroom data | 1 | 2.2 |
| Questionnaires | 1 | 2.2 |
| Semi-structured interviews with stakeholders | 1 | 2.2 |
| EEG recordings | 1 | 2.2 |

Note. fNIRS = functional near-infrared spectroscopy; EEG = electroencephalogram.

Analytical techniques were dominated by descriptive and inferential statistics, which together account for nearly half of all reported analyses (Table 5). Qualitative and computational methods are emerging, but their use remains limited. Regression models, specialized techniques, and psychometric validation are less well represented, so conventional statistical approaches remain far more common than regression-based, qualitative, or advanced computational methods.

Table 5

Data Analysis Methods Reported Across Studies

| Category | Methods | No. of mentions | % of occurrences |
| --- | --- | --- | --- |
| Descriptive statistics | Frequency counts, means, SD | 12 | 22.6 |
| Inferential statistics | t-tests, ANOVA, ANCOVA, Wilcoxon signed-rank, effect size, Shapiro-Wilk | 11 | 20.8 |
| Regression models | Linear regression, logistic regression | 4 | 7.6 |
| Qualitative analysis | Thematic analysis | 6 | 11.3 |
| Advanced computational methods | Machine learning, fuzzy C-means clustering, recommender evaluation metrics, XBI | 6 | 11.3 |
| Psychometrics/Validation | Instrument validation, IRT | 2 | 3.8 |
| Specialized/Other | EEG attention index, simulation modeling, moderation analysis, multilevel models | 6 | 11.3 |

Note. n = 53 occurrences in 21 studies. XBI = Xie-Beni Index; IRT = item response theory; EEG = electroencephalogram.

Overall, the field reflects a pattern of empirical activity accompanied by methodological narrowness. Methodologically, the dominance of short-term quantitative and experimental designs indicates that evidence on AI-enabled adaptivity in K–12 online education is shaped largely by what platforms can readily measure. Theoretically, this emphasis reflects a narrow conception of learning as performance, rather than as a socially situated and meaning-making process.

The analysis of the selected literature reveals a dynamic but uneven landscape of AL technologies in K–12 education (Table 6). In fact, personalization is the most pervasive theme: Systems adapt content, feedback, and pacing to learner profiles, cognitive performance, or engagement patterns. Many studies ground these mechanisms in constructivist or cognitivist principles that promote differentiation and learner autonomy. Teacher orchestration consistently emerges as a mediating factor, with teacher guidance and feedback amplifying gains in motivation and achievement.

Table 6

Adaptive Learning Technologies in K–12 Education

| Tool/platform | Description | Citation |
| --- | --- | --- |
| ALEKS, DreamBox | Commercial adaptive learning platforms for math and reading | Divanji et al. (2023) |
| Geniebook | AI-powered platform with real-time analytics and personalized feedback | Sancenon et al. (2022) |
| NgodingSeru.com | Gamified coding platform with adaptive scaffolding | Maryono et al. (2025) |
| PSALMS | AI system for automated remediation and concept mapping | Wahyuningsih et al. (2024) |
| AR pop-up book | AR tool for interactive storytelling | Saif et al. (2021) |
| Matrix factorization LMS | Personalized recommendation engine for learning content | Lamb et al. (2022) |
| EEG-based attention monitor | Neuroadaptive system tracking cognitive engagement | Pardamean et al. (2022) |

Note. AR = augmented reality; LMS = learning management system; EEG = electroencephalogram.

Technological tools primarily include commercial software, custom AI systems, and immersive environments. Although some platforms such as ALEKS and DreamBox remain common in mathematics and reading, newer systems integrate neurocognitive inputs, fuzzy logic, and real-time analytics. Gamified and augmented reality tools, including Geniebook, NgodingSeru.com, and augmented reality pop-up books, emphasize engagement and multimodal interaction. Together, these technologies reflect growing experimentation with AI-driven adaptivity, alongside limited evaluation in authentic classroom contexts.

Educational outcomes are diverse, as depicted in Table 7; most studies report academic improvement and conceptual understanding, alongside motivational and affective benefits such as increased engagement and enjoyment. Self-regulated learning is a recurring behavioral outcome, with evidence of improved goal setting and reflection. Fewer studies address equity explicitly, though several note benefits for low-performing or marginalized learners when adaptivity is coupled with teacher support, aligning with previous literature on educational technology (Hamash et al., 2025; Hamash & Mohamed, 2021).

Table 7

Educational Outcomes Reported Across Studies

| Outcome type | Description | Citations |
| --- | --- | --- |
| Academic achievement | Improved test scores and conceptual understanding | S. Yang et al. (2021); Katz et al. (2022) |
| Motivation and enjoyment | Increased learner satisfaction and engagement | Al-Malki & Meccawy (2022); Y. Yang et al. (2025) |
| Self-regulated learning | Enhanced goal setting, reflection, and autonomy | Leite et al. (2022); Y. Yang et al. (2025) |
| Equity and inclusion | Support for low-performing and marginalized learners | Chellanthara Jose et al. (2024); Katz et al. (2022) |

Opportunities reported across studies emphasize differentiation, agency, and scalability (Table 8). Adaptive systems enable tailored learning paths informed by diagnostic data and real-time feedback. Gamified and immersive designs strengthen motivation, particularly in STEM (science, technology, engineering, and mathematics) and language subjects. A small number of AI-based platforms (e.g., PSALMS) additionally demonstrate automated remediation, content mapping, and potentially scalable personalization.

Table 8

Opportunities Presented in the Literature

| Opportunity | Description | Citation(s) |
| --- | --- | --- |
| Differentiated instruction | Tailored learning paths based on diagnostics | Divanji et al. (2023) |
| Student agency | Empowerment through self-paced and personalized learning | Sancenon et al. (2022); Wahyuningsih et al. (2024) |
| Gamification | Use of missions, badges, and leaderboards to boost engagement | Maryono et al. (2025); Saif et al. (2021) |
| Scalable deployment | LMS and mobile-first platforms enabling broad access | Chellanthara Jose et al. (2024); Lamb et al. (2022) |
| Real-time adaptivity | Dynamic instructional adjustments based on analytics and neurodata | Pardamean et al. (2022); Kim et al. (2024) |

Note. LMS = learning management system.

Despite these benefits, several challenges persist (Table 9). Technical barriers include limited infrastructure and device access, while pedagogical constraints stem from weak teacher training and overreliance on gamification. Ethical concerns about data privacy, bias, and real-time monitoring also remain, particularly in neuroadaptive and AI feedback systems. Policy misalignment and insufficient curricular integration further restrict the systemic adoption of adaptive technologies.

Table 9

Challenges Presented in the Literature

| Challenge type | Description | Citations |
| --- | --- | --- |
| Technical constraints | Infrastructure gaps, device limitations, and setup costs | Saif et al. (2021); Pardamean et al. (2022) |
| Pedagogical limitations | Lack of teacher training and overreliance on gamification | Divanji et al. (2023); Maryono et al. (2025) |
| Ethical concerns | Privacy, bias, and fairness in adaptive systems | Katz et al. (2022); Kim et al. (2024) |
| Policy misalignment | Inconsistent curricular integration and lack of formal support | S. Yang et al. (2021); Leite et al. (2022) |

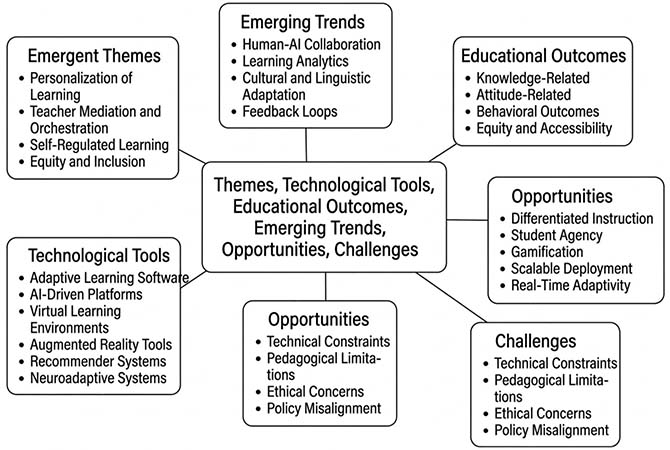

Beyond these established themes (also illustrated in Figure 2), several emerging directions highlight the evolving sophistication of adaptive technologies. Studies increasingly frame human–AI collaboration as central in the sense that teacher roles are emphasized in interpreting analytics and refining system recommendations (Katz et al., 2022; Leite et al., 2022). LA integration supports data-informed pedagogy and iterative system improvement (Kim et al., 2024; Lamb et al., 2022). Furthermore, cultural and linguistic adaptation has gained visibility through localized and multilingual design (Al-Malki & Meccawy, 2022; Chellanthara Jose et al., 2024). These trends point to a gradual shift toward inclusivity, transparency, and sustained pedagogical partnership between humans and AI. Hence, both the promise and fragility of AI-driven adaptivity in K–12 education is evident; although technological capacity and engagement outcomes are advancing, long-term success will depend on ethical safeguards, teacher expertise, and the contextual fit of adaptive systems within diverse learning environments.

Figure 2

Thematic Map of Analyzed Literature

AI integration in adaptive online learning remains uneven across the reviewed studies. About 67% of studies positioned AI as the primary engine for personalization, using learner modeling, predictive algorithms, and recommender systems, and often linked these to measurable gains in achievement and engagement. Around 24% treated AI as a secondary feature, embedded in platforms or dashboards, with emphasis on pedagogy rather than algorithmic detail. The remaining 10% relied on non-AI strategies such as gamification or teacher-led differentiation, framing adaptivity as a pedagogical challenge. The strongest trend centers on AI-driven modeling and recommendation systems (e.g., Bidirectional Encoder Representations from Transformers [BERT]-based mapping, reinforcement learning sequencing), which emphasize algorithmic personalization as a performance lever. A few AI-related studies stress continued dependence on human-mediated adaptivity and raise scalability concerns (see Table 10 for detailed coding and examples).

Table 10

AI Emphasis in Included Studies (RQ3)

| No. | Study | AI explicitly mentioned? | Primary role of AI | Connection to adaptive/personalized learning |
| --- | --- | --- | --- | --- |
| 1 | Cheah et al. (2025) | Yes | Generative AI for content creation, grading, admin support | Indirect: supports personalization via teacher practices |
| 2 | Divanji et al. (2023) | No | Adaptive learning (non AI) | Adaptive systems adjust content/feedback; not explicitly AI driven |
| 3 | Hwang et al. (2020) | Yes | Fuzzy expert system for cognitive/affective modeling | AI dynamically adjusts materials and feedback |
| 4 | Chellanthara Jose et al. (2024) | Yes | Adaptive learning + AI feedback/assessment | AI models assess performance and engagement in real time |
| 5 | Lamb et al. (2022) | Yes | ML prediction from neurocognitive data | Real-time AI predictions enable adaptive pathways |
| 6 | Leite et al. (2022) | No | Adaptive learning (non AI) | Personalization via SRL patterns and teacher orchestration |
| 7 | Li et al. (2025) | Yes | Conversational AI tutor (RICE Algebra bot) | AI adapts responses and scaffolds algebra learning |
| 8 | Y. Yang et al. (2025) | No | Self-regulation scaffolds (non AI) | Personalization is student driven |
| 9 | Poly et al. (2025) | Yes | Fuzzy clustering recommender | AI recommends resources based on learning style and accessibility |
| 10 | Al-Malki & Meccawy (2022) | No | Gamified recommender (non AI) | Not connected to AI adaptivity |
| 11 | Sancenon et al. (2022) | No | Adaptive recommender (ML implied) | Learner model selects topics/difficulty for worksheets |
| 12 | Pardamean et al. (2022) | Yes | Learning style prediction (matrix factorization) | AI recommends materials matching predicted style |
| 13 | Saif et al. (2021) | No | AR-based adaptive tools (non AI) | Adaptive via interaction, not AI |
| 14 | Palliyalil & Mukherjee (2020) | Yes | AI-driven personalization in Byju’s app | AI predicts learning styles and recommends content |
| 15 | Wahyuningsih et al. (2024) | Yes | BERT-based concept mapping and path generation | AI generates personalized remedial learning paths |
| 16 | Katz et al. (2022) | Yes | AI personalization and recommendation in Algebra Nation | AI tailors content exposure and feedback |
| 17 | Bhatt et al. (2024) | Yes | Deep learning recommender | AI recommends activities using implicit/explicit feedback |
| 18 | Kim et al. (2024) | No | EEG attention monitoring (AI proposed, not implemented) | AI mentioned only as potential future application |
| 19 | Maryono et al. (2025) | No | Adaptive gamified system (non AI) | Not connected to AI adaptivity |
| 20 | Katz et al. (2022): Simulation study | Yes | AI personalization and recommendation | AI adjusts learning paths based on mastery and engagement |
| 21 | Bhatt et al. (2024): Deep learning recommender systems | Yes | Sequence-aware deep recommender | AI personalizes activity recommendations |

Note. AI = artificial intelligence; ML = machine learning; SRL = self-regulated learning; AR = augmented reality; BERT = Bidirectional Encoder Representations from Transformers; EEG = electroencephalogram.

The dominance of short-term quantitative and experimental designs indicates that evidence of AI-enabled adaptivity in K–12 online education is shaped largely by what platforms can readily measure rather than by sustained classroom inquiry (Yim & Su, 2025). This data pattern privileges performance indicators derived from system logs and tests, limiting insight into instructional practices, learner sensemaking, and longer-term pedagogical effects.

This methodological concentration reflects a deeper theoretical gap: the limited integration of constructivist and sociocultural theories of learning into the design and evaluation of adaptive systems. As a result, the prevalence of experimental and quasi-experimental designs continues to reinforce behaviorist assumptions, framing learning primarily as a quantifiable outcome rather than as a dynamic, socially situated process. Adaptivity is therefore often framed as a technical optimization of performance metrics rather than as a vehicle for meaning making or interaction. In line with earlier observations by Martin et al. (2020) and Shum et al. (2019), such a focus risks reducing learning to what can be measured, overlooking affective, social, and contextual dimensions that are essential to authentic pedagogy.

A notable limitation lies in the disconnect between algorithmic modeling and pedagogical theory, as few studies explain how adaptive mechanisms such as sequencing, feedback loops, or learner modeling derive from established learning theories. Adaptivity is often justified by technical feasibility rather than educational reasoning, reinforcing earlier concerns about misalignment among learner, instructional, and content models (Wang et al., 2020). The dominance of vendor-driven or platform-specific studies, which frame personalization through available system features instead of pedagogical intent, reinforces this pattern. Algorithmic processes are often described functionally, with emphasis on performance or usability, leaving instructional logic implicit. From a theoretical perspective, such framing risks equating technical optimization with educational value, particularly when evaluations are tightly coupled to proprietary platforms. Clearer theoretical grounding and greater use of qualitative or mixed-methods designs would therefore allow for stronger claims about how AI shapes learning through interaction, reflection, and collaboration.

From pedagogical and equity perspectives, the findings suggest that adaptive systems without sustained teacher mediation tend to benefit learners who possess strong self-regulation skills and access to institutional support (Rundquist et al., 2024). Studies reporting more equitable outcomes often situate adaptive systems within pedagogical practices where teachers interpret analytics, scaffold learner decision-making, and adjust system use in response to diverse needs. In practice, inclusive design is reflected in how adaptive tools are used within everyday instructional routines. For example, several studies show that LA dashboards are most effective when teachers use them to identify misconceptions or disengagement and then adapt feedback, pacing, or grouping strategies rather than relying on automated recommendations alone.

Adaptive platforms that combine personalized learning paths with scheduled teacher check-ins and guided reflection activities similarly appear better suited to supporting self-regulation and persistence than stand-alone systems. Professional development therefore plays a critical role, as Boulhrir and Ait Bouch (2025) argue, equipping teachers to critically engage with adaptive tools, understand their limitations, and align system feedback with curricular goals and learner contexts. Without such support, adaptive technologies risk amplifying existing disparities by privileging learners who are already better positioned, particularly where teachers do not consistently mediate curriculum implementation and adaptation (Aguerrebere et al., 2022; Shum et al., 2019).

The reviewed studies reveal a persistent inconsistency in how AI is defined and operationalized in K–12 online learning research, which complicates the evaluation of classroom applications. To address this issue, we propose a clearer distinction between AI-enabled adaptivity and rule-based digital personalization. In this review, AI refers to systems that infer latent learner states or patterns from data using computational models that can generalize beyond prespecified rules, such as machine learning, probabilistic modeling, or adaptive inference mechanisms (Holmes et al., 2023; Luckin et al., 2016). By contrast, rule-based systems rely on fixed decision logic, scripted pathways, or manually encoded conditions, even when implemented within digital or adaptive platforms. Conflating these approaches obscures both pedagogical and ethical analysis, as systems that merely automate predefined responses differ fundamentally from those that dynamically model learner behavior. Applying a consistent definition clarifies whether reported effects arise from intelligent adaptation, instructional design choices, or teacher mediation, thereby strengthening the interpretability and comparability of findings across studies.
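To make this distinction concrete, the following minimal Python sketch contrasts the two categories. It is an illustration only and is not drawn from any of the reviewed studies. The first function applies fixed decision logic (a hand-set pass mark). The second estimates a latent mastery probability from a sequence of responses using a simplified form of Bayesian Knowledge Tracing, a widely used probabilistic learner model. All function names, thresholds, and parameter values are assumptions chosen for illustration; in practice, such parameters would be estimated from learner data rather than set by hand.

```python
from dataclasses import dataclass


def rule_based_next_step(last_score: float, pass_mark: float = 0.7) -> str:
    """Rule-based personalization: a manually encoded threshold picks the path."""
    return "advance" if last_score >= pass_mark else "remediate"


@dataclass(frozen=True)
class BKTParams:
    """Hypothetical Bayesian Knowledge Tracing parameters (illustrative values)."""
    p_init: float = 0.2    # prior probability that the skill is already mastered
    p_learn: float = 0.15  # probability of acquiring the skill at each attempt
    p_slip: float = 0.1    # probability of an incorrect answer despite mastery
    p_guess: float = 0.25  # probability of a correct answer without mastery


def bkt_update(p_mastery: float, correct: bool, params: BKTParams) -> float:
    """Revise the latent mastery estimate after one observed response."""
    if correct:
        evidence = p_mastery * (1 - params.p_slip)
        total = evidence + (1 - p_mastery) * params.p_guess
    else:
        evidence = p_mastery * params.p_slip
        total = evidence + (1 - p_mastery) * (1 - params.p_guess)
    posterior = evidence / total  # Bayes' rule given the observed response
    # Allow for learning that may occur during the practice opportunity.
    return posterior + (1 - posterior) * params.p_learn


def model_based_next_step(responses, params=BKTParams(), mastery_cutoff=0.95):
    """Model-based adaptivity: the decision depends on an inferred latent state."""
    p_mastery = params.p_init
    for correct in responses:
        p_mastery = bkt_update(p_mastery, correct, params)
    decision = "advance" if p_mastery >= mastery_cutoff else "practice"
    return decision, p_mastery


if __name__ == "__main__":
    print(rule_based_next_step(0.8))
    print(model_based_next_step([True, False, True, True]))
```

The rule-based function responds only to the most recent score, whereas the model-based function carries an evolving estimate of an unobserved learner state across the whole response history. Under the definition proposed above, only the second would count as AI-enabled adaptivity, and a study reporting on either kind of system would need to state which logic is actually in use.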

This inconsistency in definitions has important implications for how AI-enabled learning is evaluated and interpreted. When studies describe AI without specifying algorithms, data sources, or decision rules, it becomes impossible to evaluate transparency, ethical integrity, or educational relevance. In some cases, tools branded as “AI-based” offered little evidence of autonomous learning or intelligent modeling, highlighting how marketing language can outpace scholarly precision. More broadly, the lack of shared definitions or reporting standards risks overstating the presence of AI and underestimating the complexity of its pedagogical integration. When algorithms, adaptation logic, and human oversight were clearly described, systems supported improved performance as well as better alignment between teacher intention and machine feedback (Katz et al., 2022; Leite et al., 2022). This is one way transparency can foster trust and position AI as a collaborator rather than a black box.

This review contributes theoretically by consolidating system-level, pedagogical, and evaluative lenses into a mechanism-aware account of how AL and LA are currently conceptualized in K–12 online education. Distinguishing signals, models, decisions, and targets clarifies where claims about “AI-enabled” adaptivity are grounded in learning theory and where they remain primarily technical. Methodologically, it advances the field by mapping dominant designs and data practices while making visible the underuse of longitudinal, mixed, and participatory approaches (Martin et al., 2020; Wang et al., 2020). These contributions collectively reposition AL research away from isolated performance effects and toward questions of pedagogical alignment, teacher mediation, and contextual validity.

Conceptual ambiguity continues to hinder progress, as varying definitions of AI and adaptivity reflect disciplinary fragmentation, with computer science framing adaptivity as optimization and education viewing it as differentiation and inclusion. Without shared definitions and terminology, it is difficult to compare findings or anchor them in defensible learning theories such as constructivism or self-regulation, especially when research is conducted by specialists from disparate fields who use common terms but attach different meanings to them.

Methodological narrowness also persists; confirming results from previous studies (Rundquist et al., 2024; Yim & Su, 2025), few investigations employ design-based, participatory, or longitudinal methods that could illuminate how adaptivity evolves through human–machine interaction. This may explain why evidence of sustained gains in agency or collaboration is still limited. Notably, studies conducted in Global South and non-English-speaking contexts remain underrepresented in the mapped literature, reflecting broader structural and linguistic asymmetries in K–12 AI and LA research. Aguerrebere et al. (2022) spotlight this imbalance and call for globally inclusive research if AI is to ethically serve equity rather than privilege. Ethical and governance dimensions are equally underdeveloped; few studies explain how privacy, bias, or transparency are addressed, introducing uncertainty about accountability and fairness in online/virtual learning environments. We speculate that this reflects insufficient collaboration between educators, technologists, and ethicists.

This review identifies several implications that clarify priorities for theory, research, and practice in K–12 online and distance learning. At a theoretical level, future work should articulate clearer links between adaptive mechanisms and defensible models of learning. Greater emphasis is needed on longitudinal and classroom-embedded designs that examine how adaptivity operates over time and across diverse learner groups, alongside transparent reporting of algorithmic logic and human oversight. From a practice and policy development perspective, evidence suggests that adaptive systems are most effective when implemented with sustained teacher involvement, professional learning, and governance frameworks that address data use, equity, and accountability. These identified directions indicate that progress in AI-enabled adaptivity will depend on aligning system design and evaluation with explicit pedagogical models, transparent decision logic, and sustained teacher involvement.

This scoping review mapped 21 empirical studies on AI-enabled adaptive learning and learning analytics in K–12 online education published between 2020 and 2025. Synthesizing the identified methodological, conceptual, and equity-related gaps, three key messages emerge: the need for greater methodological diversity, clearer conceptual grounding of AI-enabled adaptivity, and more inclusive, context-sensitive research agendas. Overall, the evidence points to a rapidly expanding but uneven field, marked by technological innovation alongside persistent conceptual and methodological gaps. Although adaptive systems continue to improve performance, motivation, and self-regulation, their benefits remain unevenly distributed, with stronger effects observed for students who already have access, resources, and teacher support.

First, the field needs greater methodological diversity, including research that integrates quantitative rigor with qualitative insight to illuminate how adaptive systems interact with pedagogy and context. Second, equity must guide both design and evaluation. Adaptive tools succeed when teachers and learners actively shape their use, ensuring that personalization aligns with human judgment and local realities. Third, conceptual clarity is foundational. Without consistent definitions of what constitutes AI in education, evidence will remain fragmented and difficult to translate into practice or policy.

In response to the identified gaps, future research should adopt transparent, theory-driven, and mixed-methods approaches that explicitly connect AI mechanisms to learning principles, classroom practice, and learner agency. For educators and policymakers, addressing these gaps requires professional learning, data governance, and ethical oversight to ensure that adaptivity serves inclusion rather than automation. As a scoping review, this study was limited to English-language, peer-reviewed publications indexed in Scopus and ScienceDirect (with targeted Google Scholar supplementation) published between 2020 and 2025. The future of AI-enabled online learning in K–12 education depends less on the sophistication of algorithms than on the collective will to embed technology within equitable, pedagogically grounded systems.

AI tools (e.g., Microsoft 365 Copilot) were used during Stage 2 to generate alternative Boolean search strings and streamline phrasing, and for mechanical editing tasks such as truncating charted entries or sentences and correcting grammar throughout the body of the manuscript. DiagramGPT was used to create the PRISMA flow diagram in Figure 1. All substantive coding and interpretive decisions were made by the researchers.

Aguerrebere, C., He, H., Kwet, M., Laakso, M.-J., Lang, C., Marconi, C., Price-Dennis, D., & Zhang, H. (2022). Global perspectives on learning analytics in K–12 education. In C. Lang, G. Siemens, & A. F. Wise (Eds.), The handbook of learning analytics (2nd ed., pp. 223-231). SOLAR. https://doi.org/10.18608/hla22.022

Al-Malki, L., & Meccawy, M. (2022). Investigating students’ performance and motivation in computer programming through a gamified recommender system. Computers in the Schools, 39(2), 137-162. https://doi.org/10.1080/07380569.2022.2071229

Arksey, H., & O’Malley, L. (2005). Scoping studies: Towards a methodological framework. International Journal of Social Research Methodology, 8(1), 19-32. https://doi.org/10.1080/1364557032000119616

Bhatt, S. M., Van den Noortgate, W., & Verbert, K. (2024). Investigating the use of deep learning and implicit feedback in K12 educational recommender systems. IEEE Transactions on Learning Technologies, 17(1), 112-123. https://doi.org/10.1109/TLT.2023.3273422

Boulhrir, T. (2025). [Review of the book Brave new words: How AI will revolutionize education (and why it’s a good thing) by Sal Khan]. The International Review of Research in Open and Distributed Learning, 26(4), 176-179. https://doi.org/10.19173/irrodl.v26i4.9020

Boulhrir, T., & Ait Bouch, R. (2025). Sustainable development goals in elementary school education: Implications for curriculum integration and teacher education. Journal of Teacher Education for Sustainability, 27(1), 183-204. https://doi.org/10.2478/jtes-2025-0010

Boulhrir, T., Hamash, M., & Ghreir, H. M. A. (2026). The dual edge of large language models: Innovation in education and emerging ethical implications. In Innovations and ethical dimensions of large language models (pp. 273-316). IGI Global Scientific Publishing. https://doi.org/10.4018/979-8-3373-5017-2.ch009

Cheah, Y. H., Lu, J., & Kim, J. (2025). Integrating generative artificial intelligence in K–12 education: Examining teachers’ preparedness, practices, and barriers. Computers and Education: Artificial Intelligence, 8, Article 100363. https://doi.org/10.1016/j.caeai.2025.100363

Chellanthara Jose, B., Ashok Kumar, M., UdayaBanu, T., & Nagalakshmi, M. (2024). Assessing the effectiveness of adaptive learning systems in K–12 education. International Journal of Advanced IT Research and Development, 1(1). https://doi.org/10.69942/1920184/20240101/02

Divanji, R. A., Bindman, S., Tung, A., Chen, K., Castaneda, L., & Scanlon, M. (2023). A one stop shop? Perspectives on the value of adaptive learning technologies in K–12 education. Computers and Education Open, 5, Article 100157. https://doi.org/10.1016/j.caeo.2023.100157

Global Education Monitoring Report Team. (2023). Global education monitoring report 2023: Technology in education: A tool on whose terms? UNESCO. https://doi.org/10.54676/UZQV8501

Hamash, M., Ghreir, H., Tiernan, P., & Boulhrir, T. (2025). From NPCs to AI assistants: A scoping review of AI-driven agents in immersive STEM learning. In B. I. Edwards, H. Abuhassna, D. Olugbade, O. A. Ojo, & W. A. Jaafar Wan Yahaya (Eds.), Advances in computational intelligence and robotics (pp. 211-244). IGI Global. https://doi.org/10.4018/979-8-3373-0847-0.ch008

Hamash, M., & Mohamed, H. (2021). BASAER team: The first Arabic robot team for building the capacities of visually impaired students to build and program robots. International Journal of Emerging Technologies in Learning (iJET), 16(24), 91-107. https://doi.org/10.3991/ijet.v16i24.27465

Holmes, W., Bialik, M., & Fadel, C. (2023). Artificial intelligence in education. In C. Stückelberger & P. Duggal (Eds.), Data ethics: Building trust: How digital technologies can serve humanity (pp. 621-653). Globethics Publications. https://doi.org/10.58863/20.500.12424/4276068

Huck, C., & Zhang, J. (2021). Effects of the COVID-19 pandemic on K–12 education: A systematic literature review. New Waves—Educational Research and Development Journal, 24(1), 53-84. https://eric.ed.gov/?id=EJ1308731

Hwang, G.-J., Sung, H.-Y., Chang, S.-C., & Huang, X.-C. (2020). A fuzzy expert system-based adaptive learning approach to improving students’ learning performances by considering affective and cognitive factors. Computers and Education: Artificial Intelligence, 1, Article 100003. https://doi.org/10.1016/j.caeai.2020.100003

Ihichr, A., Oustous, O., El Idrissi, Y. E. B., & Lahcen, A. A. (2024). A systematic review on assessment in adaptive learning: Theories, algorithms and techniques. International Journal of Advanced Computer Science and Applications, 15(7). https://doi.org/10.14569/IJACSA.2024.0150785

Johnson, C. C., Walton, J. B., Strickler, L., & Elliott, J. B. (2023). Online teaching in K–12 education in the United States: A systematic review. Review of Educational Research, 93(3), 353-411. https://doi.org/10.3102/00346543221105550

Katz, D., Huggins-Manley, A. C., & Leite, W. (2022). Personalized online learning, test fairness, and educational measurement: Considering differential content exposure prior to a high-stakes end of course exam. Applied Measurement in Education, 35(1), 1-16. https://doi.org/10.1080/08957347.2022.2034824

Kim, S., Kim, J.-H., Hyung, W., Shin, S., Choi, M. J., Kim, D. H., & Im, C.-H. (2024). Characteristic behaviors of elementary students in a low attention state during online learning identified using electroencephalography. IEEE Transactions on Learning Technologies, 17(1), 619-628. https://doi.org/10.1109/TLT.2023.3289498

Lamb, R., Neumann, K., & Linder, K. A. (2022). Real-time prediction of science student learning outcomes using machine learning classification of hemodynamics during virtual reality and online learning sessions. Computers and Education: Artificial Intelligence, 3, Article 100078. https://doi.org/10.1016/j.caeai.2022.100078

Leite, W. L., Kuang, H., Jing, Z., Xing, W., Cavanaugh, C., & Huggins-Manley, A. C. (2022). The relationship between self-regulated student use of a virtual learning environment for algebra and student achievement: An examination of the role of teacher orchestration. Computers & Education, 191, Article 104615. https://doi.org/10.1016/j.compedu.2022.104615

Li, C., Xing, W., Song, Y., & Lyu, B. (2025). RICE AlgebraBot: Lessons learned from designing and developing responsible conversational AI using induction, concretization, and exemplification to support algebra learning. Computers and Education: Artificial Intelligence, 8, Article 100338. https://doi.org/10.1016/j.caeai.2024.100338

Luckin, R., Holmes, W., Griffiths, M., & Forcier, L. B. (2016). Intelligence unleashed: An argument for AI in education. Pearson. https://oro.open.ac.uk/50104/

Maier, U., & Klotz, C. (2022). Personalized feedback in digital learning environments: Classification framework and literature review. Computers and Education: Artificial Intelligence, 3, Article 100080. https://doi.org/10.1016/j.caeai.2022.100080

Martin, F., Chen, Y., Moore, R. L., & Westine, C. D. (2020). Systematic review of adaptive learning research designs, context, strategies, and technologies from 2009 to 2018. Educational Technology Research and Development, 68(4), 1903-1929. https://doi.org/10.1007/s11423-020-09793-2

Maryono, D., Sajidan, Akhyar, M., Sarwanto, Wicaksono, B. T., & Prakisya, N. P. T. (2025). NgodingSeru.com: An adaptive e-learning system with gamification to enhance programming problem-solving skills for vocational high school students. Discover Education, 4(1), Article 157. https://doi.org/10.1007/s44217-025-00581-9

Palliyalil, S., & Mukherjee, S. (2020). Byju’s the learning app: An investigative study on the transformation from traditional learning to technology-based personalized learning. International Journal of Scientific and Technology Research, 9(3), 5054-5059. https://www.researchgate.net/publication/342901964_Byju's_The_Learning_App_An_Investigative_Study_On_The_Transformation_From_Traditional_Learning_To_Technology_Based_Personalized_Learning

Pardamean, B., Suparyanto, T., Cenggoro, T. W., Sudigyo, D., & Anugrahana, A. (2022). AI-based learning style prediction in online learning for primary education. IEEE Access, 10, 35725-35735. https://doi.org/10.1109/ACCESS.2022.3160177

Poly, A., Banu, P. K. N., Althuniyan, N., Azar, A. T., & Kamal, N. A. (2025). Fuzzy logic approach to cold-start challenges in deaf and hard of hearing recommender systems. Engineering, Technology & Applied Science Research, 15(3), 23449-23460. https://doi.org/10.48084/etasr.10825

Romero Alonso, R., Araya Carvajal, K., & Reyes Acevedo, N. (2024). Rol de la inteligencia artificial en la personalización de la educación a distancia: Una revisión sistemática [The role of artificial intelligence in personalizing distance education: A systematic review]. RIED—Revista Iberoamericana de Educación a Distancia, 28(1), 9-36. https://doi.org/10.5944/ried.28.1.41538

Rundquist, R., Holmberg, K., Rack, J., Mohseni, Z., & Masiello, I. (2024). Use of learning analytics in K–12 mathematics education: Systematic scoping review of the impact on teaching and learning. Journal of Learning Analytics, 11(3), 174-191. https://doi.org/10.18608/jla.2024.8299

Saif, A. F. M. S., Mahayuddin, Z. R., & Shapi’i, A. (2021). Augmented reality based adaptive and collaborative learning methods for improved primary education towards the fourth industrial revolution (IR 4.0). International Journal of Advanced Computer Science and Applications, 12(6), 614-623. https://doi.org/10.14569/IJACSA.2021.0120672

Sancenon, V., Wijaya, K., Yue Shu Wen, X., Adi Utama, D., Ashworth, M., & Ng, K. H. (2022). A new Web-based personalized learning system improves students’ learning outcomes. International Journal of Virtual and Personal Learning Environments, 12(1), 1-21. https://doi.org/10.4018/IJVPLE.295306

Shum, S. B., Ferguson, R., & Martinez-Maldonado, R. (2019). Human-centred learning analytics. Journal of Learning Analytics, 6(2), 1-9. https://doi.org/10.18608/jla.2019.62.1

Tretow-Fish, T. A. B., & Khalid, M. S. (2023). Methods for evaluating learning analytics and learning analytics dashboards in adaptive learning platforms: A systematic review. Electronic Journal of e-Learning, 21(5), 430-449. https://doi.org/10.34190/ejel.21.5.3088

Tricco, A. C., Lillie, E., Zarin, W., O’Brien, K. K., Colquhoun, H., Levac, D., Moher, D., Peters, M. D. J., Horsley, T., Weeks, L., Hempel, S., Akl, E. A., Chang, C., McGowan, J., Stewart, L., Hartling, L., Aldcroft, A., Wilson, M. G., Garritty, C., ... Straus, S. E. (2018). PRISMA extension for scoping reviews (PRISMA-ScR): Checklist and explanation. Annals of Internal Medicine, 169(7), 467-473. https://doi.org/10.7326/M18-0850

Wahyuningsih, Y., Djunaidy, A., & Siahaan, D. (2024). Concept–effect relationship weighting based on frequency of concept’s co-occurrence for developing personalized remedial learning path. IEEE Access, 12, 13878-13892. https://doi.org/10.1109/ACCESS.2024.3355138

Wang, S., Christensen, C., McBride, E., Kelly, H., Cui, W., Tong, R., Shear, L., Yarnall, L., & Feng, M. (2020). Identifying gaps in use of and research on adaptive learning systems. In H. C. Lane, S. Zvacek, & J. Uhomoibhi (Eds.), Proceedings of the 12th International Conference on Computer Supported Education (Vol. 1, pp. 118-124). SciTePress: Science and Technology Publications. https://doi.org/10.5220/0009590701180124

Yang, S., Carter, R. A., Zhang, L., & Hunt, T. (2021). Emergent themes of blended learning in K–12 educational environments: Lessons from the Every Student Succeeds Act. Computers & Education, 163, Article 104116. https://doi.org/10.1016/j.compedu.2020.104116

Yang, Y., Song, Y., Yan, J., & Ma, Q. (2025). Bridging classroom and real-life learning mediated by a mobile app with a self-regulation scheme: Impacts on Chinese EFL primary students’ self-regulated vocabulary learning outcomes, enjoyment, and learning behaviours. System, 131, Article 103671. https://doi.org/10.1016/j.system.2025.103671

Yim, I. H. Y., & Su, J. (2025). Artificial intelligence (AI) learning tools in K–12 education: A scoping review. Journal of Computers in Education, 12, 93-131. https://doi.org/10.1007/s40692-023-00304-9

Artificial Intelligence in Education: Mapping Adaptive Learning and Learning Analytics in K-12 Online, Virtual, and Distance Learning by Taoufik Boulhrir, Hanan Ghreir, Mahmoud Hamash, and Michael Robert is licensed under a Creative Commons Attribution 4.0 International License.