Volume 27, Number 2

Nuo Cheng¹, Hongxia Liu¹, Xiaoqing Xu¹*, Wei Zhao¹, Lifang Qiao², and Guohao Zhang³

¹School of Information Science and Technology, Northeast Normal University, Changchun, China; ²College of Education, Hebei Normal University, Shijiazhuang, Hebei, China; ³Shishi Experimental School of Chengdu Eastern New Area, Chengdu, Sichuan, China; *Corresponding Author

While generative artificial intelligence (GAI) has emerged as a vital support tool for collaborative learning, further exploration is required to achieve effective human-machine symbiosis in online collaborative processes. Grounded in symbiosis theory, our study developed a role-based intervention strategy to empower learners and their artificial intelligence (AI) partners through clearly defined responsibilities and collaborative interaction rules. In a quasi-experimental pretest-posttest design involving 58 graduate students, we employed statistical analyses and lag sequential analysis to evaluate the impact of the role intervention on online collaborative learning. The results indicated that the role design (a) significantly enhanced the quality of collaborative knowledge construction, (b) facilitated transitions among higher-order collaborative behaviors, and (c) improved perceived usefulness and ease of use of GAI among learners, although it also led to a moderate increase in collaborative cognitive load. These findings validated the core value of symbiosis theory-based role design for optimizing human-AI collaboration. Our study offered both a theoretical perspective on human-machine co-development and valuable insights for instructors to integrate AI tools and design more effective online collaborative learning activities.

Keywords: human-machine collaboration, human-AI collaboration, symbiosis theory, online collaborative learning, collaborative cognitive load

Online collaborative learning has become a prominent instructional approach, enabling learners to connect across time and distance. It relies on interaction and dialogue among learners (de Araujo et al., 2025). Through shared thinking within a team, members exchange information and challenge each other’s views, thereby building and deepening their collective knowledge together (Zabolotna et al., 2025). However, dialogue-based collaborative processes are not necessarily effective in online environments. The success of this approach depends on the background knowledge and depth of thought of each team member (Puntambekar et al., 2023). Consequently, overall team performance is often limited by the ceiling effect (Gui et al., 2025), driven by a few high-level members. Furthermore, weak supervision in online collaboration often hinders deeper cognitive processing (Saqr et al., 2024). Therefore, the issue of how to foster high-quality dialogue and overcome superficial collaboration in online collaborative learning remains an important research question.

Generative artificial intelligence (GAI) tools such as ChatGPT are built on large language models (LLMs) and offer broad knowledge, strong reasoning skills, and the ability to present multiple perspectives. They provide rich external cognitive resources for collaborative learning (Wei et al., 2025). Through natural language chat, GAI can join the knowledge-building process in real time (Pozdniakov et al., 2025). It helps teams overcome individual knowledge gaps (Hao et al., 2024) and creates a deeper blend of human and machine intelligence. This blend helps group thinking move toward higher-level innovation and reflection (Chen et al., 2023).

Although human-machine collaboration is seen as a key feature of future education, most research has focused on individual learning with AI or on single conversational systems (S. Wang et al., 2024). There has been little work on integrating GAI into team collaboration processes or deeper group knowledge construction, particularly in online learning environments (S. Feng, 2025). In practice, GAI has often been limited to information retrieval and answer generation. This has led to two problematic patterns: learners either over-rely on AI, passively accepting its responses (Zhai et al., 2024), or treat it as a mere tool, ignoring its full potential (Adewale et al., 2024; Mai et al., 2024). Both patterns fail to integrate the strengths of human beings and GAI, thereby missing opportunities to create synergy.

A general theoretical framework is needed for effective human-AI online collaboration. Symbiosis theory offers guiding principles for cultivating a balanced, mutually beneficial relationship between humans and AI. Accordingly, this study introduced a symbiosis-driven role design strategy. We assigned distinct roles to learners and GAI, embedding GAI as an equal intelligent peer within online group discussions. Through empirical analysis of human-AI interaction patterns, the study provided a coherent theoretical framework and practical guidance for effective symbiotic learning environments.

GAI has shown great promise in educational technology and has been reported to significantly improve learning outcomes (Park & Doo, 2024). As an important social interaction tool, GAI can offer learners viewpoints they had not considered, helping them grow their knowledge and skills (Borge et al., 2024). It can also enhance problem-solving by providing diverse resources (Canonigo, 2024). Even more importantly, GAI can give customized feedback based on each learner’s needs and preferences (Shahzad et al., 2025). Due to these capabilities, GAI is considered a new normal in learning (Duranti, 2023), opening new directions for research on its value in online collaborative learning.

Several studies have explored GAI in collaborative settings. For example, Gyasi et al. (2025) examined how an AI feedback assistant affected group knowledge construction. Naik et al. (2025) looked at how LLMs offer personalized reflection during collaborative learning. Zheng et al. (2024) found that AI-driven feedback and feedforward strategies significantly improve the quality of collaborative knowledge building. Although these studies commonly used GAI to support collaboration through feedback or scripted guidance, they largely construed GAI as a tool to assist learners rather than as a participant that adapts and develops within the collaborative process. Moreover, they have not provided a comprehensive evaluation of the various ways learners and GAI can collaborate within groups or of the overall effectiveness of that collaboration, particularly in online learning environments.

There have also been concerns about using GAI in online collaborative learning. Mena-Guacas et al. (2023) argued that relying too much on AI can weaken deep peer interactions and harm collaboration quality. Li et al. (2024) stressed that human-human and human-machine collaboration each have their own strengths and should be combined thoughtfully. Ji et al. (2025) called for future research to examine the full process of human-machine co-creation in educational settings, especially how knowledge, thinking, and behavior interact when GAI is involved. Therefore, designing effective human-GAI interaction mechanisms that use GAI as a partner while preserving and enhancing learner dialogue and higher-order thinking remains a critical challenge in achieving true human-AI symbiosis.

Human-machine collaboration refers to how people and intelligent technologies interact and work together, aiming to use each other’s strengths for mutual improvement (Li et al., 2025). The rise of GAI like ChatGPT has expanded this model from simple human-machine interaction to full human-AI collaboration. In such settings, GAI can play roles like teaching assistant (Barrot, 2024) or learning partner (Cress & Kimmerle, 2023), offering expert insights and creative suggestions.

However, most studies have focused on one-on-one human-AI interactions, overlooking group settings with multiple members (Shin et al., 2023). Online collaborative learning relies on social interaction and shared cognition among group members. There is still a lack of systematic, empirical research on how AI helps or hinders these group processes (Lee et al., 2025). This gap limits our understanding of true human-AI symbiotic collaboration.

In practice, human-AI interaction has often exhibited two extreme patterns. The first is commensalism, when technology intrudes too much and suppresses human agency. Learners depend more and more on AI (Zhang et al., 2024). Human input is reduced to data for AI training (Karimova et al., 2025). The second pattern is parasitism, in which technology intervenes too deeply and weakens higher-order human skills (Morales-García et al., 2024). Both patterns fail to leverage the unique strengths of humans and AI.

A solid theoretical framework would weaken or break these negative patterns. Symbiosis theory outlines the key elements of harmonious coexistence and provides clear design principles. By turning these elements into concrete mechanisms, such as role division and interaction boundaries, we can transcend commensal and parasitic modes and build truly synergistic human-machine collaboration. Unlike self-regulated learning, centered on individual regulation (Du, 2025), or cognitive load theory, concerned with task-related cognitive demands (Janssen & Kirschner, 2020), symbiosis theory focuses on how interacting agents co-develop through sustained collaboration (Mackay, 2024). Furthermore, symbiosis theory can be viewed as an extension of distributed cognition theory, applied to cognition distributed across humans and GAI (Hollan et al., 2000). Consequently, we designed a human-GAI collaborative role strategy based on symbiosis theory and evaluated its effectiveness through an experimental pretest-posttest study.

Symbiosis was originally used to describe how different organisms depend on each other in an ecosystem. In recent years, social scientists have applied this idea to other fields, coining terms like symbiotic learning (C.-L. Wang, 2019) and human-machine symbiosis (Kong et al., 2025). Among symbiotic relationships, mutualism—wherein each partner provides resources or functions the other needs—is the most stable form. It creates a continuous, positive feedback loop (Becks et al., 2025).

Applying human-machine symbiosis to GAI-supported online collaborative learning meant moving beyond the one-way AI-as-tool model. Instead, GAI and learners were treated as equal partners with complementary functions and dynamic interactions (Cress & Kimmerle, 2023; Cukurova, 2025). To turn the principles of mutualism into practical collaboration mechanisms, we used roles as the core mediator, with two main features.

First, using role mapping, we defined clear responsibilities and activity boundaries for both human participants and GAI. This ensured each role complemented the other and maintained a balanced power dynamic throughout the collaboration. The second feature involved interaction rules. We created rules for rotating roles that translated mutual dependence and functional complementarity into concrete, executable scripts. These rules guided when and how each role should step in during the collaboration process.

The following sections outline several role types and their interaction processes based on this framework. We then evaluate how effectively they enabled human-machine mutualism.

Guided by symbiosis theory, we assigned clear roles to both learners and GAI in groups, integrating GAI as an equal intelligent peer in the online discussion. We then examined the effects of this role strategy by assessing knowledge construction, cognitive interaction, and learners’ perceptions. Our goal was to improve human-GAI interaction patterns, boost the quality of collaborative knowledge building, and offer a replicable framework for efficient, symbiotic human-GAI collaboration. Our study was guided by the following research questions.

1. Does role design affect the outcomes of collaborative knowledge construction?
2. Does role design affect the development of the collaborative interaction process?
3. Does role design impact learners’ perceptions of collaboration?

We recruited a convenience sample of 59 students (7 male, 52 female; age M = 22.97 years, SD = 0.93) majoring in educational technology at a public university in China. The study took place in their graduate course Learning Analytics Methods, which consisted of eight modules. Our intervention was implemented during the last two modules, each of which required learners to work together on a discussion task. Before the course began, we formed 16 heterogeneous groups, balancing gender and matching students based on their prior course experience and collaboration skills. All participants provided written informed consent and were assured that their course grades would not be affected and that they could withdraw at any time. One participant did not submit data, so our analyses included data from 58 students.

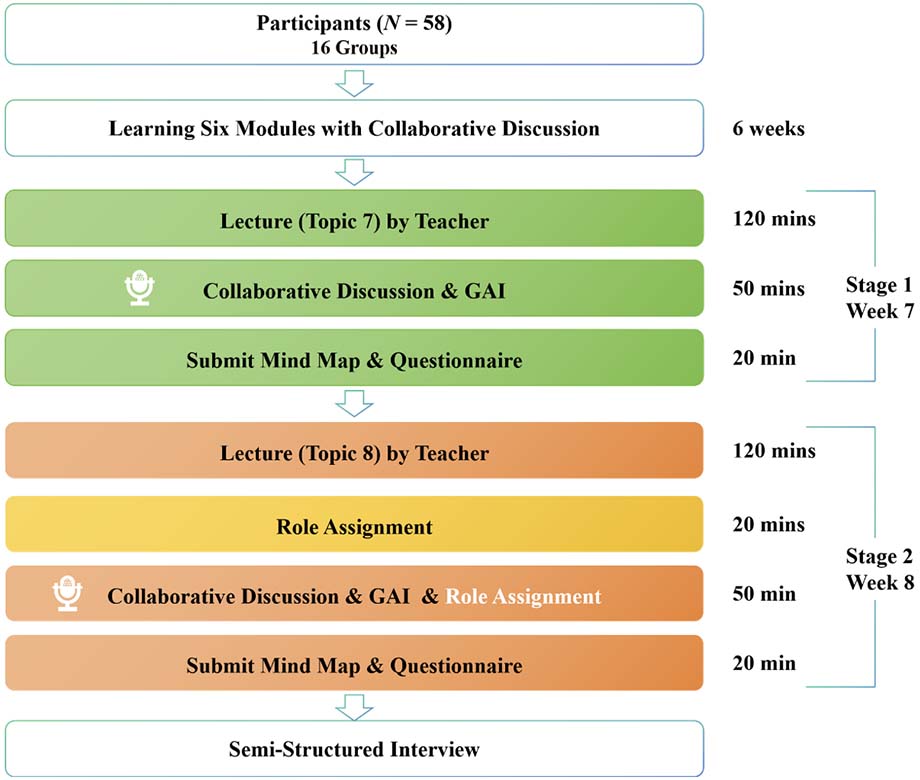

The study employed a quasi-experimental pretest-posttest design in which every group took part in both stages. The procedure is shown in Figure 1. Before the experiment, learners had already completed six weeks of collaborative learning, which ensured they were familiar with their group members and had comparable teamwork experience. The experiment comprised two stages, pre- and post-intervention, with the same procedure for each. The two stages were separated by a one-week interval. In each stage, the instructor first delivered a lecture on the module topic. Next, each group received an open-ended discussion task related to the topic of the lecture. The groups used an online platform (i.e., Tencent Meeting) for verbal collaborative discussions. Afterwards, the groups submitted their mind maps and completed related questionnaires.

The only difference between the two stages was the presence of the role intervention. In stage 1, groups discussed the task without any role assignment, although they could still use the GAI tool (i.e., Yuanbao, an advanced AI assistant developed by Tencent). In stage 2, before the discussion, the instructor clearly explained the role assignment and rotation rules. With the support of teaching assistants, participants then completed a 20-minute preparatory discussion to understand and get comfortable with the strategy before moving on to the formal task. During the formal discussions, the teaching assistants did not intervene; instead, each group collaborated with Yuanbao, which was configured with the role-design strategy. With the participants’ consent, both 50-minute discussion stages were recorded via Tencent Meeting. Finally, we conducted follow-up interviews with some participants.

Figure 1

Diagram of Research Process

To ensure GAI was effectively embedded in collaborative learning and enabled efficient human-machine collaboration, this study proposed a role intervention strategy based on the theory of symbiosis. The strategy comprised three components: role assignment, role rotation, and GAI role implementation.

First, in role assignment, to ensure functional complementarity between learners and GAI as symbiotic units, we designed three roles—moderator, analyst, and arguer—based on previous studies (Cheng et al., 2014; Q. Feng et al., 2025). Table 1 shows the responsibilities for each role. The moderator was a fixed role, always performed by a learner. Analyst and arguer were flexible roles performed by either learners or GAI.

Table 1

Role Design Based on Symbiosis Theory

| Role | Function description | Example prompts |
| --- | --- | --- |
| Moderator | Monitor discussion time and guide the group through the process | Let’s move on to the next question. Does anyone have different views or anything to add? |
| Analyst | Raise new questions or ideas based on the discussion topic | My perspective on this is... I think that... |
| Arguer | Support or challenge peers’ ideas; offer reasoned arguments or alternative viewpoints | I’d like to add... I feel there’s another angle... You might have overlooked... |

Second, for role rotation, we designed a dynamic mechanism to ensure mutual benefit rather than subordination. This mechanism focused on switching between the analyst and arguer roles. The analyst first proposed an initial argument, and the arguer(s) then provided supporting or opposing arguments. Once all group members had contributed, the analyst became an arguer, and an arguer became the analyst. This process was repeated until the group reached a consensus. The moderator also took on either the analyst or arguer role as needed. GAI participated as an equal partner. When a human analyst proposed a point, GAI usually acted as the arguer; in practice, one group member interacted with the GAI and shared its feedback with the group. If the discussion stalled, GAI switched to the analyst role and introduced a new perspective, while all human members took on the arguer role to debate it together.
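The rotation rule can be illustrated with a minimal Python sketch. It is not the study's implementation: the names `plan_rounds`, `Round`, and `GAI` are hypothetical, the round-robin choice of the next human analyst is an assumption (the text only says that an arguer becomes the analyst), and stalled rounds are flagged by hand rather than detected.

```python
from dataclasses import dataclass

GAI = "GAI"  # hypothetical label for the AI partner


@dataclass
class Round:
    analyst: str
    arguers: list


def plan_rounds(humans, n_rounds, stalled_rounds=()):
    """Return the analyst/arguer assignment for each discussion round.

    humans: learner names; the moderator is simply one of them.
    stalled_rounds: indices of rounds in which the discussion stalled
    (supplied by hand; stall detection is outside this sketch).
    """
    rounds = []
    next_human = 0  # round-robin pointer (an assumption, not from the study)
    for index in range(n_rounds):
        if index in stalled_rounds:
            # GAI introduces a new perspective and every learner argues.
            rounds.append(Round(analyst=GAI, arguers=list(humans)))
            continue
        analyst = humans[next_human % len(humans)]
        # The other learners and GAI respond as arguers.
        arguers = [h for h in humans if h != analyst] + [GAI]
        rounds.append(Round(analyst=analyst, arguers=arguers))
        next_human += 1
    return rounds
```

For example, `plan_rounds(["Ann", "Bo", "Chen"], 4, {2})` gives the analyst role to Ann, Bo, GAI, and Chen in turn, with GAI acting as an arguer in every round it does not lead.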

Finally, the third component, GAI role implementation, focused on allocating clear roles to GAI and enabling it to collaborate with learners. We used the Yuanbao GAI tool and assigned it two roles, analyst and arguer, through predefined prompts (see Figure 2). When the system detected that learners needed new ideas, GAI switched to the analyst role and asked open-ended questions or suggested new perspectives. When learners evaluated a viewpoint, GAI switched to the arguer role and offered support or counterarguments with reasoning. These role prompts let GAI flexibly change roles based on the discussion flow. Yuanbao’s outputs were shared immediately with all group members to support further debate and discussion. These role assignments and rotations were explained by the instructor before the discussion and supported by collaboration scripts during the group work.

Figure 2

GAI Role (Yuanbao) Design Interface Description

Note. Interface screenshot from Yuanbao (https://yuanqi.tencent.com).

Our research questions, along with their data sources and analysis methods, are listed in Table 2, followed by detailed explanations for each data source.

Table 2

Research Questions, Data Sources, and Analysis Methods

| Research question | Data source | Analysis method |
| --- | --- | --- |
| Does role design affect the outcomes of collaborative knowledge construction? | Mind-map artifact scores | Wilcoxon signed-rank test |
| Does role design affect the development of the collaborative interaction process? | Recordings of the collaborative discussion process | Lag sequential analysis |
| Does role design impact learners’ perceptions of collaboration? | Collaborative cognitive load questionnaire; perceived usefulness and ease of use questionnaire; semi-structured interviews | Paired-samples t-test; Wilcoxon signed-rank test; content analysis |

We systematically evaluated each group’s mind maps before and after the intervention to assess changes in collaborative knowledge construction. Our evaluation used two dimensions, namely structure and content (Veiga et al., 2025). For structure, we measured the number of nodes, hierarchy depth, and branching breadth to quantify the map’s organization. For content, we applied the SOLO taxonomy (Leung, 2000) to rate element completeness, complexity of concept relationships, and overall system level on a scale from 1 (pre‐structural) to 5 (extended abstract; Lenski et al., 2022). Details are shown in Table 3.

Table 3

Evaluation Criteria for Mind Map Artifacts

| Dimension | Indicator | Description |
| --- | --- | --- |
| Structural | Number of nodes | Number of nodes in the mind map |
| Structural | Hierarchy | Hierarchy depth of the mind map |
| Structural | Branching | Branching breadth of the mind map |
| Content | Pre-structural | Key topic elements missing; minimal valid information |
| Content | Uni-structural | Some elements present; relationships are simple and singular |
| Content | Multi-structural | Most elements present; structure is reasonable, but not integrated |
| Content | Relational | All elements present; structure complete with diverse connections |
| Content | Extended abstract | Elements are comprehensive; structure is systematic and highly complex |
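The three structural indicators can be computed mechanically once a mind map is stored as a tree. The sketch below is illustrative only: the nested-dictionary format is hypothetical, and it assumes that the root counts as a node and as level 1 and that branching breadth means the number of first-level branches, none of which the scoring criteria state explicitly.

```python
def node_count(node):
    """Count every node in the map, including the root (assumed)."""
    return 1 + sum(node_count(child) for child in node.get("children", []))


def hierarchy_depth(node):
    """Number of levels on the longest root-to-leaf path (root = level 1)."""
    children = node.get("children", [])
    return 1 + max((hierarchy_depth(child) for child in children), default=0)


def branch_count(node):
    """Number of first-level branches leaving the central topic (assumed)."""
    return len(node.get("children", []))


# Hypothetical mind map used only to illustrate the three indicators.
example_map = {
    "label": "Learning analytics",
    "children": [
        {"label": "Data", "children": [
            {"label": "Logs"},
            {"label": "Surveys", "children": [{"label": "Likert items"}]},
        ]},
        {"label": "Methods"},
    ],
}
```

With `example_map`, the functions return 6 nodes, a depth of 4, and 2 branches.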

We analyzed the collaboration process using a coding framework based on Gunawardena et al.’s interaction analysis model (Zabolotna et al., 2025) and research on regulated learning (Järvelä & Hadwin, 2013; Zhang et al., 2021). Our framework comprised four cognitive dimensions and an additional irrelevant category, with 11 specific codes in total. Details are shown in Table 4.

To ensure coding quality, two team members independently and blindly coded the first group’s discussion after becoming thoroughly familiar with the rules. Their interrater agreement was κ = 0.73, indicating good reliability. Once this reliability was confirmed, the researchers used the same procedure to code all remaining discussion data.
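For readers who want to reproduce this kind of agreement check, the sketch below computes Cohen's kappa for two coders. It assumes the reported statistic is Cohen's kappa, which the text does not state explicitly, and the example labels are invented for illustration rather than taken from the study's data.

```python
from collections import Counter


def cohens_kappa(coder_a, coder_b):
    """Cohen's kappa for two coders labelling the same utterances."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("both coders must label the same non-empty sequence")
    n = len(coder_a)
    # Proportion of utterances on which the coders agree.
    observed = sum(a == b for a, b in zip(coder_a, coder_b)) / n
    freq_a, freq_b = Counter(coder_a), Counter(coder_b)
    # Agreement expected by chance from each coder's marginal frequencies.
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a.keys() | freq_b.keys()) / n ** 2
    if expected == 1:
        raise ValueError("kappa is undefined when chance agreement is 1")
    return (observed - expected) / (1 - expected)


# Invented labels using the codes from the coding framework.
coder_1 = ["Que", "Que", "Clr", "Clr", "Cft", "Cft", "Sup", "Sup", "Def", "Def"]
coder_2 = ["Que", "Que", "Clr", "Clr", "Cft", "Sup", "Sup", "Sup", "Def", "Clr"]
```

Here the coders agree on 8 of 10 utterances and chance agreement is 0.20, so `cohens_kappa(coder_1, coder_2)` is 0.75.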

Table 4

Cognitive Coding Framework for the Online Collaborative Learning Process

| Dimension | Indicator | Description | Code |
| --- | --- | --- | --- |
| Shared cognition | Questioning | Raising doubts, asking about the topic, or proposing issues to be discussed | Que |
| Shared cognition | Clarifying | Explaining one’s ideas, answering others’ questions, or stating viewpoints | Clr |
| Divergent cognition | Conflicting | Expressing disagreement, presenting views that challenge existing ones | Cft |
| Divergent cognition | Supporting | Directly or indirectly agreeing with others, providing positive feedback | Sup |
| Divergent cognition | Defending | Restating one’s position, offering in-depth explanations or evidence | Def |
| Elevating cognition | Consensus | Reaching a shared understanding or agreement during discussion | Cns |
| Elevating cognition | Evaluating | Judging the relevance or value of ideas presented | Evl |
| Regulatory cognition | Task understanding | Asking or answering questions about the task’s content, purpose, or procedures | TU |
| Regulatory cognition | Planning and goal setting | Discussing division of labor, scheduling, and task sequencing | Pla |
| Regulatory cognition | Monitoring and reflection | Tracking progress, evaluating timelines, or reflecting on collaboration methods | M/F |
| Irrelevant | Irrelevant | Utterances or behaviors not related to collaborative cognition | IR |

To assess how learners perceived cognitive load during online collaborative discussions, we used the collaborative cognitive load scale by Ouyang et al. (2022). This six‐item questionnaire employs a five‐point Likert scale and has demonstrated strong reliability and validity (Cronbach’s α = 0.833; KMO = 0.806).

We also measured changes in how learners perceived usefulness and ease of use of GAI before and after the intervention. We adapted an eight‐item scale from Muñoz-Carril et al. (2021), modifying items to fit our context (e.g., I believe using GAI during discussion activities helps improve online collaborative learning outcomes). Half of the items assessed perceived usefulness, and half assessed perceived ease of use, all on a five‐point Likert scale. The adapted scale showed good reliability and validity (Cronbach’s α = 0.910 for usefulness and 0.831 for ease of use; KMO = 0.787 and 0.796, respectively).
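The reliability coefficients reported for both questionnaires are Cronbach's α values. As a minimal sketch of how such a coefficient is obtained, the function below applies the standard formula to item-level responses; the response data are invented, and the function name `cronbach_alpha` is an illustrative choice rather than anything used in the study.

```python
from statistics import variance


def cronbach_alpha(items):
    """Cronbach's alpha from item scores.

    items: one list per questionnaire item, each holding the scores of the
    same respondents in the same order.
    """
    k = len(items)
    if k < 2:
        raise ValueError("alpha needs at least two items")
    # Total score of each respondent across all items.
    totals = [sum(scores) for scores in zip(*items)]
    sum_item_variances = sum(variance(item) for item in items)
    return k / (k - 1) * (1 - sum_item_variances / variance(totals))


# Invented five-point Likert responses: two items, four respondents.
example_items = [
    [1, 2, 3, 4],
    [2, 1, 4, 3],
]
```

For `example_items`, the item variances sum to 10/3 and the total-score variance is 16/3, giving α = 0.75.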

After the discussion ended, follow-up interviews were conducted with participants from two groups to explore their subjective experiences of the intervention and to help interpret the survey and process data. The interviews were based on one main question: Compared to using GAI directly last week, how did assigning roles affect your group’s online collaboration, and how did you feel about it? During each interview, we asked additional questions as needed to clarify and deepen the learners’ responses.

We compared pre- and post-intervention mind maps using paired‐samples Wilcoxon signed-rank tests (see Table 5). In the structural dimension, there were no significant changes in the number of nodes (z = 0.944, p = 0.345) or branches (z = 1.532, p = 0.125), indicating that the role intervention did not alter students’ overall coverage of key points or branching logic. However, hierarchy depth decreased significantly (z = 2.511, p = 0.012), suggesting that, after the intervention, students focused more on core concepts and primary relationships by reducing secondary levels, resulting in a more streamlined map structure. In the content dimension, the overall score increased significantly (z = 3.771, p < 0.001), demonstrating that role design effectively enhanced the completeness of elements and the logical organization of relationships in the mind maps.

Table 5

Wilcoxon Signed-Rank Test Results for Mind Map Scores

| Dimension | Indicator | Pretest M | Pretest SD | Posttest M | Posttest SD | z | p |
| --- | --- | --- | --- | --- | --- | --- | --- |
| Structural | Node count | 44.35 | 11.70 | 40 | 15.63 | 0.944 | 0.345 |
| Structural | Level | 4.82 | 0.64 | 4.06 | 0.66 | 2.511 | 0.012* |
| Structural | Branch count | 4.00 | 0.94 | 3.47 | 1.01 | 1.532 | 0.125 |
| Content | Score | 3.65 | 0.50 | 4.59 | 0.51 | 3.771 | < 0.001* |

Note. *p < 0.05.
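The z values in Table 5 come from Wilcoxon signed-rank tests. The sketch below shows one common way to obtain such a z statistic, using the normal approximation with average ranks for ties and no continuity correction. These choices are assumptions, since the source does not describe the software settings, and the sign of z depends on the direction of subtraction (here post minus pre), whereas the table appears to report magnitudes.

```python
from math import sqrt


def wilcoxon_signed_rank_z(pre, post):
    """Normal-approximation z for the Wilcoxon signed-rank test."""
    diffs = [b - a for a, b in zip(pre, post) if b != a]  # drop zero differences
    n = len(diffs)
    if n == 0:
        raise ValueError("all paired differences are zero")
    ordered = sorted(range(n), key=lambda i: abs(diffs[i]))
    ranks = [0.0] * n
    tie_term = 0.0
    start = 0
    while start < n:
        # Extend the block while the absolute differences are tied.
        end = start
        while end + 1 < n and abs(diffs[ordered[end + 1]]) == abs(diffs[ordered[start]]):
            end += 1
        average_rank = (start + end) / 2 + 1  # ranks are 1-based
        for position in range(start, end + 1):
            ranks[ordered[position]] = average_rank
        size = end - start + 1
        tie_term += size ** 3 - size
        start = end + 1
    w_plus = sum(rank for rank, d in zip(ranks, diffs) if d > 0)
    mean_w = n * (n + 1) / 4
    var_w = n * (n + 1) * (2 * n + 1) / 24 - tie_term / 48  # tie-corrected variance
    return (w_plus - mean_w) / sqrt(var_w)
```

Passing each group's pretest and posttest scores for one indicator returns a single z value; a positive result means scores tended to rise after the intervention.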

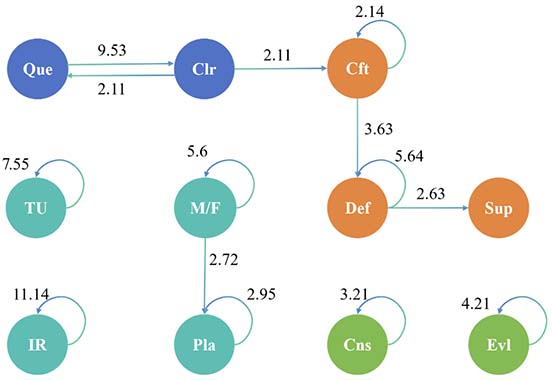

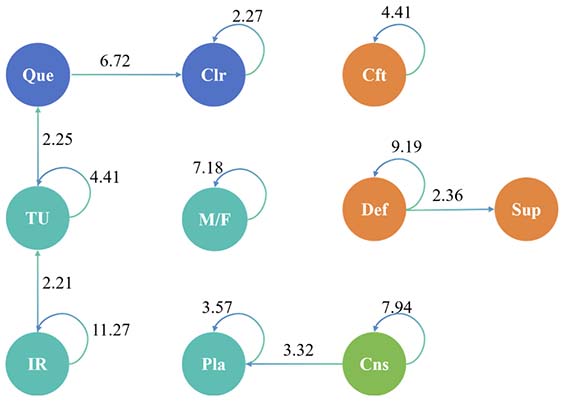

We used GSEQ 5.1 to perform lag sequential analysis on the coded discussion data before and after the role intervention. Figures 3 and 4 show the results: each circle represents a cognitive code, and arrows between circles indicate significant transitions (adjusted residual z > 1.96). For example, the transition Que → Clr means that a question is followed by clarification more often than expected by chance. Node colors represent the coding dimensions; for example, blue represents shared cognition.
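To make the significance criterion concrete, the following sketch computes lag-1 adjusted residuals from a coded sequence using the standard contingency-table formula. It is an approximation of the general technique, not a reproduction of GSEQ's options, and the function name and example sequence are hypothetical.

```python
from collections import Counter
from math import sqrt


def lag1_adjusted_residuals(codes):
    """Adjusted residual z for every observed (given, target) code pair at lag 1."""
    transitions = Counter(zip(codes, codes[1:]))
    total = sum(transitions.values())
    row_totals, col_totals = Counter(), Counter()
    for (given, target), count in transitions.items():
        row_totals[given] += count
        col_totals[target] += count
    residuals = {}
    for given in row_totals:
        for target in col_totals:
            # Frequency expected if the next code were independent of the current one.
            expected = row_totals[given] * col_totals[target] / total
            spread = sqrt(expected
                          * (1 - row_totals[given] / total)
                          * (1 - col_totals[target] / total))
            if spread == 0:
                continue  # residual undefined when a code fills a whole margin
            residuals[(given, target)] = (transitions[(given, target)] - expected) / spread
    return residuals


# Hypothetical sequence in which every question is followed by a clarification.
example_codes = ["Que", "Clr"] * 4 + ["Que"]
```

For `example_codes`, the Que → Clr residual is about 2.83, which exceeds 1.96 and would therefore be drawn as an arrow, while Que → Que is about -2.83.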

First, we examined the coding nodes. After the intervention, we observed a new self‐transition for the evaluate code, an indication of high‐level cognitive activity that did not appear before. This suggested that the role design successfully triggered more advanced thinking during collaboration.

Next, we analyzed the behavior paths. Before the intervention, most groups followed a simple loop centered on questioning, typically moving from task understanding to questioning and then to clarification. This pattern aligned with their pretest mind maps, which were structurally broad but scored lower for content depth. After introducing roles, discussions shifted clearly from shared cognition toward divergent cognition; conflicts among peers sparked a flow from questioning and clarification to defending and supporting ideas. Although most structural metrics of the mind maps did not change significantly, their content became more systematic and innovative, reflecting the added high-level thinking.

Finally, we looked at key path changes. The previously significant path from irrelevant to task understanding disappeared post‐intervention, showing that role assignments effectively steered learners away from off‐topic remarks back to the task. We also observed an increase in planning and monitoring behaviors, suggesting that the division of roles fostered regulatory cognition, resulting in more structured interaction patterns and stronger self-regulation within online collaborative groups.

Figure 3

Pre-Intervention Behavioral Sequence Transition Diagram

Figure 4

Post-Intervention Behavioral Sequence Transition Diagram

The collaborative cognitive load data met the assumption of normality (Shapiro-Wilk test, p = 0.084). Therefore, we used a paired-samples t-test to compare perceived cognitive load before and after the intervention (see Table 6). The results showed that posttest cognitive load was significantly higher than pretest load (t = -2.332, p = 0.023, Cohen’s d = 0.306), indicating that the role-based intervention increased learners’ cognitive load during online group collaboration.

Table 6

Paired-Samples t-Test Results for Collaborative Cognitive Load

| Dimension | Pretest M | Pretest SD | Posttest M | Posttest SD | t | p | Cohen’s d |
| --- | --- | --- | --- | --- | --- | --- | --- |
| Collaborative cognitive load | 4.02 | 0.50 | 4.19 | 0.44 | -2.332 | 0.023* | 0.306 |

Note. *p < 0.05.
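As a rough consistency check on Table 6, the paired t statistic and Cohen's d are linked when d is computed from the paired differences: d = |t| / √n. With n = 58, 2.332 / √58 ≈ 0.306, which matches the reported effect size and suggests, although the source does not say so, that this variant of d was used. The sketch below shows both calculations; the function names are illustrative, and differences are taken as post minus pre, so the sign of t may be opposite to the table's.

```python
from math import sqrt
from statistics import mean, stdev


def paired_t_and_d(pre, post):
    """Return (t, d) for paired scores, with differences taken as post - pre."""
    diffs = [after - before for before, after in zip(pre, post)]
    d = mean(diffs) / stdev(diffs)  # standardized mean of the paired differences
    t = d * sqrt(len(diffs))        # t statistic with len(diffs) - 1 degrees of freedom
    return t, d


def d_from_t(t, n):
    """Recover the magnitude of d from a reported paired t statistic and sample size."""
    return abs(t) / sqrt(n)
```

For instance, `d_from_t(-2.332, 58)` rounds to 0.306.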

We compared learners’ pre- and post-intervention perceived usefulness and ease of use using Wilcoxon signed-rank tests (see Table 7). The mean perceived usefulness rose from 4.16 to 4.31 (z = 1.809, p = 0.070), showing an upward trend that did not reach statistical significance. In contrast, mean perceived ease of use increased significantly from 4.02 to 4.28 (z = 2.885, p = 0.004). This suggested that after the intervention, learners not only appreciated the tool’s functionality but also found it noticeably easier to operate.

Table 7

Wilcoxon Signed-Rank Test Results for Perceived Usefulness and Ease of Use

| Dimension | Pretest M | Pretest SD | Posttest M | Posttest SD | z | p |
| --- | --- | --- | --- | --- | --- | --- |
| Perceived usefulness | 4.16 | 0.51 | 4.31 | 0.49 | 1.809 | 0.070 |
| Perceived ease of use | 4.02 | 0.52 | 4.28 | 0.54 | 2.885 | 0.004* |

Note. *p < 0.05.

The findings of this study demonstrated that role intervention based on symbiosis theory markedly enhanced collaborative knowledge construction. Specifically, the mind maps became more streamlined in structure and more logically coherent in content, which elevated the expressive quality of collaborative outcomes. This indicated that role design prompted groups to shift from simply completing tasks to engaging in more in-depth knowledge construction (Zhu et al., 2023). In line with Gyasi et al. (2025), our results confirmed that embedding AI partners into online group work can support deeper knowledge construction.

Moreover, the results of collaborative knowledge construction corroborated the changes in cognitive behavior; in post-intervention discussions, high-level codes (e.g., evaluation) and transitions such as conflict → defense increased substantially. This indicated that clear role assignments provided learners with guided pathways for reflection and integration (P. Wang et al., 2024) and enabled them to actively process and deepen incoming AI-generated input. As a result, cognitive flows became more efficient, and human and machine intelligences complemented each other more effectively.

In summary, this study empirically validated the pivotal role of structured human-AI role designs in promoting knowledge construction and offered concrete guidance for future role and interaction designs in human-AI symbiotic systems. Furthermore, this strategy can be applied across disciplines and could help teams with varying levels of collaborative ability and knowledge to achieve better collaborative outcomes.

The results indicated that role design based on symbiosis theory significantly optimized online collaborative behaviors. By assigning clear roles to learners and AI partners, we observed more frequent sequences of higher-order cognitive actions, such as evaluation, during online discussions. This shift from simple questioning to deeper analysis and critique (Peltoniemi et al., 2025) demonstrated how role allocation energized group thinking.

Lag sequential analysis further revealed a change in cognitive linkage paths. Pre-intervention interactions centered on questioning → clarifying, whereas post-intervention discussions pivoted around conflict → defending. In this new pattern, learners made fewer off-topic or repetitive queries and engaged more often in reflection and defense, which indicated that role design encouraged learners to employ metacognitive strategies (Faza & Lestari, 2025). Related research has found that role design fostered more positive interpersonal beliefs and a greater sense of psychological safety among learners (Ching & Hsu, 2016); such a supportive environment may help account for the observed behavioral changes. This outcome provided a viable approach for online learning, particularly in asynchronous contexts, by clarifying the division of labor and responsibilities between humans and AI, as well as reducing the risk of superficial interactions associated with weak supervision.

Moreover, these results highlighted the vital role of structured role design in multi-actor human-AI settings, where the strengths of humans and machines are balanced to achieve functional complementarity (Karimova et al., 2025). Learners exercised agency within their assigned roles while AI partners, acting as analysts or arguers, provided timely and diverse insights (Shahzad et al., 2025). This role rotation transcended the limitations of human-only interaction, enabling a one-plus-one-is-greater-than-two synergy among learners and GAI, and ultimately led to increased collaborative participation and enhanced collaborative construction outcomes. These findings provided empirical evidence to support the design of human-AI symbiotic systems that actively shape and renew collaborative cognitive processes.

The results indicated that the role-based intervention increased learners’ perceived cognitive load, a finding consistent with previous research (Strauß et al., 2025). Under the role-division mechanism, students had to switch perspectives and deploy different cognitive strategies to fulfill each role, which raised their subjective effort. At the same time, quantitative analyses showed that role design improved learners’ perceived usefulness and ease of use of the GAI. In other words, despite the extra mental effort required, students felt that role assignments aligned the GAI partner’s contributions more closely with their collaborative needs, which made the effort invested in the tool more worthwhile.

This experience emerged clearly in follow-up interviews. As one participant noted, “assigning roles helped us know exactly when to call on the AI instead of relying on its answers from the start.” Another said that “the division of labor made the process a bit more complex, but it also made us think more carefully about who should lead each step.” These comments suggested that while role design sharpened focus and streamlined discussions, it inevitably added cognitive overhead for planning and switching roles.

Overall, the role intervention had a dual effect on human-AI symbiotic collaboration. It clarified responsibilities and made interactions more structured and effective, yet it required learners to invest extra cognitive effort to manage role transitions and integrate information. Nevertheless, given the clear improvements in both collaboration outcomes and cognitive depth, the increase in cognitive load appeared acceptable and worthwhile. The role design offered learners a clearer collaboration framework and provided empirical guidance for balancing efficiency and mental effort in future human-AI symbiotic systems.

This study designed human-machine interaction roles for collaborative learning based on symbiosis theory and tested them in authentic online collaborative environments. The findings have important theoretical and practical implications. Theoretically, we introduced a role strategy that used role division to operationalize symbiosis theory in specific human-machine collaboration scenarios, thereby extending the theory’s application to online collaborative environments. Practically, our study provides teachers with guidance on integrating GAI into online collaborative learning and on designing efficient online learning activities. Assigning roles and responsibilities can motivate learners and prevent blind reliance on AI, while making full use of AI’s supportive functions to encourage more in-depth discussion. Our research thus extends the practical exploration of GAI in online collaborative learning contexts. Future work can refine the role-switching process to balance collaboration efficiency with cognitive load, creating a more effective and sustainable model of human-machine symbiotic collaboration.

This study had several limitations. First, despite efforts to control for prior collaboration experience, the pretest-posttest design may have introduced practice effects, and the small sample size and single cultural context may have constrained the generalizability of the results. Future research should conduct comparative experiments across disciplines and cultural contexts to examine how symbiotic human-GAI collaboration varies across settings, thereby testing the robustness of our findings and broadening their applicability. Second, embedding GAI through role assignments improved knowledge construction but also increased learners’ cognitive load. Future research could explore multimodal interactions to lower role-switching costs and make collaboration more seamless. Finally, this study examined human-AI collaboration involving only a single GAI. Future research could explore human-machine interaction and collaborative design in multi-agent cooperative scenarios.

Adewale, M. D., Azeta, A., Abayomi-Alli, A., & Sambo-Magaji, A. (2024). Impact of artificial intelligence adoption on students’ academic performance in open and distance learning: A systematic literature review. Heliyon, 10(22), e40025. https://doi.org/10.1016/j.heliyon.2024.e40025

Barrot, J. S. (2024). ChatGPT as a language learning tool: An emerging technology report. Technology, Knowledge and Learning, 29(2), 1151-1156. https://doi.org/10.1007/s10758-023-09711-4

Becks, L., Gaedke, U., & Klauschies, T. (2025). Emergent feedback between symbiosis form and population dynamics. Trends in Ecology & Evolution, 40(5), 449-459. https://doi.org/10.1016/j.tree.2025.02.006

Borge, M., Smith, B. K., & Aldemir, T. (2024). Using generative AI as a simulation to support higher-order thinking. International Journal of Computer-Supported Collaborative Learning, 19(4), 479-532. https://doi.org/10.1007/s11412-024-09437-0

Canonigo, A. M. (2024). Levering AI to enhance students’ conceptual understanding and confidence in mathematics. Journal of Computer Assisted Learning, 40(6), 3215-3229. https://doi.org/10.1111/jcal.13065

Chen, B., Zhu, X., & Díaz del Castillo H. F. (2023). Integrating generative AI in knowledge building. Computers and Education: Artificial Intelligence, 5, 100184. https://doi.org/10.1016/j.caeai.2023.100184

Cheng, B., Wang, M., & Mercer, N. (2014). Effects of role assignment in concept mapping mediated small group learning. The Internet and Higher Education, 23, 27-38. https://doi.org/10.1016/j.iheduc.2014.06.001

Ching, Y.-H., & Hsu, Y.-C. (2016). Learners’ interpersonal beliefs and generated feedback in an online role-playing peer-feedback activity: An exploratory study. The International Review of Research in Open and Distributed Learning, 17(2). https://doi.org/10.19173/irrodl.v17i2.2221

Cress, U., & Kimmerle, J. (2023). Co-constructing knowledge with generative AI tools: Reflections from a CSCL perspective. International Journal of Computer-Supported Collaborative Learning, 18(4), 607-614. https://doi.org/10.1007/s11412-023-09409-w

Cukurova, M. (2025). The interplay of learning, analytics and artificial intelligence in education: A vision for hybrid intelligence. British Journal of Educational Technology, 56(2), 469-488. https://doi.org/10.1111/bjet.13514

de Araujo, A., Papadopoulos, P. M., McKenney, S., & de Jong, T. (2025). Investigating the impact of a collaborative conversational agent on dialogue productivity and knowledge acquisition. International Journal of Artificial Intelligence in Education, 35, 2254-2280. https://doi.org/10.1007/s40593-025-00469-7

Du, Q. (2025). How artificially intelligent conversational agents influence EFL learners’ self-regulated learning and retention. Education and Information Technologies, 30, 21635-21701. https://doi.org/10.1007/s10639-025-13602-9

Duranti, A. (2023). If it is language that speaks, what do speakers do? Confronting Heidegger’s language ontology. Journal of Linguistic Anthropology, 33(3), 285-310. https://doi.org/10.1111/jola.12404

Faza, A., & Lestari, I. A. (2025). Self-regulated learning in the digital age: A systematic review of strategies, technologies, benefits, and challenges. The International Review of Research in Open and Distributed Learning, 26(2), 23-58. https://doi.org/10.19173/irrodl.v26i2.8119

Feng, Q., Li, W., Zhu, X., & Li, X. (2025). Exploring the effects of elaborated and motivational feedback on learning engagement in online scripted role discussion. International Journal of Educational Technology in Higher Education, 22(1), 2. https://doi.org/10.1186/s41239-024-00499-6

Feng, S. (2025). Group interaction patterns in generative AI‐supported collaborative problem solving: Network analysis of the interactions among students and a GAI chatbot. British Journal of Educational Technology, 56(5), 2125-2140. https://doi.org/10.1111/bjet.13611

Gui, Y., Cai, Z., Zhang, S., & Fan, X. (2025). Dyads composed of members with high prior knowledge are most conducive to digital game-based collaborative learning. Computers & Education, 230, 105266. https://doi.org/10.1016/j.compedu.2025.105266

Gyasi, J. F., Zheng, L., Love, S. F., & Boateng, F. O. (2025). The effects of three different approaches to human-AI collaboration on online collaborative learning. Educational Technology & Society, 28(2), 373-392. https://doi.org/10.30191/ETS.202504_28(2).TP07

Hao, X., Demir, E., & Eyers, D. (2024). Exploring collaborative decision-making: A quasi-experimental study of human and generative AI interaction. Technology in Society, 78, 102662. https://doi.org/10.1016/j.techsoc.2024.102662

Hollan, J., Hutchins, E., & Kirsh, D. (2000). Distributed cognition: Toward a new foundation for human-computer interaction research. ACM Transactions on Computer-Human Interaction, 7(2), 174-196. https://doi.org/10.1145/353485.353487

Janssen, J., & Kirschner, P. A. (2020). Applying collaborative cognitive load theory to computer-supported collaborative learning: Towards a research agenda. Educational Technology Research and Development, 68(2), 783-805. https://doi.org/10.1007/s11423-019-09729-5

Järvelä, S., & Hadwin, A. F. (2013). New frontiers: Regulating learning in CSCL. Educational Psychologist, 48(1), 25-39. https://doi.org/10.1080/00461520.2012.748006

Ji, Y., Zhan, Z., Li, T., Zou, X., & Lyu, S. (2025). Human-machine cocreation: The effects of ChatGPT on students’ learning performance, AI awareness, critical thinking, and cognitive load in a STEM course toward entrepreneurship. IEEE Transactions on Learning Technologies, 18, 402-415. https://doi.org/10.1109/TLT.2025.3554584

Karimova, G. Z., Kim, Y. D., & Shirkhanbeik, A. (2025). Poietic symbiosis or algorithmic subjugation: Generative AI technology in marketing communications education. Education and Information Technologies, 30(2), 2185-2209. https://doi.org/10.1007/s10639-024-12877-8

Kong, X., Fang, H., Chen, W., Xiao, J., & Zhang, M. (2025). Examining human-AI collaboration in hybrid intelligence learning environments: Insight from the synergy degree model. Humanities and Social Sciences Communications, 12(1), 821. https://doi.org/10.1057/s41599-025-05097-z

Lee, G.-G., Mun, S., Shin, M.-K., & Zhai, X. (2025). Collaborative learning with artificial intelligence speakers. Science & Education, 34(2), 847-875. https://doi.org/10.1007/s11191-024-00526-y

Lenski, S., Elsner, S., & Großschedl, J. (2022). Comparing construction and study of concept maps: An intervention study on learning outcome, self-evaluation and enjoyment through training and learning. Frontiers in Education, 7. https://doi.org/10.3389/feduc.2022.892312

Leung, C. F. (2000). Assessment for learning: Using solo taxonomy to measure design performance of design & technology students. International Journal of Technology and Design Education, 10(2), 149-161. https://doi.org/10.1023/A:1008937007674

Li, T., Ji, Y., & Zhan, Z. (2024). Expert or machine? Comparing the effect of pairing student teacher with in-service teacher and ChatGPT on their critical thinking, learning performance, and cognitive load in an integrated-STEM course. Asia Pacific Journal of Education, 44(1), 45-60. https://doi.org/10.1080/02188791.2024.2305163

Li, T., Zhan, Z., Ji, Y., & Li, T. (2025). Exploring human and AI collaboration in inclusive STEM teacher training: A synergistic approach based on self-determination theory. The Internet and Higher Education, 65, 101003. https://doi.org/10.1016/j.iheduc.2025.101003

Mackay, W. E. (2024). Parasitic or symbiotic? Redefining our relationship with intelligent systems. Adjunct proceedings of the 37th annual ACM symposium on user interface software and technology (pp. 1-2). https://doi.org/10.1145/3672539.3695752

Mai, D. T. T., Da, C. V., & Hanh, N. V. (2024). The use of ChatGPT in teaching and learning: A systematic review through SWOT analysis approach. Frontiers in Education, 9. https://doi.org/10.3389/feduc.2024.1328769

Mena-Guacas, A. F., Rodríguez, J. A. U., Trujillo, D. M. S., Gómez-Galán, J., & López-Meneses, E. (2023). Collaborative learning and skill development for educational growth of artificial intelligence: A systematic review. Contemporary Educational Technology, 15(3), ep428. https://doi.org/10.30935/cedtech/13123

Morales-García, W. C., Sairitupa-Sanchez, L. Z., Morales-García, S. B., & Morales-García, M. (2024). Development and validation of a scale for dependence on artificial intelligence in university students. Frontiers in Education, 9. https://doi.org/10.3389/feduc.2024.1323898

Muñoz-Carril, P.-C., Hernández-Sellés, N., Fuentes-Abeledo, E.-J., & González-Sanmamed, M. (2021). Factors influencing students’ perceived impact of learning and satisfaction in computer supported collaborative learning. Computers & Education, 174, 104310. https://doi.org/10.1016/j.compedu.2021.104310

Naik, A., Yin, J. R., Kamath, A., Ma, Q., Wu, S. T., Murray, R. C., Bogart, C., Sakr, M., & Rose, C. P. (2025). Providing tailored reflection instructions in collaborative learning using large language models. British Journal of Educational Technology, 56(2), 531-550. https://doi.org/10.1111/bjet.13548

Ouyang, F., Chen, S., Yang, Y., & Chen, Y. (2022). Examining the effects of three group-level metacognitive scaffoldings on in-service teachers’ knowledge building. Journal of Educational Computing Research, 60(2), 352-379. https://doi.org/10.1177/07356331211030847

Park, Y., & Doo, M. Y. (2024). Role of AI in blended learning: A systematic literature review. The International Review of Research in Open and Distributed Learning, 25(1), 164-196. https://doi.org/10.19173/irrodl.v25i1.7566

Peltoniemi, A. J., Lämsä, J., Lehesvuori, S., & Hämäläinen, R. (2025). Understanding the role of I-positions facilitating knowledge construction in a computer-supported collaborative learning environment. International Journal of Computer-Supported Collaborative Learning, 20, 1-25. https://doi.org/10.1007/s11412-025-09447-6

Pozdniakov, S., Brazil, J., Mohammadi, M., Dollinger, M., Sadiq, S., & Khosravi, H. (2025). AI-assisted co-creation: Bridging skill gaps in student-generated content. Journal of Learning Analytics, 12(1), 129-151. https://doi.org/10.18608/jla.2025.8601

Puntambekar, S., Gnesdilow, D., & Yavuz, S. (2023). Understanding the effect of differences in prior knowledge on middle school students’ collaborative interactions and learning. International Journal of Computer-Supported Collaborative Learning, 18(4), 531-573. https://doi.org/10.1007/s11412-023-09405-0

Saqr, M., López-Pernas, S., & Murphy, K. (2024). How group structure, members’ interactions and teacher facilitation explain the emergence of roles in collaborative learning. Learning and Individual Differences, 112, 102463. https://doi.org/10.1016/j.lindif.2024.102463

Shahzad, M. F., Xu, S., Liu, H., & Zahid, H. (2025). Generative artificial intelligence (ChatGPT-4) and social media impact on academic performance and psychological well-being in China’s higher education. European Journal of Education, 60(1), e12835. https://doi.org/10.1111/ejed.12835

Shin, J. G., Koch, J., Lucero, A., Dalsgaard, P., & Mackay, W. E. (2023). Integrating AI in human-human collaborative ideation. Extended abstracts of the 2023 ACM conference on human factors in computing systems. https://doi.org/10.1145/3544549.3573802

Strauß, S., Tunnigkeit, I., Eberle, J., Avdullahu, A., & Rummel, N. (2025). Comparing the effects of a collaboration script and collaborative reflection on promoting knowledge about good collaboration and effective interaction. International Journal of Computer-Supported Collaborative Learning, 20(1), 121-159. https://doi.org/10.1007/s11412-024-09430-7

Veiga, F., Gil-Del-Val, A., Iriondo, E., & Eslava, U. (2025). Validation of the use of concept maps as an evaluation tool for the teaching and learning of mechanical and industrial engineering. International Journal of Technology and Design Education, 35(1), 383-401. https://doi.org/10.1007/s10798-024-09903-8

Wang, C.-L. (2019). Learning from and for one another: An inquiry on symbiotic learning. Educational Philosophy and Theory, 51(11), 1164-1172. https://doi.org/10.1080/00131857.2018.1526671

Wang, P., Luo, H., Liu, B., Chen, T., & Jiang, H. (2024). Investigating the combined effects of role assignment and discussion timing in a blended learning environment. The Internet and Higher Education, 60, 100932. https://doi.org/10.1016/j.iheduc.2023.100932

Wang, S., Wang, F., Zhu, Z., Wang, J., Tran, T., & Du, Z. (2024). Artificial intelligence in education: A systematic literature review. Expert Systems with Applications, 252, 124167. https://doi.org/10.1016/j.eswa.2024.124167

Wei, X., Wang, L., Lee, L.-K., & Liu, R. (2025). The effects of generative AI on collaborative problem-solving and team creativity performance in digital story creation: An experimental study. International Journal of Educational Technology in Higher Education, 22(1), 23. https://doi.org/10.1186/s41239-025-00526-0

Zabolotna, K., Nøhr, L., Iwata, M., Spikol, D., Malmberg, J., & Järvenoja, H. (2025). How does collaborative task design shape collaborative knowledge construction and group-level regulation of learning? A study of secondary school students’ interactions in two varied tasks. International Journal of Computer-Supported Collaborative Learning, 20(2), 171-199. https://doi.org/10.1007/s11412-024-09442-3

Zhai, C., Wibowo, S., & Li, L. D. (2024). The effects of over-reliance on AI dialogue systems on students’ cognitive abilities: A systematic review. Smart Learning Environments, 11(1), 28. https://doi.org/10.1186/s40561-024-00316-7

Zhang, S., Chen, J., Wen, Y., Chen, H., Gao, Q., & Wang, Q. (2021). Capturing regulatory patterns in online collaborative learning: A network analytic approach. International Journal of Computer-Supported Collaborative Learning, 16(1), 37-66. https://doi.org/10.1007/s11412-021-09339-5

Zhang, S., Zhao, X., Zhou, T., & Kim, J. H. (2024). Do you have AI dependency? The roles of academic self-efficacy, academic stress, and performance expectations on problematic AI usage behavior. International Journal of Educational Technology in Higher Education, 21(1), 34. https://doi.org/10.1186/s41239-024-00467-0

Zheng, L., Fan, Y., Chen, B., Huang, Z., Gao, L., & Long, M. (2024). An AI-enabled feedback-feedforward approach to promoting online collaborative learning. Education and Information Technologies, 29(9), 11385-11406. https://doi.org/10.1007/s10639-023-12292-5

Zhu, X., Shui, H., & Chen, B. (2023). Beyond reading together: Facilitating knowledge construction through participation roles and social annotation in college classrooms. The Internet and Higher Education, 59, 100919. https://doi.org/10.1016/j.iheduc.2023.100919

Enhancing Human-Generative Artificial Intelligence Online Collaboration Outcomes: The Pivotal Function of Symbiotic Role Design by Nuo Cheng, Hongxia Liu, Xiaoqing Xu, Wei Zhao, Lifang Qiao, and Guohao Zhang is licensed under a Creative Commons Attribution 4.0 International License.