Volume 27, Number 2

Woonhee Sung1* and Yasemin Gunpinar1

1School of Education, The University of Texas at Tyler; *Corresponding Author

This study examined an online professional development program integrating artificial intelligence (AI) literacy into mathematics instruction through unplugged, explainable machine-learning activities. Ten K–12 educators created explainable feature matrices to classify geometric shapes, making machine-learning algorithms visible and accessible without requiring complex software or technological tools. The intervention used ontological principles to bridge familiar mathematical concepts with algorithmic processes. Findings demonstrated positive changes across all constructs, with participants’ AI self-efficacy increasing from below-moderate to above-moderate levels. Sentiment analysis revealed dramatic shifts from negative to positive perceptions of AI in education, with 30% of participants initially using negative descriptors versus 0% post intervention. Thematic analysis revealed three key outcomes: (a) AI concepts became explainable and learnable, (b) participants gained enhanced understanding of classification processes, and (c) participants valued the practical applicability of unplugged approaches. The study demonstrates that effective AI literacy education can be delivered through conceptual understanding rather than technological implementation, providing an accessible pathway for K–12 AI integration regardless of resource constraints.

Keywords: AI literacy, explainable machine learning, geometry, online delivery, teacher professional development

Recent developments in artificial intelligence (AI) have led to significant discussion in K–12 education (Aydin & Yurdugül, 2024; Grover, 2024; UNESCO, 2022; Wang & Cheng, 2021). For example, recognizing AI technology as a new subject area for K–12 schools, UNESCO (2022) identified three main areas based on an analysis of government-endorsed AI curricula across 11 countries: (a) AI foundations (algorithm/programming, data literacy, contextual problem-solving); (b) ethics and social impact of AI; and (c) understanding, using, and developing AI. UNESCO also emphasized the need for combining AI curricula with relevant subject matter, mathematical principles, coding, and algorithms (Aydin & Yurdugül, 2024). Similarly, Grover (2024) suggested efforts be made to integrate AI learning with other subjects and that curricula be co-designed by researchers and teachers.

Building teacher capacity in AI education is important given the crucial role experienced teachers play in the Computer Science for All movement, an effort to make high-quality computer science education accessible for all learners (Grover, 2024). Moreover, teaching machine learning (ML) and computational thinking without computers is important because ML is one of AI’s core algorithmic technologies at the K–12 level (Aydin & Yurdugül, 2024; Grover, 2024; UNESCO, 2022).

Both researchers and teachers agree that it is necessary to prepare students for a future where AI is widely used (Casal-Otero et al., 2023). However, traditional approaches to AI education often require sophisticated technological tools and access to AI-integrated software that may require in-person monitoring and support. Further, these software programs or technological tools are not accessible in all educational settings, particularly in open and distributed learning contexts where educators serve geographically dispersed populations with varying resources.

Online professional development (PD) offers a promising solution for addressing these challenges at scale (Borko et al., 2009; Bragg et al., 2021). However, while a recent systematic review examining the use of AI in online learning and education (Dogan et al., 2023) revealed how to use AI technologies for online learning, it did not find a theme related to how to deliver AI literacy in open, distance, and e-learning (ODL) contexts. Moreover, recent studies on preparing teachers with AI literacy did not discuss online PD for ODL contexts but only examined what online AI tools can be used for PD (Ding et al., 2024; Kohnke et al., 2025; Younis, 2024).

This study addressed the challenge of building conceptual AI understanding while preserving the human interaction and immediate support essential to effective ODL environments (Amin et al., 2025). Specifically, we introduced online PD that could effectively deliver AI literacy by fostering engagement and embedding practical learning activities found to be effective online PD design elements (Bragg et al., 2021). To promote engagement among teacher learners attending online PD and encourage application of AI literacy in a familiar context, the intervention was designed based on a mathematics subject—2D geometric shapes. In short, we explored how an unplugged approach to teaching explainable ML concepts through geometry can make AI literacy accessible in diverse ODL contexts, thereby addressing the “double digital divide”—educators lacking both technology infrastructure and foundational AI literacy (Walter, 2024).

AI and ML refer to systems that mimic cognitive functions that human intelligence can perform based on large sets of data (Soori et al., 2023). AI is broader than ML, enabling a machine or system to sense, reason, act, or adapt like a human. ML is an algorithm that teaches computers how to learn from data to solve a problem (Martins & Gresse Von Wangenheim, 2023). As such, ML is a type of computational process that is an extension of programming, computational thinking, and coding (Mills et al., 2024).

Several domains of AI are discussed in K–12 AI literacy education to prepare students to apply AI in living, learning, and working in a digital world (Ng et al., 2021, 2023; Steinbauer et al., 2021), including (a) understanding AI (grasping how AI systems work); (b) using AI (applying AI tools and systems); (c) evaluating AI (assessing AI outputs and decisions) and creating with AI (developing AI solutions and applications); and (d) the ethics of AI (considering fairness, bias, and societal implications of AI systems; Ng et al., 2021).

Each of these components requires a different pedagogical approach to be applicable in K–12 settings. Discussing AI literacy in K–12 contexts, Wang and Lester (2023) identified critical needs for effective AI PD and AI integration within existing curriculum. Moreover, Abdennour et al. (2025) highlighted the need to foster AI literacy among non-technical learners, including hands-on, unplugged ways of delivering AI literacy to all learners regardless of their background knowledge in computer science or AI. Further, a systematic review study by Tan and Tang (2025) noted that in largely focusing on students, research in K–12 AI literacy has left a gap in understanding how teachers acquire and apply AI literacy in classrooms. Indeed, Tan and Tang (2025) found that teachers are not prepared to teach AI-related concepts and that PD opportunities remain limited.

To bridge these gaps, this study introduced pedagogical approaches for integrating AI literacy, mainly ML, into familiar subjects such as mathematics. These approaches address multiple AI domains that K–12 educators need to understand, apply, and evaluate in order to build knowledge of AI technology and foster informed decision-making, including ethical use of AI (Lee et al., 2021; Ng et al., 2021).

Research on AI use in mathematics has focused on applying AI tools or AI learning environments to improve student performance (Hwang & Tu, 2021; Richard et al., 2022), such as using AI to improve teaching strategies (Lee & Yeo, 2022) or teaching AI literacy to help ensure students’ future career success (Ng et al., 2021). However, many studies did not integrate fundamental AI algorithms and ML into subject matter that does not require the direct teaching of AI literacy or use of AI tools. Further, many studies discussed AI tools such as intelligent tutoring software (Lee & Yeo, 2022), personalized learning (Hwang & Tu, 2021), problem-solving assistance (Richard et al., 2022), and automated grading (Vittorini et al., 2021), all of which require the use of AI software as a tool rather than promoting understanding of how an AI algorithm is developed.

AI and ML, the key algorithms of AI technologies, are often considered “black boxes” because the algorithm is not visible, making it difficult to understand and interpret how it arrived at a given decision or prediction (Ng et al., 2021; Samek & Müller, 2019; Tiddi & Schlobach, 2022). Therefore, K–12 teachers need learning opportunities that go beyond using AI tools and offer concrete, hands-on activities that help them understand the underlying AI procedures and ML within a familiar context such as mathematics. The intervention presented in this paper addressed these challenges by designing accessible, interactive online activities.

AI’s opacity in decision-making has limited its wider adoption (Tiddi & Schlobach, 2022), which has led to challenges in teaching AI concepts to novice learners. This key challenge can be addressed by adopting explainable ML (XML), which focuses on making algorithmic machinery understandable and explainable (Belle & Papantonis, 2021). Transparency is a major element of explainability; it includes three dimensions: (a) simulatability: the ability to be simulated by a user; (b) decomposability: the ability to break a model into its subparts and explain them; and (c) algorithmic transparency: understanding the procedure the model goes through to generate decisions/outputs (Belle & Papantonis, 2021).

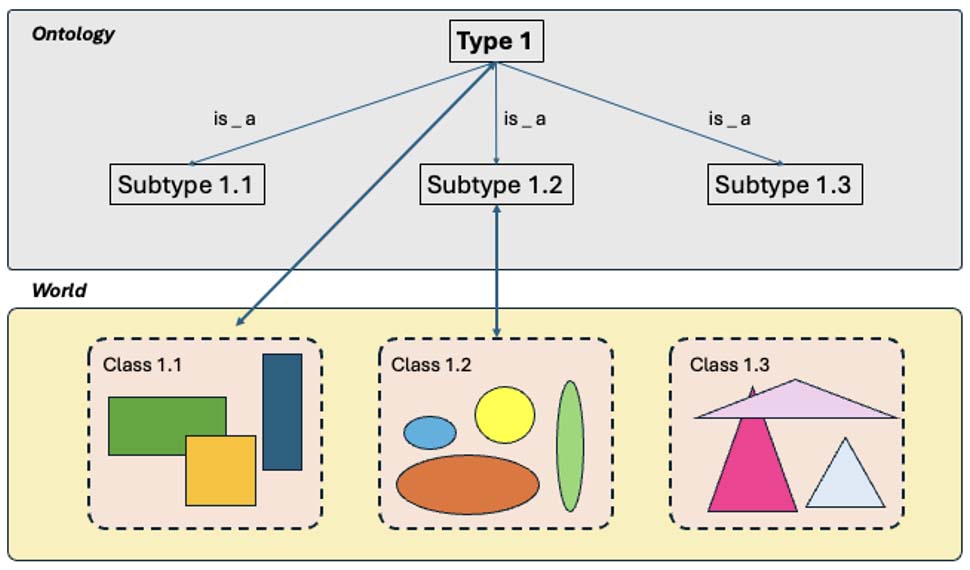

Similar to Lee et al.’s (2023) study, which applied XML to predict manufacturing defects by analyzing geometric features, our investigation infused the XML process into a geometry lesson to show how ML enables AI, thereby delivering ML concepts through an explainable and visible approach. Specifically, our online lesson was designed to support K–12 educators and all novices by making XML visible based on ontology concepts (Schulz & Stenzhorn, 2007). Ontologies provide semantic hierarchies, which demonstrate domain entity organization. We found similar applications in representing knowledge hierarchies of geometric shapes as presented in Figure 1.

Figure 1

The Relationship Between Types in Ontologies and Classes of World Entities

Note. Adapted from “The Ten Theses on Clinical Ontologies,” by S. Schulz and H. Stenzhorn, in L. Bos and B. Blobel (Eds.), Medical and Care Compunetics 4 (p. 271), 2007, IOS Press (https://books.google.ca/books/about/Medical_and_Care_Compunetics_4.html?id=3AfvAgAAQBAJ&redir_esc=y). Copyright 2007 by the authors and IOS Press. Adapted with permission.

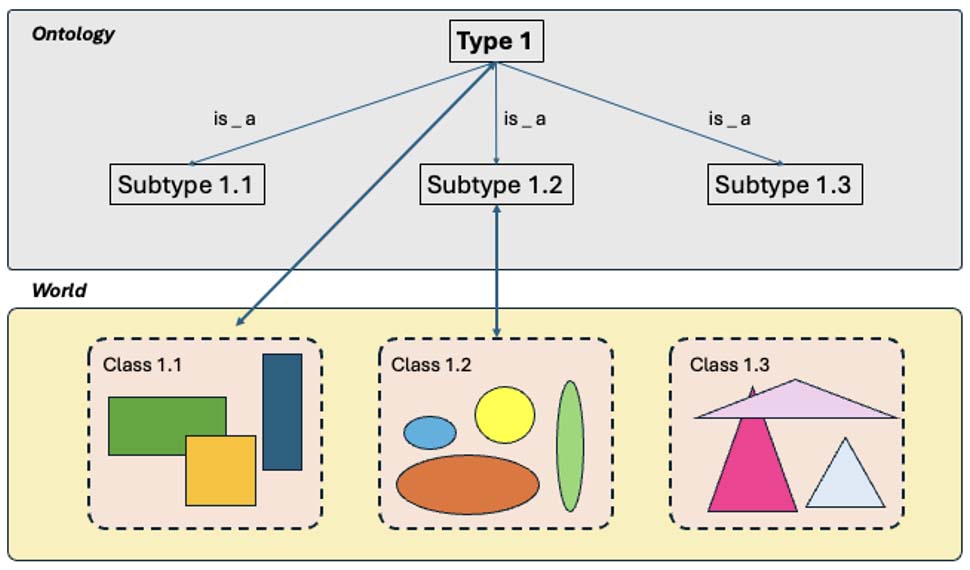

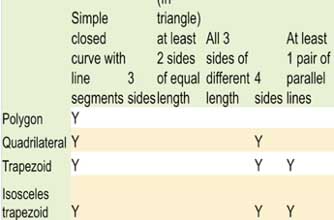

This ontological representation can be transformed into an explainable feature matrix that identifies the key features of each entity, class, and type. That is, the explainable feature matrix employs fundamental ontological principles to practice XML when analyzing geometric shapes, as presented in Table 1.

Table 1

Examples of a Simple Ontology, Hierarchical Classification, and the Explainable Feature Matrix Using Geometric Shapes as a Concept

Note. Adapted from “A Model-Driven Approach for Specifying Semantic Web Services,” by J. T. E. Timm and G. C. Gannod, in C. K. Chang and L.-J. Zhang (Chairs), Proceedings 2005 IEEE International Conference on Web Services (pp. 313-320), 2005, IEEE (https://doi.org/10.1109/ICWS.2005.9). Copyright 2005 IEEE. Adapted with permission.

A common way to visualize ontology is to use a semantic network or taxonomic hierarchy of its concepts (Ng et al., 2021; Timm & Gannod, 2005). A semantic network is used to “express logical sentences as graphical node-and-link diagrams” (Grimm, 2009, p. 112). Taxonomic order refers to the main classification principle of ontologies. As such, taxonomies “relate types with their superordinate types” and are generally named using “is_a” formatting (Schulz & Stenzhorn, 2007, p. 270).
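The "is_a" naming described above can be illustrated with a small Python sketch. The shape names and links below are hypothetical and simplified (each type has a single parent, so the fact that a square is also a rhombus is not represented); they are not taken from the study's figure or table.

```python
# Hypothetical is_a taxonomy for a few 2D shapes (illustrative only).
# Each key is a type; its value is the superordinate type it "is_a".
IS_A = {
    "square": "rectangle",
    "rectangle": "parallelogram",
    "rhombus": "parallelogram",
    "parallelogram": "quadrilateral",
    "quadrilateral": "polygon",
    "triangle": "polygon",
}


def ancestors(shape_type):
    """Follow is_a links upward and return the superordinate types in order."""
    chain = []
    while shape_type in IS_A:
        shape_type = IS_A[shape_type]
        chain.append(shape_type)
    return chain


def is_a(shape_type, candidate):
    """True if candidate is shape_type itself or one of its superordinate types."""
    return candidate == shape_type or candidate in ancestors(shape_type)


print(ancestors("square"))  # ['rectangle', 'parallelogram', 'quadrilateral', 'polygon']
```

Storing only the direct parent keeps the hierarchy easy to read aloud as "a square is_a rectangle, a rectangle is_a parallelogram," which is the same node-and-link reading a semantic network supports.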

We chose the term explainable feature matrix to refer to the creation of a table that displays selected features for classifying, comparing, and differentiating a given entity—in this case, geometric shapes. Ultimately, when the matrix is converted to codes via programming, the computer can read it and identify any given shape by checking the features specified in the matrix.
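As a rough illustration of what "converting the matrix to code" could look like, the following Python sketch stores a yes/no feature matrix and identifies a shape by checking its features against each row. The three features and five shapes are assumptions chosen for illustration, and each row describes the most specific label only; this is not the matrix participants built in the study.

```python
# Hypothetical yes/no features (illustrative; not the study's actual matrix).
FEATURES = ("two_pairs_parallel_sides", "all_sides_equal", "all_right_angles")

# One row per shape: True = "yes", False = "no", in the order of FEATURES.
FEATURE_MATRIX = {
    "square":        (True, True, True),
    "rectangle":     (True, False, True),
    "rhombus":       (True, True, False),
    "parallelogram": (True, False, False),
    "trapezoid":     (False, False, False),
}


def classify(observed):
    """Return the label whose row matches the observed features, or None."""
    row = tuple(observed[name] for name in FEATURES)
    for label, expected in FEATURE_MATRIX.items():
        if expected == row:
            return label
    return None  # no row matches: the matrix needs another shape or feature


mystery_shape = {
    "two_pairs_parallel_sides": True,
    "all_sides_equal": True,
    "all_right_angles": False,
}
print(classify(mystery_shape))  # rhombus
```

Because every decision is a visible row lookup, a learner can trace by hand exactly why a shape received its label.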

Of the three ML techniques—supervised, unsupervised, and reinforced learning—our approach focused on supervised learning models. These models predict outcomes based on trained data sets by learning relationships between features and labels (Jordan & Mitchell, 2015).
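To make "learning relationships between features and labels" concrete, here is a deliberately tiny Python sketch of supervised learning: it builds a feature-to-label table from labeled examples by majority vote. The examples, feature order, and function names are hypothetical, and this lookup-table learner is a stand-in for real supervised models rather than the method used in the study.

```python
from collections import Counter, defaultdict

# Hypothetical labeled examples: (feature tuple, label).
# Feature order: (two pairs of parallel sides, all sides equal, all right angles).
EXAMPLES = [
    ((True, True, True), "square"),
    ((True, True, True), "square"),
    ((True, True, True), "rectangle"),   # a deliberately mislabeled example
    ((True, False, True), "rectangle"),
    ((True, True, False), "rhombus"),
]


def fit(examples):
    """Learn a feature-pattern -> label table by majority vote over the examples."""
    votes = defaultdict(Counter)
    for features, label in examples:
        votes[features][label] += 1
    return {features: counts.most_common(1)[0][0] for features, counts in votes.items()}


def predict(model, features):
    """Return the learned label for a feature pattern, or None if never seen."""
    return model.get(features)


model = fit(EXAMPLES)
print(predict(model, (True, True, True)))  # square
```

The learned table plays the same role as a hand-built feature matrix; the difference is that it is derived from labeled data, so errors or gaps in the training set carry over into the predictions.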

By viewing the explainable feature matrix as an algorithmic model that AI can use to identify shapes, learners mimic AI’s thinking by developing an explainable feature matrix—a visible ML algorithm for classifying and identifying 2D geometric shapes. This process mirrors supervised ML by requiring pattern detection, feature analysis, abstraction, evaluation, and debugging—core computational thinking skills (Grover & Pea, 2013). In short, students experience ML algorithms firsthand, shifting their perspective to understand how AI differentiates shapes through feature recognition.

By grounding complex AI concepts in familiar mathematical content, our methodology provides a pathway for developing AI literacy for novice learners without the geographical barriers that often prevent implementation due to a lack of access to PD. Table 2 shows how AI literacy domains discussed in Ng et al. (2021) were addressed in the study.

Table 2

AI Literacy Addressed in the Study Design

| AI literacy domain | Definitions based on previous approaches (Ng et al., 2021) | Current study |

| Know & understand | Know the basic AI function and how to use AI-driven tools | Understand how AI algorithm functions by identifying patterns and features in hands-on activity |

| Use & apply | Use and apply AI in different contexts with an understanding of AI concepts | Apply concepts of AI in the learning concept of geometric shapes |

| Evaluate & create | Evaluate, appraise, create, and build AI | Create a visible feature matrix to identify features/patterns for categorizing geometric shapes, and evaluate the algorithm |

| Ethics | Considerations regarding ethics, including fairness, social impact, privacy, etc. | Through role-play, evaluate feature matrix algorithms to see how algorithms and selected features affect results |

The essence of the lesson is to create explainable feature matrices that make ML algorithms visible and accessible. Table 3 shows the lesson flow organized around the four core AI literacy domains (Ng et al., 2021) along with implementation strategies that can be delivered via conferencing software (i.e., Zoom). Each domain builds upon the previous one, progressing from basic AI comprehension to practical application, creative development, and, eventually, critical evaluation of algorithmic decision-making processes.

Table 3

Flow of a Lesson Integrating ML in Geometric Shapes Content Around AI Literacy Domains

| AI literacy domain | Lesson activity | Teacher facilitation strategies | Tools and materials | Sample image |

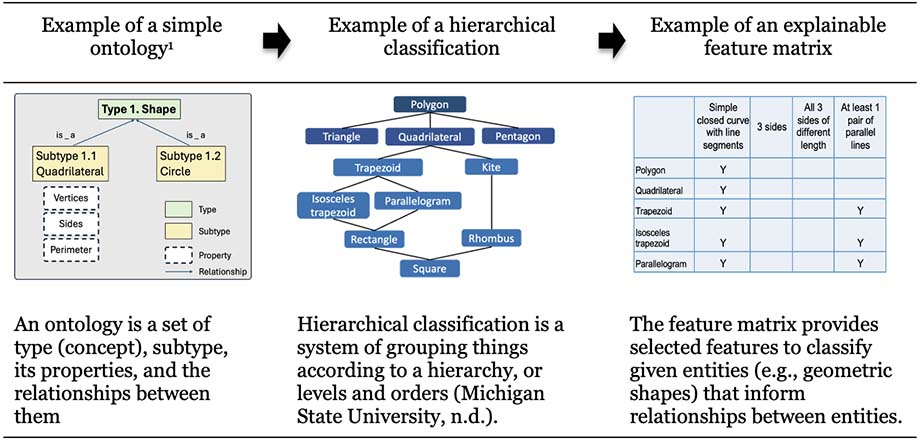

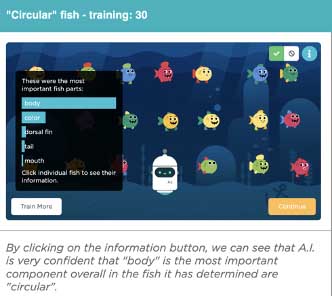

| Know & understand | AI training simulation: Code.org’s sorting game to understand how AI learns patterns and makes decisions | Teacher shares screen with Code.org; facilitates learners’ responses synchronously through sorting game | Code.org activity |  |

| | Feature identification: Introduction to ML concepts through fish/trash sorting, explaining AI feature-based classification | Teacher presents fish/trash features identified in sorting game; explains algorithmic logic | Slides showing diverse fish/trash shapes; videos showing data training | |

| Use & apply | Geometric shape analysis: Hands-on activity applying AI classification to geometric shapes, identifying features | Learners receive individual handout links; work on activity sheets while teacher guides feature identification | Google Slides with activity sheets (distributed via individual links; completed independently) |  |

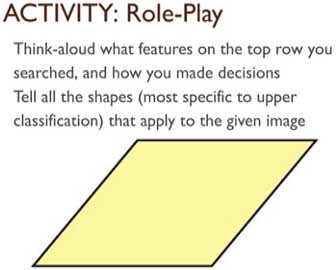

| Evaluate & create | Feature matrix creation: Development of explainable feature matrices for shape classification, creating visible ML algorithm | Teacher demonstrates feature classification with yes/no matrix; students develop feature matrix using specified shapes | Slides for classification; blank table template; sample matrix with 2-3 features |  |

| Ethics | Algorithm testing & evaluation: Role-playing activities where participants test algorithms and examine how feature selection affects outcomes, discussing algorithmic decision-making implications | Teacher presents new shapes; students identify shapes using feature matrices; class discusses feature effects and potential algorithmic biases | Whole-class activity with sequential shape slides; reflection prompts about algorithmic fairness |  |

Note. ML = machine learning. The first three sample images included in the Know & Understand and Use & Apply domain activities are from AI for Oceans, by Code.org, 2026 (https://studio.code.org/courses/oceans). Copyright 2026 by Code.org. Reprinted with permission.
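The Ethics activity in Table 3 asks participants to examine how feature selection affects outcomes. One way to picture this is the Python sketch below, which reports which shapes become indistinguishable when only some features are checked. The features, shapes, and function name are hypothetical and serve only to illustrate the idea.

```python
# Hypothetical feature values for three shapes (illustrative only).
SHAPES = {
    "square":    {"all_sides_equal": True,  "all_right_angles": True},
    "rhombus":   {"all_sides_equal": True,  "all_right_angles": False},
    "rectangle": {"all_sides_equal": False, "all_right_angles": True},
}


def ambiguous_groups(shapes, selected_features):
    """Return groups of labels that share one yes/no pattern on the selected features."""
    groups = {}
    for label, features in shapes.items():
        pattern = tuple(features[name] for name in selected_features)
        groups.setdefault(pattern, []).append(label)
    return [labels for labels in groups.values() if len(labels) > 1]


# With a single feature, the square and the rhombus cannot be told apart.
print(ambiguous_groups(SHAPES, ["all_sides_equal"]))  # [['square', 'rhombus']]
# With both features, every shape has a unique pattern.
print(ambiguous_groups(SHAPES, ["all_sides_equal", "all_right_angles"]))  # []
```

The same check can prompt discussion of bias: whichever features the designer leaves out determine which entities the algorithm treats as identical.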

Given the increasing demand for AI literacy education in K–12 and the unique advantages of online delivery for reaching diverse populations, we aimed to answer the following research questions by examining before- and after-intervention surveys, open-ended responses, and reflections.

RQ 1. To what extent did the online PD intervention impact participants’ AI-related self-efficacy, perceptions, and intentions to integrate AI in their teaching practice?

RQ 2. How did participants’ conceptualizations of AI in education evolve following the intervention, as revealed through pre-post word association analysis?

RQ 3. What themes emerged from participants’ reflections about their learning experiences with explainable feature matrices and online AI literacy PD?

Potential participants were invited via email and during online PD sessions hosted by a university located in a northeastern state in the United States. Information about the study was provided along with a consent form; access to a Qualtrics survey was granted based on agreement to participate (IRB# 2023-032). Participation was voluntary, and all data were anonymous. The final sample comprised eight in-service and two preservice teachers (nine females, one male).

Five participants identified as White, four Hispanic, and one as African American. Educational backgrounds included four with master’s degrees, four with bachelor’s degrees, and two preservice teachers. Eight teachers taught at public schools (six elementary, one middle, one secondary). Two worked as a paraprofessional and substitute teacher, respectively. Experience levels varied (three novice teachers, two with less than 5 years teaching, five with more than 10 years teaching). All participants completed the entire online PD.

Our qualitative design allowed in-depth examination of participants’ learning experiences, supplemented by descriptive statistics gleaned from surveys. Data included a survey, open-ended responses, and reflections.

An online survey included items related to demographic information, familiarity with AI, and perceptions related to AI use in education (Appendix A). Three items targeted frequency of using AI tools, duration of use, and familiarity with various AI tools.

Self-efficacy in using AI and integrating AI in teaching was surveyed using seven items adapted from Kiili et al. (2016). Three items involved teachers’ self-efficacy toward AI use (Cronbach’s α = .86); four items involved self-efficacy toward integrating AI in teaching (Cronbach’s α = .93).

Participants’ perceived ease of using AI and perceived value of AI-integrated tasks were also measured. The six items related to perceived ease of AI use were adapted from Davis (1989, p. 340; Cronbach’s α = .94), who developed scales predicting intention and actual use based on perceived ease of use. We substituted AI for Chart-Master and edited phrases for novice learners based on Doll et al. (1998, p. 846). Reliability showed great consistency (Cronbach’s α = .93).

The three items related to perceived value of AI-integrated tasks were adapted from Liu et al. (2022, p. 5; Cronbach’s α = .88); we changed wording from “value of AI systems” to “value of integrating AI concepts in content areas” (Cronbach’s α = .83). Three items about participants’ behavioral intention to use AI in teaching were also from Liu et al. (2022; Cronbach’s α = .91). Finally, understanding of AI explainability was measured using three items developed by Shin (2021, p. 9; Cronbach’s α = .769) with acceptable reliability (Cronbach’s α = .74).
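For readers unfamiliar with the reliability coefficient reported above, the following Python sketch computes Cronbach’s α from item-level responses using the standard formula, α = k/(k − 1) × (1 − Σ item variances / variance of total scores). The response data are made up for illustration and are not the study's data; the function name is an assumption.

```python
from statistics import variance


def cronbach_alpha(responses):
    """Compute Cronbach's alpha.

    responses: list of per-participant lists, one score per item.
    Uses sample variances; total scores must not all be identical.
    """
    k = len(responses[0])  # number of items in the scale
    item_variances = [variance([r[i] for r in responses]) for i in range(k)]
    total_variance = variance([sum(r) for r in responses])
    return (k / (k - 1)) * (1 - sum(item_variances) / total_variance)


# Hypothetical 5-point Likert responses: 5 participants x 3 items.
hypothetical_responses = [
    [4, 4, 5],
    [3, 3, 3],
    [2, 3, 2],
    [5, 4, 5],
    [3, 2, 3],
]
print(round(cronbach_alpha(hypothetical_responses), 2))
```

Values closer to 1 indicate that the items vary together, which is why coefficients such as .93 are read as high internal consistency.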

Measures used before and after the PD session included random words and explanations of AI recommendation systems. For recording random words, a 30-second time limit was set to catch respondents’ initial perceptions about AI use in education. A second question asked how social media advertising or product recommendation algorithms work. Finally, five reflection questions asked participants about (a) what they learned regarding AI, (b) what they learned regarding geometry, (c) lesson features affecting their learning, (d) how the PD differed from other geometry lessons, and (e) recommendations for changes. These post-reflection questions were analyzed using thematic analysis (Clarke & Braun, 2017).

Descriptive statistics reported the mean, median, and standard deviation of the quantitative variables. Given the limited sample size, we analyzed descriptive values to detect pattern differences before and after the PD using visual representations.

For the qualitative data analysis of the open-ended responses and reflection questions, we used thematic analysis, adapting the steps outlined by Braun and Clarke (2006). First, we familiarized ourselves with the data through multiple readings to identify relevant statements and then independently identified keywords within statements, coding them as larger categories. Then we developed themes from these categories.

For random words collected pre- and post-intervention, we conducted a qualitative sentiment analysis to examine changes in participants’ emotional expressions about AI use in education before and after the PD. Two researchers independently coded each random word response into four categories: positive emotions, negative emotions, factual information, or no response. This manual coding approach was selected because it allows for nuanced interpretation of brief, context-specific responses and is appropriate for smaller data sets (Feldman & Ungar, 2012; Zhou & Ye, 2023). The coding for sentiment categories met 100% agreement between the two researchers. The random words also underwent thematic analysis (Braun & Clarke, 2006) to identify themes based on the lexical meaning. Once themes were finalized, we reviewed the statements to confirm appropriate theme assignment. The process concluded when we reached 100% agreement on codes and themes.
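The agreement figure described above is simple percent agreement between two coders. A minimal Python sketch of that calculation follows; the category labels mirror the four categories named in the text, while the coded responses and the function name are hypothetical.

```python
# The four sentiment categories named in the text.
CATEGORIES = {"positive", "negative", "factual", "no_response"}


def percent_agreement(coder_a, coder_b):
    """Percentage of responses that two coders assigned to the same category."""
    if len(coder_a) != len(coder_b):
        raise ValueError("Both coders must code the same number of responses.")
    if not set(coder_a) | set(coder_b) <= CATEGORIES:
        raise ValueError("Unknown category label.")
    matches = sum(a == b for a, b in zip(coder_a, coder_b))
    return 100.0 * matches / len(coder_a)


# Hypothetical codes for four responses (not the study's data).
coder_a = ["positive", "negative", "factual", "no_response"]
coder_b = ["positive", "negative", "factual", "no_response"]
print(percent_agreement(coder_a, coder_b))  # 100.0
```

Percent agreement does not correct for chance agreement; with larger data sets, a chance-corrected statistic is often reported alongside it.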

Participants rated their AI tool use and familiarity using a 5-point Likert scale (5 = daily to 1 = not at all). Four teachers (three in-service, one preservice) reported no AI tool use. Three used AI less than once monthly, two indicated monthly use (less than biweekly), and one reported weekly use. No participants reported daily use.

With 5.0 representing highest familiarity, the mean familiarity with AI tools fell below moderate (N = 10, M = 2.10, SD = 0.60). For everyday AI tools such as chatbots, product recommendations, Google Maps, and smart devices, familiarity was slightly higher (N = 10, M = 2.38, SD = 0.79). However, familiarity with educational AI tools (intelligent tutoring, personalized learning platforms, automated grading, learning aids for students with special needs) was lower (N = 10, M = 1.75, SD = 0.47), falling between not familiar and slightly familiar. The findings confirm that the PD was provided to participants who were novices regarding educational AI, ML algorithms, and ML classroom integration.

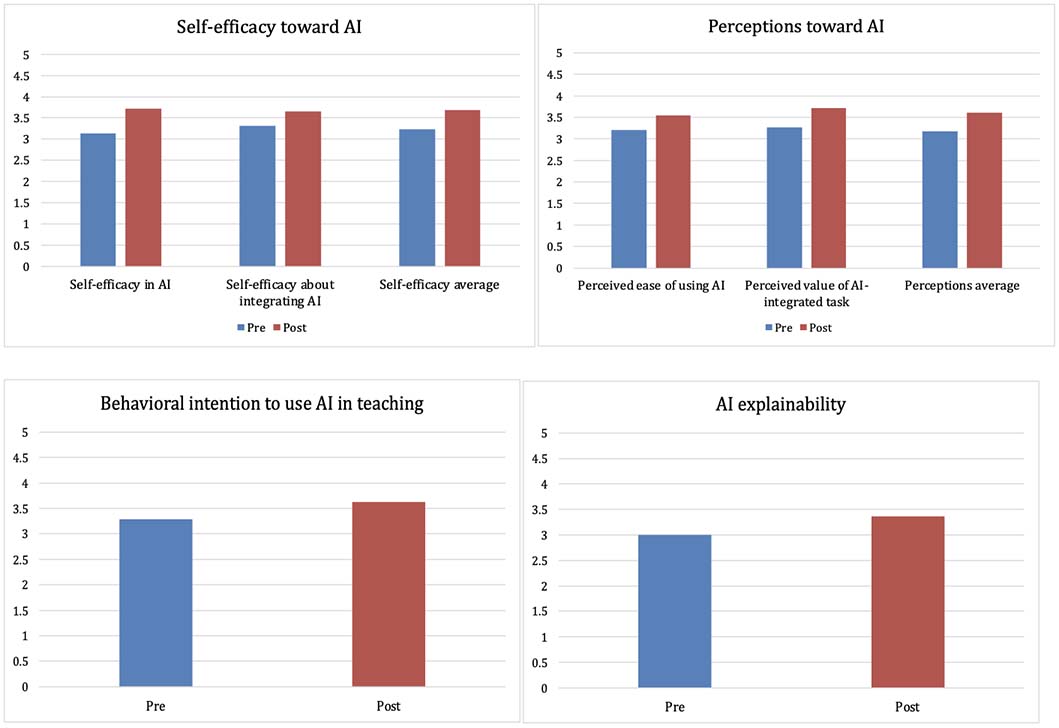

The descriptive analysis of construct averages showed positive changes across all measured constructs related to AI integration in education (see appendices B and C). Self-efficacy improvements were substantial across both domains. Participants’ general self-efficacy toward AI use increased from below-moderate (M = 3.13, SD = 1.037) to above-moderate levels (M = 3.71, SD = 0.603). Similarly, self-efficacy specifically related to integrating AI in teaching showed meaningful gains, rising from M = 3.31 (SD = 0.594) to M = 3.66 (SD = 0.597). The overall self-efficacy composite score increased from M = 3.23 to M = 3.68, indicating that participants moved from uncertain to confident in their AI-related capabilities.

Perceived ease of using AI increased from M = 3.21 (SD = 0.756) to M = 3.54 (SD = 0.533); perceived value of AI-integrated tasks changed from M = 3.26 (SD = 1.006) to M = 3.71 (SD = 0.416), showing a 13.8% improvement. Overall, perceptions increased from M = 3.17 to M = 3.60, suggesting participants developed more positive attitudes toward AI integration following the intervention.
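The 13.8% figure for perceived value is the relative change in the mean, which can be checked from the reported values:

$$
\frac{3.71 - 3.26}{3.26} \times 100\% = \frac{0.45}{3.26} \times 100\% \approx 13.8\%
$$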

Both behavioral intentions and understanding of AI explainability increased. Participants’ intention to use AI in teaching increased from M = 3.29 (SD = 0.722) to M = 3.63 (SD = 0.702), indicating greater commitment to implementation. Additionally, participants’ understanding of AI explainability increased from M = 3.00 (SD = 0.504) to M = 3.37 (SD = 0.806), the median rising from 3.00 to 3.50. The descriptive statistics showed consistent upward trends in all measures.

Qualitative analysis of the random words that participants recorded within the 30-second limit to describe AI use in education revealed profound shifts across multiple dimensions (Table 4). The sentiment analysis showed that participants tended to describe AI with a mixture of curiosity and apprehension before the PD, using words such as intriguing and futuristic. The pre-PD terms also reflected uncertainty and concern. Following the PD, responses shifted noticeably toward more positive and enthusiastic descriptions, including words such as fascinating, creative, and fun. The negative words that appeared before the PD, such as scary, complex, and overwhelming, no longer appeared after the PD.

A particularly notable change involved participants’ understanding of AI applications. Before the PD, none of the responses referenced AI in terms of categorization or classification. However, after the PD, five participants used application-focused terminology, including categorize, classify, and sorting.

Creative and enjoyable aspects of AI emerged as new themes post-intervention. Words related to creativity (e.g., creative, creating) appeared in the responses of four participants; fun associations also appeared in the responses of four participants.

Among the seven participants with matched pre-post responses, specific positive changes included: two participants shifting from negative to positive sentiment, one participant’s negative word (cheating) becoming moderate (interesting), and one participant changing from factual information (technology) to positive reaction (creating).

The factual information category also demonstrated qualitative improvement. Initial responses were limited to basic technological terms, whereas after the PD responses reflected a broader and varied understanding of AI concepts and processes. This shift suggests the online PD successfully moved participants from an apprehensive or superficial understanding toward informed, application-oriented perspectives on AI in education.

Table 4

Qualitative Analysis of Pre and Post Random Words Used to Describe AI

| Sentiment analysis | Before PD, n (%) | Pre words | After PD, n (%) | Post words |

| Positive emotions | 2 (20) | intelligent, intriguing, futuristic | 5 (50) | intelligent, fascinating, creative, fun, simple, smart, attractive, curious |

| Negative emotions | 3 (30) | scary, complex, overwhelming, cheating | 0 (0) | |

| Factual information | 3 (30) | data, computer, robot, analyze, code, testing, model | 4 (40) | robots, recognize, associate, categorize, hierarchy, procedure, order, data, model, classify, programming |

| No response | 2 (20) | | 1 (10) | |

| Themes related to application | Before PD, n (%) | Pre words | After PD, n (%) | Post words |

| Categorization/Classification | 0 (0) | | 5 (50) | recognize, associate, hierarchy, procedure, order, classify, categorize |

| Themes related to creative/positivism | Before PD, n (%) | Pre words | After PD, n (%) | Post words |

| Create variations | 0 (0) | | 4 (40) | creative |

| Fun references | 0 (0) | | 4 (40) | fun |

Note. One participant’s response included multiple words.

Thematic analysis revealed enhanced understanding of recommendation algorithms after the PD (Table 5). While most participants initially focused solely on similarity-based matching (70%), post-intervention responses showed greater sophistication. Similarity-based explanations decreased to 40%, and a new theme emerged, with 30% of participants recognizing that AI systems use “more information from data collected,” including collaborative filtering and multiple data sources. Thus, post-intervention explanations were more detailed, suggesting the explainable feature matrix activities improved participants’ comprehension of algorithmic decision-making processes.

Table 5

Level of Understanding of AI-Based Recommendation Systems

| Theme | Pre n | Post n | Exemplary Responses |

| Similarity based on history | 7 | 4 | Pre; Post |

| More information from data collected | 0 | 3 | Post only |

Five reflection questions underwent thematic analysis, leading to the themes identified in Table 6. Responses could include one or more themes. We agreed to report themes earning three counts or more; however, themes that earned fewer than three counts are also discussed due to their richness and importance related to the PD session.

Table 6

Themes From Reflection Questions and Selected Codes Used to Derive Themes

| Question | Theme | n | Exemplary Responses |

| What did you learn from this lesson about AI? (n = 10) | Made AI explainable & learnable | 7 | |

| | Effective classroom use | 5 | |

| | Classification/categorization | 5 | |

| What did you learn from this lesson about geometry? (n = 8) | Classification and more specific categorization | 6 | |

| | Relationship between shapes | 5 | |

| What activities and lesson features helped or hindered in this lesson? (n = 8) | Difficulties in content, technology, and time | 4 | |

| | Helpful classification activities | 3 | |

| In what ways was this lesson different from previously experienced geometry lessons? (n = 8) | Effective AI integration | 3 | |

| | Different approach | 3 | |

| What would you like to learn more about or what changes do you want to make to this lesson? (n = 7) | Running AI models | 3 | |

| | Other real-world examples of teaching AI | 3 | |

Three themes emerged about what participants learned about AI from the PD: (a) how explainable and learnable AI can be, (b) effective classroom usage, and (c) categorization/classification. Seven participants mentioned AI being “made explainable and learnable.” Keywords used by participants included simple(r) and logical. Half of the participants said they learned how AI can be effectively used in the classroom. For instance, one mentioned using and teaching AI as well as showing students how AI works through everyday examples. Four participants used the words categorize or classification while one participant said AI is a great way to teach “ordering of shapes through similarities and differences.” One participant mentioned AI being interactive, saying, “AI can be creative. It is interactive.”

Regarding geometry learning, two themes emerged: (a) classification and more specific categorization, and (b) relationship between shapes. Six participants mentioned learning classification and more specific categorization. Five reported being better able to see and understand the connections between shapes. One participant noted that the geometry lesson could be an “easy tool to teach ML.”

For features that helped or hindered learning, two themes emerged: (a) difficulties in content, technology, and time; and (b) helpful classification activities. One participant mentioned content difficulty, another reported technology challenges, and two felt rushed by time constraints. Three participants stated that the PD helped them understand ML and AI.

Two themes emerged regarding differences from traditional geometry lessons: (a) effective AI integration and (b) a different approach. Three participants mentioned “effective AI integration.” For example, one participant said it was an “interesting combination between geometry and AI.” Participants mentioned that the PD used different approaches by applying familiar concepts to learn something new and showing different ways to teach geometry.

Finally, regarding suggested PD changes, two themes emerged: (a) running AI models and (b) other real-world examples. Participants wanted to run their created models as an iterative process and see executed models with debugging stages. They also requested more real-world examples and activities for integrating AI.

The lesson design appears beneficial and promising for online teacher education programs and teacher PD aimed at enhancing learning for K–12 students. Specifically, after the online PD, mean scores increased for participants’ self-efficacy with AI, self-efficacy about integrating AI in their teaching, perceived ease of using AI, and perceived value of AI-integrated tasks. Although statistical significance could not be determined because of the small sample size, the improvement in mean scores from the pre- to post-PD surveys was supported by the results of the qualitative analysis.

First, sentiment analysis of the pre and post random words showed dramatic changes from negative to positive views. The words categorizing, creative, and fun appeared prominently among the post words. These positive shifts suggest that online PD can effectively transform educators’ perceptions and build confidence in AI integration. Participants’ reflections confirmed their positive perspective changes about AI in education.
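As a rough illustration of how a share of participants using negative descriptors might be computed, the sketch below flags a participant when at least one of their words appears in a negative-word lexicon. The lexicon, the function name `share_with_negative_word`, and the sample responses are all hypothetical; they are not the study's word list, procedure, or data.

```python
from typing import Iterable, List, Set

# Hypothetical lexicon for illustration only; NOT the word list used in the study.
NEGATIVE_WORDS: Set[str] = {"scary", "confusing", "cheating"}


def share_with_negative_word(
    responses: Iterable[List[str]], lexicon: Set[str] = NEGATIVE_WORDS
) -> float:
    """Return the fraction of participants who listed at least one lexicon word."""
    responses = list(responses)
    if not responses:
        return 0.0
    flagged = sum(
        any(word.lower() in lexicon for word in words) for words in responses
    )
    return flagged / len(responses)


# Made-up responses (NOT study data): one list of words per participant.
pre_words = [["scary", "new"], ["helpful"], ["Confusing"], ["fast"]]
post_words = [["fun"], ["creative"], ["categorizing"], ["helpful"]]

pre_share = share_with_negative_word(pre_words)
post_share = share_with_negative_word(post_words)
```

With these made-up inputs, two of the four pre responses are flagged and none of the post responses are. A lexicon match is only one simple way to label sentiment, and the result depends entirely on which words the lexicon contains.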

The online delivery model proved particularly effective in creating an interactive learning environment where participants could engage with AI concepts through accessible, hands-on activities while maintaining the flexibility and scalability essential for distance learning contexts. The results also showed that the PD addressed AI, its applications and use in education, and increased interest in AI. Specifically, participants mentioned that AI became “learnable” through creating and interacting with the explainable feature matrix, which mimics algorithmic decision-making. As one participant stated, the PD showed “effective ways of integrating ML with the geometric concept” and an “easy way to teach machine learning.”

Improved understanding and integration intention were associated with elevated interest. Participants requested more classroom-feasible examples, online tools, such as the website Code.org (https://code.org), and ideas for implementing their created matrix in real-world settings. This qualitative finding aligned with survey responses showing mean value changes from before to after PD.

Our ontological approach differs from existing unplugged AI resources and PD programs in its transferability and adaptability across disciplines. Rather than fixed content, we provide a flexible framework centered on three core processes: identifying features or characteristics, categorizing objects, and creating a feature matrix. These processes are applicable to any subject area, such as science (biological specimens), language arts (literary genres), social studies (historical events), or other domains. Further, the difficulty level is inherently adjustable: novices work with concrete features (color, size) and simple classifications, while advanced learners identify abstract features (significance, style) and create complex categorizations. This scalability addresses critical ODL challenges (Amin et al., 2025) related to educators having varying resources and prior knowledge. By making ML concepts tangible through familiar content with adjustable complexity, the approach enables equitable AI literacy development without requiring sophisticated technology, which is essential for diverse online learning environments.
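The three processes (identifying features, categorizing objects, and building a feature matrix) can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the matrix participants built in the PD: the feature names, the quadrilateral categories, and the `classify` helper are hypothetical choices made for the example.

```python
from typing import Dict, List, Tuple

# Hypothetical feature columns; the PD's actual matrix is not reproduced here.
FEATURES: List[str] = [
    "four_sides",
    "opposite_sides_parallel",
    "all_sides_equal",
    "all_right_angles",
]

# Each row marks the features a category requires (1 = required, 0 = not required).
FEATURE_MATRIX: Dict[str, List[int]] = {
    "quadrilateral": [1, 0, 0, 0],
    "parallelogram": [1, 1, 0, 0],
    "rhombus":       [1, 1, 1, 0],
    "rectangle":     [1, 1, 0, 1],
    "square":        [1, 1, 1, 1],
}


def classify(observed: Dict[str, bool]) -> Tuple[str, List[str]]:
    """Return the most specific matching category and the features that justify it."""
    best_label: str = "unclassified"
    best_required: List[str] = []
    for label, row in FEATURE_MATRIX.items():
        required = [name for name, flag in zip(FEATURES, row) if flag]
        matches = all(observed.get(name, False) for name in required)
        # Prefer the category that demands the most features (the most specific one).
        if matches and len(required) > len(best_required):
            best_label, best_required = label, required
    return best_label, best_required


label, reasons = classify({
    "four_sides": True,
    "opposite_sides_parallel": True,
    "all_sides_equal": True,
    "all_right_angles": False,
})
```

Here `label` is `"rhombus"` and `reasons` lists the three features that led to that decision, which is what makes the classification explainable. Swapping in features such as habitat or genre would carry the same structure to another subject area.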

The study also addresses a critical gap in the ODL and AI literacy research. While recent studies have examined online AI tools for teaching, learning, and teacher PD (Ding et al., 2024; Kohnke et al., 2025; Younis, 2024) or how AI technologies support online learning (Dogan et al., 2023), our study demonstrates how to effectively deliver conceptual AI literacy within online PD formats by embedding ML concepts within geometry instruction. The design preserves the real-time interaction and collaborative support identified as critical for effective ODL (Amin et al., 2025), while the geometry-based approach reduces dependence on advanced technological tools, making AI literacy feasible for educators with varying resources. In sum, the PD effectively delivered AI literacy online. The intervention helped participants realize effective ML integration in common subject areas through activities suitable for all K–12 settings.

This study demonstrates that online PD can effectively deliver AI literacy training while addressing key ODL challenges. By integrating ML concepts through geometry rather than requiring specialized technology, this approach reduced the persistent accessibility barriers in distance learning (Amin et al., 2025). Generating an explainable feature matrix provided insights into machine-readable formats (Tiddi & Schlobach, 2022) applicable to AI and opportunities for designing activities that make ML explainable and accessible in any classroom setting. The findings inform future instructional ideas that allow a low-floor, high-ceiling approach (Blake-West & Bers, 2023) in AI education.

However, the small sample size limits reliability and generalizability. A replication study with a larger sample could increase statistical power, leading to more robust conclusions. Further, time constraints affected the study, with some participants reporting feeling rushed. Future studies could engage a larger, more diverse population with fewer time constraints. Despite these limitations, the study yielded notable findings on AI use in education, providing future research directions that can be applied in diverse subject areas.

Abdennour, O., Kemouss, H., & Khaldi, M. (2025). Integrating AI education in non-technical disciplines: Bridging the gap between theory and practice. In M. Khaldi (Ed.), Ethics and AI integration into modern classrooms (pp. 117-146). IGI Global Scientific Publishing. https://www.doi.org/10.4018/979-8-3373-2262-9.ch005

Amin, M. R. M., Ismail, I., & Sivakumaran, V. M. (2025). Revolutionizing education with artificial intelligence (AI)? Challenges, and implications for open and distance learning (ODL). Social Sciences & Humanities Open, 11, Article 101308. https://doi.org/10.1016/j.ssaho.2025.101308

Aydin, M., & Yurdugül, H. (2024). Developing a curriculum framework of artificial intelligence teaching for gifted students. Kastamonu Eğitim Dergisi, 32(1), 14-37. https://doi.org/10.24106/kefdergi.1426429

Belle, V., & Papantonis, I. (2021). Principles and practice of explainable machine learning. Frontiers in Big Data, 4, Article 688969. https://doi.org/10.3389/fdata.2021.688969

Blake-West, J. C., & Bers, M. U. (2023). ScratchJr design in practice: Low floor, high ceiling. International Journal of Child-Computer Interaction, 37, Article 100601. https://doi.org/10.1016/j.ijcci.2023.100601

Borko, H., Jacobs, J., & Koellner, K. (2009). Contemporary approaches to teacher professional development. In H. Borko, J. Jacobs, & K. Koellner (Eds.), International encyclopedia of education (3rd ed., pp. 548-556). Elsevier. https://doi.org/10.1016/B978-0-08-044894-7.00654-0

Bragg, L. A., Walsh, C., & Heyeres, M. (2021). Successful design and delivery of online professional development for teachers: A systematic review of the literature. Computers & Education, 166, Article 104158. https://doi.org/10.1016/j.compedu.2021.104158

Braun, V., & Clarke, V. (2006). Using thematic analysis in psychology. Qualitative Research in Psychology, 3(2), 77-101. https://doi.org/10.1191/1478088706qp063oa

Casal-Otero, L., Catala, A., Fernández-Morante, C., Taboada, M., Cebreiro, B., & Barro, S. (2023). AI literacy in K–12: A systematic literature review. International Journal of STEM Education, 10(1), Article 29. https://doi.org/10.1186/s40594-023-00418-7

Clarke, V., & Braun, V. (2017). Thematic analysis. The Journal of Positive Psychology, 12(3), 297-298. https://doi.org/10.1080/17439760.2016.1262613

Code.org. (2026). AI for oceans. https://studio.code.org/courses/oceans

Davis, F. D. (1989). Perceived usefulness, perceived ease of use, and user acceptance of information technology. MIS Quarterly, 13(3), 319-340. https://www.jstor.org/stable/249008

Ding, A.-C. E., Shi, L., Yang, H., & Choi, I. (2024). Enhancing teacher AI literacy and integration through different types of cases in teacher professional development. Computers and Education Open, 6, Article 100178. https://doi.org/10.1016/j.caeo.2024.100178

Dogan, M. E., Goru Dogan, T., & Bozkurt, A. (2023). The use of artificial intelligence (AI) in online learning and distance education processes: A systematic review of empirical studies. Applied Sciences, 13(5), Article 3056. https://doi.org/10.3390/app13053056

Doll, W. J., Hendrickson, A., & Deng, X. (1998). Using Davis’s perceived usefulness and ease‐of‐use instruments for decision making: A confirmatory and multigroup invariance analysis. Decision Sciences, 29(4), 839-869. https://doi.org/10.1111/j.1540-5915.1998.tb00879.x

Feldman, R., & Ungar, L. (2012, June 4-7). Sentiment mining from user generated content. [Tutorial]. The Sixth International AAAI Conference on Weblogs and Social Media, Dublin, Ireland. http://www.cis.upenn.edu/~ungar/AAAI/AAAI13.pdf

Grimm, S. (2009). Knowledge representation and ontologies. In M. M. Gaber (Ed.), Scientific data mining and knowledge discovery: Principles and foundations (pp. 111-137). Springer. https://doi.org/10.1007/978-3-642-02788-8_6

Grover, S. (2024). Teaching AI to K–12 learners: Lessons, issues, and guidance. In B. Stephenson & J. A. Stone (Chairs), Proceedings of the 55th ACM Technical Symposium on Computer Science Education (Vol. 1, pp. 422-428). ACM. https://doi.org/10.1145/3626252.3630937

Grover, S., & Pea, R. (2013). Computational thinking in K–12: A review of the state of the field. Educational Researcher, 42(1), 38-43. https://doi.org/10.3102/0013189X12463051

Hwang, G.-J., & Tu, Y.-F. (2021). Roles and research trends of artificial intelligence in mathematics education: A bibliometric mapping analysis and systematic review. Mathematics, 9(6), Article 584. https://doi.org/10.3390/math9060584

Jordan, M. I., & Mitchell, T. M. (2015). Machine learning: Trends, perspectives, and prospects. Science, 349(6245), 255-260. https://doi.org/10.1126/science.aaa8415

Kiili, C., Kauppinen, M., Coiro, J., & Utriainen, J. (2016). Measuring and supporting pre-service teachers’ self-efficacy towards computers, teaching, and technology integration. Journal of Technology and Teacher Education, 24(4), 443-469. https://www.learntechlib.org/primary/p/152285/

Kohnke, L., Zou, D., Ou, A. W., & Gu, M. M. (2025). Preparing future educators for AI-enhanced classrooms: Insights into AI literacy and integration. Computers and Education: Artificial Intelligence, 8, Article 100398. https://doi.org/10.1016/j.caeai.2025.100398

Lee, D., & Yeo, S. (2022). Developing an AI-based chatbot for practicing responsive teaching in mathematics. Computers & Education, 191, Article 104646. https://doi.org/10.1016/j.compedu.2022.104646

Lee, I., Ali, S., Zhang, H., DiPaola, D., & Breazeal, C. (2021, March). Developing middle school students’ AI literacy. In M. Sherriff & L. D. Merkle (Chairs), Proceedings of the 52nd ACM Technical Symposium on Computer Science Education (pp. 191-197). ACM. https://doi.org/10.1145/3408877.3432513

Lee, J. A., Sagong, M. J., Jung, J., Kim, E. S., & Kim, H. S. (2023). Explainable machine learning for understanding and predicting geometry and defect types in Fe-Ni alloys fabricated by laser metal deposition additive manufacturing. Journal of Materials Research and Technology, 22, 413-423. https://doi.org/10.1016/j.jmrt.2022.11.137

Liu, C.-F., Chen, Z.-C., Kuo, S.-C., & Lin, T.-C. (2022). Does AI explainability affect physicians’ intention to use AI? International Journal of Medical Informatics, 168, Article 104884. https://doi.org/10.1016/j.ijmedinf.2022.104884

Martins, R. M., & Gresse Von Wangenheim, C. (2023). Findings on teaching machine learning in high school: A ten-year systematic literature review. Informatics in Education, 22(3), 421-440. https://www.ceeol.com/search/article-detail?id=1192082

Michigan State University. (n.d.). Hierarchical classification. College of Agriculture and Natural Resources. https://www.canr.msu.edu/resources/hierarchical-classification

Mills, K. A., Cope, J., Scholes, L., & Rowe, L. (2024). Coding and computational thinking across the curriculum: A review of educational outcomes. Review of Educational Research, 95(3), 581-618. https://doi.org/10.3102/00346543241241327

Ng, D. T. K., Leung, J. K. L., Chu, S. K. W., & Qiao, M. S. (2021). Conceptualizing AI literacy: An exploratory review. Computers and Education: Artificial Intelligence, 2, Article 100041. https://doi.org/10.1016/j.caeai.2021.100041

Ng, D. T. K., Leung, J. K. L., Su, J., Ng, R. C. W., & Chu, S. K. W. (2023). Teachers’ AI digital competencies and twenty-first century skills in the post-pandemic world. Educational Technology Research and Development, 71(1), 137-161. https://doi.org/10.1007/s11423-023-10203-6

Richard, P. R., Pilar Vélez, M., & Van Vaerenbergh, S. (Eds.). (2022). Mathematics education in the age of artificial intelligence. How artificial intelligence can serve mathematical human learning. Springer Nature.

Samek, W., & Müller, K.-R. (2019). Towards explainable artificial intelligence. In W. Samek, G. Montavon, A. Vedaldi, L. K. Hansen, & K.-R. Müller (Eds.), Explainable AI: Interpreting, explaining and visualizing deep learning (pp. 5-22). Springer. https://doi.org/10.1007/978-3-030-28954-6_1

Schulz, S., & Stenzhorn, H. (2007). Ten theses on clinical ontologies. In L. Bos & B. Blobel (Eds.), Medical and Care Compunetics 4 (pp. 268-275). IOS Press.

Shin, D. (2021). The effects of explainability and causability on perception, trust, and acceptance: Implications for explainable AI. International Journal of Human-Computer Studies, 146, Article 102551. https://doi.org/10.1016/j.ijhcs.2020.102551

Soori, M., Arezoo, B., & Dastres, R. (2023). Artificial intelligence, machine learning and deep learning in advanced robotics, a review. Cognitive Robotics, 3, 54-70. https://doi.org/10.1016/j.cogr.2023.04.001

Steinbauer, G., Kandlhofer, M., Chklovski, T., Heintz, F., & Koenig, S. (2021). A differentiated discussion about AI education K–12. KI-Künstliche Intelligenz, 35(2), 131-137. https://doi.org/10.1007/s13218-021-00724-8

Tan, Q., & Tang, X. (2025). Unveiling AI literacy in K-12 education: A systematic literature review of empirical research. Interactive Learning Environments, 33(9), 5347-5363. https://doi.org/10.1080/10494820.2025.2482586

Tiddi, I., & Schlobach, S. (2022). Knowledge graphs as tools for explainable machine learning: A survey. Artificial Intelligence, 302, Article 103627. https://doi.org/10.1016/j.artint.2021.103627

Timm, J. T. E., & Gannod, G. C. (2005, July). A model-driven approach for specifying semantic Web services. In C. K. Chang & L.-J. Zhang (Chairs), Proceedings 2005 IEEE International Conference on Web Services (pp. 313-320). IEEE. https://doi.org/10.1109/ICWS.2005.9

UNESCO. (2022). K–12 AI curricula: A mapping of government-endorsed AI curricula. https://doi.org/10.54675/ELYF6010

Vittorini, P., Menini, S., & Tonelli, S. (2021). An AI-based system for formative and summative assessment in data science courses. International Journal of Artificial Intelligence in Education, 31(2), 159-185. https://doi.org/10.1007/s40593-020-00230-2

Walter, Y. (2024). Embracing the future of artificial intelligence in the classroom: The relevance of AI literacy, prompt engineering, and critical thinking in modern education. International Journal of Educational Technology in Higher Education, 21(1), Article 15. https://doi.org/10.1186/s41239-024-00448-3

Wang, N., & Lester, J. (2023). K–12 education in the age of AI: A call to action for K–12 AI literacy. International Journal of Artificial Intelligence in Education, 33(2), 228-232. https://doi.org/10.1007/s40593-023-00358-x

Wang, T., & Cheng, E. C. K. (2021). An investigation of barriers to Hong Kong K–12 schools incorporating artificial intelligence in education. Computers and Education: Artificial Intelligence, 2, Article 100031. https://doi.org/10.1016/j.caeai.2021.100031

Younis, B. (2024). Effectiveness of a professional development program based on the instructional design framework for AI literacy in developing AI literacy skills among pre-service teachers. Journal of Digital Learning in Teacher Education, 40(3), 142-158. https://doi.org/10.1080/21532974.2024.2365663

Zhou, J., & Ye, J.-M. (2023). Sentiment analysis in education research: A review of journal publications. Interactive Learning Environments, 31(3), 1252-1264. https://doi.org/10.1080/10494820.2020.1826985

Table A1

Survey Constructs and Items

| Construct | Item | Source |

| Teachers’ self-efficacy in the use of AI | | Kiili et al. (2016), p. 11 |

| Teachers’ self-efficacy toward AI integration in their current or future teaching | | Kiili et al. (2016), p. 11 |

| Perceived ease of AI use | | Davis (1989), p. 340 |

| Perceived value of AI integrated task | | Liu et al. (2022), p. 5 |

| Teachers’ behavioral intention to integrate AI in teaching | | Liu et al. (2022), p. 5 |

| AI explainability | | Shin (2021), p. 9 |

Table B1

Descriptive Statistics for Each Study Construct

| Construct | M | Mdn | SD | SE |

| Self-efficacy | ||||

| Self-efficacy in AI pre | 3.13 | 3.33 | 1.037 | 0.367 |

| Self-efficacy in AI post | 3.71 | 3.83 | 0.603 | 0.213 |

| Self-efficacy about integrating AI pre | 3.31 | 3.00 | 0.594 | 0.210 |

| Self-efficacy about integrating AI post | 3.66 | 3.88 | 0.597 | 0.211 |

| Self-efficacy average pre | 3.23 | 3.21 | 0.666 | 0.235 |

| Self-efficacy average post | 3.68 | 3.79 | 0.555 | 0.196 |

| Perceptions | ||||

| Perceived ease of using AI pre | 3.21 | 3.33 | 0.756 | 0.267 |

| Perceived ease of using AI post | 3.54 | 3.50 | 0.533 | 0.188 |

| Perceived value of AI-integrated task pre | 3.26 | 3.33 | 1.006 | 0.356 |

| Perceived value of AI-integrated task post | 3.71 | 3.67 | 0.416 | 0.147 |

| Perceptions average pre | 3.17 | 3.16 | 0.724 | 0.256 |

| Perceptions average post | 3.60 | 3.55 | 0.486 | 0.172 |

| Behavioral Intention | ||||

| Behavioral intention to use AI in teaching pre | 3.29 | 3.17 | 0.722 | 0.255 |

| Behavioral intention to use AI in teaching post | 3.63 | 3.67 | 0.702 | 0.248 |

| Explainability of AI | ||||

| AI explainability pre | 3.00 | 3.00 | 0.504 | 0.178 |

| AI explainability post | 3.37 | 3.50 | 0.806 | 0.285 |

Note. Sample size was consistent across constructs. N = 8.
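The SE column is consistent with the usual standard error of the mean, SE = SD / √N, with N = 8. The short check below assumes that formula (the source does not state how SE was computed), recomputes each SE from the printed SD, and derives the pre-to-post mean change per construct. The values are copied from Table B1; the dictionary layout and function names are illustrative.

```python
import math

N = 8  # sample size given in the Table B1 note

# construct -> {"pre"/"post": (M, SD, SE)}, values copied from Table B1
TABLE_B1 = {
    "Self-efficacy in AI": {"pre": (3.13, 1.037, 0.367), "post": (3.71, 0.603, 0.213)},
    "Self-efficacy about integrating AI": {"pre": (3.31, 0.594, 0.210), "post": (3.66, 0.597, 0.211)},
    "Perceived ease of using AI": {"pre": (3.21, 0.756, 0.267), "post": (3.54, 0.533, 0.188)},
    "Perceived value of AI-integrated task": {"pre": (3.26, 1.006, 0.356), "post": (3.71, 0.416, 0.147)},
    "Behavioral intention to use AI in teaching": {"pre": (3.29, 0.722, 0.255), "post": (3.63, 0.702, 0.248)},
    "AI explainability": {"pre": (3.00, 0.504, 0.178), "post": (3.37, 0.806, 0.285)},
}


def standard_error(sd: float, n: int = N) -> float:
    """Standard error of the mean, assuming SE = SD / sqrt(n)."""
    return sd / math.sqrt(n)


def mean_gain(construct: str) -> float:
    """Post mean minus pre mean for one construct, rounded to two decimals."""
    pre_mean = TABLE_B1[construct]["pre"][0]
    post_mean = TABLE_B1[construct]["post"][0]
    return round(post_mean - pre_mean, 2)


for construct, rows in TABLE_B1.items():
    for time_point, (_, sd, reported_se) in rows.items():
        # Each recomputed SE should match the printed value to three decimals.
        assert round(standard_error(sd), 3) == reported_se, (construct, time_point)
    print(f"{construct}: mean change = {mean_gain(construct):+.2f}")
```

Under this assumption every printed SE is reproduced to three decimals. The mean changes are descriptive only; with N = 8 they say nothing about statistical significance.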

Note. In all four charts, the y-axis represents the average value of items for each construct.

Bringing Artificial Intelligence Literacy Into Online Education: Machine-Learning Integration Through Geometry in K–12 Teacher Professional Development by Woonhee Sung and Yasemin Gunpinar is licensed under a Creative Commons Attribution 4.0 International License.