Volume 27, Number 1

Pan Liu¹, Qiang Jiang¹*, Weiyan Xiong², and Wei Zhao¹

¹School of Information Science and Technology, Northeast Normal University, China; ²Department of International Education, Education University of Hong Kong, Hong Kong; *Corresponding Author

This study examined how task characteristics (TC) and individual characteristics (IC) affect cognitive load (CL) and how artificial intelligence generated content (AIGC) moderates these effects in online learning. Participants included 435 undergraduate students (200 males and 235 females) enrolled in an introductory educational technology course. A structural equation model, estimated in Mplus, was used to test the relationships of TC and IC with CL. Additional analyses explored the moderating role of AIGC in the relationship between TC and CL and in the relationship between IC and CL, as well as how these patterns differed by gender. Results revealed that TC positively affected CL, whereas IC was negatively associated with CL. Moreover, AIGC weakened the relationship between TC and CL but strengthened the relationship between IC and CL. The moderating role of AIGC differed by gender. Specifically, AIGC strengthened the connection between IC and CL among males but not females, and it weakened the relationship between TC and CL among females but not males. The implications and limitations are also discussed.

Keywords: task characteristics, individual characteristics, cognitive load, AIGC, structural equation modeling, online learning

Online learning, as a core driver of the digital transformation in education, has promoted educational equity and personalized development through technological empowerment (Rulinawaty et al., 2023). As a key component of open and distance learning (ODL), online learning environments have typically been characterized by learner autonomy, reduced teacher supervision, and asynchronous interactions. However, as online educational content has become increasingly complex and knowledge updates have accelerated without timely guidance from teachers, students in ODL have been confronted with escalating cognitive load (CL; Skulmowski & Xu, 2022). CL refers to the cumulative mental resources expended during information processing (Chen et al., 2023; Sweller, 1988). When these demands exceed an individual’s processing capacity, cognitive overload can occur, negatively affecting learning outcomes. Therefore, identifying the key factors affecting CL in online learning is crucial for enhancing students’ learning efficiency and reducing their psychological stress.

Existing research has extensively explored the factors influencing CL (e.g., Li, 2010; Paas & Van Merriënboer, 1994; Tremblay et al., 2023). The seminal CL structural model by Paas and Van Merriënboer (1994) identified task characteristics (TC) and individual characteristics (IC) as pivotal factors shaping CL. TC, such as task complexity and time pressure, are external factors that directly affect learners’ psychological burden during task completion, thereby increasing CL (Chen et al., 2023). IC encompasses psychological factors like self-efficacy and state meta-cognition, reflecting individuals’ psychological states and capabilities when facing learning tasks (Le et al., 2024; Orthey et al., 2019). Numerous studies have affirmed the significant influence of TC and IC on CL (Le et al., 2024; Li, 2010; Tremblay et al., 2023). However, research has predominantly focused on identifying factors related to CL in online learning, with limited exploration into the specific mechanisms through which TC and IC exert their influence.

Researchers have proposed moderating factors, including intelligent tools, that could influence the relationships of TC and IC with CL (Wu et al., 2024; Zhao et al., 2024). Artificial intelligence generated content (AIGC), an online learning tool, manages learning resources and enhances learning processes (Lo, 2023; Xiao et al., 2024). It can break down complex tasks and reduce task completion time in online learning, thereby mitigating the effects of task complexity and time pressure on CL (Zhai et al., 2024). From the perspective of triadic reciprocal determinism (TRD), AIGC has been found to enhance students’ cognition and self-efficacy (Urban et al., 2024), potentially moderating the effect of IC on CL. Although preliminary studies have explored the effects of AIGC on CL, most research has focused on the utility of these tools rather than on the mechanisms through which they shape CL (Zhao et al., 2024). Specifically, few studies have systematically investigated how AIGC influences the relationship between TC and CL and the relationship between IC and CL.

This study established a structural equation model (SEM) to explore how AIGC moderates the relationships between TC and CL, as well as between IC and CL, in online learning. By investigating the moderating role of AIGC on CL in online learning, this paper sought to extend cognitive load theory (CLT) and provide practical guidance for digital education. The specific research questions were as follows:

What is the relationship between each of TC and IC, and CL?

How does AIGC moderate the effect of each of TC and IC on CL in online learning?

Are there gender differences in how AIGC moderates the relationship between IC and CL, as well as between TC and CL, in online learning?

Cognitive load theory asserts that students’ cognitive resources are finite, and any learning or problem-solving activities consume these resources, leading to CL (Mayer, 2005; Sweller, 1988). CL refers to the overall mental effort placed on a person’s cognitive system while performing a particular task (Sweller, 1988), or the burden on an individual’s cognitive system within a defined time period (Cooper, 1990). A well-known CL structural model, established by Paas and Van Merriënboer (1994), distinguishes causal factors from assessment factors. Because this study focused on the factors influencing CL, we adopted the causal component, comprising TC and IC, to understand the meaning and structure of student CL.

Beyond the factors directly influencing CL, elements such as learning tools may moderate the relationships between these factors (i.e., TC and IC) and CL. AIGC, an intelligent learning tool, has been widely researched (Du & Lv, 2024). In education, scholars have found that AIGC can alter TC, such as task complexity, and improve students’ digital competence (Zhao et al., 2024). Furthermore, quasi-experimental studies have shown that AIGC can enhance university students’ programming self-efficacy and individual motivation (Yilmaz & Yilmaz, 2023). Building on these findings, the present study examined AIGC as a moderating variable to explore its effects on the relationships between TC and CL, as well as between IC and CL.

With the development of CLT, TC has been defined as attributes related to cognitive effort, including task complexity and time pressure (Paas et al., 2003; Sweller, 1998). Task complexity refers to the cognitive requirements or characteristics associated with the task that elevate the amount of information to be processed (Chen et al., 2023). Time pressure results from strict time limits imposed on task completion, leading individuals to feel stress and anxiety, thereby increasing CL and affecting the quality and speed of task execution (Seitz et al., 2023). We hypothesized that TC, comprising task complexity and time pressure, influences students’ CL.

This inference was supported by numerous empirical studies (Tremblay et al., 2023). For instance, Li (2010) conducted a simulated dual-task experiment to explore the main factors and pathways affecting CL and found that TC, including factors like task complexity and time pressure, had a significant positive effect on CL. Additionally, TC’s effect on CL has been demonstrated through the design of both simple and complex tasks to assess college students’ performance (Tremblay et al., 2023). Meanwhile, task-related factors, such as time pressure, significantly affected CL (Li, 2010). Based on this, we proposed our first hypothesis.

Hypothesis 1: Task characteristics have a significant positive influence on cognitive load.

IC typically refers to the unique properties or attributes that distinguish one person from another. In CLT, Paas and Van Merriënboer (1994) categorized IC into relatively stable traits (e.g., prior knowledge and abilities) and relatively unstable traits (e.g., self-efficacy and state meta-cognition). Given that this study focused on tasks, we chose to investigate IC associated with TC, specifically self-efficacy and state meta-cognition.

Numerous studies have examined the relationship between IC and CL, focusing on self-efficacy and meta-cognitive states. For instance, Feldon et al. (2023) targeted undergraduate students and examined the relationship between CL and self-efficacy through timely and longitudinal measures, finding a correlation between the two. Redifer et al. (2021) discovered that high creative self-efficacy was associated with low CL in creative thinking tasks. Existing research has consistently demonstrated a negative correlation between students’ self-efficacy and CL, such that students with higher self-efficacy report lower CL than those with lower self-efficacy (Jiang, 2023). Similarly, research has indicated a correlation between meta-cognitive states and CL, with lower CL observed under conditions of higher meta-cognitive states (Bürgler et al., 2024). Given the literature above, we proposed a second hypothesis.

Hypothesis 2: Individual characteristics have a significant negative influence on cognitive load.

AIGC, a leading intelligent technology and online learning tool, has been widely touted and researched, particularly for its potential in teaching, personalized feedback, digital transformation, and learning assessment (Lo, 2023; Zhao et al., 2024). For instance, through semi-structured interviews and observations, Zhao et al. (2024) found that ChatGPT, a powerful AIGC platform, influenced students’ writing tasks in higher education and facilitated the digitization of student writing. AIGC can also be employed for teaching practices and personalized tutoring (Bai et al., 2025). In sum, AIGC may serve as a moderating variable that optimizes students’ cognitive resources, assists with online learning tasks, and influences students’ learning outcomes.

Previous studies have suggested that AIGC can modulate CL by decomposing complex tasks, reducing cognitive intensity, and offering timely assistance and feedback (Zhai et al., 2024). From the perspective of CLT, students’ cognitive resources are limited, and when the cognitive resources demanded by learning tasks exceed capacity, learners experience cognitive overload. AIGC can break down complex learning tasks, reduce the cognitive resources needed, and help allocate resources more effectively (Shah & Soosai Raj, 2024). Research in human-computer interaction has emphasized cognitive offloading, in which external tools reduce CL and free mental resources for other tasks (Nückles et al., 2020).

These studies showed that AIGC can serve as an intelligent tool to break down complex tasks and reduce task completion time, alleviating the effect of TC (e.g., task complexity and time pressure) on CL in online learning. The following is our third hypothesis.

Hypothesis 3: AIGC negatively moderates the relationship between TC and CL in online learning.

TRD posits that human behavior, cognition, and the environment mutually influence one another (Bandura, 1983). Within this framework, AIGC can be seen as an environmental factor in online learning that influences behavior and cognition through user interaction. TRD also holds that the formation of self-concept is influenced by the external environment. By providing accurate information and suggestions, AIGC may enhance individuals’ confidence in specific tasks.

When faced with complex tasks and distractions, AIGC acts as a supportive tool in online learning, boosting individuals’ confidence and enabling meta-cognitive activities such as planning, monitoring, and self-checking, thereby strengthening the effect of IC (i.e., self-efficacy and meta-cognitive states) on CL (Noy & Zhang, 2023; Urban et al., 2024). Therefore, AIGC may moderate the relationship between IC and CL. Based on these findings, we proposed a fourth hypothesis.

Hypothesis 4: AIGC positively moderates (strengthens) the relationship between IC and CL in online learning.

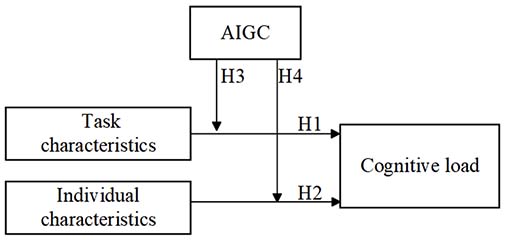

The proposed conceptual model of this paper is illustrated in Figure 1.

Figure 1

The Hypothesized Model

This research was carried out in a modern educational technology course at a university in northeast China. The online course focused on the design and development of digital resources. Weekly two-hour sessions were organized, with an online teaching assistant providing support to students on their assignments. After completing the course, students were asked to fill out a questionnaire consisting of 17 questions covering four aspects: CL, TC, IC, and AIGC.

A total of 480 questionnaires were distributed, and all were returned (100% response rate). Invalid questionnaires, including those completed very quickly (< 30 seconds) or with extreme responses (all strongly agree or all strongly disagree), were excluded, resulting in 435 valid questionnaires (91% validity rate). Participants ranged in age from 18 to 22 years, with a mean age of 19.82 years (SD = 0.95). Among the valid responses, there were 200 male participants (46% of the total) and 235 female participants (54% of the total). All participants volunteered for the survey and were informed that their data would be used solely for research purposes. The study followed the ethical standards established by the Science and Technology Ethics Committee of Northeast Normal University.
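The screening rule above can be expressed as a short filter. The sketch below is a minimal illustration, not the authors’ procedure (which used SPSS and Excel); the `Response` record, its field names, and the assumption that all 17 answers are coded 1 to 5 are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Response:
    respondent_id: str      # hypothetical identifier
    seconds_taken: float    # total completion time in seconds
    answers: list[int]      # assumed: 17 answers, each coded 1..5


MIN_SECONDS = 30  # responses completed in under 30 seconds are treated as invalid


def is_valid(r: Response) -> bool:
    """Reject speeders and extreme straight-liners (all 5s or all 1s)."""
    too_fast = r.seconds_taken < MIN_SECONDS
    all_strongly_agree = all(a == 5 for a in r.answers)
    all_strongly_disagree = all(a == 1 for a in r.answers)
    return not (too_fast or all_strongly_agree or all_strongly_disagree)


def screen(responses: list[Response]) -> tuple[list[Response], float]:
    """Return the valid responses and the validity rate (valid / returned)."""
    valid = [r for r in responses if is_valid(r)]
    rate = len(valid) / len(responses) if responses else 0.0
    return valid, rate


# With the counts reported above, 435 valid out of 480 returned gives about 0.91.
print(round(435 / 480, 2))
```

Note that a respondent who answers "3" to every item passes this filter; the rule as described only excludes extreme uniform patterns.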

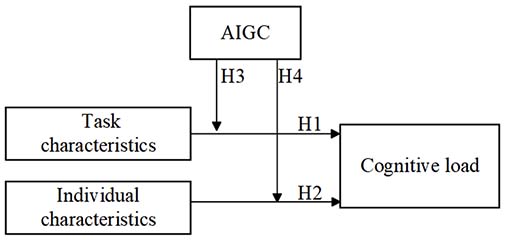

The AIGC tool used in this study was ERNIE Bot, a ChatGPT-like chatbot developed by Baidu and known for its deep understanding of Chinese culture (Rudolph et al., 2023). The experimental design process is illustrated in Figure 2.

Figure 2

Experimental Process

The experimental procedure was divided into three steps. First, students were introduced briefly to ERNIE Bot, including its generative technology, dialogue interaction functions, and methods of use. They were instructed on how to interact with ERNIE Bot; students posed questions and received responses from the bot. For instance, tasks assigned to students beyond the curriculum included understanding the developmental history of generative artificial intelligence and learning to interact effectively with ERNIE Bot.

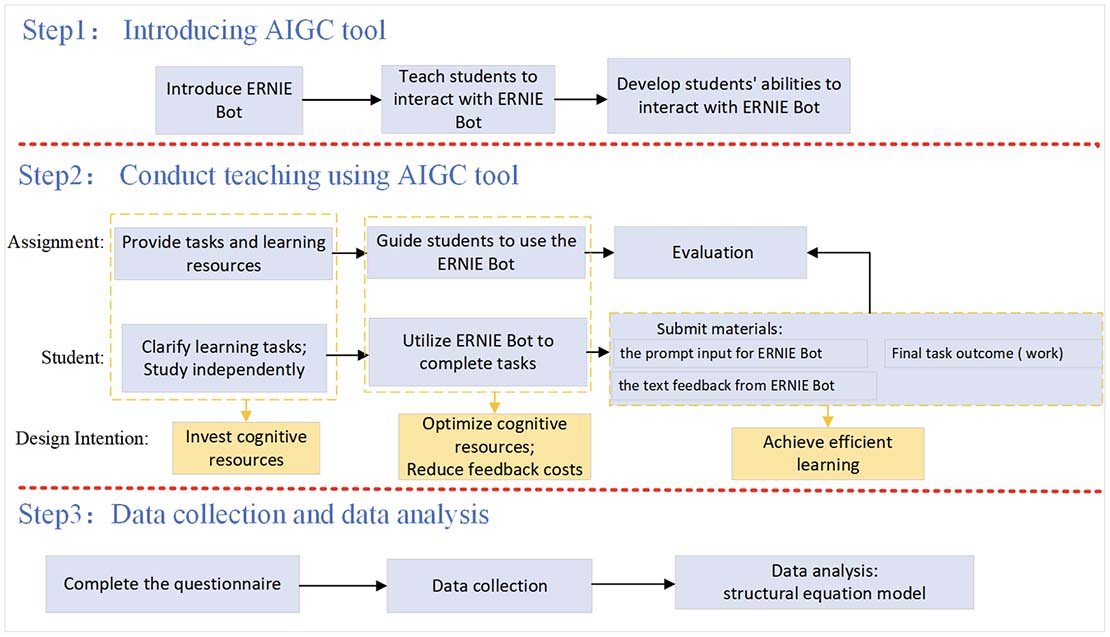

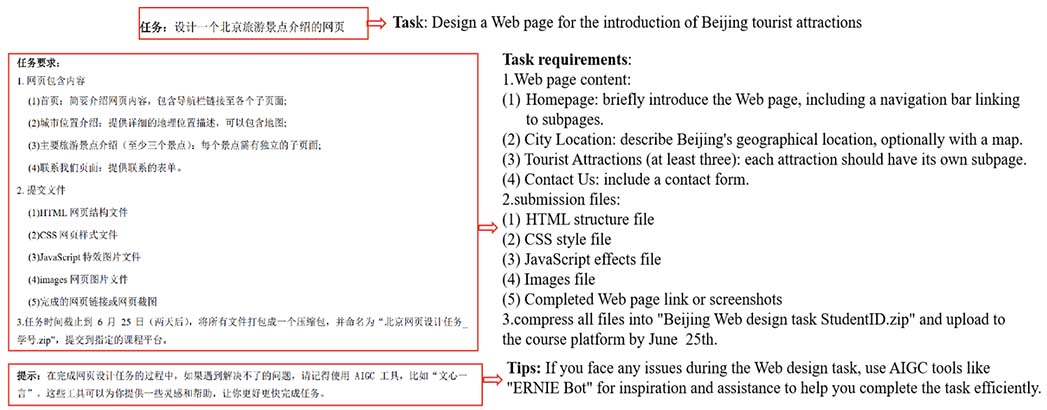

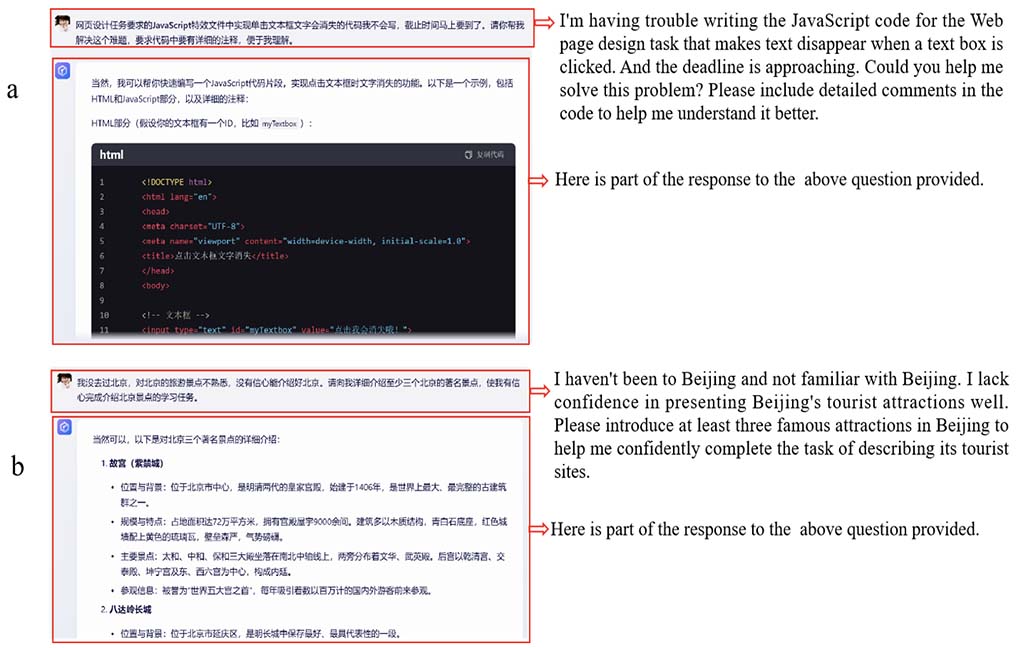

In the second step, the instructor provided learning assignments and resources, and students, guided by ERNIE Bot, carried out the online learning tasks. To illustrate this process, we used the example of designing a Web page to introduce Beijing, which served as the final learning task of the course. Initially, students received the task and related resources; see Figures 3 and 4.

Figure 3

Screenshot of Online Learning Tasks for Web Design

Figure 4

Screenshots of Students Interacting with ERNIE Bot in Online Environment

Figure 3 outlines the Web page design theme, requirements, submission files, and task tips. Subsequently, students used ERNIE Bot to complete the task, as depicted in Figure 4, which shows their interactions during TC- and IC-related activities (Figure 4a depicts a student’s interaction with ERNIE Bot regarding TC, and Figure 4b shows an interaction concerning IC). This phase spanned approximately one semester, during which multiple course sub-tasks were completed.

The third step of the experiment involved administering an online questionnaire after the course. The collected data were then organized using SPSS and Excel, and the associations among TC, IC, CL, and AIGC were analyzed using SEM in Mplus.

All items in the questionnaire were adapted from existing scales. To ensure the suitability of the content for our research, we invited two experts from the fields of psychology and education to review the comprehensibility of the questions and make necessary modifications. The revised questionnaire consisted of 17 items, encompassing the variables TC, IC, CL, and AIGC. Responses were recorded using a 5-point Likert scale, ranging from 1 (strongly disagree) to 5 (strongly agree), or in some cases, from 1 (very low) to 5 (very high).

CL was assessed using the National Aeronautics and Space Administration’s task load index (NASA-TLX), a widely recognized scale comprising six items: (a) mental demand, (b) physical demand, (c) temporal demand, (d) effort, (e) own performance, and (f) frustration level (Hart & Staveland, 1988). The scale has demonstrated good reliability, with Cronbach’s alpha coefficients ranging from 0.70 to 0.90. Given the nature of the course tasks and the learning processes of college students, this study adapted four items from the original scale to measure CL. Participants responded to questions such as:

How hard did you have to work (mentally and physically) to accomplish your level of performance?

How much physical activity was required?

How much time pressure did you feel because of the rate or pace of the tasks or task elements?

How successful do you think you were in accomplishing the goals of the task set by the experimenter (or yourself)?

Responses were scored on a scale ranging from 1 (very low) to 5 (very high).

This study assessed TC using the dimensions of task complexity and time pressure. Task complexity was measured using the subjective task complexity scale developed by Maynard and Hakel (1997), which has a high reliability coefficient (Cronbach’s α = 0.93). From the original 10-item scale, items focusing on subjective perceptions of task complexity were selected for this study. These included statements such as (a) I found this task to be complex, and (b) this task required a lot of thought and problem-solving. Time pressure was measured using a scale adapted from Chong et al. (2010), which showed satisfactory reliability (Cronbach’s α ranging from 0.73 to 0.88). The scale items were modified to align with the study’s context and included statements such as (a) the importance of completing this task on time, and (b) the lack of time buffer planned for this task.

State meta-cognition and self-efficacy are crucial dimensions for studying students’ IC (Li, 2010). State meta-cognition was assessed using a scale developed by O’Neil and Abedi (1996), whereas self-efficacy was measured using the general self-efficacy scale developed by Schwarzer and Jerusalem (1995), translated into Chinese by Wang et al. (2001; Cronbach’s α = 0.87). Both scales were adapted to align with the requirements of this study. Items for state meta-cognition included statements such as (a) I was aware of my thinking, and (b) I checked my work while I was doing it. Items for self-efficacy included statements such as (a) I can always manage to solve difficult problems if I try hard enough, and (b) it is easy for me to stick to my aims and accomplish my goals. All items were rated on a 5-point Likert scale ranging from 1 (strongly disagree) to 5 (strongly agree), where higher scores indicated higher levels of IC.

AIGC evaluation in this study adopted the technology acceptance model (TAM) framework (Davis, 1993), which assesses a technology along two dimensions: perceived usefulness and perceived ease of use. The original TAM scales demonstrated high reliability, with Cronbach’s alpha coefficients of 0.97 for perceived usefulness and 0.91 for perceived ease of use. To align with the experimental tools and research objectives, a questionnaire was developed to assess college students’ perceptions and use of AIGC. The questionnaire included five items adapted from TAM, such as "It is easy for me to use generative AI tools (like ERNIE Bot)." All items used a 5-point Likert scale, ranging from 1 (strongly disagree) to 5 (strongly agree), where higher scores reflected greater support for and satisfaction with AIGC.

In this study, data analysis was divided into three main parts. First, the raw data were organized using SPSS and Excel, including removing outliers and preparing the data for Mplus. Second, the reliability and validity of the questionnaire were analyzed using Mplus. This involved assessing construct reliability through item reliability (R²), Cronbach’s alpha, and composite reliability (CR). Convergent validity was evaluated using average variance extracted (AVE), and discriminant validity was examined using the Fornell-Larcker criterion. Descriptive statistics, such as means and standard deviations, were computed for each variable to assess their central tendency and variability.

Finally, an SEM was established to test the study’s hypotheses. The model examined the main effects of TC and IC on CL (hypotheses 1 and 2) and the moderating role of AIGC (hypotheses 3 and 4), and it also explored gender differences in the moderating effects of AIGC.

This study assessed reliability using internal consistency reliability and CR. Internal consistency reliability, measured by Cronbach’s alpha, is considered high when alpha exceeds 0.70, and a CR value exceeding 0.70 is likewise acceptable (Hair, 1998). Factor loadings greater than 0.71, corresponding to an item reliability (R²) of about 0.50, indicate high item quality (Hair et al., 2009).
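For readers who want to reproduce these indices outside Mplus, the sketch below uses the standard formulas based on standardized factor loadings λ for a construct with k items: CR = (Σλ)² / ((Σλ)² + Σ(1 - λ²)) and AVE = Σλ² / k, plus Cronbach’s alpha computed from raw item scores. It is an illustrative sketch that assumes a one-factor model per construct with uncorrelated errors; the function names are hypothetical. Applied to the CL loadings in Table 1 (0.86, 0.83, 0.72, 0.72), it gives CR ≈ 0.86 and AVE ≈ 0.62; the AVE of 0.61 in Table 1 equals the mean of the rounded R² values.

```python
from statistics import variance


def composite_reliability(loadings):
    """CR = (sum of loadings)^2 / ((sum of loadings)^2 + sum of error variances).

    Assumes standardized loadings, so each item's error variance is 1 - loading^2.
    """
    s = sum(loadings)
    error = sum(1 - l ** 2 for l in loadings)
    return s ** 2 / (s ** 2 + error)


def average_variance_extracted(loadings):
    """AVE = mean squared standardized loading (the mean item R^2)."""
    return sum(l ** 2 for l in loadings) / len(loadings)


def cronbach_alpha(items):
    """Cronbach's alpha; `items` is a list of k columns, each holding n respondents' scores."""
    k = len(items)
    totals = [sum(row) for row in zip(*items)]          # each respondent's sum score
    item_var = sum(variance(col) for col in items)      # sum of item variances
    return k / (k - 1) * (1 - item_var / variance(totals))


cl_loadings = [0.86, 0.83, 0.72, 0.72]  # CL1-CL4 from Table 1
print(round(composite_reliability(cl_loadings), 2))
print(round(average_variance_extracted(cl_loadings), 2))
```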

Validity was examined using both convergent and discriminant validity. Convergent validity, evaluated through the AVE, should ideally exceed 0.5 (Fornell & Larcker, 1981). Discriminant validity was tested using the Fornell-Larcker criterion, where the square root of the AVE for each construct should exceed its correlation with any other construct, demonstrating adequate distinctness.
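The Fornell-Larcker check can be scripted directly from the reported values. The sketch below takes the AVE values from Table 1 and the latent correlations from Table 2 as printed; it compares the square root of each construct’s AVE with the absolute value of every correlation involving that construct, so the sign of an entry does not affect the outcome. Variable and function names are illustrative.

```python
import math

# AVE per construct (Table 1)
ave = {"CL": 0.61, "TC": 0.58, "IC": 0.64, "AIGC": 0.63}

# Latent correlations, lower triangle of Table 2
corr = {
    ("TC", "CL"): 0.44,
    ("IC", "CL"): -0.32, ("IC", "TC"): -0.46,
    ("AIGC", "CL"): -0.17, ("AIGC", "TC"): 0.17, ("AIGC", "IC"): 0.45,
}


def fornell_larcker(ave, corr):
    """Return {construct: passes}: sqrt(AVE) must exceed |r| with every other construct."""
    result = {}
    for construct, value in ave.items():
        root = math.sqrt(value)
        others = [abs(r) for pair, r in corr.items() if construct in pair]
        result[construct] = all(root > r for r in others)
    return result


print(fornell_larcker(ave, corr))
```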

Table 1

Reliability and Convergent Validity

| Construct | Item | Factor loading | Item reliability (R²) | Composite reliability (CR) | Cronbach’s alpha | Average variance extracted (AVE) |
|---|---|---|---|---|---|---|
| CL | CL1 | 0.86 | 0.74 | 0.86 | 0.82 | 0.61 |
| | CL2 | 0.83 | 0.68 | | | |
| | CL3 | 0.72 | 0.51 | | | |
| | CL4 | 0.72 | 0.52 | | | |
| TC | TC1 | 0.76 | 0.58 | 0.84 | 0.84 | 0.58 |
| | TC2 | 0.81 | 0.65 | | | |
| | TC3 | 0.79 | 0.63 | | | |
| | TC4 | 0.67 | 0.45 | | | |
| IC | IC1 | 0.82 | 0.67 | 0.88 | 0.79 | 0.64 |
| | IC2 | 0.86 | 0.74 | | | |
| | IC3 | 0.76 | 0.57 | | | |
| | IC4 | 0.76 | 0.58 | | | |
| AIGC | AIGC1 | 0.81 | 0.66 | 0.84 | 0.89 | 0.63 |
| | AIGC2 | 0.88 | 0.77 | | | |
| | AIGC3 | 0.78 | 0.61 | | | |
| | AIGC4 | 0.72 | 0.51 | | | |
| | AIGC5 | 0.76 | 0.58 | | | |

As Table 1 indicates, all Cronbach’s alpha values exceeded 0.70, and CR values ranged from 0.84 to 0.88. All factor loadings except that of TC4 (0.67) exceeded 0.71, indicating good item reliability. The AVE values ranged from 0.58 to 0.64, all above 0.50, indicating good convergent validity; as Table 2 shows, the square root of each AVE also exceeded that construct’s correlations with the other constructs, supporting discriminant validity. Overall, the questionnaire met accepted standards of reliability and validity.

The study analyzed means, standard deviations, and correlations, revealing the following mean values: CL (M = 4.07, SD = 0.48); TC (M = 4.17, SD = 0.44); IC (M = 1.86, SD = 0.45); and AIGC (M = 3.70, SD = 0.62).

Table 2

Descriptive Statistics, Latent Variable Correlation Matrix, and Discriminant Validity

| Variable | M | SD | CL | TC | IC | AIGC |
|---|---|---|---|---|---|---|
| CL | 4.07 | 0.48 | **0.78** | | | |
| TC | 4.17 | 0.44 | 0.44*** | **0.76** | | |
| IC | 1.86 | 0.45 | -0.32*** | -0.46*** | **0.80** | |
| AIGC | 3.70 | 0.62 | -0.17*** | 0.17*** | 0.45*** | **0.79** |

Note. ***p < .001, **p < .005. Diagonal values in bold represent the square root of the AVE values.

According to Table 2, CL had significant positive correlations with TC (r = 0.44, p < .001) and significant negative correlations with IC (r = -0.32, p < .001) and AIGC (r = -0.17, p < .001). TC was negatively correlated with IC (r = -0.46, p < .001) and AIGC (r = -0.26, p < .001). Additionally, IC exhibited a positive correlation with AIGC (r = 0.45, p < .001).

Using maximum likelihood estimation methods, a goodness-of-fit analysis was conducted for an SEM containing only dependent and independent variables.

Table 3

Results of Model Fit

| Index | Recommended value | Source of criterion | Research model |
|---|---|---|---|
| χ²/df | < 3 | Kline, 2011 | 2.20 |
| CFI | > .90 | Hu and Bentler, 1999 | 0.97 |
| TLI | > .90 | Hu and Bentler, 1999 | 0.96 |
| SRMR | < .08 | Hu and Bentler, 1999 | 0.04 |
| RMSEA | < .08 | Browne and Cudeck, 1992 | 0.05 |

As shown in Table 3, according to established standards (Browne & Cudeck, 1992; Hu & Bentler, 1999; Kline, 2011), the values for χ2/df, comparative fit index (CFI), Tucker-Lewis Index (TLI), root mean square error of approximation (RMSEA), and standardized root mean square residual (SRMR) all met the criteria, indicating a satisfactory model fit.
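Because RMSEA is a function of χ², df, and N, the reported χ²/df and sample size can serve as a rough consistency check on the RMSEA in Table 3. The sketch below uses the common formula RMSEA = √(max(χ² - df, 0) / (df(N - 1))), rewritten in terms of χ²/df. Some programs use N instead of N - 1, and χ²/df is rounded to two decimals, so the result is approximate; the function name is illustrative.

```python
import math


def rmsea_from_ratio(chi2_over_df: float, n: int) -> float:
    """RMSEA = sqrt(max(chi2 - df, 0) / (df * (n - 1))) = sqrt(max(chi2/df - 1, 0) / (n - 1))."""
    return math.sqrt(max(chi2_over_df - 1.0, 0.0) / (n - 1))


# chi2/df = 2.20 with N = 435 (Table 3 and the sample size above)
print(round(rmsea_from_ratio(2.20, 435), 3))
```

The result is about 0.053, in line with the reported RMSEA of 0.05.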

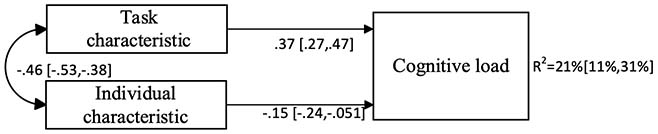

Figure 5

Main Effects of Each of TC and IC on CL, and Correlations Between Variables

The model examined the main effects of TC and IC on CL using the full sample (see Figure 5). Standardized coefficients and 95% confidence intervals (values in brackets) are provided. The bidirectional solid line in Figure 5 reflects a significant association (p < .05). The results showed that TC was positively associated with CL (β = 0.37, p < .001), whereas IC was negatively associated with CL (β = -0.15, p < .001). Therefore, hypotheses 1 and 2 were supported. Overall, the predictors explained 21% of the variability in CL.

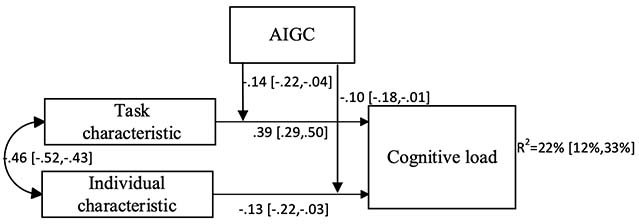

Figure 6

Moderating Role of AIGC

Furthermore, the potential moderating role of AIGC in the effects of TC and IC was tested in the full sample. Two significant interactions were observed (see Figure 6). Standardized coefficients and 95% confidence intervals (values in brackets) are provided. The bidirectional solid line reflects a significant association (p < .05). Specifically, AIGC moderated the associations between TC and CL (β = -0.14, p < .005) and between IC and CL (β = -0.10, p < .05). Adding these moderating effects explained an additional 1% of the variance in CL beyond the main effects of TC and IC.
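The incremental variance explained by an interaction can be illustrated with an observed-variable moderated regression. This is a swapped-in simplification: the study estimated its model in Mplus, whereas the sketch below fits ordinary least squares with a product term on standardized scores and compares R² with and without that term. The data are simulated from hypothetical coefficients and are not the study’s data.

```python
import numpy as np


def r_squared(X, y):
    """Fit OLS with an intercept and return R^2."""
    X1 = np.column_stack([np.ones(len(y)), X])
    beta, *_ = np.linalg.lstsq(X1, y, rcond=None)
    resid = y - X1 @ beta
    return 1 - (resid @ resid) / np.sum((y - y.mean()) ** 2)


def delta_r2_for_interaction(x, z, y):
    """Return (R2 without product term, R2 with product term, delta R2)."""
    # Standardize predictor and moderator before forming the product term
    x = (x - x.mean()) / x.std()
    z = (z - z.mean()) / z.std()
    r2_main = r_squared(np.column_stack([x, z]), y)
    r2_full = r_squared(np.column_stack([x, z, x * z]), y)
    return r2_main, r2_full, r2_full - r2_main


# Hypothetical simulation: x plays the role of TC, z of AIGC, y of CL,
# with a negative x*z interaction built in.
rng = np.random.default_rng(0)
n = 5000
x, z = rng.normal(size=n), rng.normal(size=n)
y = 0.4 * x - 0.1 * z - 0.15 * x * z + rng.normal(scale=1.0, size=n)
print(delta_r2_for_interaction(x, z, y))
```

Because the models are nested, the R² with the product term can never be lower than without it; a small ΔR² such as the 1% reported here is common for interaction effects.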

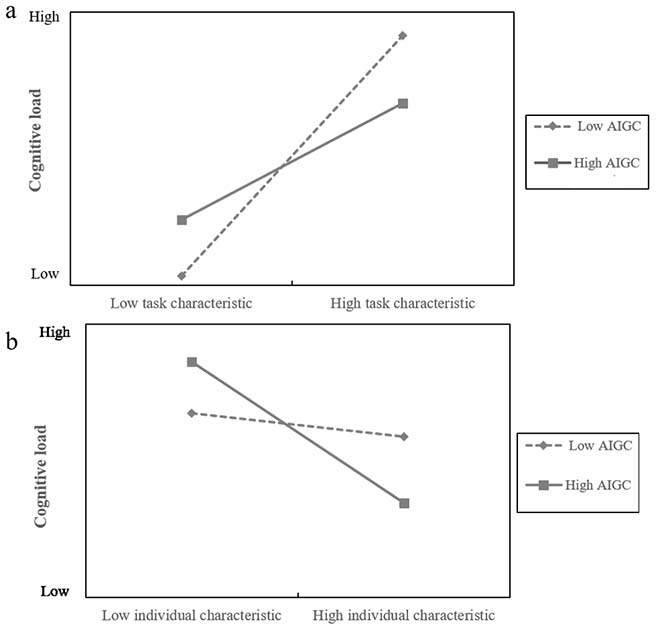

Figure 7

Moderating Role of AIGC on the Relationship Between TC and CL (Panel 7a) and Between IC and CL (Panel 7b)

To probe the interaction effects proposed in hypotheses 3 and 4, simple slopes were tested at low and high levels of AIGC. As shown in Figure 7a, at a low level of AIGC (one SD below the mean), the simple slope of TC on CL was 0.56 (p < .001), whereas at a high level of AIGC (one SD above the mean), it was 0.29 (p < .001). Thus, the effect of TC on CL was weakened at higher values of AIGC. In contrast, Figure 7b shows that the simple slope of IC on CL was -0.04 (p < .001) at a low level of AIGC and -0.23 (p < .001) at a high level. Thus, the negative effect of IC on CL was strengthened at higher values of AIGC. Hence, hypotheses 3 and 4 were supported.
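Simple slopes follow from the moderated regression equation: with the moderator standardized, the slope of CL on a predictor at moderator value z is b_x + b_xz·z, so evaluating at z = -1 and z = +1 gives the low- and high-AIGC slopes. Inverting that relationship offers a quick consistency check. Assuming the reported slopes and interaction coefficients are on the same standardized metric (an assumption, since the text does not state this), half the difference between the high and low slopes is -0.135 for TC and -0.095 for IC, close to the reported β = -0.14 and β = -0.10. Function names are illustrative.

```python
def simple_slope(b_x: float, b_xz: float, z: float) -> float:
    """Slope of the outcome on x at moderator value z (z in SD units): b_x + b_xz * z."""
    return b_x + b_xz * z


def implied_coefficients(slope_low: float, slope_high: float) -> tuple[float, float]:
    """Recover (b_x, b_xz) from simple slopes evaluated at z = -1 and z = +1."""
    b_x = (slope_low + slope_high) / 2
    b_xz = (slope_high - slope_low) / 2
    return b_x, b_xz


# Simple slopes reported for Figure 7
print(implied_coefficients(0.56, 0.29))    # TC -> CL
print(implied_coefficients(-0.04, -0.23))  # IC -> CL
```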

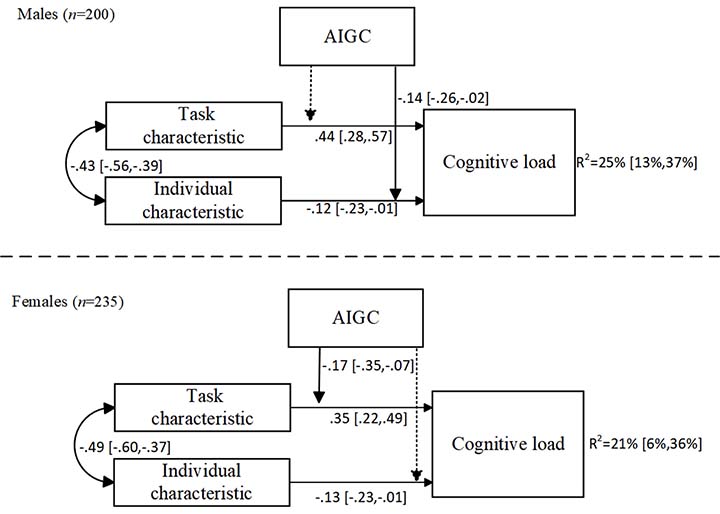

Figure 8

Moderation of AIGC Between Each of TC and IC, and CL, Segmented by Gender

We analyzed the moderating role of AIGC after dividing the sample by gender (see Figure 8). Standardized coefficients are provided. Bidirectional solid lines indicate significant associations (p < .05). As shown in Figure 8, the moderating effects of AIGC differed noticeably between males and females. Among males, the effect of IC on CL was enhanced at higher values of AIGC. The proportion of variance in CL explained by the model did not differ significantly between males (25%) and females (21%; p > .05).

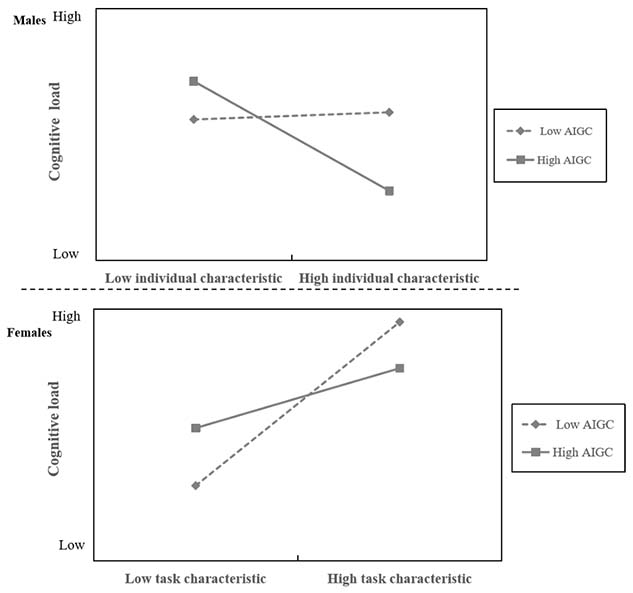

Figure 9

Moderating Role of AIGC on the Relationships Between IC and CL Among Males (Top Panel), and Between TC and CL Among Females (Bottom Panel)

In Figure 9, among males, the simple slope of IC on CL was -0.02 (p < .05) at a low level of AIGC and -0.25 (p < .005) at a high level, indicating that AIGC strengthened the effect of IC on CL. Among females, by contrast, the effect of TC on CL weakened at higher values of AIGC (low AIGC simple slope = 0.58, p < .001; high AIGC simple slope = 0.24, p < .005).
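A significant moderation effect in one group alongside a nonsignificant effect in the other does not, on its own, show that the two effects differ. One common way to compare a coefficient across independent groups is the z-test sketched below; it is a simpler alternative to a multi-group SEM with equality constraints, which this sketch does not implement. The coefficients and standard errors in the usage line are hypothetical, because per-group standard errors are not reported here.

```python
import math


def coefficient_difference_z(b1: float, se1: float, b2: float, se2: float) -> tuple[float, float]:
    """z-test for the difference between two coefficients estimated in independent groups.

    Returns (z, two-sided p), where p = erfc(|z| / sqrt(2)) = 2 * (1 - Phi(|z|)).
    """
    z = (b1 - b2) / math.sqrt(se1 ** 2 + se2 ** 2)
    p = math.erfc(abs(z) / math.sqrt(2))
    return z, p


# Hypothetical interaction coefficients (males vs. females), not study results
z, p = coefficient_difference_z(-0.12, 0.05, -0.02, 0.05)
print(f"z = {z:.2f}, p = {p:.3f}")
```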

This study verified the relationships of TC and IC with CL in online learning. More importantly, it investigated how AIGC moderates these relationships among college students. Gender differences in the moderating role of AIGC were also revealed. The findings of this study supported the hypotheses and were consistent with previous studies.

Regarding the first research question, this study found that TC was positively associated with CL, whereas IC was negatively associated with CL. These findings aligned with existing literature (Li, 2010; Tremblay et al., 2023). Li (2010) found that task complexity and time pressure, as components of TC, positively correlated with CL. Similarly, Tremblay et al. (2023) demonstrated that TC significantly impacted students’ CL, as increased task demands required more cognitive resources (Chakraborty et al., 2024). Additionally, the negative correlation between IC and CL supported hypothesis 2, consistent with prior studies (Feldon et al., 2023; Redifer et al., 2021). According to CLT and social cognitive theory, self-efficacy and state meta-cognition help learners manage cognitive resources, reducing CL. For instance, Feldon et al. (2023) showed a negative correlation between self-efficacy and CL, while Scheiter et al. (2009) and Sweller (2006) found that learners with favorable IC reported lower CL. Thus, enhancing IC (e.g., self-efficacy and meta-cognition) may help reduce students’ CL. Notably, potential confounding variables such as students’ prior knowledge, learning motivation, and technological familiarity may influence the observed relationships; these were not fully controlled in this study. These findings further supported the core tenet of CLT that cognitive resources are limited and can be optimized by improving IC and managing TC. By showing that higher IC was associated with lower CL and higher TC with higher CL, this study lent support to the causal framework of CLT and highlighted the relevance of both learner characteristics and task design in CL management.

Regarding the second research question, the structural equation analysis showed that AIGC attenuated the relationship between TC and CL, while it positively moderated the effect of IC on CL in online learning. AI has been shown to reduce workload and CL (Gandhi et al., 2023), with large language models generating task-appropriate responses that alleviate workload (Ayers et al., 2023; Gu & Li, 2022). Our results are consistent with the view that AIGC, through natural language processing and intelligent tutoring systems, mitigates the impact of TC on CL (Chen et al., 2024). Furthermore, the finding that AIGC positively moderated the relationship between IC and CL is consistent with prior studies (Gilbert et al., 2023; Lodge et al., 2023). Lodge et al. (2023) described this as offload expansion, whereby AIGC influences IC and CL. Research on cognitive offloading suggests that external tools reduce CL, and AIGC tools such as ChatGPT affect student writing practices (Rudolph et al., 2023). Thus, AIGC may enhance learners’ abilities and thereby moderate the relationship between IC and CL (Lodge et al., 2023). However, the moderating effect of AIGC might be confounded by students’ prior experience with AIGC or their digital literacy levels (Chiappe et al., 2024). Learners who are more proficient in using AIGC may benefit more from its cognitive support, which could independently influence CL (Yang, 2024). Additionally, these results substantiated and extended CLT by showing that external technological tools act as environmental scaffolds that optimize cognitive resource allocation, thereby reducing intrinsic and extraneous CL. This aligned with the moderating mechanism proposed in CLT, whereby external aids can moderate the load that learners experience during complex tasks (Poupard et al., 2025). From the lens of TRD, AIGC operates as an environmental factor that interacts with personal characteristics and behaviors, thereby affecting CL. This reciprocal interaction highlights that CL is not only a result of task complexity but is also dynamically constructed through the interplay of individual, behavioral, and environmental variables, as posited by TRD (Wang et al., 2023).
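What "attenuation" means statistically can be made concrete with simple slopes. In a moderated model, the effect of a predictor on CL is no longer a single number; it shifts with the moderator through the interaction coefficient. The sketch below is a simplified, observed-variable illustration, not the latent-variable structural model estimated in Mplus for this study. It assumes mean-centred, standardised variables, and the class name `ModeratedPath` and all coefficient values are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ModeratedPath:
    """Hypothetical moderated path: CL = b0 + b_x*X + b_m*M + b_xm*(X*M).

    X is a predictor such as TC, M is a moderator such as AIGC use,
    and both are assumed to be mean-centred and standardised.
    """
    b_x: float   # effect of X on CL when the moderator is at its mean
    b_xm: float  # interaction (moderation) coefficient

    def simple_slope(self, m: float) -> float:
        """Return the effect of X on CL at moderator value m (in SD units)."""
        return self.b_x + self.b_xm * m


# Illustrative coefficients only; these are NOT estimates from this study.
tc_path = ModeratedPath(b_x=0.40, b_xm=-0.15)

for label, m in [("low AIGC (-1 SD)", -1.0), ("mean AIGC", 0.0), ("high AIGC (+1 SD)", 1.0)]:
    print(f"{label}: simple slope of TC on CL = {tc_path.simple_slope(m):.2f}")
```

With these made-up values, the slope of TC on CL falls from 0.55 at low AIGC use to 0.25 at high AIGC use: a negative interaction coefficient weakens a positive main effect, which is the pattern the text describes as AIGC attenuating the TC and CL relationship.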

For the third research question, our study showed that AIGC significantly moderated the relationship between IC and CL for males, whereas its moderating effect on the relationship between TC and CL was more pronounced for females. This result may relate to gender differences in learning styles and technology acceptance. Males tend to exhibit greater openness and receptiveness to new technologies, whereas females often approach them more cautiously and conservatively (Cai et al., 2017). Male students are also more inclined to rely on their own abilities and self-efficacy when using technological tools (Rosli & Saleh, 2023). This attitudinal difference can affect how effectively AIGC supports self-regulation processes. Consequently, AIGC tools have the potential to enhance male students’ self-efficacy and self-directed learning ability (Wang et al., 2024), thereby helping them manage CL more effectively. In contrast, female students may focus more on the requirements and complexity of the tasks themselves (Padilla-Meléndez et al., 2013), making AIGC tools particularly effective for decomposing tasks and reducing task pressure. This gendered pattern may reflect socio-cognitive differences in how learners perceive control and use technology: males may view AIGC as a tool for enhancing personal competence, while females may see it as support for managing task complexity. This gender-specific finding adds nuance to the TRD framework, as it illustrates how personal factors (gender-based attitudes), behaviors (tool usage), and the environment (AIGC) jointly shape CL.
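A moderation effect that is significant in one gender group but not in the other does not by itself show that the two groups differ; the difference between the group-specific coefficients has to be tested directly. One generic way to do this for coefficients estimated in independent groups is a Wald-type z test on their difference. The sketch below is not necessarily the procedure used in this study (in multi-group SEM the analogous step is usually to constrain the path to equality across groups and compare model fit). The function name `compare_group_coefficients` and all numbers are hypothetical.

```python
import math


def compare_group_coefficients(b1: float, se1: float, b2: float, se2: float) -> tuple[float, float]:
    """Wald-type z test for the difference between two coefficients
    estimated in independent groups (e.g., males vs. females).

    Returns (z, two-sided p-value) under a normal approximation.
    """
    se_diff = math.sqrt(se1 ** 2 + se2 ** 2)  # SE of the difference; groups assumed independent
    z = (b1 - b2) / se_diff
    p = math.erfc(abs(z) / math.sqrt(2))      # two-sided p = 2 * (1 - Phi(|z|))
    return z, p


# Hypothetical interaction coefficients (AIGC x IC -> CL) and standard errors
# for two groups; these are NOT results from this study.
z, p = compare_group_coefficients(b1=0.20, se1=0.08, b2=0.05, se2=0.09)
print(f"z = {z:.2f}, p = {p:.3f}")
```

In this made-up case, the first coefficient alone would be significant (0.20 / 0.08 = 2.5), yet the difference between groups is not (z is about 1.25). This is why group contrasts are best reported with an explicit test of the difference.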

This research has both theoretical and practical implications. Theoretically, it supported the effects of TC and IC on CL and highlighted the moderating role of AIGC. Specifically, AIGC mitigated the influence of TC on CL while enhancing the effect of IC in online learning, thereby extending cognitive load theory (Seitz et al., 2023) and advancing educational technology theory by demonstrating technology’s indirect role in CL optimization. Gender differences further underscored the need to consider individual variation when applying AIGC in online learning. Practically, the findings offer guidance for instructional designers and educators in ODL. Given the scalability and flexibility of AIGC, designers can use these tools to support adaptive task design, such as breaking down complex content, scaffolding problem-solving steps, and reducing extraneous load (Cai et al., 2025). Additionally, in ODL, where learners have limited access to real-time human support, AIGC-powered systems can bridge pedagogical gaps by offering real-time feedback, personalized learning, and adaptive task difficulty (Packer & Keates, 2023). Instructional designers should consider strategies that scaffold complex tasks and integrate meta-cognitive support into intelligent tools to reduce unnecessary load (Poupard et al., 2025). Furthermore, strengthening self-efficacy among male students and structuring tasks at a manageable level of complexity for female students may help students regulate their own cognitive resources more effectively in asynchronous, autonomous learning environments.

This study explored the relationships among TC, IC, CL, and AIGC in online learning. The findings revealed that TC positively influenced CL, whereas IC was negatively correlated with CL. Additionally, AIGC mitigated the effect of TC on CL while enhancing the impact of IC on CL. Notably, gender differences emerged: AIGC more strongly moderated the relationship between IC and CL for males and the relationship between TC and CL for females. The primary contribution of this study lies in identifying how AIGC moderates the effects of TC and IC on CL in online learning. These findings extended cognitive load theory and provided insights into integrating AIGC into digital education. In addition, the study enhanced our understanding of how AIGC can be leveraged to support cognitive load management, guiding educators in designing more effective online learning strategies. Furthermore, the study emphasized the importance of accounting for individual differences in AIGC applications, with implications for personalized learning.

However, several limitations should be acknowledged. First, the research was conducted at a single university in northeastern China. Although this setting is typical of the region, contextual factors may limit the generalizability of the findings. Future research should expand the sample and include participants from diverse educational settings to strengthen the applicability of the results. Nonetheless, the relevance of the findings likely extends to broader online learning environments. The observed effects of AIGC in reducing task-related burden and enhancing self-regulatory capacity have important implications for diverse digital education scenarios, including large-scale online courses, blended learning, and personalized learning platforms (Panwale & Vijayakumar, 2025). Second, the study relied on self-reported data, which may be subject to response biases. Future research should integrate multiple data collection methods, including behavioral observations, eye tracking, and physiological measures.

This research was supported by the National Natural Science Foundation of China (NSFC), grant numbers 62577018, 62077012, and 62307021.

Ayers, J. W., Poliak, A., Dredze, M., Leas, E. C., Zhu, Z., Kelley, J. B., Faix, D. J., Goodman, A. M., Longhurst, C. A., Hogarth, M., & Smith, D. M. (2023). Comparing physician and artificial intelligence chatbot responses to patient questions posted to a public social media forum. JAMA Internal Medicine, 183(6), 589-596. https://doi.org/10.1001/jamainternmed.2023.1838

Bai, J., Cheng, X., Zhang, H., Qin, Y., Xu, T., & Zhou, Y. (2025). Can AI-generated pedagogical agents (AIPA) replace human teacher in picture book videos? The effects of appearance and voice of AIPA on children’s learning. Education and Information Technologies, 30, 12267-12287. https://doi.org/10.1007/s10639-025-13328-8

Bandura, A. (1983). Temporal dynamics and decomposition of reciprocal determinism: A reply to Phillips and Orton. Psychological Review, 90(2), 166-170. https://doi.org/10.1037/0033-295X.90.2.166

Browne, M. W., & Cudeck, R. (1992). Alternative ways of assessing model fit. Sociological Methods & Research, 21(2), 230-258. https://doi.org/10.1177/0049124192021002005

Bürgler, S., Troll, E. S., & Hennecke, M. (2024). The context-sensitive use of task enrichment to promote self-control: The role of metacognition and trait self-control. Motivation Science, 10(1), 15-27. https://doi.org/10.1037/mot0000320

Cai, H., Han, B., Sun, J., Li, X., & Wong, L. H. (2025). Harnessing AI for teacher education to promote inclusive education: Investigating the effects of ChatGPT-supported lesson plan critiques on the development of pre-service teachers’ lesson planning skills. The Internet and Higher Education, 67, 1-15. https://doi.org/10.1016/j.iheduc.2025.101022

Cai, Z., Fan, X., & Du, J. (2017). Gender and attitudes toward technology use: A meta-analysis. Computers & Education, 105, 1-13. https://doi.org/10.1016/j.compedu.2016.11.003

Chakraborty, S., Kiefer, P., & Raubal, M. (2024). The influence of uncertainty visualization on cognitive load in a safety- and time-critical decision-making task. International Journal of Geographical Information Science, 38(8), 1583-1610. https://doi.org/10.1080/13658816.2024.2348747

Chen, O., Paas, F., & Sweller, J. (2023). A cognitive load theory approach to defining and measuring task complexity through element interactivity. Educational Psychology Review, 35(2), 63. https://doi.org/10.1007/s10648-023-09782-w

Chen, X., Hu, Z., & Wang, C. (2024). Empowering education development through AIGC: A systematic literature review. Education and Information Technologies, 29, 17485-17537. https://doi.org/10.1007/s10639-024-12549-7

Chiappe, A., Díaz, J. M., & Ramirez-Montoya, M. S. (2024). Fostering 4.0 digital literacy skills through attributes of openness: A review. The International Review of Research in Open and Distributed Learning, 25(4), 176-200. https://doi.org/10.19173/irrodl.v25i4.7962

Chong, D. S., Van Eerde, W., Chai, K. H., & Rutte, C. G. (2010). A double-edged sword: The effects of challenge and hindrance time pressure on new product development teams. IEEE Transactions on Engineering Management, 58(1), 71-86. https://doi.org/10.1109/TEM.2010.2048914

Cooper, G. (1990). Cognitive load theory as an aid for instructional design. Australasian Journal of Educational Technology, 6(2). https://doi.org/10.14742/ajet.2322

Davis, F. D. (1993). User acceptance of information technology: System characteristics, user perceptions and behavioral impacts. International Journal of Man-Machine Studies, 38(3), 475-487. https://doi.org/10.1006/imms.1993.1022

Du, L., & Lv, B. (2024). Factors influencing students’ acceptance and use generative artificial intelligence in elementary education: An expansion of the UTAUT model. Education and Information Technologies, 29, 24715-24734. https://doi.org/10.1007/s10639-024-12835-4

Feldon, D. F., Brockbank, R., & Litson, K. (2023). Direct effects of cognitive load on self-efficacy during instruction. Journal of Educational Psychology, 116(7), 1153-1171. https://doi.org/10.1037/edu0000826

Fornell, C., & Larcker, D. F. (1981). Evaluating structural equation models with unobservable variables and measurement error. Journal of Marketing Research, 18(1), 39-50.

Gandhi, T. K., Classen, D., Sinsky, C. A., Rhew, D. C., Vande Garde, N., Roberts, A., & Federico, F. (2023). How can artificial intelligence decrease cognitive and work burden for front line practitioners? JAMIA Open, 6(3), ooad079. https://doi.org/10.1093/jamiaopen/ooad079

Gilbert, S. J., Boldt, A., Sachdeva, C., Scarampi, C., & Tsai, P. C. (2023). Outsourcing memory to external tools: A review of ‘intention offloading’. Psychonomic Bulletin & Review, 30(1), 60-76. https://doi.org/10.3758/s13423-022-02139-4

Gu, X., & Li, S. (2022). Artificial intelligence promotes the development of future education: Essential connotation and proper direction. Journal of East China Normal University (Educational Sciences), 40(9), 1. https://doi.org/10.16382/j.cnki.1000-5560.2022.09.001

Hair, J. F., Black, W. C., Babin, B. J., & Anderson, R. E. (1998). Multivariate data analysis: A global perspective. Pearson.

Hair, J. F., Black, W. C., Babin, B. J., & Anderson, R. E. (2009). Multivariate data analysis (7th ed.). Prentice Hall.

Hart, S. G., & Staveland, L. E. (1988). Development of NASA-TLX (task load index): Results of empirical and theoretical research. Advances in Psychology, 52, 139-183. https://doi.org/10.1016/S0166-4115(08)62386-9

Hu, L., & Bentler, P. M. (1999). Cutoff criteria for fit indexes in covariance structure analysis: Conventional criteria versus new alternatives. Structural Equation Modeling: A Multidisciplinary Journal, 6(1), 1-55. https://doi.org/10.1080/10705519909540118

Jiang, C. (2023). Chinese undergraduates’ English reading self-efficacy, intrinsic cognitive load, boredom, and performance: A moderated mediation model. Frontiers in Psychology, 14, 1093044. https://doi.org/10.3389/fpsyg.2023.1093044

Kline, R. B. (2011). Principles and practice of structural equation modeling. Guilford Publications.

Le Cunff, A. L., Giampietro, V., & Dommett, E. (2024). Neurodiversity and cognitive load in online learning: A systematic review with narrative synthesis. Educational Research Review, 43, 100604. https://doi.org/10.1016/j.edurev.2024.100604

Li, J. B. (2010). The synthetic effect of task characteristics and individual characteristics on cognitive load in the human-machine interaction process. Psychological Science, 33(4), 972-975. https://doi.org/10.16719/j.cnki.1671-6981.2010.04.033

Lo, C. K. (2023). What is the impact of ChatGPT on education? A rapid review of the literature. Education Sciences, 13(4), 410. https://doi.org/10.3390/educsci13040410

Lodge, J. M., Yang, S., Furze, L., & Dawson, P. (2023). It’s not like a calculator, so what is the relationship between learners and generative artificial intelligence? Learning: Research and Practice, 9(2), 117-124. https://doi.org/10.1080/23735082.2023.2261106

Mayer, R. E. (2005). Cognitive theory of multimedia learning. In R. E. Mayer (Ed.), The Cambridge handbook of multimedia learning (pp. 31-48). Cambridge University Press. https://doi.org/10.1017/CBO9780511816819.004

Maynard, D. C., & Hakel, M. D. (1997). Effects of objective and subjective task complexity on performance. Human Performance, 10(4), 303-330. https://doi.org/10.1207/s15327043hup1004_1

Noy, S., & Zhang, W. (2023). Experimental evidence on the productivity effects of generative artificial intelligence. Science, 381(6654), 187-192. https://doi.org/10.1126/science.adh2586

Nückles, M., Roelle, J., Glogger-Frey, I., Waldeyer, J., & Renkl, A. (2020). The self-regulation-view in writing-to-learn: Using journal writing to optimize cognitive load in self-regulated learning. Educational Psychology Review, 32(4), 1089-1126. https://doi.org/10.1007/s10648-020-09541-1

O’Neil, H. F., Jr., & Abedi, J. (1996). Reliability and validity of a state metacognitive inventory: Potential for alternative assessment. The Journal of Educational Research, 89(4), 234-245. https://doi.org/10.1080/00220671.1996.9941208

Orthey, R., Palena, N., Vrij, A., Meijer, E., Leal, S., Blank, H., & Caso, L. (2019). Effects of time pressure on strategy selection and strategy execution in forced choice tests. Applied Cognitive Psychology, 33(5), 974-979. https://doi.org/10.1002/acp.3592

Paas, F., Renkl, A., & Sweller, J. (2003). Cognitive load theory and instructional design: Recent developments. Educational Psychologist, 38(1), 1-4. https://doi.org/10.1207/S15326985EP3801_1

Paas, F. G., & Van Merriënboer, J. J. (1994). Instructional control of cognitive load in the training of complex cognitive tasks. Educational Psychology Review, 6, 351-371. https://doi.org/10.1007/BF02213420

Packer, B., & Keates, S. (2023). Designing AI writing workflow UX for reduced cognitive loads. In M. Antona & C. Stephanidis (Eds.), Universal access in human-computer interaction: Lecture notes in computer science (Vol. 14021, pp. 306-325). Springer. https://doi.org/10.1007/978-3-031-35897-5_23

Padilla-Meléndez, A., del Aguila-Obra, A. R., & Garrido-Moreno, A. (2013). Perceived playfulness, gender differences and technology acceptance model in a blended learning scenario. Computers & Education, 63, 306-317. https://doi.org/10.1016/j.compedu.2012.12.014

Panwale, S. B., & Vijayakumar, S. (2025). Evaluating AI-personalized learning interventions in distance education. The International Review of Research in Open and Distributed Learning, 26(1), 157-174. https://doi.org/10.19173/irrodl.v26i1.7813

Poupard, M., Larrue, F., Sauzéon, H., & Tricot, A. (2025). A systematic review of immersive technologies for education: Learning performance, cognitive load and intrinsic motivation. British Journal of Educational Technology, 56(1), 5-41. https://doi.org/10.1111/bjet.13503

Redifer, J. L., Bae, C. L., & Zhao, Q. (2021). Self-efficacy and performance feedback: Impacts on cognitive load during creative thinking. Learning and Instruction, 71, 101395. https://doi.org/10.1016/j.learninstruc.2020.101395

Rosli, M. S., & Saleh, N. S. (2023). Technology enhanced learning acceptance among university students during COVID-19: Integrating the full spectrum of self-determination theory and self-efficacy into the technology acceptance model. Current Psychology, 42(21), 18212-18231. https://doi.org/10.1007/s12144-022-02996-1

Rudolph, J., Tan, S., & Tan, S. (2023). War of the chatbots: Bard, Bing Chat, ChatGPT, Ernie and beyond. The new AI gold rush and its impact on higher education. Journal of Applied Learning and Teaching, 6(1). http://journals.sfu.ca/jalt/index.php/jalt/index

Rulinawaty, R., Priyanto, A., Kuncoro, S., Rahmawaty, D., & Wijaya, A. (2023). Massive open online courses (MOOCs) as catalysts of change in education during unprecedented times: A narrative review. Jurnal Penelitian Pendidikan IPA, 9, 53-63. https://doi.org/10.29303/jppipa.v9iSpecialIssue.6697

Scheiter, K., Gerjets, P., Vollmann, B., & Catrambone, R. (2009). The impact of learner characteristics on information utilization strategies, cognitive load experienced, and performance in hypermedia learning. Learning and Instruction, 19(5), 387-401. https://doi.org/10.1016/j.learninstruc.2009.02.004

Schwarzer, R., & Jerusalem, M. (1995). Generalized self-efficacy scale [Database record]. APA PsycTests. https://doi.org/10.1037/t00393-000

Seitz, F. I., von Helversen, B., Albrecht, R., Rieskamp, J., & Jarecki, J. B. (2023). Testing three coping strategies for time pressure in categorizations and similarity judgments. Cognition, 233, 105358. https://doi.org/10.1016/j.cognition.2022.105358

Shah, A., & Soosai Raj, A. G. (2024). A review of cognitive apprenticeship methods in computing education research. In Proceedings of the 55th ACM Technical Symposium on Computer Science Education (Vol. 1, pp. 1202-1208). https://doi.org/10.1145/3626252.3630769

Skulmowski, A., & Xu, K. M. (2022). Understanding cognitive load in digital and online learning: A new perspective on extraneous cognitive load. Educational Psychology Review, 34(1), 171-196. https://doi.org/10.1007/s10648-021-09624-7

Sweller, J. (1988). Cognitive load during problem solving: Effects on learning. Cognitive Science, 12(2), 257-285. https://doi.org/10.1016/0364-0213(88)90023-7

Sweller, J. (2006). Discussion of emerging topics in cognitive load research: Using learner and information characteristics in the design of powerful learning environments. Applied Cognitive Psychology, 20(3), 353-357. https://doi.org/10.1002/acp.1251

Tremblay, M. L., Rethans, J. J., & Dolmans, D. (2023). Task complexity and cognitive load in simulation‐based education: A randomised trial. Medical Education, 57(2), 161-169. https://doi.org/10.1111/medu.14941

Urban, M., Děchtěrenko, F., Lukavský, J., Hrabalová, V., Svacha, F., Brom, C., & Urban, K. (2024). ChatGPT improves creative problem-solving performance in university students: An experimental study. Computers & Education, 215, 105031. https://doi.org/10.1016/j.compedu.2024.105031

Wang, C. K., Hu, Z. F., & Liu, Y. (2001). Evidences for reliability and validity of the Chinese version of general self-efficacy scale. Chinese Journal of Applied Psychology, 1, 37-40. https://doi.org/10.3969/j.issn.1006-6020.2001.01.007

Wang, D., Liu, Y., Jing, X., Liu, Q., & Lu, Q. (2024). Catalyst for future education: An empirical study on the impact of artificial intelligence generated content on college students’ innovation ability and autonomous learning. Education and Information Technologies, 30, 1-20. https://doi.org/10.1007/s10639-024-13209-6

Wang, T., Li, S., Tan, C., Zhang, J., & Lajoie, S. P. (2023). Cognitive load patterns affect temporal dynamics of self-regulated learning behaviors, metacognitive judgments, and learning achievements. Computers & Education, 207, 1-18. https://doi.org/10.1016/j.compedu.2023.104924

Wu, H., Wang, Y., & Wang, Y. (2024). “To use or not to use?” A mixed-methods study on the determinants of EFL college learners’ behavioral intention to use AI in the distributed learning context. The International Review of Research in Open and Distributed Learning, 25(3), 158-178. https://doi.org/10.19173/irrodl.v25i3.7708

Xiao, T., Yi, S., & Akhter, S. (2024). AI-supported online language learning: Learners’ self-esteem, cognitive-emotion regulation, academic enjoyment, and language success. The International Review of Research in Open and Distributed Learning, 25(3), 77-96. https://doi.org/10.19173/irrodl.v25i3.7666

Yang, H.-H. (2024). The acceptance of AI tools among design professionals: Exploring the moderating role of job replacement. The International Review of Research in Open and Distributed Learning, 25(3), 326-349. https://doi.org/10.19173/irrodl.v25i3.7811

Yilmaz, R., & Yilmaz, F. G. K. (2023). The effect of generative artificial intelligence (AI)-based tool use on students’ computational thinking skills, programming self-efficacy and motivation. Computers and Education: Artificial Intelligence, 4, 100147. https://doi.org/10.1016/j.caeai.2023.100147

Zhai, X., Nyaaba, M., & Ma, W. (2024). Can generative AI and ChatGPT outperform humans on cognitive-demanding problem-solving tasks in science? Science & Education, 34, 1-22. https://doi.org/10.1007/s11191-024-00496-1

Zhao, X., Cox, A., & Cai, L. (2024). ChatGPT and the digitisation of writing. Humanities and Social Sciences Communications, 11(1), 1-9. https://doi.org/10.1057/s41599-024-02904-x

How Task and Individual Characteristics Affect Students' Cognitive Load: The Moderating Role of AI-Generated Content by Pan Liu, Qiang Jiang, Weiyan Xiong, and Wei Zhao is licensed under a Creative Commons Attribution 4.0 International License.