B. Jean Mandernach

Park University, USA

There is considerable evidence that well-designed multimedia resources can enhance learning outcomes, yet there is little information on the role of multimedia in influencing essential motivational variables, such as student engagement. The current study examines the impact of instructor-personalized multimedia supplements on student engagement in an introductory, college-level online course. A comparison of student engagement across course sections featuring increasing numbers of instructor-personalized multimedia components reveals conflicting evidence. While qualitative student feedback indicates enhanced engagement as a function of instructor-generated multimedia supplements, quantitative data reveal no significant differences in engagement or learning among the various levels of multimedia inclusion. Findings highlight the complexity surrounding the appropriate use of multimedia within an online course. University policy-makers and instructors are cautioned to carefully examine the cost-benefit ratio of multimedia inclusion for online learning environments.

Keywords: Multimedia; online learning; policy

The growth and popularity of online learning are forcing faculty to examine the role of multimedia within their online course content. Research indicates that effective online multimedia is content-relevant and pedagogically intentional; as such, appropriately integrated multimedia components become a valuable teaching tool for facilitating student learning. While there is considerable evidence supporting the cognitive value of multimedia in the online classroom (for a multitude of studies on this topic, see journals such as New Review of Hypermedia and Multimedia, Multimedia Systems, Journal of Multimedia, Advances in Multimedia, Journal of Interactive Media in Education, or the former Interactive Multimedia Electronic Journal of Computer-Enhanced Learning), there is little information on the role of multimedia in influencing essential motivational variables such as student engagement. The purpose of the current study is to examine the impact of instructor-personalized multimedia supplements (i.e., multimedia that features the face and/or voice of the course instructor) on the self-reported level of student engagement and learning in an online course.

The emphasis of multimedia design and development has been on the presentation of information in multiple formats (Hede & Hede, 2002). There are a number of overlapping definitions of multimedia. According to Doolittle, “web-based multimedia represents the presentation of instruction that involves more than one delivery media, presentation mode, and/or sensory modality” (2001, p. 3). Multimedia has also been defined as “the use of multiple forms of media presentation” (Schwartz & Beichner, 1999, p. 8) and as “text along with at least one of the following: audio or sophisticated sound, music, video, photographs, 3-D graphics, animation, or high-resolution graphics” (Maddux, Johnson, & Willis, 2001, p. 253). Although numerous definitions exist to capture the essence of multimedia, “one commonality among all multi-media definitions involves the integration of more than one media” (Jonassen, 2000, p. 207). Examples of multimedia include, but are not limited to, text in combination with graphics, audio, music, video, and/or animation.

The theoretical value of multimedia inclusion is supported by a range of basic learning principles. The cognitive theory of multimedia learning is based on the following: 1) constructivist learning theory, in which meaningful learning occurs when a learner selects relevant information, organizes the information, and makes connections between corresponding representations; 2) cognitive load theory, in which each working memory store has limited capacity; and 3) dual coding theory, which emphasizes that humans have separate systems for representing verbal and non-verbal information (Moreno & Mayer, 2000). Supporting the inclusion of multimedia, the multimedia principle holds that “students learn better from words and pictures than from words alone” (Doolittle, 2001, p. 3). Hede and Hede (2002) find that games and simulations afford goal-based challenges that trigger interest and increase user motivation, and they also suggest that providing tools for annotation and collation of notes promotes learner engagement. Moreno and Mayer (2000) further suggest that “active learning occurs when a learner engages in three cognitive processes – selecting relevant words for verbal processing and selecting relevant images for visual processing, organizing words into a coherent verbal model and organizing images into a coherent visual model, integrating corresponding components of the verbal and visual models” (p. 3).

The need for diverse instructional strategies targeting a range of cognitive styles is echoed by the literature on learning styles, thinking styles, and individualized cognitive processes (Dunn, Dunn, & Price, 1984; Kolb, 1984; Mills, 2002). Learning styles theories emphasize the unique cognitive approaches favored by individual learners and highlight the importance of providing a range of instructional strategies to facilitate learning for all learners. Learning styles models have theoretically favored multimedia applications because of their ability to efficiently target various learning styles (e.g., visual, auditory, reading/writing, kinesthetic, or tactile; see Fleming & Mills, 1992, or Sternberg, 1997, for more information). However, the value of multimedia depends upon its appropriate use, selection, and placement (Mayer, 1997, 2001). Instructors and designers using multimedia are cautioned to apply research-based principles to the design and implementation of multimedia supplements.

Empirical results support the effectiveness of multimedia inclusion for online student learning. Clark and Mayer (2002) provide the following empirically based principles, grounded in cognitive psychology theory, to guide multimedia inclusion in virtual learning environments:

Multimedia principle

Relevant instructional graphics should be incorporated to supplement written text, improving learning through the dual coding of verbal and visual information.

Contiguity principle

Place graphics and text close together so that limited working memory is reserved for learning content rather than coordinating various visual components.

Modality principle

Explain graphics with audio rather than on-screen text; audio enhances learning more than text because it expands available cognitive resources by simultaneously tapping both visual and auditory memory.

Redundancy principle

Supplement graphics with audio alone rather than audio and redundant text to reduce cognitive overload.

Coherence principle

Avoid using visuals, text, and sounds that are not essential to instruction; unnecessary information impedes learning by interfering with the integration of information.

Personalization principle

Use a conversational tone and/or a personalized learning agent, which enhances learning by activating social conventions to listen and respond meaningfully.

As reflected by these principles, the inclusion of multimedia into the online classroom cannot be summarized by either the less-is-more or the more-is-more approach to course design. The educational value of multimedia is dependent upon appropriate inclusion of multimedia supplements to enhance the cognitive impact of the text (Mayer & Anderson, 1992).

These principles and guidelines provide a framework for incorporating multimedia to maximize student learning, but they do not address the impact of multimedia on non-cognitive variables, such as student engagement. While most of the principles (multimedia, contiguity, modality, redundancy, and coherence) are clearly and exclusively geared toward enhancing the learning process by reducing cognitive demands and maximizing encoding in memory, the personalization principle goes beyond information-processing theories of learning to highlight the importance of personalized interaction in educational contexts. The value of personalizing the online learning experience is echoed in the research on instructor presence (Anderson, Rourke, Garrison, & Archer, 2001). Instructor presence encompasses “the design, facilitation, and direction of cognitive and social processes for the realization of personally meaningful and educationally worthwhile learning outcomes” (Anderson et al., 2001, ¶ 13); key to this model is the importance of an instructor’s social presence. Social presence includes the “degree of salience of the other person in the (mediated) interaction and the consequent salience of the interpersonal relationships” (Short, Williams, & Christie, 1976). Research on instructors’ social presence in the online classroom (Richardson & Swan, 2003) found significant positive correlations between students’ social presence scores and perceived learning, as well as between students’ social presence scores and perceptions of instructor presence. Extending the implications of the personalization principle and the theory of instructor presence, online courses that use multimedia to create a more personalized, intimate learning experience may increase student engagement.

Student engagement is rooted in a combination of personality, affective, motivational, and persistence factors applied to the learning process; it “includes attributes like intrinsic motivation, positive affect, persistence, effort and self-confidence” (Ruhe, 2006, p. 1). Students with high levels of engagement enjoy the process of learning, persist in their scholarly work despite challenges and obstacles, and gain satisfaction from scholarly accomplishments (Schlechty, 1994). Student engagement goes beyond a simple emphasis on cognitive outcomes and learning to highlight students’ active role in the educational process. Engagement rests upon “students’ willingness, need, desire and compulsion to participate in, and be successful in, the learning process” (Bomia, Beluzo, Demeester, Elander, Johnson, & Sheldon, 1997, p. 294).

While these attributes are important in all learning environments, student engagement becomes imperative in the virtual classroom. Not only are online students navigating the typical learning challenges of the academic content, but they are also learning in a physically isolated environment that is often devoid of the entertainment and social aspects of the traditional classroom. Research clearly supports the relationship between student engagement and student achievement in the face-to-face classroom (Guthrie & Anderson, 1999; Handelsman, Briggs, Sullivan, & Towler, 2005; Skinner, Wellborn, & Connell, 1990), yet existing literature is limited in its examination of the unique considerations of student engagement in online learning environments. Generalizing from findings in traditional classrooms, one might expect that enhanced student engagement in the online classroom would increase interest and enthusiasm for the course, which, in turn, would affect retention, learning, and satisfaction. Moreover, because of the isolated nature of virtual education, the value and impact of increased student engagement may be even more pronounced in the online classroom than in traditional educational settings.

Online faculty must weigh the inclusion of multimedia to keep their course design pedagogically intentional (i.e., including course components with diligent attention to their educational impact, alignment with learning goals, relevance to assessments, etc.), while also balancing increasing demands to integrate multimedia as a “best practice” for effective online learning (Mandernach, 2006). The cognitive value of multimedia as a discrete interactive piece has been well established (Burg, Wong, & McCoy, 2004; Harris, 2002; Hede & Hede, 2002; Moreno & Mayer, 2000), as have students’ preferences for multimedia inclusion, but little research has examined the effect of multimedia on non-learning variables, such as student engagement. In addition, there is little empirical evidence on the relative impact of commercially produced multimedia supplements compared to more personalized multimedia options featuring the face and/or voice of the course instructor.

Research at the college level reveals five components relevant to student engagement: academic challenge, active/collaborative learning, student-faculty interaction, enriching educational experiences, and a supportive learning environment (Kenny, Kenny, & Dumont, 1995). The current study examines the use of multimedia as a tool to enhance student engagement by facilitating active learning, personalizing student-faculty connections, and enriching learning experiences. Relying on the theory underlying the personalization principle of multimedia inclusion, this study focuses on the impact of instructor-personalized multimedia supplements as opposed to commercially produced pieces. The personalization principle holds that a conversational tone and/or a personalized learning agent enhances learning by activating social conventions to listen and respond meaningfully. Thus, multimedia featuring the course instructor discussing concepts, as one would do in a face-to-face course, has the potential to enhance both learning and student engagement. This type of multimedia increases the visibility of the course instructor, promoting a more tangible faculty-student connection in the “faceless” environment of online learning.

The purpose of the current study is to examine changes in student engagement and learning as a function of the inclusion of instructor-personalized multimedia supplements in an online course. It is hypothesized that student engagement will increase as a function of the number of instructor-personalized multimedia supplements. Specifically, students completing a standard online course with no instructor-personalized multimedia will report lower levels of student engagement than students completing an identical course with the addition of instructor-personalized multimedia supplements; additionally, as the number of instructor-personalized multimedia components in a course increases, students will report greater course engagement.

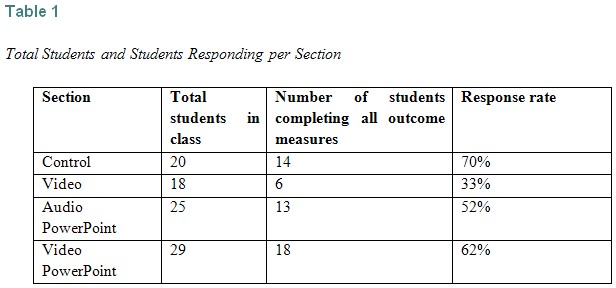

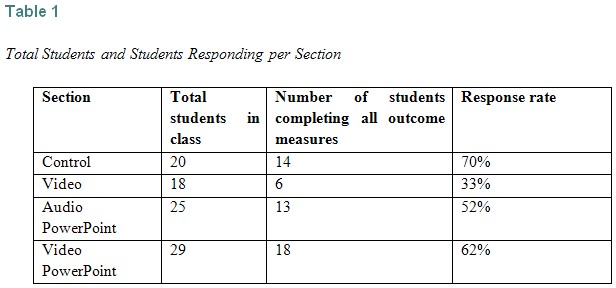

The sample for this quasi-experimental study includes four sections of an introductory-level general psychology course taught across sequential terms; all sections are taught completely in an online format using the Blackboard course management system. All sections are taught by the same instructor and utilize identical course structure, assignments, and instructional material. The instructor for this course is an experienced online teacher and has taught the target course in an online format for three years. Table 1 provides an overview of the number of students per section and the number of students completing all required outcome measures.

The study compares student engagement and learning outcomes across four quasi-experimental course conditions: control (no instructor-personalized multimedia), video (brief weekly videos of the instructor), video plus audio PowerPoint (weekly videos and PowerPoint presentations narrated by the instructor), and video, audio PowerPoint plus video PowerPoint (weekly videos and narrated PowerPoints along with PowerPoint presentations accompanied by video of the instructor narrating). All sections of the course contain complete instructional content with basic multimedia supplements woven throughout the online lectures. The control condition examines student outcomes in response to a fully designed, multimedia-supported course without the addition of instructor-personalized multimedia supplements. The other sections are identical to the control condition with the addition of a specific type of instructor-personalized multimedia supplement. Multimedia supplements were added in a cumulative, rather than comparative, fashion. As such, the study examines changes in student outcomes in response to the integration of additional instructor-personalized multimedia supplements, but it does not address the comparative impact of each type of multimedia.

To ensure that students were aware of the instructor-personalized multimedia components, these pieces were highlighted in the announcements area of the course. In the three sections containing instructor-personalized multimedia, supplements were added once per week for each of the 10 weeks of the term.

All supplements were available via a link from the course announcements area appearing on the initial screen of the course management system; in addition, students received an email notification that a new course announcement had been posted.

At the completion of the term, students were asked to complete an online version of the Student Course Engagement Questionnaire and final course exam (as dictated by the requirements of the course). While the final exam was required as a portion of the students’ overall course grade, completion of the Student Course Engagement Questionnaire was completely voluntary. Those electing to complete the Student Course Engagement Questionnaire were directed to an anonymous online survey tool.

To examine the impact of the cumulative addition of instructor-personalized multimedia supplements, four conditions were created across sequential terms of the same course. The target course is structured in weekly modules across a ten-week term. Each weekly module contains an online lecture, threaded discussion assignment, written homework assignment, and online quiz. The information and assignments contained in each module are the same across all conditions of the study. The only difference between the conditions is the introduction of a specific type of instructor-personalized multimedia. The conditions are outlined below:

Control

The control section serves as a baseline measure to determine students’ engagement in the basic course prior to the addition of instructor-personalized multimedia supplements. To avoid a simple comparison between a multimedia-supported course and a non-multimedia-supported course, the control course was enhanced with an array of professionally developed multimedia components. The basic version of the course contains 50 interactive review activities created via Respondus StudyMate, 30 publisher-produced videos, nine publisher-produced PowerPoint presentations, seven Java-based, non-graded self-reviews, and 24 Flash-based animations. All of the multimedia components of the control section are professionally produced with no explicit connection to the course instructor.

Video

The video condition contains all the components of the control section with the addition of 10 short videos in which the instructor highlights weekly topics that may be of particular interest to the students. The videos, called “My Favorite Things,” were created using a digital video recorder and contain a simple head-shot of the instructor informally discussing the target topic. Each video was professionally edited to ensure a high-quality appearance. Videos were one to three minutes in duration.

Video plus audio PowerPoints

The video plus audio PowerPoint condition contains all the material in the control and video conditions with the addition of an instructor-generated PowerPoint presentation with integrated audio narration. The audio PowerPoints, called “A Closer Look,” were created using authorPoint software, which combines the PowerPoint presentation and audio narration into a single, compressed Flash file. All audio PowerPoint presentations are based on an instructor-generated PowerPoint presentation and are narrated by the course instructor. All audio PowerPoints were reviewed and edited by an instructional designer to ensure presentation quality. Each weekly audio PowerPoint went into additional detail about a selected course topic beyond what was covered in the text or online lecture. Audio PowerPoint presentations were three to eight minutes in duration.

Video, audio PowerPoint plus video PowerPoint

The final cumulative condition contained all the features of the three previous conditions with the addition of weekly video PowerPoint presentations. The video PowerPoint presentations were also created with authorPoint software. The authorPoint software coordinates webcam video of the instructor presenting the material with the instructor-generated PowerPoint presentation; the integrated presentation is a single, compressed Flash file. Each weekly video PowerPoint, called “Digging Deeper,” explored a selected course topic beyond what is covered in the text, online lecture, or other multimedia supplements. Each video PowerPoint was reviewed and edited by an instructional designer to ensure presentation quality. All video PowerPoint presentations were five to eight minutes in duration.

To allow for a comparative analysis of the impact of instructor-personalized multimedia inclusion on student engagement and cumulative learning, three outcome measures were implemented at the end of each term: a modified version of the Student Course Engagement Questionnaire (Handelsman, Briggs, Sullivan, & Towler, 2005), a cumulative final exam, and final course grades.

Student Course Engagement Questionnaire

The Student Course Engagement Questionnaire (Handelsman, Briggs, Sullivan, & Towler, 2005) is a 27-item measure designed explicitly to measure college students’ engagement with course material. Respondents indicate their level of agreement on a 5-point Likert-type scale (1 = not at all characteristic of me; 5 = very characteristic of me) to various statements concerning course engagement (such as “staying up on the readings” and “finding ways to make the course interesting”). Engagement is scored according to four discrete factors: skill engagement (including general study strategies, such as note-taking and studying), emotional engagement (including personal involvement with class material, such as relating course material to one’s own life), participation/interaction engagement (including participation in class activities with the instructor or other students, such as asking questions in class), and performance engagement (including levels of performance in class, such as getting a good grade). The Student Course Engagement Questionnaire was modified to target an online learning environment; specifically, the wording of eight questions was adjusted to be more reflective of an online classroom. For example, the statement “Raising my hand in class” was modified to read “Raising questions in the threaded discussions.”
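Because the reported factor means (for example, 4.09 for performance engagement) fall within the 1 to 5 response scale, factor scores appear to be averages of the items belonging to each factor. The following is a minimal Python sketch under that assumption. The item-to-factor mapping (`FACTOR_ITEMS`) and the function name `score_factors` are hypothetical placeholders; the actual SCEQ item assignments are not listed in this article.

```python
from statistics import mean

# HYPOTHETICAL item-to-factor mapping: the real SCEQ item assignments are not
# given in this article, so these item numbers are placeholders only.
FACTOR_ITEMS = {
    "skills": [1, 2, 3],
    "emotional": [4, 5],
    "participation": [6, 7],
    "performance": [8, 9],
}


def score_factors(responses: dict[int, int]) -> dict[str, float]:
    """Average one respondent's 1-5 Likert ratings within each factor."""
    for item, rating in responses.items():
        if not 1 <= rating <= 5:
            raise ValueError(f"item {item}: rating {rating} is outside 1-5")
    return {
        factor: mean(responses[i] for i in items)
        for factor, items in FACTOR_ITEMS.items()
    }


# One hypothetical respondent (invented ratings, not study data).
example = {1: 4, 2: 3, 3: 5, 4: 4, 5: 3, 6: 2, 7: 3, 8: 5, 9: 4}
print(score_factors(example))
```

Averaging (rather than summing) keeps each factor on the original 1 to 5 scale, so factors with different numbers of items remain directly comparable.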

Cumulative final exam

The cumulative final exam is a portion of the course requirements; it includes 80 multiple-choice questions and four short essay questions targeting key concepts and theories from each of the 10 weekly modules. The final exam is worth 100 points and accounts for 20% of the final course grade.

Final course grades

Final course grades were based on criterion-based scoring of all required course components. Grades were based on weekly threaded discussions (30%), weekly homework assignments (30%), weekly quizzes (20%), and final exam (20%).
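The weighting scheme above amounts to a weighted sum of component scores. The Python sketch below assumes each component is expressed as a percentage from 0 to 100 (the 100-point final exam maps directly onto this scale). The function name `final_grade` and the example scores are hypothetical, and the conversion from percentage to letter grade is not described in the article, so it is omitted.

```python
import math

# Weights as stated in the course description.
WEIGHTS = {
    "discussions": 0.30,  # weekly threaded discussions
    "homework": 0.30,     # weekly homework assignments
    "quizzes": 0.20,      # weekly quizzes
    "final_exam": 0.20,   # cumulative final exam (100 points)
}

# Sanity check that the weights cover the whole grade.
if not math.isclose(sum(WEIGHTS.values()), 1.0):
    raise ValueError("grade weights must sum to 1")


def final_grade(percent_scores: dict[str, float]) -> float:
    """Combine component percentages (0-100) into a weighted course percentage."""
    return sum(weight * percent_scores[c] for c, weight in WEIGHTS.items())


# Hypothetical student: 90% discussions, 80% homework, 85% quizzes, 72/100 on the final.
print(round(final_grade({"discussions": 90, "homework": 80, "quizzes": 85, "final_exam": 72}), 2))
```

For the hypothetical student shown, 0.30 × 90 + 0.30 × 80 + 0.20 × 85 + 0.20 × 72 gives a course percentage of 82.4.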

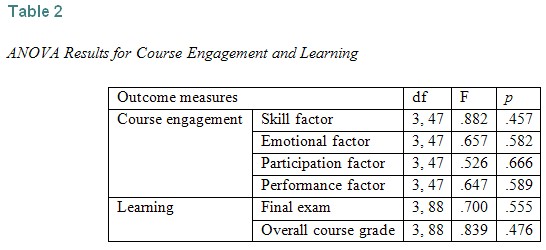

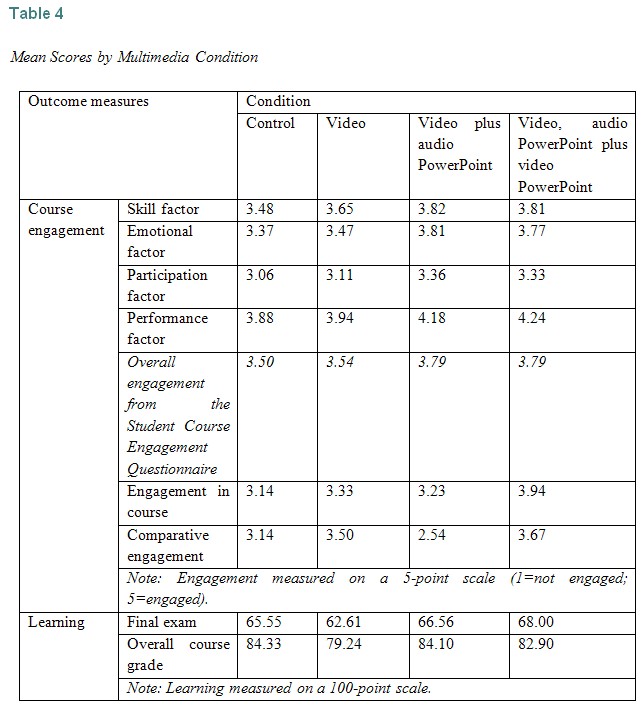

To examine potential differences in student engagement and learning as a function of instructor-personalized multimedia inclusion, one-way ANOVAs were conducted for each student engagement factor (skills, emotional, participation, and performance) as well as for the final exam. The results of these comparisons, as listed in Table 2, indicated no significant differences in course engagement or learning between any of the various levels of instructor-generated multimedia inclusion.
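A one-way ANOVA compares the variance between condition means with the variance within conditions, yielding an F statistic on (k - 1, N - k) degrees of freedom for k conditions and N students. The Python sketch below computes F from raw scores. The function name `one_way_anova` is illustrative, and the four groups of scores are invented purely to show the arithmetic; they are not the study's data. Obtaining a p-value additionally requires the F distribution, which a statistics library such as SciPy provides (`scipy.stats.f_oneway`).

```python
from statistics import mean


def one_way_anova(*groups: list[float]) -> tuple[float, int, int]:
    """Return (F, df_between, df_within) for a one-way between-groups ANOVA."""
    k = len(groups)
    n_total = sum(len(g) for g in groups)
    if k < 2 or n_total <= k:
        raise ValueError("need at least two groups and more observations than groups")

    group_means = [mean(g) for g in groups]
    grand_mean = mean(x for g in groups for x in g)

    # Between-groups sum of squares: spread of condition means around the grand mean.
    ss_between = sum(len(g) * (m - grand_mean) ** 2 for g, m in zip(groups, group_means))
    # Within-groups sum of squares: spread of individual scores around their own condition mean.
    ss_within = sum((x - m) ** 2 for g, m in zip(groups, group_means) for x in g)

    df_between, df_within = k - 1, n_total - k
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return f_stat, df_between, df_within


# Invented engagement scores for four conditions (illustration only).
print(one_way_anova([3, 4, 5], [4, 5, 6], [5, 6, 7], [4, 5, 6]))
```

In this invented example the condition means differ (4, 5, 6, 5), yet F = 2.0 on (3, 8) degrees of freedom; with so few scores per condition, mean differences of this size would not reach conventional significance, which illustrates how small, directionally consistent differences can still yield non-significant results.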

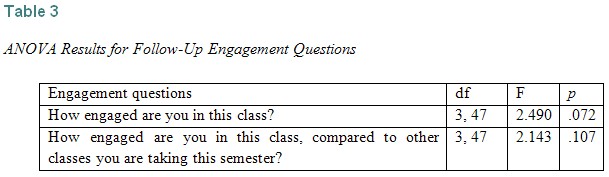

To further investigate potential differences in student engagement, follow-up questions were included with the Student Course Engagement Questionnaire. The follow-up questions were posed directly to students: “How engaged are you in this class?” and “How engaged are you in this class, compared to other classes you are taking this semester?” As shown in Table 3, analysis revealed no significant differences in students’ responses to these engagement indicators as a function of the level of instructor-personalized multimedia they experienced.

Although none of the statistical analyses revealed significant differences between the groups, mean scores on each of the student engagement factors do increase slightly in the hypothesized direction. As reported in Table 4, for all engagement factors, the mean engagement score is lowest in the control condition and slightly higher in the conditions including the instructor-personalized multimedia components. This pattern is also repeated in the follow-up question asking students to rate their engagement in the class; however, the pattern does not appear in students’ self-reported engagement in this class compared to other classes they are taking at the same time.

An examination of the mean scores also shows that, regardless of course section, students score highest on performance engagement (M = 4.09), with lower mean scores for skill engagement (M = 3.70), emotional engagement (M = 3.69), and participation engagement (M = 3.24). This focus on performance and course grades may be attributable to the nature of this introductory course; most students in the class (regardless of section) take this course as a general studies elective. Because the course is not directly relevant to their major, minor, or career/educational goals, students may be less engaged in learning the material and more focused on how the course meets the requirements of their larger educational plan. This interpretation is consistent with responses to a follow-up question that directly asked students, “If you had to choose between getting a good grade in this class or being challenged, which would you choose?”; 76% of students indicated that they would prioritize getting a good grade in the course over being challenged.

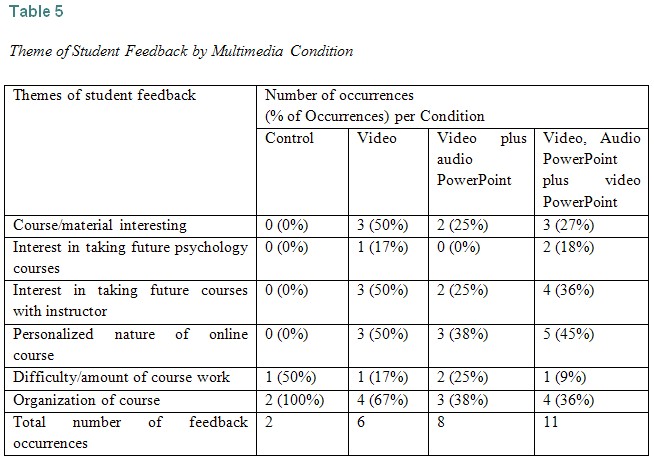

To further investigate potential differences in student engagement, unsolicited student feedback (received via email) and solicited student comments (received via open-ended course evaluation questions) were analyzed by theme. Across the four target sections, 27 qualitative feedback statements were available for analysis; the number of feedback statements by multimedia condition is shown in Table 5. An open-coding qualitative analysis (Strauss & Corbin, 1993) revealed six common themes: course/material interesting, interest in taking future psychology courses, interest in taking future courses with this online instructor, personalized nature of the online course, difficulty/amount of course work, and organization of the course. Table 5 provides an analysis of student feedback by theme and term; in many cases, a single feedback statement was coded into multiple categories.

While there was minimal feedback available in the control condition (only two feedback statements from the 20 students in the course), the available feedback mirrored the other conditions in expressing appreciation for the organization of the course as well as a belief that the course was difficult and/or contained excessive work for an introductory-level course. None of the feedback in the control condition mentioned a personal interest in the material, instructor, discipline, or online learning. The lack of student feedback in the control condition may itself indicate lower engagement; students who are less engaged in the course may be less likely to provide feedback about their experiences in the course.

Student feedback from the instructor-personalized multimedia conditions of the course included appreciation of the organization of the course and comments on its difficulty/workload, as well as acknowledgement that the course material was interesting and stimulated an increased interest in taking future psychology courses and/or future courses with this instructor. In addition, students in the instructor-personalized multimedia conditions were more likely to comment that the online course (or the online learning experience) was much more personal than they had expected or experienced in the past, as expressed, for example, by this student (in the video, audio PowerPoint plus video PowerPoint condition):

Honestly, I was very worried about this class when I decided to take it. I can’t tell you how Dr. M pulled my interest to the point where I want to minor now in Psychology. The online class was organized PERFECTLY. The one that made me feel that I was actually part of her class was posting from her webcam of her actually explaining her favorite things each week in detail and also her slide presentations with her voice-overs.

These views were echoed by three other students (all in the video, audio PowerPoint plus video PowerPoint condition):

Thanks for a great class! This is the first online class I have taken where I actually feel like I KNOW the instructor. I loved your presentations each week that made me feel like I was sitting right in the front row of your class. Even though you made us do a lot of work, I hope to take another course from you in the future.

I just wanted you to know that I originally took General Psychology as an elective, but now I am thinking about changing my major. I used to think psychology was all about mental illness and therapy; I had no idea of all the other fun topics studied by psychologists. Thanks for taking the time to talk to us (with all your videos and stuff) about your experiences as a psychologist.

How did you make those lectures where we could see a video of you while listening to you explain the PowerPoint? I really liked those and think that all online teachers should use them. They really helped to grab my attention and make all the readings more interesting.

The impact was again highlighted in these comments from two students (both in the video plus audio PowerPoint condition):

I just wanted to ask you if you would be willing to serve as a reference for me. I take all online classes and it is hard to get to know the professors well enough to ask for a reference. I really feel like I know you as well as any of the face-to-face teachers I had in the past. I don’t know if it is the videos or what, but your personality really came across in this class.

Loved your class (hated the final exam)! I never knew that psychology could be so interesting! I actually found myself yelling at my wife to come to the computer to watch the various little videos you made about your favorite topics in psychology.

As indicated by the open-ended student feedback (both solicited and unsolicited), students like the instructor-personalized multimedia components and believe they are an important part of their online learning experience. As such, the discrepancy between the null findings on the Student Course Engagement Questionnaire and the narrative student feedback raises a number of unanswered questions. Specifically, it is unclear why student feedback indicates increased engagement, yet quantitative measures fail to detect differences in engagement as a function of instructor-generated multimedia inclusion. This discrepancy highlights several directions for future research:

A final issue of concern when interpreting the results of this study, as is the case with much of the scholarship of teaching and learning, rests in its quasi-experimental design. Because this is classroom-based research and students were not randomly assigned to instructional conditions, many unmeasured factors could have influenced outcomes. Although nothing suggests systematic differences in student characteristics between terms, this remains an important consideration. Also, because the researcher was the course instructor, one must be cautious about subtle bias or influence. To help monitor for potential bias, a disciplinary colleague reviewed all courses at the conclusion of the study and noted no concerns.

The discrepancies between the quantitative and qualitative findings of this study highlight the complexity surrounding the appropriate use of multimedia within an online course. While the qualitative feedback indicates that students liked and benefitted from the instructor-personalized multimedia supplements, the quantitative data suggest that inclusion of instructor-personalized multimedia may have little impact on student engagement or learning in an online course.

Although there is a theoretical push for multimedia inclusion, integrating multimedia course enhancements can be extremely challenging in practice. It is important to know whether the time and monetary investment required to design, develop, and integrate multimedia is justified by student outcomes. As indicated by the qualitative results of this study, instructors who create and integrate personalized multimedia components in their courses may benefit from increased student satisfaction with the course. However, because the quantitative data indicate that instructor-generated multimedia components have little measurable impact on student engagement or learning, instructors should weigh the time and monetary investment necessary for personalized multimedia inclusion. As a general rule, online instructors are likely to benefit from developing and including personalized multimedia only when the investment required to do so is low.

The questions posed by this study should serve as a springboard for future research into the impact of multimedia inclusion on student engagement and learning. Little is known about student engagement in online courses at the post-secondary level; additional data are needed to advance our understanding of best practices in designing online courses that promote student engagement. Research on the impact of instructor-personalized multimedia on both learning and student engagement is necessary to help universities develop policies on course design expectations and to guide budget decisions concerning the investment of resources in the multimedia component of online course development (Simons, 2004).

Anderson, T., Rourke, L., Garrison, D. R., & Archer, W. (2001). Assessing teaching presence in a computer conferencing context. Journal of Asynchronous Learning Networks, 5(2), 1-17.

Bomia, L., Beluzo, L., Demeester, D., Elander, K., Johnson, M., & Sheldon, B. (1997). The impact of teaching strategies on intrinsic motivation. Champaign, IL: ERIC Clearinghouse on Elementary and Early Childhood Education. (ERIC Document Reproduction Service No. ED 418 925)

Burg, J., Wong, Y., & McCoy, L. (2004). Developing a strategy for creating and assessing digital media curriculum material. The Interactive Multimedia Electronic Journal of Computer-Enhanced Learning, 6(1).

Clark, R. C., & Mayer, R. E. (2002). E-Learning and the science of instruction: Proven guidelines for consumers and designers of multimedia learning. San Francisco: Jossey-Bass.

Doolittle, P. (2001). Multimedia learning: Empirical results and practical applications. Retrieved August 5, 2005, from http://blogs.usask.ca/multimedia_learning_theory/multimedia.pdf

Dunn, R., Dunn, K., & Price, G. E. (1984). Learning style inventory. Lawrence, KS: Price Systems.

Fleming, N. D., & Mills, C. (1992). Not another inventory, rather a catalyst for reflection. To Improve the Academy, 11, 137-149.

Guthrie, J. T., & Anderson, E. (1999). Engagement in reading: Processes of motivated, strategic, knowledgeable, social readers. In J. T. Guthrie & D. E. Alvermann (Eds.), Engaged reading: Processes, practices, and policy implications (pp. 17–45). New York: Teachers College Press.

Handelsman, M. M., Briggs, W. L., Sullivan, N., & Towler, A. (2005). A measure of college student course engagement. Journal of Educational Research, 98, 184-191.

Harris, C. (2002, Summer). Is multimedia-based instruction Hawthorne revisited? Is difference the difference? Education, 122(4).

Hede, T., & Hede, A. (2002). Multimedia effects on learning: Design implications of an integrated model. In S. McNamara & E. Stacey (Eds.), Untangling the web: Establishing learning links. Proceedings ASET Conference 2002, Melbourne, Australia. Retrieved July 22, 2008, from http://www.ascilite.org.au/aset-archives/confs/2002/hede-t.html

Jonassen, D. H. (2000). Computers as mindtools for schools: Engaging critical thinking. Upper Saddle River, NJ: Merrill/Prentice Hall.

Kenny, G., Kenny, D., & Dumont, R. (1995). Mission and place: Strengthening learning and community through campus design. Oryx/Greenwood.

Kolb, D. A. (1984). Experiential learning. Englewood Cliffs, NJ: Prentice Hall.

Maddux, C., Johnson, D., & Willis, J. (2001). Educational computing: Learning with tomorrow's technologies. Boston: Allyn and Bacon.

Mandernach, B. J. (2006). Confessions of a faculty telecommuter: The freedom paradox. Online Classroom, 3, 7-8.

Mandernach, B. J. (2008). Comparative impact of commercially-produced and instructor-generated videos on student engagement. Manuscript in preparation.

Mayer, R. E. (1997). Multimedia learning: Are we asking the right questions? Educational Psychologist, 32(1), 1-19.

Mayer, R. E. (2001). Multimedia learning. Cambridge, UK: Cambridge University Press.

Mayer, R. E., & Anderson, R. B. (1992). The instructive animation: Helping students build connections between words and pictures in multimedia learning. Journal of Educational Psychology, 84, 444-452.

Mills, D. W. (2002). Applying what we know: Student learning styles. Retrieved April 7, 2009, from http://www.csrnet.org/csrnet/articles/student-learning-styles.html

Moreno, R., & Mayer, R. (2000). A learner-centered approach to multimedia explanations: Deriving instructional design principles from cognitive theory. The Interactive Multimedia Electronic Journal of Computer-Enhanced Learning, 2(2).

Richardson, J. C., & Swan, K. (2003). Examining social presence in online courses in relation to students’ perceived learning and satisfaction. Journal of Asynchronous Learning Networks, 7(1), 68-88.

Ruhe, V. (2006). A toolkit for writing surveys to measure student engagement, reflective and responsible learning. Retrieved July 22, 2008, from http://www1.umn.edu/innovate/toolkit.pdf

Schlecty, P. (1994). Increasing student engagement. Presentation at the Missouri Leadership Academy.

Schwartz, J. E., & Beichner, R. J. (1999). Essentials of educational technology. Boston: Allyn and Bacon.

Short, J., Williams, E., & Christie, B. (1976). The social psychology of telecommunications. London: John Wiley and Sons.

Simons, T. (2004, September). The multimedia paradox. Presentations Magazine. Retrieved August 5, 2005, from http://www.presentations.com/presentations/trends/article_display.jsp?vnu_content_id=1000734183

Skinner, E. A., Wellborn, J. G., & Connell, J. P. (1990). What it takes to do well in school and whether I've got it: The role of perceived control in children's engagement and school achievement. Journal of Educational Psychology, 82, 22-32.

Sternberg, R. J. (1997). Thinking styles. New York: Cambridge University Press.

Strauss, A., & Corbin, J. (1990). Basics of qualitative research: Grounded theory procedures and techniques. Newbury Park, CA: Sage Publications.