Denise K. Comer, Charlotte R. Clark, and Dorian A. Canelas

Duke University, USA

This study aimed to evaluate how peer-to-peer interactions through writing impact student learning in introductory-level massive open online courses (MOOCs) across disciplines. This article presents the results of a qualitative coding analysis of peer-to-peer interactions in two introductory level MOOCs: English Composition I: Achieving Expertise and Introduction to Chemistry. Results indicate that peer-to-peer interactions in writing through the forums and through peer assessment enhance learner understanding, link to course learning objectives, and generally contribute positively to the learning environment. Moreover, because forum interactions and peer review occur in written form, our research contributes to open distance learning (ODL) scholarship by highlighting the importance of writing to learn as a significant pedagogical practice that should be encouraged more in MOOCs across disciplines.

Keywords: Open learning; higher education; online learning; massive open online courses

Massive open online courses (MOOCs) could be poised to transform access to higher education for millions of people worldwide (Waldrop, 2013). From a pedagogical standpoint, the sheer scale of these courses limits the extent of student-instructor interpersonal contact, which raises a central question: how does a reliance on peer interaction and review affect student learning? Student-student interaction, once called “the neglected variable in education” (Johnson, 1981), is now recognized as a fundamental high-impact practice in education (Salomon & Perkins, 1998; Chi, 2009). Humans interact through multiple modes: in person, but also increasingly through long-distance digital communication such as telephone, email, social media websites, online chats and forums, video conferencing, and blogs. All of these modes are emerging in modern pedagogies. To this end, deWaard et al. cite “dialogue as a core feature of learning in any world, whether face-to-face or digital” (deWaard et al., 2014). In fact, in face-to-face and smaller-scale online learning contexts, peer-to-peer dialogues have been shown to be critical to developing deep conceptual understanding (Chi, 2009). In MOOCs, peer-to-peer dialogues occur primarily through writing: in forums and via peer-reviewed assignments.

MOOCs, because of their scale, offer a significant opportunity for peer-to-peer interaction in the form of dialogic, networked learning experiences (Clarà & Barberà, 2013). However, also because of their scale and the diversity of student learners enrolled, MOOCs present substantial challenges in this domain (Kim, 2012). Some scholars have suggested that MOOCs limit or underestimate the importance of interpersonal engagement for learning (Kolowich, 2011; Kim, 2012; Pienta, 2013). Questions about how or whether to facilitate interpersonal engagement in MOOCs have particular importance since the majority of MOOC learners are adults (Guo & Reinecke, 2014). Research maintains that constructivist approaches to learning are especially effective with adult learners (Huang, 2002; Ruey, 2010). It is within this context that we endeavor to examine one of the key questions concerning the efficacy of MOOCs: How can interactive learning be promoted and assessed in this context?

Any exploration of this question, though, also demands an inquiry into writing. The primary mechanisms for student interaction in MOOCs occur through writing in course forums and peer-reviewed assignments. The act of writing has been identified as a high-impact learning tool across disciplines (Kuh, 2008), and efficacy in writing has been shown to aid in access to higher education and retention (Crosling, Thomas, & Heagney, 2007). Writing has also been shown to be effective in the promotion of learning and student success in relatively large enrollment face-to-face courses (Cooper, 1993; Rivard, 1994; Reynolds et al., 2012). Research suggests that writing instruction in online settings can provide enhanced learning experiences and opportunities for pedagogical reflection (Boynton, 2002). Moreover, across educational disciplines and compared to face-to-face dialogues in time-limited classroom settings, written, time-independent online discourse has been shown to lead to more reflective contributions by participants (Hawkes, 2001; Bernard, 2004). Research also suggests that written dialogue in online courses contributes to the development of students’ critical reasoning skills (Garrison, 2001; Joyner, 2012).

Given the complex ways in which writing intersects with participant interaction in MOOCs, it is of crucial importance to examine how writing impacts the MOOC learning experience. Writing, in fact, may be a key dimension for forging intersections between MOOCs and more traditional higher education contexts. That is, amidst ongoing debates about the promise or threat of MOOCs to higher education more broadly, perhaps writing offers a point of reciprocal research, where we can learn more about the role of writing in learning across higher education contexts, from open distance learning to face-to-face settings and all the hybrid and shifting contexts in between.

Herein, we examine two separate introductory-level MOOCs: one in the humanities, English Composition I: Achieving Expertise (March 18, 2013-June 10, 2013), taught by Denise Comer through Duke University and Coursera, and one in the natural sciences, Introduction to Chemistry (January 20, 2014-April 6, 2014), taught by Dorian Canelas through Duke University and Coursera. Although at first glance these courses might seem unrelated, common threads weave them together into a research project: both specifically target introductory students; focus on critical thinking and writing-to-learn to develop expertise; foster key skills for access to fields in higher education; and employ a combination of video lectures and quizzes along with formal writing assignments and informal written communication via forums. We specifically chose to conduct research across disciplines because we wanted to contribute to emerging MOOC literature that examines how disciplinarity impacts MOOC pedagogy and learning outcomes (Adamopoulos, 2013; Cain, 2014).

The main objective of this study was to evaluate how peer-to-peer interactions through writing impact student learning in introductory-level MOOCs across disciplines. Specifically, we explored the following research questions:

Our research draws on several related strands of scholarship: writing-to-learn theory, online writing theory; science, technology, engineering, and mathematics (STEM) pedagogy; and emerging MOOC research. Our research contributes to scholarship on open distance learning (ODL) by examining the role of writing as a high impact educational practice in MOOCs across disciplines.

Writing-to-learn is a pedagogy that actively involves students across disciplines in the construction of their own knowledge through writing (Sorcinelli & Elbow, 1997; Carter, 2007). Peer review makes this process not only active but interactive. Student-student and student-faculty dialogues have been shown to be critical to developing a community of scholarship for enhanced learning and deep conceptual understanding among learners (Chi, 2009; Johnson, 1981). The capabilities of MOOCs make it possible to bring this active-constructive-interactive framework (Chi, 2009) to thousands of students at one time. Indeed, emerging research suggests that MOOCs have the capacity to create unique “networked learning experiences” with unprecedented opportunities for collaboration, interaction, and resource exchange in a community of learners (Kop, Fournier, & Mak, 2011; Siemens, 2005). And, also in keeping with these findings, research has found evidence that the most successful MOOC students are typically heavy forum users (Breslow, 2013).

Given that MOOCs promise to increase access to postsecondary education (Yuan & Powell, 2013), we are particularly interested in how peer-to-peer interactions through writing in introductory-level MOOCs impact the learning outcomes for less academically advanced and/or under-resourced learners. Although research has also indicated that MOOCs are not yet reaching less academically prepared students (Emanuel, 2013), we endeavor to learn how less academically prepared students can best learn in these introductory-level MOOCs. Research suggests that less well-prepared students can behave more passively in academic settings, relying on memorization and imitation as strategies for learning (Mammino, 2011). This has been shown to arise at least partly from lack of comfort with the use of language, particularly if trying to communicate in a non-native language (Mammino, 2011). Research in developmental writing suggests that early emphasis on writing in a student’s academic career can improve retention and academic performance (Crews & Aragon, 2004).

Learning more about how peer-to-peer interactions through writing impact retention and academic performance is especially critical in the context of STEM. Research suggests that the greatest loss of student interest in STEM coursework occurs during the first year of college study (Daempfle, 2004). Scholarship has found that peer interactions in introductory-level science courses, especially through writing, have in some contexts doubled student retention rates in STEM disciplines (Watkins & Mazur, 2013). Writing to learn has been used extensively in chemistry and other science disciplines and has been shown to help students confront and resolve several key barriers to and misconceptions about effective science learning (Pelaez, 2002; Vázquez, 2012; Reynolds et al., 2012). More specifically, writing with peer review has been shown to improve student performance, communication, and satisfaction even in large enrollment undergraduate chemistry courses (Cooper, 1993). We are curious to understand more about how these positive attributes of peer-to-peer interactions through writing will transfer to the MOOC environment and what impact, if any, they may have on student learning and retention in introductory science.

Our research involved intensive qualitative data coding from each MOOC using NVivo™ qualitative research software. Coding was accomplished by a team of 10 trained coders (doctoral students, post-doctoral fellows, faculty) during a five-day coding workshop from 11 March 2014 to 14 March 2014. The workshop was designed and led by two researchers in the social sciences at Duke University who primarily work with qualitative methods and are also authorized QSR International trainers for NVivo. We estimate that coders spent about 175 cumulative hours coding during the week. Below we provide more details about our methods.

Prior to the workshop, we developed a coding protocol, with the assistance of a doctoral student and postdoctoral scholar in developmental psychology. The protocol included nodes for such items as affect, length of post, attitude, learning objectives, student challenges, and elements of writing (for full coding protocol, see Appendix A).

During the workshop, coders were first led through processes to become familiar with the structure of MOOCs in general, and our study MOOCs in particular, and with important NVivo components, including data capture, import, and coding to themes. Second, coders were introduced to the pre-designed project file and node structure, and leaders oversaw use of the protocols by team members. After this step, based on coding of the same data sources by team members, leaders examined inter-rater reliability and made adjustments to the team’s work. Third, coders worked individually on various data sources as assigned. Twice a day, time was taken for team discussion, and leaders were present at all times during the coding workshop to answer individual questions.

When introducing the node structure, the coding workshop leader walked all coders through each node and its planned use. Subsequently, teams of three coders coded the same two documents to the node structure. This led to assessment of inter-rater reliability and team discussion. At several points node definitions and/or structure were discussed as a whole group.
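The article does not say which agreement statistic was used to assess inter-rater reliability. As one illustration only, the sketch below computes Cohen's kappa for each pair of coders in a three-person team. The coder names and labels are invented for the example, and treating each text segment as receiving exactly one node is a simplifying assumption.

```python
from collections import Counter
from itertools import combinations


def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two coders who each assigned one label per segment."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("both coders must label the same non-empty set of segments")
    n = len(labels_a)
    # Observed agreement: share of segments given the same label by both coders.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Chance agreement: product of each coder's marginal label proportions.
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    expected = sum(freq_a[label] * freq_b[label] for label in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both coders used a single identical label throughout
    return (observed - expected) / (1.0 - expected)


# Hypothetical data: three coders label the same six segments by attitude.
codings = {
    "coder_1": ["positive", "neutral", "neutral", "negative", "positive", "neutral"],
    "coder_2": ["positive", "neutral", "positive", "negative", "positive", "neutral"],
    "coder_3": ["positive", "neutral", "neutral", "negative", "neutral", "neutral"],
}

pairwise_kappa = {
    (a, b): cohens_kappa(codings[a], codings[b])
    for a, b in combinations(codings, 2)
}

for pair, kappa in pairwise_kappa.items():
    print(pair, round(kappa, 2))
```

Low pairwise values on a given node would be a natural prompt for the kind of team discussion and node redefinition described above.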

Many of the nodes are what Maxwell (2005) refers to as “organizational” or “substantive” nodes, which are more topical, descriptive, or straightforward to interpret than “theoretical” coding, which is more abstract (p. 97). Because most of the nodes have literal definitions (with a few exceptions, to which we paid close attention), we believe little room existed for coders to differ substantially from each other on inferential coding of text.

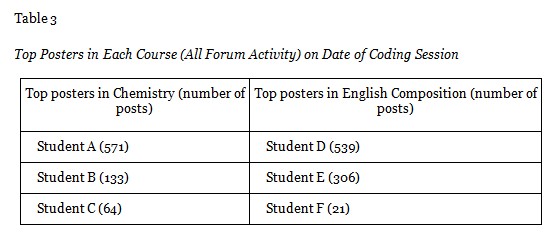

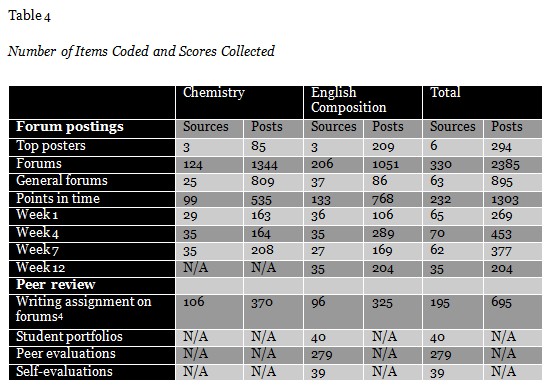

Reliability was also established through having coders collect and evaluate different types of data from different disciplines throughout the coding workshop (for a summary of items coded, please see Table 4). This form of triangulation, called “investigator triangulation” (Denzin, 2009, p. 306), involves multiple researchers in the data collection and analysis process, and the “difference between researchers can be used as a method for promoting better understanding” (Armstrong et al., 1997, p. 597).

Finally, we spent the second half of Day Five of the coding workshop having coders review nodes in the merged file for coding inconsistencies. Various coders were given a family of nodes to open and review, using NVivo queries to consider the consistency of coding that they found.

We coded data from two different areas of the MOOCs: discussion forums and peer assessments.

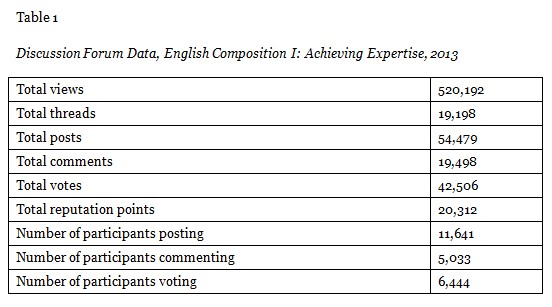

The following data (Tables 1 and 2) provide a sense of the total discussion forum volume for these courses, from which we culled our sample. Please see Appendix A for more definitions and descriptive details.

From this total volume, we coded a sampling of two types of discussion forum data.

We coded 35 full discussion forum threads in Weeks One, Four, and Seven of both courses. In addition, we coded 35 full threads from Week 12 for English Composition (the Chemistry course was not 12 weeks long). We also coded general forum posts for both courses.

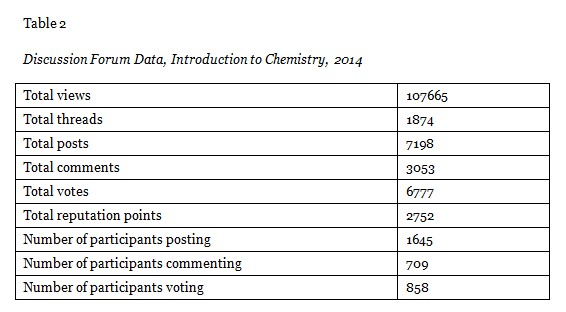

We captured all activities of the top three posters in each of the two courses and coded a sample of these posts. (See Table 3 for top three posters in each course and statistics.)

In addition to coding data from discussion forums, we also coded data from peer assessments in each course. Students provided feedback on other students’ writing for both courses. We did not code the assignments themselves.

In Chemistry, this feedback was located in a specially designated open forum for peer review of a writing assignment. Students in the Chemistry class submitted an essay on a chemistry-related topic of their choice to the peer-review tool (see Appendix B). Coursera then randomly assigned each submission to be read and commented upon by two peers according to a rubric (see Appendix C). After the first student had reviewed a peer’s essay by entering their feedback as written comments, Coursera automatically populated a designated forum with the essay and anonymous peer review, whereupon any additional anonymous peer reviews would appear over time and more students could read and comment on each essay. Seven hundred and fourteen students submitted this assignment and received peer feedback. We coded evaluations on 120 submissions (16.8 percent of the total volume of submissions), randomly selected by capturing every 6th submission with correlating feedback on the Chemistry peer assessment forum.
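Capturing every sixth submission is a systematic selection rule rather than a simple random draw. A minimal sketch of that rule follows, assuming the submissions are available as an ordered list; the integer IDs are placeholders, not real data, and the function name is invented for this example.

```python
def every_kth(items, k, start=None):
    """Systematic sample: take every k-th item, beginning with item number `start`.

    `start` is 1-based and defaults to k, so the k-th, 2k-th, ... items are kept.
    """
    if k < 1:
        raise ValueError("k must be a positive integer")
    first = k if start is None else start
    if not 1 <= first <= k:
        raise ValueError("start must be between 1 and k")
    return items[first - 1::k]


# Placeholder IDs standing in for the 714 Chemistry submissions.
submissions = list(range(1, 715))
sample = every_kth(submissions, k=6)

print(len(sample))  # number of submissions selected
print(round(100 * len(sample) / len(submissions), 1))  # percent of all submissions
```

A strict every-sixth pass over 714 items selects 119 of them, close to the 120 reported here. The article does not describe how the starting point was chosen.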

We reviewed three different types of English Composition peer-assessment data.

1. Peer feedback on a brief introductory essay, “I Am A Writer,” posted to a specially designated open forum (see Appendix D). This first introductory writing activity, designed to facilitate conversations about writing among the community of learners, was conducted on the forums as opposed to through the formal peer-assessment mechanism. Thus, students could voluntarily respond to as few or as many peers’ submissions as they wanted. Approximately 8,000 students posted the “I Am A Writer” assignment. We chose to capture feedback on 80 peer responses, which amounts to feedback on about 1% of the submissions. This was roughly equivalent to taking the first submission from each page of posts on the designated “I Am A Writer” forum for a random sample.

2. Peer feedback provided through the formal peer-assessment mechanism. For each of the four major writing projects in English Composition (see Appendix E), students submitted a draft and a revision to the formal peer-assessment mechanism in Coursera. For each project, Coursera randomly distributed each student’s draft submission to three peers in order to receive “formative feedback” according to a rubric. Then, each student’s final version was randomly distributed to four other peers in order to receive “evaluative feedback” according to a rubric.

Formative and evaluative peer feedback rubrics included a series of specific questions as well as several open-ended questions (see Appendix F). We only coded data from questions that seemed relevant to peer-to-peer interaction, namely,

These peer-assessment submissions and feedback were private for students, and so in this case, as required by Duke University’s Institutional Review Board, the only student submissions evaluated were those from students who approved our use of these data. Throughout the course, students provided 14,682 separate pieces of project peer-assessment feedback. Approximately 250 students gave permission for their work to be included in this research process. We coded a random sample of the feedback provided by 50 of these students, which amounted to 342 pieces of project peer-assessment feedback.

These data enabled us to look at feedback on a student-by-student basis (as opposed to assignment by assignment).

3. Comments about peer feedback written in final reflective essays. Students in English Composition compiled portfolios of their writing at the end of the course. These portfolios consisted of drafts and revisions of each of the four major projects as well as a final reflective essay in which students made an argument about their progress toward the learning objectives of the course (see Appendix G). One thousand four hundred and fifteen students completed final reflective essays; approximately 250 students gave permission for their final reflective essays to be included in the research process. We coded comments about their experiences providing and receiving peer feedback in 48 of these final reflective essays.

Table 4 shows the total number of items coded for each type of source.
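To put the peer-assessment sample sizes described above in proportion, the short calculation below expresses each coded sample as a share of the total it was drawn from. The figures are the ones reported in the text (the 8,000 is itself an approximation); the dictionary keys are descriptive labels chosen for this sketch.

```python
# (items coded, total available), as reported in the text above.
samples = {
    "Chemistry peer-assessment submissions": (120, 714),
    "'I Am A Writer' submissions with coded feedback": (80, 8000),  # total is approximate
    "English Composition project peer feedback": (342, 14682),
    "English Composition final reflective essays": (48, 1415),
}

coverage = {
    name: round(100 * coded / total, 1)
    for name, (coded, total) in samples.items()
}

for name, percent in coverage.items():
    print(f"{name}: {percent}% coded")
```

The resulting shares range from about 1% to about 17%, which is worth keeping in mind when comparing results across the two courses.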

Our research included several limitations. A primary limitation is that not all enrolled students participated by posting in the forums, so any analysis of forum posts will only include data from those students who felt motivated to post. Additional limitations include the following:

After the coding was completed, we ran several queries through NVivo. Below are several of the most significant results.

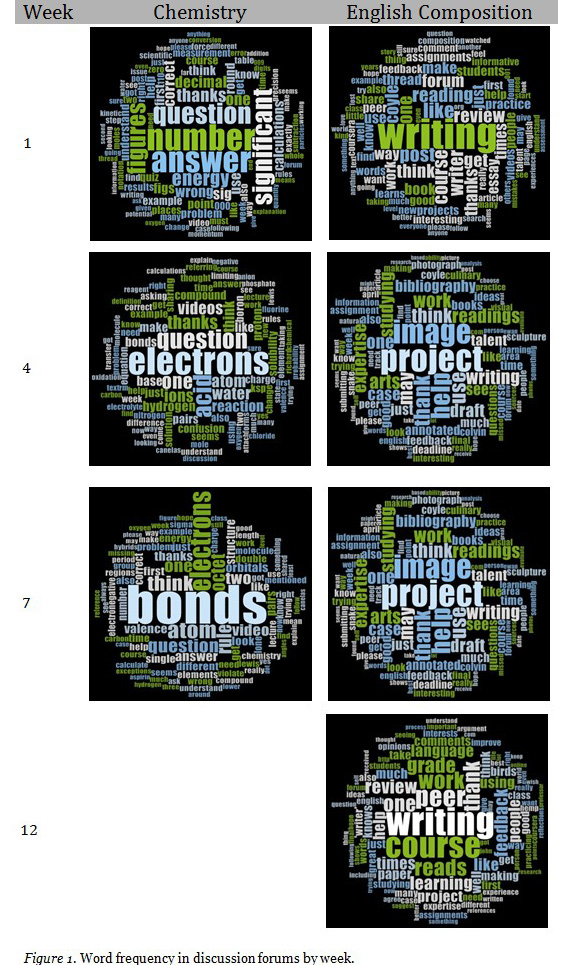

Figure 1 illustrates the 100 most common words in weekly forums in each course. The larger the word, the more commonly it appeared in the forum.
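A word cloud of this kind rests on a simple frequency count. The sketch below is not NVivo's word-frequency query; it is a rough stand-in built on Python's standard library, with an invented stop-word list and two made-up forum posts as input.

```python
import re
from collections import Counter

# Hypothetical stop words; NVivo applies its own list, which is not reproduced here.
STOP_WORDS = {"the", "a", "an", "and", "of", "to", "in", "is", "i", "it", "for", "on"}


def most_common_words(posts, limit=100):
    """Return up to `limit` (word, count) pairs, most frequent first."""
    counts = Counter()
    for post in posts:
        # Lowercase and keep runs of letters (apostrophes allowed inside words).
        words = re.findall(r"[a-z]+(?:'[a-z]+)*", post.lower())
        counts.update(word for word in words if word not in STOP_WORDS)
    return counts.most_common(limit)


# Made-up posts, for illustration only.
posts = [
    "The Bohr model explains the hydrogen atom.",
    "Is the hydrogen atom always in the ground state in the Bohr model?",
]

top_words = most_common_words(posts, limit=3)
print(top_words)
```

In a word cloud, each word's display size would then be scaled by its count.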

These results illustrate visually that students were staying on topic by primarily engaging in discussions that paralleled the weekly content of the courses. For example, in Chemistry, the syllabus has the following descriptions for content in weeks six and seven, respectively:

Week 6: Introduction to light, Bohr model of the hydrogen atom, atomic orbitals, electron configurations, valence versus core electrons, more information about periodicity.

Week 7: Introduction to chemical bonding concepts including sigma and pi bonds, Lewis dot structures, resonance, formal charge, hybridization of the main group elements, introduction to molecular shapes.

Likewise illustrating the ways in which the discussion forums stayed on topic to the course content, the syllabus for English Composition includes in Weeks 6 and 7 the following text:

What is an annotated bibliography?

Peer Feedback: Project 2 Image Analysis

Sample Case Studies

Clearly, the overwhelming majority of peer-to-peer discussions in the forums for the English Composition and Chemistry courses studied herein are directly related to the course content. This observation offers a counterpoint to the observation by other researchers that “a substantial portion of the discussions [in MOOC forums] are not directly course related” (Brinton et al., 2013) and these data qualify the conclusion that “small talk is a major source of information overload in the forums” (Brinton et al., 2013).

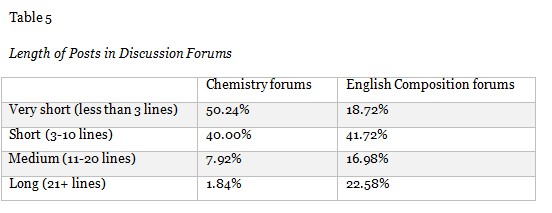

Table 5 shows post length in the forums. In general, Chemistry students’ posts were shorter than those of the English Composition students. The Chemistry forum included a much higher percentage of posts that were coded as very short or short; over 90% of the posts fell into these two categories. On the other hand, English Composition forums also included many posts that were coded as very short or short (approximately 60%), but nearly 40% of the posts in this course were coded as medium or long. While Chemistry forums had about 2% of posts coded as long, English Composition had nearly 23% coded as long.

Attitude is well established as being critically important to learning: “In order for student-student interaction to have constructive impact on learning, it must be characterized by acceptance, support, and liking” (Johnson, 1981, p. 9). Research indicates that learners’ conceptions of and attitudes toward learning have a deep impact on the efficacy of online peer assessment and interactions (Yang & Tsai, 2010).

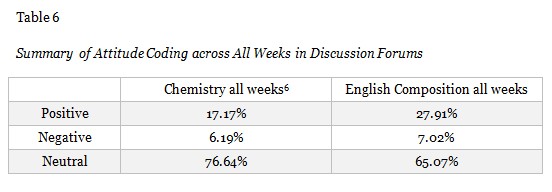

Every post, or part of a post, if warranted, was coded as either positive, negative, or neutral (Table 6). Attitude of student writing in the forums was tracked as a function of time in the courses. Considering all coded weeks, the majority of content coded in student posts was neutral in attitude in both courses, and a relatively small percentage was coded as negative in both courses. The attitude expressed in student posts was generally more positive than negative in both courses: 2.8 times more positive than negative in Chemistry and 3.9 times more positive than negative in English Composition.

Examples of posts coded to positive attitude:

“I am starting to understand why I am studying on a Friday evening for the first time in my entire life. :)”

“I appreciate all the hard work that my reviewers went to . . . thank you!”

Example of a post coded to negative attitude:

“Go for it (un-enrole) [sic]- [two names removed]. You both know too much already and you obviously have nothing to gain from this course. You’ll be doing us “stupid” students a favor.”

The tenor of posts across all weekly forums was coded as slightly more positive in English Composition than in Chemistry (27% of all words coded in English weekly forums compared to 17% in Chemistry). Both Chemistry and English were coded as having roughly the same amount of negative comments (6% and 7% respectively). Note that we also endeavored to distinguish attitude from types of writing critique. One could have a positive attitude while providing constructive critique for writing improvements, for example. The greater degree of positivity than negativity in the forums suggests that the forums can provide a meaningful mechanism for establishing a learning community that has the potential to enhance students’ learning gains and course experience.
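The positive-to-negative ratios reported above (2.8 for Chemistry and 3.9 for English Composition) follow directly from these percentages. A quick check, using only the figures given in the text:

```python
# Share of coded words in the weekly forums, in percent, as reported in the text.
attitude_share = {
    "Chemistry": {"positive": 17, "negative": 6},
    "English Composition": {"positive": 27, "negative": 7},
}

ratios = {
    course: round(share["positive"] / share["negative"], 1)
    for course, share in attitude_share.items()
}

print(ratios)  # {'Chemistry': 2.8, 'English Composition': 3.9}
```

Because the published percentages are themselves rounded, the agreement is approximate rather than exact.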

Affective and emotional factors are known to play a role in the success of any pedagogical practice (Gannon & Davies, 2007). Research shows that affect impacts students’ response to feedback on their writing (Zhang, 1995). Affect and emotions have also been shown to be particularly important in engagement in science-related activities, and this, in turn, has been suggested as a link to improving public science literacy (Lin, 2012; Falk, 2007). Since MOOCs may be considered a pathway to increasing public understanding of scientific information and development of broad-based efficacy in essential skills such as writing, we were interested in how affect and emotion emerged in the discussion forums.

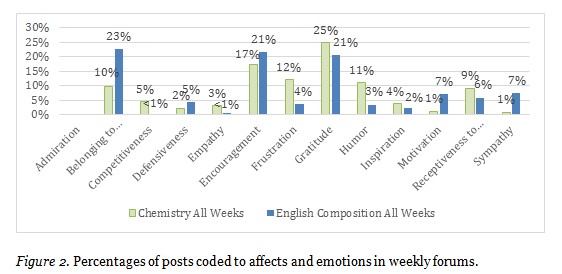

Figure 2 shows the result of queries to identify the coded affects and emotions in combined data from both courses in the weekly forums (see Appendix A for a list of all affect/emotion nodes).

We coded to distinguish between attitude, as an evaluation, and affect, as an expression of feeling or emotion. Of course, students often expressed both an attitude and an affect, and in those cases, we coded to both types of nodes, but text was only coded to affect when appropriate. For example, the first quote below would be coded both to negative attitude and to the affect/emotion of “frustration”, whereas the second would be coded only to negative attitude.

Coded to both negative and frustration: “I haven’t figured this one out either, or any other similar equation for that matter. I am getting really frustrated.”

Coded to negative, but not frustration: “I don’t believe everyone watched and actually listened to the course instructor’s direction on peer feedback. I doubt if anyone taking this course is a writing “Einstein” (genius).”
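One way to picture this distinction is that each text segment carries exactly one attitude code and zero or more affect codes. The sketch below models the two quotations above that way, abridging their text. The class and field names are invented for illustration and do not reflect NVivo's internal data model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CodedSegment:
    text: str
    attitude: str                      # "positive", "negative", or "neutral"
    affects: frozenset = frozenset()   # empty when no feeling is expressed


segments = [
    CodedSegment(
        text="I am getting really frustrated.",
        attitude="negative",
        affects=frozenset({"frustration"}),
    ),
    CodedSegment(
        text="I doubt if anyone taking this course is a writing 'Einstein' (genius).",
        attitude="negative",
    ),
]

# Segments coded to negative attitude AND to the affect "frustration".
both = [s for s in segments if s.attitude == "negative" and "frustration" in s.affects]
# Segments coded to negative attitude with no affect code at all.
attitude_only = [s for s in segments if s.attitude == "negative" and not s.affects]

print(len(both), len(attitude_only))
```

Keeping the two kinds of code separate is what allows a query to count negative attitude without also counting frustration.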

“Gratitude” and “encouragement” were in the top three of affects coded in discussion forum posts for both Chemistry and English Composition. Text coded to these affects ranged from simple phrases, such as “Thank you for your insights” or “I do think your efforts are praiseworthy,” to lengthier posts:

“Do not give up! It can’t always be easy. Believe me or not I do some review before the quizzes and I have not yet reached 100%. Some of the questions are tricky! Try hard. Ask for help on the forums. You’ll make it! :)”

In the English Composition course, “Belonging to community” was the most frequently coded affect. Text coded to this affect included the following types of posts:

“I believe most learners here are also not expert writers, just like you and me. So let’s just keep writing and keep improving together, okay?”

“Most of the time, I feel like I’m an individual learner, but when I see the discussions, answers, and so on, I feel like there is someone who is doing something with me also, so I feel sometimes a group member.”

“I’ll hope we can interact, learn and share knowledge together.”

In Chemistry, “frustration,” “humor,” and “belonging” were frequently coded affects:

“I am so confused about how to determine the protons and electrons that move and create different reactions. So frustrated.”

“I got strange looks from people who don’t think that a sleep-deprived working single mother should be giggling at chemistry at 2am.”

“I’ve learnt so much from you all, and I know I can come with any question no matter how trivial.”

While some writing was coded to “competitiveness” (for example, “I do not want to sound blatant or arrogant but I would expect it to be more challenging”), it was not a particularly prevalent affect. Rather, in both classes, students often expressed receptiveness to peer feedback or critique of their work.

“I hope someone will correct anything that is wrong about what I have just written.”

“Oh right, I didn’t notice it was in solid form when I answered! Thanks!”

“But the feedback from peers, critical, suggestive and appreciative, made it possible for me to improve upon my shortcomings and garner for myself scores of three 5’s and a 5.5. Am I happy? I am indebted.”

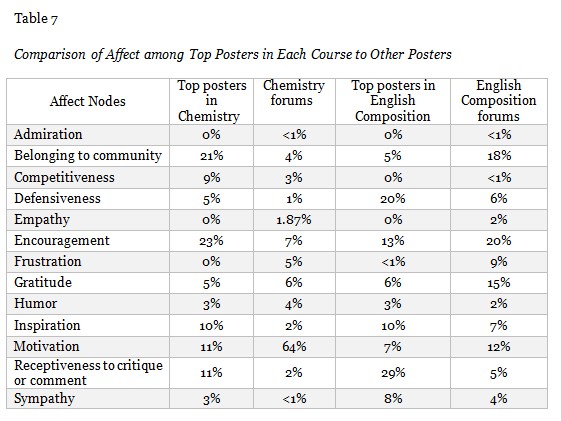

In addition to the weekly forums which were set up by the course instructors, each course also contained other forums including one called “General Discussion.” Table 7 compares the affect of top posters in each course to general discussion forum posters.

In Chemistry, the top posters most strongly expressed encouragement (23% of the words coded to affect), a feeling of belonging to the community (21%), and motivation (11%). Comparatively, other posters in the Chemistry general discussion forum heavily expressed motivation (64%), with the next most commonly expressed affect being gratitude (6%). The top posters in Chemistry were also more frequently coded as being receptive to critiques (11%) than the general posters (2%).

Similarly, in English, the top posters in the general discussion forum were much more frequently coded as being receptive to critiques of their work by peers (29%) than general posters (5%). The top posters also much more frequently expressed defensiveness (20%) than general forum posters (6%). Posts in the forums were most frequently coded as expressing encouragement (20%), belonging to the community (18%), and gratitude (15%).

One criticism of MOOCs is that assessment of student learning can be difficult when relying on multiple-choice quizzes (Meisenhelder, 2013). Many MOOCs, however, have much more versatile assignment types and answer formats available (Balfour, 2013; Breslow et al., 2013). Writing in MOOCs—whether through formal writing assignments, short-answer quizzes, or discussion-forum dialogue—can offer a strong opportunity for students to gain in learning objectives and for researchers to assess student learning (Comer, 2013). Some prior literature has even suggested that people can be more reflective when their engagement is via online writing than in face-to-face interaction (Hawkes, 2001).

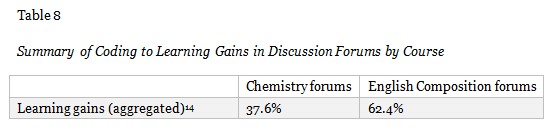

Prior research reveals that through forum writing and peer assignment exchanges, students could be viewed as moving through phases of practical inquiry: triggering event, exploration, integration, and resolution (Garrison, Anderson, & Archer, 2001). Discussion forums in particular offer a rich opportunity for examining student learning gains. Learning gains can be probed by analyzing student dialogue in the discussion forums to evaluate the nature and quality of the discourse. Through our coding of discussion forum posts, we were able to gain insights into student learning gains. Some students enthusiastically post about their learning experiences. Table 8 shows the percentage of discussion forum posts that demonstrated learning gains.

Some of these posts about learning gains are quite general in nature: “I don’t know about you, but I’ve already learned an amazing amount from this class!”

Others show very discrete evolutions in learning:

“I was stuck with the idea that my introductions should be one paragraph long. Maybe I should experiment with longer introductions.”

“And I feel comfortable enough with the chemistry, the basic chemistry, to not avert my eyes like I used to. Whenever I saw a chemical equation I just, oh well, never mind, and I’d just skip it.”

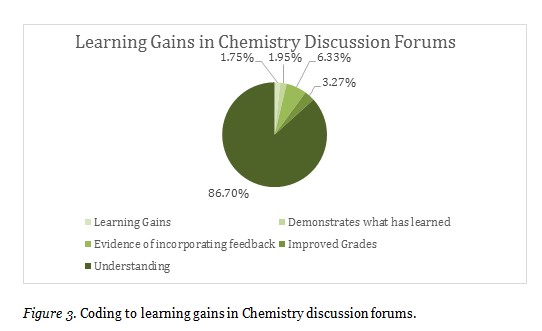

Figure 3 shows the distribution of these learning gains in Chemistry forums. When learning gains were present in Chemistry discussion-forum posts, they were frequently coded to demonstrating understanding.

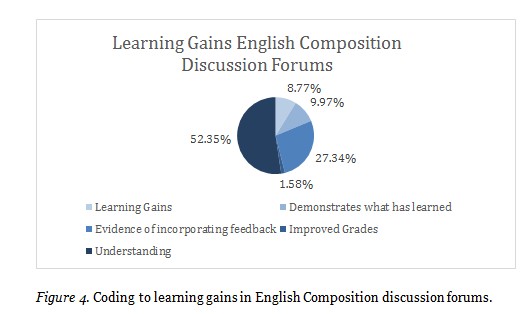

Figure 4 shows the distribution of these learning gains in English Composition discussion forums. When learning gains were present, they were most often related to demonstrating understanding, but also showed significant gains in evidence of incorporating feedback. As in Chemistry, discussions about grades had a very low incidence in English Composition (3.27% and 1.58%, respectively).

In both courses, very little text was coded to the “improved grades” node. Research shows that a focus on grades can be counterproductive to learning gains (Kohn, 2011). The coding results here suggest, therefore, that when students were discussing learning gains they were discussing more meaningful measures of learning gains than grades. Indeed, students posting on the forums in these MOOCs were much more focused on learning than on grade outcomes. As an illustration, one student expressed this sentiment concisely by writing, “I am not hung up on the grade I am too excited about what I learned and how I am putting it into practice and getting results.”

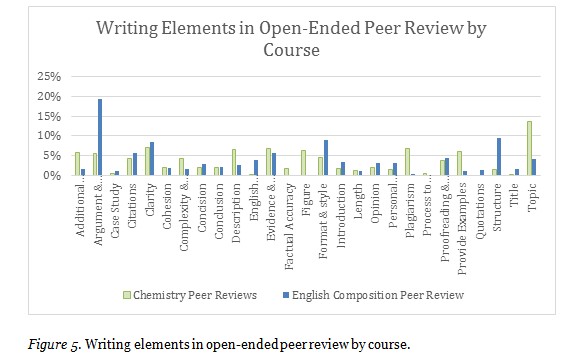

Enrollees’ tendency to discuss more meaningful measures of learning gains in the forums also extended to their interactions through peer review. Peer review is more effective when peers focus on higher order writing elements as opposed to lower order concerns (Elbow, 1973; Clifford, 1981; Nystrand, 1984; Keh, 1990). Figure 5 shows the writing elements learners commented on in the open-ended peer review questions. For English Composition, learners commented most frequently on argument and analysis, format and style, and structure. For Chemistry, learners commented most frequently on topic, evidence and research, and plagiarism.

The greater prevalence of peer notations about plagiarism in Chemistry is likely due to the course instructor specifically asking peers to look for plagiarism: “This is going to come up some small fraction of the time, so here is the procedure: What should you do if you are reviewing an essay that you believe is blatant plagiarism?” Suspected plagiarism was then confirmed by the instructor, who investigated student flagged work. Editorials have expressed concern that MOOC providers and faculty need to be more rigorous at facilitating academic integrity and discouraging or penalizing plagiarism (Young, 2012). Continued work should indeed be done in this area. This is especially important given that, when writing assignments are used on this scale, observations made in face-to-face settings can be magnified. For example, Wilson noted in the summary of his work about writing assignments in a face-to-face chemistry course that “Not all students submitted original work” (Wilson, 1994, p. 1019). A perusal of Chronicle of Higher Education faculty forums reveals that plagiarism continues to constitute a challenge in all educational settings rather than being unique to MOOCs.

However, while academic honesty is of the utmost importance, it is also important to continue facilitating peer commentary based on other elements of writing, especially the higher order concerns named above. Some students expressed a negative impact from what they perceived to be too great a focus by their peers on plagiarism in the Chemistry peer review: “This peer review exercise is rapidly turning into a witch hunt. My opinion of this course has, during the past 2 days, gone from wildly positive to slightly negative.”

Research shows that the kind of peer feedback provided impacts peers’ perceptions of the helpfulness of that feedback (Cho, Schunn, & Charney, 2006). We categorized peer feedback by type: positive, constructive, or negative. We defined positive feedback as compliments that were not related to improving the paper; constructive feedback as helpful comments that a writer could use to improve his or her project or take into consideration for future writing occasions; and negative feedback as comments that were not compliments and were also unconstructive or unhelpful. The ratio of feedback coded as compliment:constructive:negative/unconstructive was 56:42:2 in the peer reviewed assignments and 8:90:2 in the weekly and general discussion forums.
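The ratios reported above are shares of coded references by feedback type, scaled to 100. As a minimal sketch of that arithmetic, the function below converts raw counts into a ratio string. The function name and the counts in the usage note are hypothetical and chosen only for illustration; they are not the study's data.

```python
def feedback_ratio(counts):
    """Express coded-reference counts as whole-number shares of 100.

    `counts` maps a feedback type to its number of coded references.
    Shares are rounded independently, so they may not sum to exactly 100.
    """
    total = sum(counts.values())
    if total == 0:
        raise ValueError("at least one coded reference is required")
    return ":".join(str(round(100 * c / total)) for c in counts.values())
```

For example, hypothetical counts of 112 compliments, 84 constructive comments, and 4 unconstructive comments would be reported as `feedback_ratio({"compliment": 112, "constructive": 84, "unconstructive": 4})`, which gives `"56:42:2"`.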

Below are examples of text coded as unconstructive and constructive, respectively:

“Did not read past the 3rd paragraph . . . I am sure it was interesting . . . You just did not keep my interest.”

“Below are my suggestions as a Anglophone and an opinionated reader. . . . Before we begin, you used the word feedbacks in the title of this thread. Feedback is the correct term. One of those annoying inconsistencies in English.”

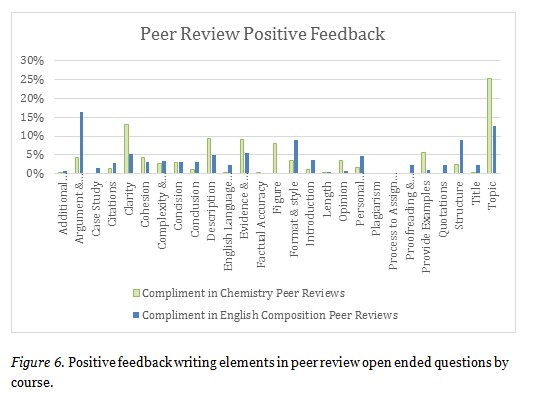

Figure 6 shows the distribution of writing elements specifically among positive feedback in the peer review process, what we termed “compliments.” For Chemistry, compliments were most often focused on topic, clarity, description, evidence and research, and figure (learners included figures in their chemistry assignments). For English Composition, positive feedback was most often focused on argument and analysis, structure, format, and topic. In Chemistry peer reviews, compliments least often addressed the process of assigning peer review, proofreading and grammar, and quotations. In English Composition peer reviews, positive feedback least often addressed the process of assigning peer review, factual accuracy, and figure.

Students posted positive feedback illustrated by the following excerpts:

“Well written. Good explanations of the chemistry. I liked how it was a topic that you are clearly passionate about.”

“I liked your essay, it is cohesive and concise and its subject is intriguing!”

“You did the great research … and your bibliography is impressive. The introduction is brief, but sufficient, the problem you’ve built your text on is claimed clearly, and your arguments are well supported by references and quotations.”

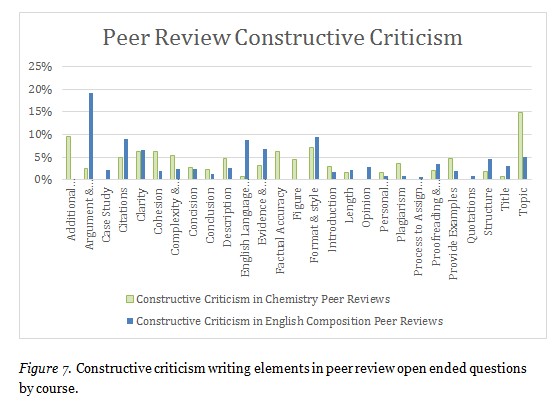

Peers in English Composition were most likely to provide constructive feedback on argument and analysis, English language skills, citations, and format and style. Peers in Chemistry were most likely to provide constructive criticism on additional resources, topic, format and style, and factual accuracy. Coding frequency for both courses is shown in Figure 7.

Students posted constructive criticism such as the following:

“... I could hear your voice among the voices of the cited books and articles, but it is not always obvious where you agree and where you oppose to the cited claim. Probably, you could sharpen your view and make your claim more obvious for your readers.”

“Just as a tip, you could have shown some pictures of the funghi.”

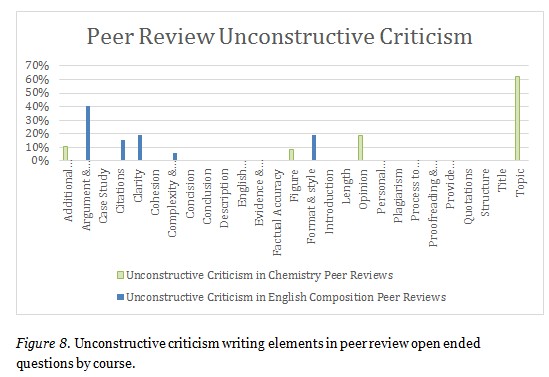

Unconstructive feedback was defined as comments that were negative but not helpful in terms of recommending specific improvements to the student whose work was being reviewed. Figure 8 shows the distribution of unconstructive feedback in the open-ended peer reviews in each course. Peers were most likely to center unconstructive feedback in English Composition on matters of argument and analysis, clarity, and format and style. For Chemistry, peers were most likely to provide unconstructive feedback on topic, opinion, and additional resources.

Examples of unconstructive feedback included the following: “Did not read past the 3rd paragraph . . . I am sure it was interesting . . . You just did not keep my interest.”

It is important to note that because we did not code the assignment submissions themselves, it may have sometimes been difficult to identify what is or is not constructive or unconstructive feedback, particularly in the case of citations and plagiarism. For instance, in some cases, peers responded to feedback as though it were unconstructive, but we do not know for sure whether this feedback about citations was or was not warranted:

“I did research and re-phrased parts of my sources into this essay with citations as is accepted practice. Did you expect me to carry out my very own experiments and post the results? I mean honestly, I am offended by that suggestion. … The only issue I can see is that the numbering of the citations went off during editing, but since all of my sources are still listed at the bottom of the essay this should not be a problem . . . It is also my work, so I would like you to retract your statement, I find it offensive.”

Peer feedback has been shown to enhance learning environments (Topping & Ehly, 1998). Many posts in the discussion forums and peer reviews from both courses, as well as in the final reflective essays from English Composition learners, indicate that the peer-feedback process contributed to their learning gains. Some of these posts about learning gains from the peer-feedback process are general in nature:

“I found peer comments and their assessment invaluable.”

“[I have been] learning so much from all of the peer review submissions that I have decided to remain in the course just to learn everything I can learn about Chemistry.”

“Throughout the course, I valued my peer’s comments on my drafts so I can improve my writings. I also learnt much by evaluating my peers’ work.”

Other posts show very discrete evolutions in learning:

“I am, however, grateful for the kind parts of your review, and willingly admit to faults within the essay, although until this week, I was, like my fellows, unaware of the expected work on electron transits. By the time I did become aware of this, it was too late to make alterations! Thank you for a thoughtful review.”

“Even more important bit I learned was the importance of feedback. Feedback provides an opportunity to rethink the project, and dramatically improve it.”

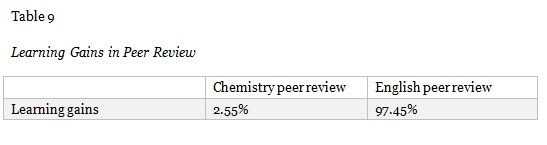

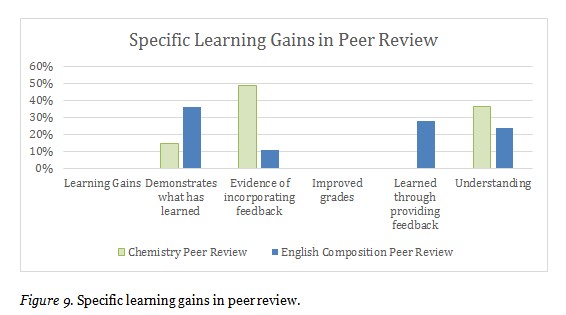

Table 9 shows how frequently peer feedback explicitly expressed learning gains. The English Composition peer review rubric specifically asked reviewers to indicate what they had learned from reading and responding to the peer-writing project (see Appendix F). The Introduction to Chemistry peer rubric did not ask this. This probably accounts for why learning gains were so much more evident in peer review in English Composition than in Chemistry.

Figure 9 shows coding for specific learning gains in peer feedback. In English Composition, peer review provided students with learning gains across four primary areas: understanding, learning through providing peer feedback, demonstrating what the person had learned, and evidence of incorporating feedback. In Chemistry, learning gains from peer feedback occurred most often around matters of understanding, demonstrating what the person had learned, and evidence of incorporating feedback. As with the learning gains in discussion forum posts, the coding shows that students are not focusing on grades, but are instead focusing on higher order concerns.

Because we are interested in the impact of peer-to-peer interactions on less academically prepared students, we specifically looked for challenges faced by students, such as the following: lack of time or energy; less academically prepared; and less or not self-directed. Interestingly, little text was coded to these nodes. For example, only one coding reference was found at the intersection of any of these challenges and learning gains. These barriers therefore rarely came up in connection with learning gains in the forum threads that we coded in either class.

We have identified several significant themes that show the importance of and impact of the peer-to-peer interaction through writing in MOOCs.

MOOC Discussion Forum Posts are Connected to Course Content

Both courses examined generated substantial student dialogues on the forums. Students in the English Composition I: Achieving Expertise course tended to write longer forum posts than students in the Introduction to Chemistry course. Peer-to-peer dialogue on the weekly forums closely mirrored the content of the course described in the syllabus for that week. This shows that students are primarily discussing course content in these forums and suggests that peer-to-peer writing in the forums can provide one measure of student success in a MOOC.

MOOC Discussion Forums Generally Contribute Positively to the Learning Environment in Chemistry and English Composition

Since attitude was generally positive and the top affects in the forums include belonging to a community, gratitude, and encouragement, we conclude that the forums are in general a positive space for learners to interact. This finding held across disciplinary contexts, both in a natural science course and in a more humanities-oriented course.

MOOC Discussion Forums Contribute to Learning Gains, Especially in Understanding

In terms of observed learning gains, peer-to-peer interaction on the forums seemed to make the most impact on enhancing and facilitating understanding. Students sought tips or support from one another, and offered them in turn, as a way of increasing their understanding of course content.

Peer Review Can Facilitate Learning Gains If This Possibility is Made Explicit

The disparity between the coding for learning gains in English peer reviews and in Chemistry peer reviews suggests that the English students were indicating learning gains because they were asked to do so explicitly. This suggests that faculty should encourage students to reflect on their learning gains explicitly as a way of facilitating those very learning gains.

Peer Feedback on Writing can Meaningfully Focus on Higher Order Concerns across Disciplines

Feedback on writing can be differentiated by whether it focuses on higher order or lower order concerns. Effective formative feedback generally must include a focus on higher order concerns, and can then be considered an integral part of the learning and assessment environment (Gikandi, Morrow, & Davis, 2011). Peers in both courses focused predominantly on higher order concerns, even as they were also able to focus on lower order concerns. This may be due to the peer feedback rubrics: our data suggest that peers will closely follow the rubric provided by the instructor. In English Composition, students were asked to focus on argument and analysis. In Chemistry, students were asked to focus on strengths, insights, areas for improvement, and plagiarism. In both cases the students were likely to adhere to the rubric guidelines.

Writing through the Forums Enhances Understanding

Since forum discussions in a MOOC happen through writing, one can extrapolate from our data that writing enhances understanding in MOOC forums. This bolsters the evidence for writing-to-learn and suggests that MOOC forums are a key pathway both for writing-to-learn and for assessing student success in MOOCs across disciplines.

A Limited Group of Learners Posts to the Forums

One of our key areas of inquiry was to understand how peer-to-peer interaction through writing might impact the learning gains of less academically prepared learners. We found, however, that people posting to the forums did not identify themselves explicitly as less academically prepared. This generates questions about how many people post to the forums, and who is or is not likely to post to the forums. The total number of people who posted to the discussion forums in English Composition represents 23% of the total number of people who ever actually accessed the course (51,601); in Chemistry, the total number of people who posted to the discussion forums represents 7% of the total number of people who ever actually accessed the course (22,298). Given the overall positivity of the forums, one wonders if these data indicate that the forums are only positive for certain types of people. Given that the top posters coded higher for “defensiveness” than general posters, one also wonders if there might be drawbacks to certain levels of forum participation. We did not see any significant information about student challenges in the coding data, despite looking for it as one of our coding nodes. Since interactive learning offers so much promise for these learners, and since MOOCs continue to provide the possibility of increased access to higher education, more research is needed about how to facilitate forums in as inclusive and productive a way as possible for less academically prepared learners.

The development of quality educational opportunities through MOOCs, and learning more about how peer interactions through writing contribute to student retention and learning, have the potential to make a significant global impact and increase postsecondary access and success in unprecedented ways. As we discover more through this research about how peer interactions with writing contribute to student learning outcomes and retention, we will be better positioned to understand and work towards a model of higher education that is more flexible, accessible, and effective for the great many individuals in the world interested in pursuing lifelong learning.

We would like to acknowledge the work of the coding team: Michael Barger, Dorian Canelas, Beatrice Capestany, Denise Comer, Madeleine George, David Font-Navarette, Victoria Lee, Jay Summach, and Mark Ulett. Charlotte Clark led the coding workshop with the assistance and data analysis of Noelle Wyman Roth. The figures included in this article were developed by Noelle Wyman Roth. We appreciate the generous funding of this work that was received from several sources. Dorian Canelas and Denise Comer were successful applicants in a grant competition run by Athabasca University (Principal Investigator: George Siemens). This project, the MOOC Research Initiative, was designed to advance understanding of the role of MOOCs in the education sector and how emerging models of learning will influence traditional education. The MOOC Research Initiative is a project funded by the Bill & Melinda Gates Foundation. This research was also funded through matching funds from the office of the Provost at Duke University. Denise Comer’s course was funded largely by a grant from the Bill & Melinda Gates Foundation. We are grateful that development of both courses received support from the talented technology staff and instructional teams in Duke University’s Center for Instructional Technology. Research in Dorian Canelas’s course was also supported by funding from the Bass Connections program. We appreciate the support and encouragement of Keith Whitfield. We acknowledge that a portion of the work described herein was conducted under the guidelines set forth in Duke University IRB Protocol B0596 (Student Writing in MOOC).

Adamopoulos, P. (2013). What makes a great MOOC? An interdisciplinary analysis of student retention in online courses. Thirty Fourth International Conference on Information Systems, Milan.

Armstrong, D., Gosling, A., Weinman, J., & Marteau, T. (1997). The place of inter-rater reliability in qualitative research: An empirical study. Sociology, 31(3), 597-606.

Balfour, S. P. (2013). Assessing writing in MOOCs: Automated essay scoring and calibrated peer review. Research & Practice in Assessment, 8(1), 40-48.

Bernard, R. M., Abrami, P. C., Lou, Y., Borokhovski, E., Wade, A., Wozney, L., ... & Huang, B. (2004). How does distance education compare with classroom instruction? A meta-analysis of the empirical literature. Review of Educational Research, 74(3), 379-439.

Boynton, L. (2002). When the class bell stops ringing: The achievements and challenges of teaching online first-year composition. Teaching English in the Two-Year College, 29(3), 298-311.

Breslow, L., Pritchard, D. E., DeBoer, J., Stump, G. S., Ho, A. D., & Seaton, D. T. (2013). Studying learning in the worldwide classroom: Research into edX’s first MOOC. Research & Practice in Assessment, 8, 13-25.

Brinton, C. G., Chiang, M., Jain, S., Lam, H., Liu, Z., & Wong, F. M. F. (2013). Learning about social learning in MOOCs: From statistical analysis to generative model. arXiv preprint arXiv:1312.2159.

Cain, J., Conway, J., DiVall, M., Erstad, B., Lockman, P., Ressler, J., … Nemire, R. (2014). Report of the 2013-2014 academic affairs committee. American Association of Colleges of Pharmacy. Alexandria, VA: Cain.

Carter, M. (2007). Ways of knowing, doing, and writing in the disciplines. College Composition and Communication, 385-418.

Chi, M. T. (2009). Active-constructive-interactive: A conceptual framework for differentiating learning activities. Topics in Cognitive Science, 1(1), 73-105.

Cho, K., Schunn, C. D., & Charney, D. (2006). Commenting on writing typology and perceived helpfulness of comments from novice peer reviewers and subject matter experts. Written Communication, 23(3), 260-294.

Clarà, M., & Barberà, E. (2013). Learning online: Massive open online courses (MOOCs), connectivism, and cultural psychology. Distance Education, 34(1), 129-136.

Clifford, J. (1981). Composing in stages: Effects of feedback on revision. Research in the Teaching of English, 15, 37-53.

Comer, D. (2013). MOOCs offer students opportunity to grow as writers. iiis.org.

Cooper, M. (1993). Writing: An approach for large-enrollment chemistry courses. Journal of Chemical Education, 70(6), 476-477.

Crews, D. M., & Aragon, S. R. (2004). Influence of a community college developmental education writing course on academic performance. Community College Review, 32(2), 1-18.

Crosling, G., Thomas, L., & Heagney, M. (2008). Improving student retention in higher education: The role of teaching and learning. Routledge.

Daempfle, P. A. (2004). An analysis of the high attrition rates among first year college science, math, and engineering majors. Journal of College Student Retention, 5, 37-52.

Denzin, N. K. (2009). The research act: A theoretical introduction to sociological methods. New York: McGraw-Hill.

deWaard, I., Abajian, S., Gallagher, M. S., Hogue, R., Keskin, N., Koutropoulos, A., & Rodriguez, O. C. (2011). Using mLearning and MOOCs to understand chaos, emergence, and complexity in education. The International Review of Research in Open and Distance Learning, 12(7), 94-115. Retrieved from http://www.irrodl.org/index.php/irrodl/article/view/1046/2026

Elbow, P. (1973). Writing without teachers. London: Oxford University Press.

Emanuel, E. J. (2013). Online education: MOOCs taken by educated few. Nature, 503(7476), 342-342.

Falk, J. H., Storksdieck, M., & Dierking, L. D. (2007). Investigating public science interest and understanding: Evidence for the importance of free-choice learning. Public Understanding of Science, 16(4), 455-469.

Gannon, S., & Davies, C. (2007). For love of the word: English teaching, affect and writing 1. Changing English, 14(1), 87-98.

Garrison, D. R., Anderson, T., & Archer, W. (2001). Critical thinking, cognitive presence, and computer conferencing in distance education. American Journal of Distance Education, 15(1), 7-23.

Gikandi, J. W., Morrow, D., & Davis, N. E. (2011). Online formative assessment in higher education: A review of the literature. Computers & Education, 57(4), 2333-2351.

Guo, P. J., & Reinecke, K. (2014, March). Demographic differences in how students navigate through MOOCs. In Proceedings of the first ACM conference on Learning @ Scale conference (pp. 21-30). ACM.

Hawkes, M. (2001). Variables of interest in exploring the reflective outcomes of network-based communication. Journal of Research on Computing in Education, 33(3), 299-315.

Huang, H. M. (2002). Toward constructivism for adult learners in online learning environments. British Journal of Educational Technology, 33(1), 27-37.

Johnson, D. W. (1981). Student-student interaction: The neglected variable in education. Educational Researcher, 5-10.

Joyner, F. (2012). Increasing student interaction and the development of critical thinking in asynchronous threaded discussions. Journal of Teaching and Learning with Technology, 1(1), 35-41.

Keh, C. L. (1990). Feedback in the writing process: A model and methods for implementation. ELT Journal, 44(4), 294-304.

Kim, J. (2012). Inside Higher Ed. Retrieved from http://www.insidehighered.com/blogs/technologyand-learning/playing-role-mooc-skeptic-7-concerns

Kohn, A. (2011). The case against grades. Educational Leadership, 69(3), 28-33.

Kolowich, S. (2011). Inside Higher Ed. Retrieved from http://www.insidehighered.com/news/2011/04/07/gates_foundation_announces_higher_education_technology_grant_winners

Kop, R., Fournier, H., & Mak, J. S. F. (2011). A pedagogy of abundance or a pedagogy to support human beings? Participant support on massive open online courses. International Review of Research in Open and Distance Learning, 12(7), 74-93.

Kuh, G. D. (2008). Excerpt from “High-impact educational practices: What they are, who has access to them, and why they matter”. Association of American Colleges and Universities.

Lin, H.-S., Hong, Z.-R., & Huang, T.-C. (2012). The role of emotional factors in building public scientific literacy and engagement with science. International Journal of Science Education, 34(1), 25-42.

Mammino, L. (2011). Teaching chemistry in a historically disadvantaged context: experiences, challenges, and inferences. Journal of Chemical Education, 88(11), 1451-1453.

Maxwell, J. A. (2005). Qualitative research design: An interactive approach (2nd ed.). Thousand Oaks, CA: Sage.

Meisenhelder, S. (2013). MOOC mania. Thought and Action, 7.

Nystrand, M. (1984). Learning to write by talking about writing: A summary of research on intensive peer review in expository writing instruction at the University of Wisconsin-Madison. National Institute of Education (ED Publication No. ED 255 914). Washington, DC: U.S. Government Printing Office. Retrieved from http://files.eric.ed.gov/fulltext/ED255914.pdf

Pelaez, N. J. (2002). Problem-based writing with peer review improves academic performance in physiology. Advances in Physiology Education, 26(3), 174-184.

Pienta, N. J. (2013). Online courses in chemistry: salvation or downfall? Journal of Chemical Education, 90(3), 271-272.

Pomerantz, J. (2013). Data about the Metadata MOOC, Part 3: Discussion Forums. Jeffreypomerantz.name.

Reynolds, J. A., Thaiss, C., Katkin, W., & Thompson, R. J., Jr. (2012). Writing to learn in undergraduate science education: A conceptually driven approach. CBE Life Sciences Education, 11(1), 17-25.

Rivard, L. P., & Straw, S. B. (2000). The effect of talk and writing on learning science: An exploratory study. Science Education, 84(5), 566-593.

Ruey, S. (2010). A case study of constructivist instructional strategies for adult online learning. British Journal of Educational Technology, 41(5), 706-720.

Salomon, G., & Perkins, D. N. (1998). Individual and social aspects of learning. Review of Research in Education, 1-24.

Siemens, G. (2005). Connectivism: A learning theory for the digital age. International Journal of Instructional Technology and Distance Learning, 2(1), 3-10.

Sorcinelli, M. D., & Elbow, P. (1997). Writing to learn: Strategies for assigning and responding to writing across the disciplines. Jossey-Bass.

Topping, K., & Ehly, S. (Eds.). (1998). Peer-assisted learning. Routledge.

Vázquez, A. V., McLoughlin, K., Sabbagh, M., Runkle, A. C., Simon, J., Coppola, B. P., & Pazicni, S. (2012). Writing-to-teach: A new pedagogical approach to elicit explanative writing from undergraduate chemistry students. Journal of Chemical Education, 89(8), 1025-1031.

Waldrop, M. (2013). Campus 2.0: Massive open online courses are transforming higher education and providing fodder for scientific research. Nature, 495, 160 (March 14, 2013).

Watkins, J., & Mazur, E. (2013). Retaining Students in science, technology, engineering, and mathematics (STEM) majors. Journal of College Science Teaching, 42(5).

Wilson, J. W. (1994). Writing to learn in an organic chemistry course. Journal of Chemical Education, 71(12), 1019-1020.

Yang, Y. F., & Tsai, C. C. (2010). Conceptions of and approaches to learning through online peer assessment. Learning and Instruction, 20(1), 72-83.

Young, J. L. (2012). Dozens of plagiarism incidents are reported in Coursera’s free online courses. The Chronicle of Higher Education. (August 16, 2012.) Retrieved from http://chronicle.com/article/Dozens-of-Plagiarism-Incidents/133697/

Yuan, L., & Powell, S. (2013). MOOCs and open education: Implications for higher education. Cetis White Paper.

Zhang, S. (1995). Reexamining the affective advantage of peer feedback in the ESL writing class. Journal of Second Language Writing, 4(3), 209-222.

The following information helps define our terms:

Forum: A forum is the top level discussion holder (e.g., Week 1). These are created in Coursera by instructional team staff.

Subforum: A subforum is a discussion holder that falls under the top forum (e.g., Week 1 Lectures, Week 1 Assignment). These are also created by instructional team staff.

Thread: A thread is a conversation begun by either instructional team staff or by students. A single forum typically contains many threads covering many different subjects, theoretically related to the forum’s overarching topic.

Post: A post is an individual’s response to a thread. Posts can be made by either instructional team staff or by students, and may be posted with the student’s identifying name or as anonymous. Staff with administrative privileges may “toggle” a setting on each post to reveal the identity of students who have chosen to post anonymously. Although in theory a post is a new “top level” contribution to an existing thread (as opposed to a comment; see below), many students do not pay attention to whether they are posting or commenting, and therefore we did not feel that we could accurately distinguish between the two.

Comment: A comment is a reply to a post. Comments can be made by either instructional team staff or by students. Again, we decided not to distinguish semantically between a post and a comment, because we felt that distinguishing them was not possible in the (very common) complex web of post, response, subsequent post, subsequent response, etc.

The main page of a forum lists all the primary threads begun in that forum or subforum. If threads are begun in a subforum, they are only listed in the “all threads” area of the subforum, not in the “all threads” list of any parent forum. Subforums may themselves have subforums (which are also called subforums). At the bottom of a list of “all threads,” you can read the total number of pages of threads that exist; each page contains 25 thread headings. Therefore, an estimate of the number of threads can be obtained by multiplying the number of pages by 25.
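The page-based estimate described above can be sketched as follows. Because the last page of a listing may hold fewer than 25 headings, multiplying by 25 gives an upper bound, so this sketch returns both the lowest and highest thread counts consistent with a given page count. The function and constant names are illustrative assumptions, not part of Coursera.

```python
THREADS_PER_PAGE = 25  # each "all threads" page lists 25 thread headings


def estimate_thread_range(page_count):
    """Return (lowest, highest) thread counts consistent with a page count.

    The highest value is the pages-times-25 estimate; the lowest assumes
    the final page contains a single thread heading.
    """
    if page_count < 0:
        raise ValueError("page_count must be non-negative")
    if page_count == 0:
        return (0, 0)
    highest = page_count * THREADS_PER_PAGE
    lowest = (page_count - 1) * THREADS_PER_PAGE + 1
    return (lowest, highest)
```

For a listing with 4 pages, `estimate_thread_range(4)` gives `(76, 100)`, so the multiply-by-25 estimate of 100 can overstate the true count by up to 24 threads.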

Coursera provides a number of views received by each thread, as well as the number of times a thread has been opened and read. Of course, by opening a thread to capture it for analysis, we are increasing the number of views of that thread, so we used the “views” number in our analysis with this limitation in mind.

Coursera also allows the viewer to sort by “Top Thread,” “Most Recently Created,” and “Most Recently Modified.” We always sorted by “Top Thread” before beginning our sampling and capturing process. Top threads are defined as those that have the most posts and comments, views, and/or most reputation points or up votes on the original post that started the thread (see below). Number of posts, comments, and views certainly provide one measure of student engagement and activity. Two other related metrics exist in Coursera. Students can choose to vote certain posts “up” or “down,” and are encouraged to do so to bring thoughtful or helpful posts to the attention of their peers. This simple “like” type of toggle exists at the bottom of each post or comment. Students also receive “reputation points” when their posts are voted up (or down) in the forums by other students. Specifically, “Students obtain reputation points when their posts are voted up (or down) in the forums by other students. For each student, his/her reputation is the sum of the square-root of the number of votes for each post/comment that he/she has made” (Pomerantz, 2013). Top posters are the students with the highest number of reputation points.
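The reputation rule quoted above (the sum, over a student's posts and comments, of the square root of each post's vote count) can be illustrated with a short sketch. The function names, the student labels, and the vote counts are hypothetical, and the sketch assumes vote counts are non-negative; how Coursera treated net-negative votes is not stated in the source.

```python
import math


def reputation(votes_per_post):
    """Sum of sqrt(votes) over one student's posts and comments."""
    if any(v < 0 for v in votes_per_post):
        # Assumption: the quoted rule is only defined here for counts >= 0.
        raise ValueError("vote counts must be non-negative in this sketch")
    return sum(math.sqrt(v) for v in votes_per_post)


def top_posters(votes_by_student, n=1):
    """Return the n students with the highest reputation, highest first."""
    scores = {s: reputation(v) for s, v in votes_by_student.items()}
    return sorted(scores, key=scores.get, reverse=True)[:n]


# Hypothetical vote counts, one list entry per post or comment.
example = {
    "student_a": [4, 9],        # sqrt(4) + sqrt(9) = 5.0
    "student_b": [16],          # sqrt(16) = 4.0
    "student_c": [1, 1, 1, 1],  # four posts with one vote each = 4.0
}
```

In this example, `top_posters(example)` returns `["student_a"]`. Note that under the square-root rule, four posts with one vote each earn the same reputation as a single post with sixteen votes, so the metric rewards sustained participation more than one highly rated post.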

Node Names and Coding Reference Quantity

| Node Name | Sources | References |
| --- | --- | --- |
| Affect (aggregated) | 565 | 3103 |
| Admiration | 89 | 104 |
| Belonging to this community | 110 | 302 |
| Competiveness | 20 | 44 |
| Defensiveness | 27 | 71 |
| Empathy | 49 | 77 |
| Encouragement | 307 | 616 |
| Frustration | 58 | 126 |
| Gratitude | 243 | 510 |
| Humor | 32 | 76 |
| Inspiration | 52 | 78 |
| Motivation | 57 | 766 |
| Receptiveness to critique or | 176 | 256 |
| Sympathy | 35 | 77 |
| Attitude | 0 | 0 |
| Negative | 131 | 296 |
| Neutral | 382 | 1877 |
| Positive | 507 | 1735 |
| Feedback | 0 | 0 |
| Compliment | 310 | 623 |
| Constructive criticism | 174 | 310 |
| Unconstructive criticism | 20 | 26 |
| Learning through P2P writing | 94 | 105 |
| Learning gains | 382 | 751 |
| Demonstrates what learned | 123 | 168 |
| Evidence of incorporating | 50 | 63 |
| Improved grades | 4 | 5 |
| Learned through providing | 257 | 261 |
| Understanding | 143 | 225 |
| Miscommunication | 3 | 9 |
| Writing Elements | 0 | 0 |
| Additional resources | 48 | 59 |
| Argument and analysis | 163 | 228 |
| Case study | 13 | 15 |
| Citations | 103 | 132 |
| Clarity | 109 | 139 |
| Cohesion | 42 | 49 |
| Complexity and simplicity | 45 | 50 |
| Concision | 44 | 51 |
| Conclusion | 51 | 54 |
| Description | 74 | 96 |
| English language skills | 37 | 40 |
| Evidence and research | 102 | 128 |
| Factual accuracy | 9 | 11 |
| Figure | 46 | 74 |
| Format and style | 107 | 138 |
| Introduction | 52 | 60 |
| Length | 28 | 30 |
| Opinion | 34 | 43 |
| Personal experience | 39 | 49 |
| Plagiarism | 14 | 24 |
| Process to assign peer | 4 | 4 |
| Proofreading and grammar | 50 | 61 |
| Provide examples | 37 | 51 |
| Quotations | 22 | 24 |
| Structure | 111 | 141 |
| Title | 31 | 35 |
| Topic | 102 | 136 |
| Peer-to-peer connections (aggregated) | 511 | 3861 |
| Connecting outside of class | 26 | 91 |
| Disagreement | 25 | 49 |
| Feedback on Math Problem | 6 | 14 |
| Feedback on Problem Solving | 9 | 19 |
| Goals or aspirations in course | 60 | 576 |
| Introductions to peers | 55 | 784 |
| Offering moral or emotional | 142 | 293 |
| Offering peer review | 138 | 341 |
| Offering tips or help to peers | 209 | 931 |
| Seeking moral or emotional | 47 | 86 |
| Seeking peer review | 100 | 133 |
| Seeking tips or help from peers | 175 | 393 |
| PIT Post goal priority | 0 | 0 |
| 1 primary goal | 477 | 3366 |
| 2 Secondary goal | 81 | 229 |
| 3 Ancillary goal | 6 | 8 |
| Post type | 0 | 0 |
| Course experience discussion | 178 | 1040 |
| Course experience question | 68 | 161 |
| Course material discussion | 189 | 805 |
| Course material question | 140 | 319 |
| Spam or inappropriate | 9 | 14 |
| Student challenges | 0 | 0 |
| Lack of time and energy | 50 | 109 |

Less academically prepared |

26 |

91 |

Less or not self-directed |

39 |

65 |

Objective: The objectives of this assignment are:

1) to encourage you to learn more about the chemistry related to a specific topic that interests you through research and writing.

2) to allow you to learn more about diverse topics of interest to other students by reading, responding to, and reviewing their essays.

Assignment: Pick any topic related to chemistry that interests you (some global topics are listed below to give you ideas, but you do not have to restrict yourself to that list.) Since most of the global topics are much too broad for the length limit allowed, narrow your interest until the topic is unique and can be covered (with examples) in less than a couple of pages of writing.

Once you have a topic, write an essay in which you address the following questions:

• What are the chemicals and/or chemical reactions involved with this topic?

• How does the chemistry involved with this topic relate to the material in the course?

• Are there economic or societal impacts of this chemistry? If so, then briefly describe aspects of ongoing debate, costs, etc.

• What are some questions for future research papers if you or someone else wanted to learn more about how chemistry intersects with this topic?

• Did this research lead you to formulate any new questions about the chemistry itself?

Individual Research Paper Guidelines and Requirements:

• Because other students will need to be able to read what you have written, the assignment must be submitted in English. If you are worried about grammar because English is not your native language, then please just note that right at the top of the essay and your peers will take into account the extra effort it requires to write in a foreign language.

• Think about what terms your classmates already know based upon what has been covered to date and what terms might need additional explanation.

• The final paper should be 400-600 words, not including references or tables and figures and their captions. There is no word count police, but please use this as a guideline for length.

• Be careful not to use the “cut and paste” method for your writing. Each sentence should be written in your own words, with appropriate references to the works of others if you are getting your ideas or information for that sentence from a source.

• Online references should be used, and these should be free and available to everyone with internet access (open source, no subscription required.) At least three distinct references must be included. Wikipedia and other encyclopedias should not be cited, but these can be a starting point for finding primary sources. Please be sure to cite your source websites. Please provide the references at the end of the essay as a numbered list, and insert the citation at the appropriate spot in the essay body (usually right after a sentence) using square brackets around the number that corresponds to the correct reference on the list.

• The paper can include up to 3 tables/figures. Tables and figures are optional, but might be helpful in conveying your ideas and analysis. Tables and figures should include citations to sources if they are not your intellectual property (As examples, a photograph that you take would not require citation as you would hold the copyright, but a photograph that you find on the web or in the literature would require citation. A graph or table that you pull straight from a source should cite that source explicitly in the figure caption; a graph or table that you construct yourself using data from multiple sources should cite the sources of the data with an indication that you own copyright to the graph or table itself.)

• The paper should include data and/or chemistry related to the topic and might also include an analysis of the impacts of the issue upon society (yourself and the community.) Political, economic, and/or historical analysis may also be appropriate depending upon the topic. Every paper MUST contain some chemistry.

• Submission will be electronic, and submitted papers will be copied to the course forum as soon as the first peer feedback is received so that others may learn and continue the discussion. Author names will be posted with their writing on the forum as well. Including your name promotes accountability in your work and closer collaboration among peers.

Sample Writing and Sample Peer Feedback: Prof. Canelas has secured permission from a few real students from former courses to post their essays and sample peer feedback to help guide your work. These will be posted in a separate section under the “Reference Information” section on the course main page no later than the beginning of the third week of class.

Some Global Topic Suggestions: (In no particular order. Anything is fair game as long as it involves chemistry, so feel welcome to make up your own topic not related to this list. Again, please be sure to substantially narrow your topic, perhaps to a single molecule, concept, or event; these categories are much too broad but might give you some ideas of topics to explore that interest you.)

• Combustion Chemistry: Politics, Projections, and Pollution for Petroleum, Biofuels

• The Chemistry of the Senses: Taste, Odor, and/or Vision

• History, Chemistry, and Future of Antibiotics

• The Sun, Moon, Stars, and Planets: Chemistry of Astronomy

• Water, Water, Everywhere: Anything related to H2O chemistry from water medical diagnostic imaging to acid rain

• Cradle to Grave: Polymers and Plastics

• The Evolution of Chemistry for Enhanced Technology: Lighter, Stronger, Faster, Cheaper, and Cleaner

• Chemistry, Politics, and Economics of Local Pollution Issues (can be in your local area or other locations of your choice)

• Elementary, My Dear Watson: Forensic Chemistry

• The Chemistry of Diabetes, Sugar, and/or Sugar Substitutes (or pretty much any other disease, biological process, or food)

• Missing Important Food Chemicals: Scurvy, Rickets, Starvation!

• Genetic Engineering of Food (aka Messing with Molecules We Eat)

• Addictive Chemicals, both Legal and Illegal

• Chemistry of Art Preservation

• Dynamism, Diplomacy, and Disaster: Nuclear Energy, Weapons, and Waste

• Athletes on the Edge: Chemistry and Detection of Performance Enhancing Drugs in Sports

• Batteries: Portable Devices that Convert Chemical Energy to Electrical Energy

• Alternative Energy (Solar Cells, Fuel Cells)

• Chemistry of Archeology, such as Unlocking the Secrets of the Terra Cotta Warriors of Xian

• Chemistry and Controversies of Climate Change

• The Chemistry of Color

• Chemical communication: pheromones

• Poisoning: intentional or unintentional

Read your peer’s essay and comment on:

1. Strengths of the paper: what aspects of the paper did you particularly enjoy or feel were well done?

2. Areas for improvements or additions to the paper, ideally with specific suggestions.

3. Insights you learned from reading the paper or what you found to be the most interesting aspects of the topic.

Please give feedback in paragraph form rather than as single sentences underneath the guidelines. Please remember: we are not grading these essays with a score. Instead, we are learning about chemistry from each other through the processes of researching, writing, reading, and providing comments for discussion.

Write a brief essay (~300 words) in which you introduce yourself as a writer to your classmates and instructor. How would you describe yourself as a writer? What are some of your most memorable experiences with writing? Please draw on your experiences with writing and refer directly to some of these as you introduce yourself as a writer. After you have written and posted your essay, please read and respond to two or three of your classmates’ postings.

Project One: Critical Review

The Uses and Limits of Daniel Coyle’s “The Sweet Spot.”

In this project, I will ask you to write a 600-800 word critical review of Coyle’s article, summarizing the project in his terms, quoting and analyzing key words and passages from the text, and assessing the limits and uses of his argument and approach.

Project Two: Analyzing a Visual Image

In your second project, I will ask you to develop a 600-800 word analysis of a visual representation of your chosen field of expertise. I will ask you to apply Coyle’s and Colvin’s ideas, and the forum conversations by classmates, to examine how expertise in your chosen area is represented and how it reflects, modifies, and/or challenges ideas about expertise: How is expertise represented visually? What does the image suggest about what it takes to be an expert in this field? How is expertise being defined in this image?

Project Three: Case Study

In this project, I will ask you to extend your work with Projects One and Two by researching additional scholarship about expertise in your chosen area, reading more texts about expertise theory through a crowd-sourced annotated bibliography (a collection of resources, with summaries, posted by all students), and applying those to a particular case study (example) of an expert or expertise in your field. I will ask you to extend these scholarly conversations through a 1000-1250 word case study in which you can articulate a position about expertise or an expert in the area of inquiry you have chosen.

Project Four: Op-Ed

Since academic ideas are often made public (and arguably should be), I will ask you to write a two-page Op-Ed about a meaningful aspect of your chosen area of expertise: What aspects are important for others to consider? What advice would you have for people desiring to become an expert in this area? What are the politics and cultures involved with establishing and defining expertise in this particular area?

Sample Full Project Assignment, English Composition, Project 3, Case Study

Project Components and Key dates

Project 3 will be completed in sequenced stages so you can move through the writing process and have adequate time to draft and revise by integrating reader feedback.

• Contribute to our annotated bibliography on the discussion forums: (Weeks 6-8)

• First draft due, with “note to readers”: May 13, 9:00 am EDT (-0400 GMT)

• Respond to Peers (formative feedback): May 20, 9:00 am EDT (-0400 GMT)

Note: You MUST get your comments back to the writers on time so they can meet the next deadline!

• Reflect on Responding to Peers

• Revise and Edit: Feedback available beginning May 20, 10:00 am EDT (-0400 GMT)

• Final draft due, with reflection: May 27, 9:00 am EDT (-0400 GMT)

• Evaluate and respond to Peers (evaluative feedback): June 3, 9:00 am EDT (-0400 GMT)

• Reflect on Project 3

Purpose: Learn how to research an in-depth example of expertise.

Overview