Toni Malinovski, Marina Vasileva, Tatjana Vasileva-Stojanovska, and Vladimir Trajkovik

Macedonia, the former Yugoslav Republic of

Early identification of relevant factors that influence students’ experiences is vitally important to the educational process since they play an important role in learning outcomes. The purpose of this study is to determine underlying constructs that predict high school students’ subjective experience and quality expectations during asynchronous and synchronous distance education activities, in the form of quality of experience (QoE). One hundred and fifty-eight students from different high schools participated in several asynchronous and synchronous learning sessions and provided relevant feedback with comparable opinions regarding different conditions. Structural equation modeling was used as the analytical procedure during data analysis, which led to a QoE prediction model that identified relevant factors influencing students’ subjective QoE. The results demonstrated no significant difference in students’ behavior and expectations between the two distance education methods. Additionally, this study revealed that students’ QoE in both situations was mainly determined by motivational factors (intrinsic and extrinsic) and moderately influenced by ease of use during synchronous activities or quality of content during asynchronous activities. We also found moderate support for a relationship between technical performance and students’ QoE in both learning environments. However, in contrast to existing technology acceptance models that stress the importance of attitude towards use, high school students’ attitude failed to predict their QoE.

Keywords: Quality of experience; distance learning; high school students; structural equation modeling; survey

Distance education has emerged as a response to a general need for access to learning where face-to-face education is not possible (Beldarrain, 2006). The incredible growth of internet and widespread use of computers in the last decade have opened tremendous possibilities for distance learning. Different distance education programs were incorporated in traditional schools or provided a flexible way of learning in today’s virtual schools (K–12 level) and virtual universities that deliver full curriculum online (Barbour, 2011; Hew & Cheung, 2010; Stricker, Weibel, & Wissmath, 2011). Distance education possibilities in high schools or virtual schools do not differ from the ones available to other state and private educational institutions. Regardless of the pedagogical approach or the technological tools, they can be roughly categorized as asynchronous and synchronous delivery methods (Bernard et al., 2009; Murphy, Rodríguez-Manzanares, & Barbour, 2011; Oztok et al., 2013; Somenarain, Akkaraju, & Gharbaran, 2010). Asynchronous distance learning solutions support relations between students and the teacher, separated by time and distance. The teacher-student interaction is facilitated through streaming media, emails, discussion boards, social media, and so on, and can reach a high phase of critical thinking since the students have more time to reflect, interact with the content, and process the information (Hrastinski, 2008; Robert & Dennis, 2005). Synchronous distance learning solutions provide real time teacher-student interaction while closely resembling a face-to-face educational environment. The synchronous communication is performed online via video/audio conferencing, instant messaging, real-time collaboration applications, and so on, while live interaction with the teacher and immediate feedback support the traditional pedagogies and different innovative methods for effective teaching and learning (Gillies, 2008; Lawson et al., 2010).

Evolving high schools that try to incorporate distance learning activities can use asynchronous, synchronous, or a combination of both learning solutions in a blended environment where students learn part of the content online. In like manner, Powell and Patrick’s (2006) snapshot of the current state of K-12 e-learning in the world provided a survey’s results which indicate that virtual schools are already active in many countries while using asynchronous and synchronous delivery models. Different studies have tried to compare asynchronous and synchronous delivery methods in terms of educational possibilities, learning efficiency, student retention, and teachers’ approach (Hrastinski, 2008; Murphy, Rodríguez-Manzanares, & Barbour, 2011; Somenarain, Akkaraju, & Gharbaran, 2010), while others have evaluated both learning solutions against face-to-face education (Beldarrain, 2006; Bernard et al., 2004; Jaques & Salmon, 2012). On the other hand, while consumer-centricity has become a growing trend in different areas that utilize technological solutions, research studies that follow a student-centered approach in the distance education area are still scarce, especially involving high school students. Still, the ones that are available have demonstrated that student-centered environments in distance education that focus on the students’ experience can be linked to increased learning achievements (Chang & Smith, 2008; Donavant, 2009; Eom, Wen, & Ashill, 2006).

This study aims to identify relevant factors that influence high school students’ subjective experience and quality expectations during distance education activities, in a form of quality of experience (QoE). Even though high and virtual schools can have adult students attending courses, we focused on students within a typical high school age span as a selected target group and investigated their subjective expectations while using distance learning systems. Thus we developed a QoE prediction model, which can adequately forecast high school students’ experience as a step towards increased learning outcomes. Having in mind the different nature of available distance education methods, we compared the proposed model between classes that incorporate asynchronous and synchronous activities, with results that provide guidelines for future educational development.

Even though literature on distance education has boomed over the last decade with a large proportion of comparisons between distance and face-to-face education (Beldarrain, 2006; Bernard et al., 2004; Cavanaugh et al., 2004; Giancola, Grawitch, & Borchert, 2009; Jaques & Salmon, 2012), only a limited number of studies have compared different distance education methods and solutions, especially from students’ point of view. Johnson’s (2008) comparison study focused only on text-based discussions while concluding that both asynchronous and synchronous forms of online discussion contribute to students’ cognitive and affective outcomes. Hrastinski (2008) discovered that asynchronous communication increases a person’s ability to process information, while students participating in synchronous communication felt more psychologically aroused and motivated. Somenarain, Akkaraju, and Gharbaran (2010) found no significant difference in student satisfaction while participating in asynchronous and synchronous learning environments. Although some of the mentioned studies attempted to transfer the focus to the e-learner while comparing different conditions, there is an important gap for empirical research that evaluates students’ perceptions in distance learning environments, especially relying on validated instruments.

Furthermore, there is a lack of information on the nature of high school students and evidence for the necessity to separate them from the rest of the distance learning population. The present generation involved in K–12 environments has been fitted into the stereotype of “digital native”, since they are growing up with emerging new communication technologies (Koutropoulos, 2011; Li & Ranieri, 2010; Oblinger, 2005; Prensky, 2001). Recent studies suggest that we need to move beyond this concept based purely on generational differences, showing that breadth of use, experience, self-efficacy, and education are also important (Helsper & Eynon, 2010; Steinweg, Williams, & Stapleton, 2010). Therefore, if we approach high school students as the same as any distance education practitioner and evaluate multiple variables that influence students’ behavior and expectations we can provide results that explain their nature and subjective experience.

Since students’ perceptions and experience are subjective, “out of the mind” opinions, it is difficult to measure, quantify, and even predict QoE outcomes. Different studies have proposed approaches based on QoE concepts that focus on quality perceived by the end-user from different systems, while exploring its relationship with technical parameters like quality of video/audio (Khan, Sun, & Ifeachor, 2012; Knoche & Sasse, 2008), networking performance (Zapater & Bressan, 2007), system parameters, and so on. Laghari and Connelly (2012) approached QoE as an assessment of the human experience when interacting with technology and business entities in a particular context, while presenting a high-level model in a communication ecosystem. Gong et al. (2009) defined a QoE model consisting of five factors (availability, usability, integrality, retainability, and instantaneousness), while mainly focusing on the relationship between the technical and QoE parameters. Malinovski, Lazarova, and Trajkovik (2012) focused on the social aspect during usage of online learning portals and proposed a model where simplicity and adaptability of the system predict students’ experience. In like manner, there have been additional attempts to provide a QoE model and substantial analysis (Janowski & Papir, 2009; Kilkki, 2008), but many inconsistencies remain in the identification of relevant influencing factors.

Bearing in mind that research studies which demonstrate relevant students’ QoE models in distance learning environments are almost nonexistent, we have to consider valid technology acceptance models and sound theories in the distance education area to conduct significant QoE research. The technology acceptance model (TAM) addressed user acceptance of information systems, while specifying the causal relationship between perceived usefulness, ease of use, attitude, and actual usage behavior (Davis, Bagozzi, & Warshaw, 1989; Lee, Cheung, & Chen, 2005; Liu et al., 2010). Sahin and Shelley (2008) based their research on TAM during selection and measurement of variables, while proposing a model which suggested that students’ computer knowledge, perceived usefulness, and flexibility of distance education should be considered as predictors of students’ satisfaction in online learning environments.

On the other hand, the motivational theories have recognized motivation as an important factor for academic success, while different analyses showed a division between extrinsic (external) and intrinsic (self-determined) motivators (Lee, Cheung, & Chen, 2005; Ryan & Deci, 2000). Intrinsically motivated students are more persistent and more likely to achieve set goals since they are engaging in learning for the inherent satisfaction of acquiring knowledge (Hardre & Reeve, 2003; Murphy & Rodríguez-Manzanares, 2009). Even though generally intrinsic motivation is more effective and lasting than extrinsic motivation (Gagné & Medsker, 1996), the external motivating factors (e.g., higher grades, social influence, etc.) are important drivers capable of evoking specific behavior in distance education environments (Ryan & Deci, 2000), especially since high school students may have less intrinsic motivation (Smith, Clark, & Blomeyer, 2005).

In this study we adopted the importance of students’ motivation in distance learning environments and combined it with certain variables from TAM (ease of use and attitude) since the adoption of new technology is also determined by extrinsic and intrinsic motivators (Davis, Bagozzi, & Warshaw, 1992; Lee, Cheung, & Chen, 2005). Furthermore, we go beyond mere technology acceptance while trying to determine factors that can influence a higher level of positive high-school students’ QoE in distance education settings. We used some of the variables from existing studies to define constructs that can forecast students’ QoE while further comparing outcomes during online asynchronous and synchronous learning conditions.

This study aimed to identify factors that influence high school students’ QoE in distance education environments which involve asynchronous and synchronous activities. Therefore, in our research activities, we included 158 students from five different high schools in Macedonia, two in the capital city and three in other towns. Among the participants, all 15 to 16 years old, 55.7% (n = 88) were male and 44.3% (n = 70) were female; 57.6% of them used computers every day at home for school activities, 27.8% used computers two to three times a week, 5.1% once a week, and 9.5% did not use computers at home. Students without computers at home were asked to perform the necessary activities using school equipment at their own pace, so they could successfully participate in the research. During the 2012-2013 school year we introduced distance education methods in three different subjects (math, science, and art), in two learning sessions with similar topics per subject, while using different distance learning activities. Hence the participants attended a total of six learning sessions (asynchronous and synchronous in three subjects) and were able to provide relevant feedback with comparable opinions regarding different conditions. The student sample and the design provided a representative group which participated in various classes and thus diminished the effect of students’ preference towards specific subjects.

The teachers provided streaming videos and notes for each lecture on the schools’ learning portals during the asynchronous activities and discussed the materials with students over email and the portals’ forum. Therefore students were able to use the online curricular materials on their own time for a few days and collaborate with their classmates under guidance of the teachers. The synchronous activities were conducted in class with videoconferencing sessions between two high schools in different cities. Each videoconferencing site had a teacher/student camera, proper sound systems, and two displays for both parties during the two-way video communication. The first part of the lecture was presented by the teacher in one site, was concluded by the teacher in the other site, and was followed by interactive discussion among participants. Students’ feedback information was collected through surveys after each learning session, which included questions regarding students’ opinions to the interest of this study, that were further used as research variables. Links to surveys during asynchronous activities were provided on the schools’ learning portals for easy access, while during videoconferencing-based sessions the surveys were performed online at the end of each class.

Since multi-item measures are more adequate than single-item when measuring complex constructs (Nunnally & Bernstein, 1994), such as students’ perceived QoE and influencing factors, we defined a large set of observed variables while forming five complex unobserved variables, referred to as latent constructs. Details of the instruments are described below, with the necessary difference for different learning conditions.

In line with existing studies that provided relations between quality perceived by end-users from different systems and delivered technical performance (Khan, Sun, & Ifeachor, 2012; Zapater & Bressan, 2007), we evaluated a technical performance variable (TECH) during asynchronous/synchronous learning sessions. The surveys contained a section regarding students’ perception of the technical conditions: students’ perceived quality of the video (T1) and audio (T2) signals; beliefs regarding adequate audio/video synchronization (T3); and proper functioning of equipment during videoconferencing sessions and streaming media delivery for the asynchronous activities (T4).

Technology acceptance models have stressed the importance of usability with different technological solutions, since the “easy to use” approach does influence end-users’ experience. Therefore we formed an easy usage variable (EASY) during videoconferencing sessions constructed from four observed items in our questionnaires: the level of appropriate teacher-student live interaction (E1); students’ perceived easiness in following the lessons (E2); the degree to which students were able to easily understand the content (E3); and ease of use of the videoconferencing equipment (E4).

In the course of our research activities, we found that the content delivered through streaming videos and lecture notes played a more important role than ease of usage of the schools’ portals during asynchronous learning. This notion is consistent with Lee, Cheung, and Chen’s (2005) findings suggesting that perceived ease of use is no longer a crucial factor when students use internet-based learning portals. Therefore we made a slight distinction and used content instead of easy usage as a research variable (CONT) for these activities. We have constructed CONT from the following observed variables: quality of instructions within the recorded materials and lecture notes (C1); students’ opinion of the content modules and online discussions in regards to subjects’ topic (C2); and students’ beliefs regarding appropriateness of content to their high-school level (C3).

The attitude of high school students towards distance education novelties in the learning environment is important since TAM and similar technology acceptance models have linked attitude and intention to use (Davis, Bagozzi, & Warshaw, 1989; Lee, Cheung, & Chen, 2005). Therefore we researched attitude as a variable (ATT) in asynchronous and synchronous learning conditions, constructed from level of acceptance of the following: new teaching approach (A1); students’ beliefs regarding collaboration and intuitive atmosphere (A2); and students’ attitude towards novelties in teaching practice in general (A3).

The importance of students’ motivation in distance education has been widely recognized (Ryan & Deci, 2000) and strongly linked with students’ learning achievements (Tüzün et al., 2009). Xie, Debacker, and Ferguson’s (2006) findings demonstrated that perceived interest (intrinsic motivator) and value as extrinsic motivation positively correlated with online students’ course attitude and engagement, while Chen and Jang (2010) provided a research model with evidence for the mediating effect of need satisfaction between contextual support, motivation, and self-determination. Thus, we evaluated high-school students’ motivation (MOTIV) through a section in the questionnaires that focused on the following: motivation for the challenge and new teaching approach (M1) and interest to use distance education activities for other subjects on their own initiative (M2) as intrinsic motivators; students’ belief that distance learning activities would enhance their grades (M3) and students’ obligation to use/reuse streaming video or recorded videoconferencing sessions after class for learning (M4) as extrinsic motivators.

Since QoE is a subjective, “out of the mind” opinion and can relate to fun activities during learning, students’ satisfaction (Sahin & Shelley, 2008), perceived effectiveness, and so on, we formulated high school students’ experience in asynchronous and synchronous distance education environments as an unobserved variable (QoE), measured by the following: students’ perceived experience for natural feeling and increased efficiency (Q1); beliefs for increased possibilities and productivity (Q2); the degree to which students think this type of learning is interesting and enjoyable (Q3); and overall students’ satisfaction from the new learning environments (Q4).

Students’ feedback information was gathered through a questionnaire after each learning session that covered all measures of the five latent constructs, phrased on a five-point Likert scale (where 1 = strongly disagree and 5 = strongly agree). Internal consistency of the surveyed items for each construct was assessed through Cronbach’s alpha test (Cronbach, 1951) as evidence that the research items measure the underlying construct. In addition, we tested the adopted constructs for necessary validity that demonstrates the degree to which they represent the theoretical concepts (Colliver, Conlee, & Verhulst, 2012).
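To make the internal consistency check concrete, the following is a minimal sketch of how Cronbach’s alpha can be computed for one construct from five-point Likert responses. The function name `cronbach_alpha` and the response matrix are hypothetical illustrations, not the study’s data or the SPSS procedure actually used.

```python
import numpy as np

def cronbach_alpha(items):
    """Cronbach's alpha for one construct.

    items: 2-D array-like, rows = respondents, columns = items
    (e.g., Q1..Q4 for the QoE construct).
    """
    items = np.asarray(items, dtype=float)
    k = items.shape[1]                           # number of items in the scale
    item_vars = items.var(axis=0, ddof=1)        # sample variance of each item
    total_var = items.sum(axis=1).var(ddof=1)    # variance of the summed score
    return (k / (k - 1)) * (1.0 - item_vars.sum() / total_var)

# Hypothetical Likert responses (1 = strongly disagree, 5 = strongly agree);
# these values are invented for illustration only.
responses = np.array([
    [4, 5, 4, 4],
    [3, 3, 2, 3],
    [5, 5, 5, 4],
    [2, 2, 3, 2],
    [4, 4, 4, 5],
    [1, 2, 1, 2],
])

alpha = cronbach_alpha(responses)
print(f"alpha = {alpha:.3f}")
```

A value above the commonly cited 0.70 threshold would be read as good internal consistency for the construct.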

Structural equation modeling (SEM) was used as a two-step analytical procedure: first to test and estimate complex relationships between observed (measured) variables and the corresponding unobserved (latent) constructs through the development of a measurement model; and then to evaluate relationships between the latent constructs with a structural model (Bollen, 1998; Byrne, 2001), especially their influence on the QoE variable. SEM surpasses multiple regression, discriminant analysis, or principal components analysis (Chin, 1998) and is particularly useful in social/behavioral research where many variables (e.g., attitude, motivation, and experience) are not directly observable, while taking into account the measurement errors. The statistical analyses in this study were conducted using Statistical Package for Social Sciences (SPSS) and Analysis of Moment Structures (AMOS) software. We evaluated a structural model to estimate relationships and predict high school students’ QoE during asynchronous and synchronous distance education activities, while comparing results between the different learning conditions.

Following the proposed methodology, we collected students’ feedback information on all research variables through questionnaires and received 470 students’ responses from the asynchronous learning activities and 473 responses from classes organized with videoconferencing sessions between two different high schools, representing a response rate above 99% in both situations. The students participated in all learning sessions, so they were able to compare and express their subjective experience on each teaching approach while practising three different subjects.
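The reported response rates can be checked with a short calculation. This sketch assumes that each of the 158 students was expected to return one questionnaire per session, with three sessions (one per subject) in each learning condition; that expected total is an inference from the study design rather than a figure stated by the authors.

```python
# Assumption: one questionnaire per student per session, three sessions
# (math, science, art) in each learning condition.
students = 158
sessions_per_condition = 3
expected = students * sessions_per_condition   # 474 possible responses

def response_rate(received, expected_total):
    """Response rate as a percentage of the expected questionnaires."""
    return 100.0 * received / expected_total

async_rate = response_rate(470, expected)   # asynchronous activities
sync_rate = response_rate(473, expected)    # videoconferencing sessions

print(f"asynchronous: {async_rate:.1f}%, synchronous: {sync_rate:.1f}%")
# prints "asynchronous: 99.2%, synchronous: 99.8%"
```

Under this assumption both rates exceed 99%, which agrees with the figure given in the text.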

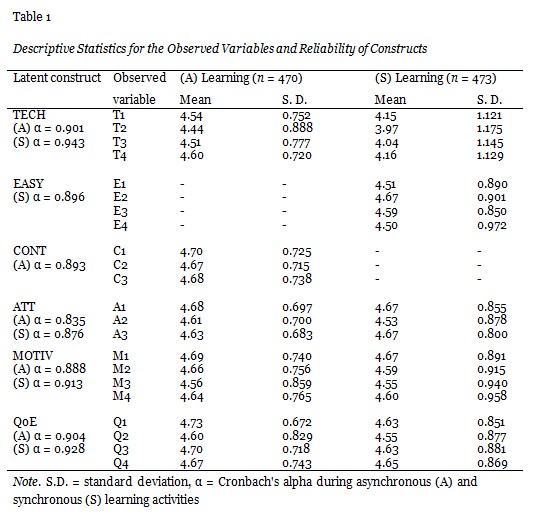

Since proper univariate statistical analysis is important to screen the nature of gathered data, we examined the measures for mean score and standard deviation of the observed variables within asynchronous and synchronous environments, before submitting the research dataset for factor analyses. We also evaluated internal consistency and reliability of each construct through Cronbach’s alpha test in both learning conditions with results analytically presented in Table 1.

We noticed that students’ responses in the questionnaires covered all possible grades from 1 (strongly disagree) to 5 (strongly agree) on different variables, but as shown in Table 1, the descriptive results demonstrated satisfactory standard deviation in both learning conditions, indicating that students’ responses were constructive in nature. From the initial results we can conclude that the level of students’ QoE was slightly higher during asynchronous learning, the technical setup was graded lower during videoconferencing sessions, while the rest of the variables show similar behavior. Still, deeper analysis requires factor correlation between each construct and observed variables, as well as presentation of the relationships between constructs by regression or path coefficients. Since, as a rule of thumb, Cronbach’s alpha values higher than 0.70 represent good internal consistency (Nunnally & Bernstein, 1994), the results show that the items within each proposed construct are strongly correlated and that the constructs can be used for model development. High alpha values alone, however, do not mean that a scale is unidimensional, so the factor structure still has to be examined through the measurement model.

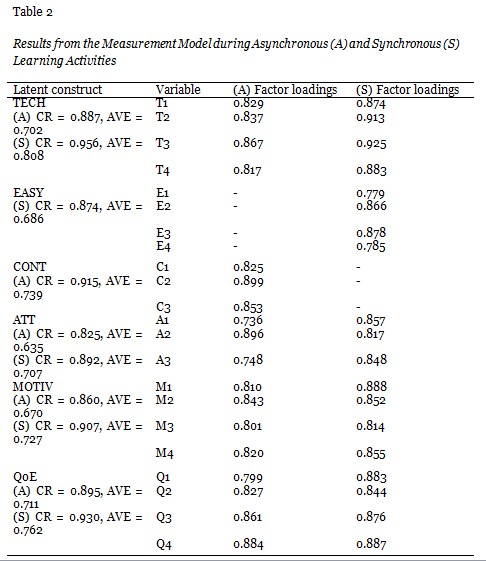

According to Kline (2005) SEM is a large sample technique and complicated path models need at least a sample size of 200 observations. We obtained more than 400 responses from the high school students during both learning conditions and therefore were able to model causal relations between the construct variables, especially influence of multiple variables on students’ QoE. But first we explored adequacy of the observed variables as indicators for the latent constructs (referred to as factor loadings) through evaluation of a measurement model in both environments. The collected data set from the students’ responses was examined within the measurement model, having in mind that standardized factor loadings estimates should be 0.5 or higher, and ideally 0.7 or higher (Nunnally & Bernstein, 1994). In addition, the measurement model was tested for convergent validity through two additional measures: average variance extracted (AVE) and construct reliability (CR) for each construct, derived from provided factor loadings with results demonstrated in Table 2.

As seen in Table 2, the observed variables regress highly on respective constructs with factor loadings above 0.7, as evidence that the research items provide adequate measurement of each underlying construct. On the other hand, a good rule of thumb is that AVE ≥ 0.5 indicates adequate convergent validity and CR should be at least 0.7 for the factor loadings on each construct, even though values between 0.6 and 0.7 may be acceptable. Thus, CR and AVE values were above the desired thresholds and therefore supported the validity of measures in both learning conditions.
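As an illustration of how AVE and CR follow from standardized factor loadings, the sketch below uses the widely used formulas AVE = Σλ² / n and CR = (Σλ)² / ((Σλ)² + Σ(1 − λ²)). The loadings are hypothetical values chosen for the example, not the entries of Table 2, and the function names are assumptions introduced here.

```python
def average_variance_extracted(loadings):
    """AVE: mean of the squared standardized loadings of one construct."""
    return sum(l * l for l in loadings) / len(loadings)

def construct_reliability(loadings):
    """CR: squared sum of loadings over itself plus the summed error variances."""
    squared_sum = sum(loadings) ** 2
    error_variance = sum(1.0 - l * l for l in loadings)  # 1 - lambda^2 per item
    return squared_sum / (squared_sum + error_variance)

# Hypothetical standardized loadings for a four-item construct.
loadings = [0.80, 0.75, 0.85, 0.70]

ave = average_variance_extracted(loadings)
cr = construct_reliability(loadings)
print(f"AVE = {ave:.3f}, CR = {cr:.3f}")
```

With these example loadings both statistics clear the rule-of-thumb thresholds mentioned above (AVE ≥ 0.5, CR ≥ 0.7).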

Still, when we further analyzed the results, we noticed that T4 in the asynchronous, A2 in the synchronous model, and their error measurements have high value for modification indices with some of the other factors (Hair et al., 1998). High values for modification indices may guide minor modifications in the model in order to improve the fit and estimate the most likely relationships between variables. Therefore we explored the option to remove these variables and improve goodness-of-fit statistics through a structural model refinement process, especially since these modifications are theoretically acceptable.

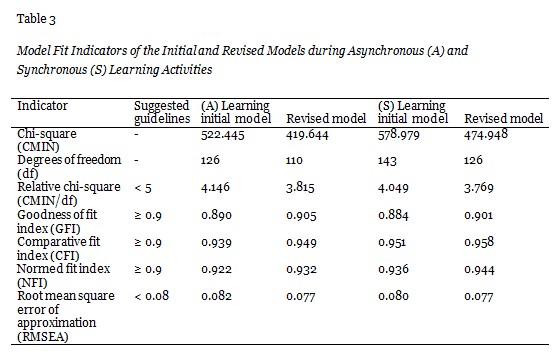

We analyzed complex relationships between the latent constructs and their behavior while influencing high school students’ QoE in the researched environments through development of a structural model for both learning conditions. Since SEM does not have a single statistical test that can determine whether the specified model fits the research data, we subjected the model to various tests with the sole purpose of validating the model and arriving at the best-fit model. We evaluated the initial model and an alternative (revised) model which excluded T4/A2 for the asynchronous/synchronous environments (Table 3), while comparing the following fit indices against acceptance levels, as suggested by previous research: relative chi-square (Wheaton et al., 1977); goodness of fit index (Joreskog & Sorbom, 1984); comparative fit index (Kline, 2005); normed fit index (Bentler & Bonett, 1980); and root mean square error of approximation (MacCallum, Browne, & Sugawara, 1996).
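Several of the listed fit indices can be derived directly from chi-square statistics. The following sketch shows the usual textbook formulas for relative chi-square, RMSEA, NFI, and CFI; the input values are hypothetical, the function name `fit_indices` is an assumption, and the exact conventions used by AMOS may differ in detail. GFI is omitted because it requires the observed and implied covariance matrices rather than chi-square values alone.

```python
import math

def fit_indices(chi2, df, chi2_null, df_null, n):
    """Common SEM fit indices from model and null (independence) chi-squares.

    chi2, df            : chi-square and degrees of freedom of the tested model
    chi2_null, df_null  : same quantities for the independence model
    n                   : number of observations
    """
    d_model = max(chi2 - df, 0.0)            # model noncentrality estimate
    d_null = max(chi2_null - df_null, 0.0)   # null-model noncentrality estimate
    denom = max(d_model, d_null)
    return {
        "relative_chi2": chi2 / df,
        "rmsea": math.sqrt(d_model / (df * (n - 1))),
        "nfi": (chi2_null - chi2) / chi2_null,
        "cfi": 1.0 if denom == 0 else 1.0 - d_model / denom,
    }

# Hypothetical values, for illustration only (not taken from Table 3).
fit = fit_indices(chi2=250.0, df=100, chi2_null=3000.0, df_null=120, n=470)
for name, value in fit.items():
    print(f"{name}: {value:.3f}")
```

Comparing such values for the initial and revised models against accepted cut-offs is what the refinement step amounts to in practice.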

The fit statistics showed that the revised model was improved through the refinement process in both environments and therefore we selected the revised model as final among the two alternatives. Furthermore, these modifications do not significantly change the constructs’ nature, since streaming media delivery during asynchronous activities (T4) within standard schools’ portals and beliefs regarding intuitive atmosphere (A2) during videoconferencing can be neglected and derived from the remaining measurements.

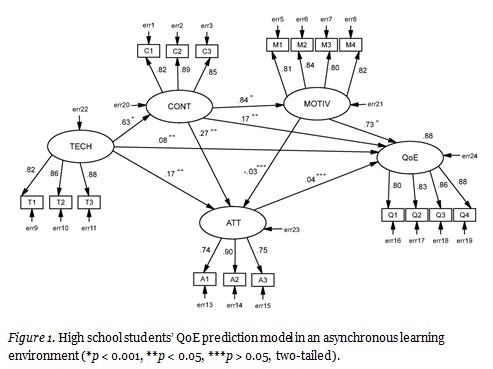

Thus we obtained the final structural model that adequately explains and has the ability to predict high school students’ QoE in an asynchronous distance education environment (Figure 1).

The presented model shows the relationships (paths) between latent constructs, while formulating one exogenous and four endogenous variables. The TECH (exogenous) variable is influenced by factors outside of the model that come from the technical setup, networking, and system performance, while the endogenous variables are explained by other constructs via structural model relationships. These relationships are formulated generally and might be fitted for distance education students of varying ages, but the actual results demonstrate beliefs of high school students while participating in asynchronous learning activities. As shown here, the QoE construct (high school students’ perceived QoE) is significantly determined by MOTIV (β = 0.73, p < 0.001), CONT (β = 0.17, p < 0.05), and TECH (β = 0.08, p < 0.05), with the model accounting for an R² of 0.88 in the QoE construct. During asynchronous e-learning, students’ activities depend on their own will to study and use the materials, so it seems logical that students’ motivation significantly influenced their perceived QoE. Additionally, the content and the technical behavior also contributed to students’ QoE, while students’ attitude towards the new teaching approach did not correlate with their overall experience (ATT/QoE reported path with p > 0.05). The path coefficients, illustrating correlation between the other constructs, show significant influence between CONT/MOTIV (β = 0.84, p < 0.001) and TECH/CONT (β = 0.63, p < 0.001), and positive effect between CONT/ATT (β = 0.27, p < 0.05) and TECH/ATT (β = 0.17, p < 0.05). Therefore the content delivered through the streaming videos and lecture notes strongly influenced students’ motivation and also correlated with their attitude. The results demonstrate that students’ perceptions of the technical performance were important and were correlated with the perceived notion of content and their attitude towards the new learning environment. However, we did not find a statistically significant direct effect between students’ motivation and their attitude, since MOTIV/ATT reported path with p > 0.05.
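The reported path coefficients can also be combined to gauge indirect influence on QoE. The sketch below applies the standard path-tracing rule for a recursive model with standardized coefficients (an indirect effect is the product of the coefficients along a path, and a total effect is the sum over all paths). These totals are not reported by the authors; the calculation uses only the significant paths quoted in the text, and it assumes the path directions as described there (for example, TECH/CONT read as TECH influencing CONT).

```python
# Significant standardized paths from the asynchronous model, as quoted in
# the text. Directions are an assumption based on the prose description.
paths = {
    ("TECH", "CONT"): 0.63,
    ("CONT", "MOTIV"): 0.84,
    ("CONT", "ATT"): 0.27,
    ("TECH", "ATT"): 0.17,
    ("TECH", "QoE"): 0.08,
    ("CONT", "QoE"): 0.17,
    ("MOTIV", "QoE"): 0.73,
}

def total_effect(source, target, path_coefficients):
    """Sum of products of coefficients over every directed path source -> target.

    Assumes the model is recursive (no cycles), so the recursion terminates.
    """
    if source == target:
        return 1.0
    return sum(
        coef * total_effect(mid, target, path_coefficients)
        for (start, mid), coef in path_coefficients.items()
        if start == source
    )

tech_total = total_effect("TECH", "QoE", paths)   # direct + via CONT + via CONT and MOTIV
cont_total = total_effect("CONT", "QoE", paths)   # direct + via MOTIV
print(f"TECH -> QoE total: {tech_total:.3f}")
print(f"CONT -> QoE total: {cont_total:.3f}")
```

Under these assumptions, paths ending in ATT contribute nothing to QoE because ATT/QoE was not significant, while most of the influence of technical performance and content reaches QoE through motivation.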

Following our research methodology we constructed a similar structural model and examined model fit during synchronous activities, as shown in Figure 2.

The results from the structural model during synchronous learning activities show similar behavior to the asynchronous model, with the model explaining R² = 0.93 of the variance in the QoE construct. In like manner, high school students’ QoE was significantly determined by MOTIV (β = 0.49, p < 0.001), EASY (β = 0.23, p < 0.05), and TECH (β = 0.12, p < 0.001), which is similar to the asynchronous settings, with the predetermined distinction for EASY and CONT. We found additional patterns, where ease of usage during videoconferencing sessions significantly influenced students’ motivation (EASY/MOTIV reported β = 0.80, p < 0.001) and had a moderate effect on attitude towards the new environment (EASY/ATT reported β = 0.12, p < 0.05). Additionally, the motivating factors had a significant impact on students’ attitude (MOTIV/ATT reported β = 0.86, p < 0.001), while the technical setup influenced students’ beliefs for ease of usage (TECH/EASY reported β = 0.57, p < 0.001).

However, we found that technical performance did not influence high school students’ attitude towards synchronous learning methods, since the path between TECH/ATT did not report statistical significance (p > 0.05). Furthermore, the results opposed the importance of attitude towards use in TAM (Davis, Bagozzi, & Warshaw, 1989) since ATT/QoE reported path with p > 0.05, which was identical to outcomes in the asynchronous learning environment. The data analysis indicated no significant difference among subjects or gender, which correlated with our initial assumption not to include these variables in the proposed model.

Motivated by a need to understand the nature of the high school students participating in distance education environments, the purpose of this study was to identify relevant factors that influence their subjective QoE, while further comparing outcomes during asynchronous and synchronous learning conditions. Even though certain studies have categorized students involved in K–12 environments as “digital natives” (Koutropoulos, 2011; Li & Ranieri, 2010; Prensky, 2001), we moved beyond this concept based on generational differences and assessed high school students as the same as any distance education practitioner in order to obtain conclusions which are specific for this target group. In the course of our research activities, we defined latent constructs measured through a set of observed variables, demonstrated strong measurement structure, and proposed a model that formulated interrelationships among technical performance, students’ motivation, attitude, and ease of usage or content (during synchronous or asynchronous activities, respectively), while predicting high school students’ QoE. Consistent with Somenarain, Akkaraju, and Gharbaran (2010), we found similar behavior in both distance education methods, while the model explained a high percentage of variance in students’ QoE (88% in asynchronous and 93% in synchronous learning environments), reflected as students’ overall satisfaction, beliefs for natural feeling and enjoyable activity, increased efficiency, and productivity.

Our study revealed that the main determinant of high school students’ QoE within asynchronous and synchronous learning environments is students’ motivation, especially during asynchronous activities that generally depend on students’ own initiative. For the high school participants involved in this study, intrinsic and extrinsic motivators regressed positively on overall motivation (with a minor preference for intrinsic factors), supporting existing studies which highlight that motivation is a complicated, multidimensional inner process, as opposed to a singular, monolithic construct (Chen & Jang, 2010; Ryan & Deci, 2000; Xie, Debacker, & Ferguson, 2006). On the other hand, while the literature on TAM stresses the importance of ease of usage for acceptance of new technologies (Davis, Bagozzi, & Warshaw, 1989; Liu et al., 2010), there is research evidence suggesting that perceived ease of use is no longer a crucial factor during asynchronous learning (Lee, Cheung, & Chen, 2005). Therefore, we drew this distinction and selected ease of usage as an important factor during synchronous learning, since appropriate teacher-student interaction, ease of following the lessons, and use of videoconferencing equipment correlated positively with high school students’ QoE. In like manner, the content played a similar role during asynchronous learning activities, as evident from the outcomes of this study. We also found moderate support for the relationship between technical performance and students’ QoE in both learning methods, which agrees with existing studies that related technical conditions to the quality perceived by end-users of different systems (Khan, Sun, & Ifeachor, 2012; Zapater & Bressan, 2007). However, our findings contradict the importance of attitude in TAM or similar acceptance models (Davis, Bagozzi, & Warshaw, 1989; Lee, Cheung, & Chen, 2005), since the high school students instinctively welcomed the new teaching approach and their attitude did not correlate with their subjective QoE.

Although the literature abounds with distance education studies that explore the benefits of emerging technologies, different roles and competencies, proper design of education systems, and so on, the current study used SEM analysis and a student-centered approach to identify important factors that can predict high school students’ positive QoE in different learning conditions. Even though distance education and technology are closely connected, we tried to abstract away the design of educational tools and the technological layer, focusing instead on the social behavior and cognitive level of such a learning process. Hence, the results present valuable information to all stakeholders of virtual schools or evolving high schools that try to incorporate distance learning activities while striving to facilitate student-centered, experiential, effective, and enjoyable environments.

Since this study demonstrated a strong measurement structure, in our further work we will use these constructs, test the model, and attempt to forecast students’ QoE in primary, tertiary, and adult distance education, while comparing results across students of varying ages. The current study serves as one of the few attempts to determine factors influencing students’ QoE; hence, future efforts may use the QoE prediction model and survey additional factors such as learning preferences, previous knowledge, and cognitive capabilities, while evaluating the quality of learning (QoL) in similar environments.

Barbour, M. (2011). Today’s student and virtual schooling: The reality, the challenges, the promise. Journal of Open, Flexible and Distance Learning, 13(1), 5-25.

Beldarrain, Y. (2006). Distance education trends: Integrating new technologies to foster student interaction and collaboration. Distance Education, 27(2), 139-153.

Bentler, P. M., & Bonett, D. G. (1980). Significance tests and goodness of fit in the analysis of covariance structures. Psychological Bulletin, 88(3), 588.

Bernard, R. M., Abrami, P. C., Borokhovski, E., Wade, C. A., Tamim, R. M., Surkes, M. A., & Bethel, E. C. (2009). A meta-analysis of three types of interaction treatments in distance education. Review of Educational Research, 79(3), 1243-1289.

Bernard, R. M., Abrami, P. C., Lou, Y., Borokhovski, E., Wade, A., Wozney, L., et al. (2004). How does distance education compare with classroom instruction? A meta-analysis of the empirical literature. Review of Educational Research, 74(3), 379–439.

Bollen, K. A. (1998). Structural equation models. John Wiley & Sons, Ltd.

Byrne, B. M. (2001). Structural equation modeling with AMOS, EQS, and LISREL: Comparative approaches to testing for the factorial validity of a measuring instrument. International Journal of Testing, 1(1), 55-86.

Cavanaugh, C., Gillan, K. J., Kromey, J., Hess, M., & Blomeyer, R. (2004). The effects of distance education on K-12 student outcomes: A meta-analysis. Naperville, IL: Learning Point Associates.

Chang, S. H. H., & Smith, R. A. (2008). Effectiveness of personal interaction in a learner-centered paradigm distance education class based on student satisfaction. Journal of Research on Technology in Education, 40(4), 407-426.

Chen, K. C., & Jang, S. J. (2010). Motivation in online learning: Testing a model of self-determination theory. Computers in Human Behavior, 26(4), 741-752.

Chin, W. W. (1998). Issues and opinion on structural equation modeling. MIS Quarterly, 22(1), 7–16.

Giancola, J. K., Grawitch, M. J., & Borchert, D. (2009). Dealing with the stress of college: A model for adult students. Adult Education Quarterly, 59(3), 246-263.

Colliver, J. A., Conlee, M. J., & Verhulst, S. J. (2012). From test validity to construct validity… and back? Medical Education, 46(4), 366-371.

Cronbach, L. J. (1951). Coefficient alpha and the internal structure of tests. Psychometrika, 16(3), 297-334.

Davis, F. D., Bagozzi, R. P., & Warshaw, P. R. (1989). User acceptance of computer technology: A comparison of two theoretical models. Management Science, 35(8), 982–1003.

Davis, F. D., Bagozzi, R. P., & Warshaw, P. R. (1992). Extrinsic and intrinsic motivation to use computers in the workplace. Journal of Applied Social Psychology, 22(14), 1111-1132.

Donavant, B. W. (2009). The new, modern practice of adult education online instruction in a continuing professional education setting. Adult Education Quarterly, 59(3), 227-245.

Eom, S. B., Wen, H. J., & Ashill, N. (2006). The determinants of students’ perceived learning outcomes and satisfaction in university online education: An empirical investigation. Decision Sciences Journal of Innovative Education, 4(2), 215-235.

Gagné, R. M., & Medsker, K. L. (1996). The conditions of learning: Training applications. Fort Worth: Harcourt Brace College Publishers.

Gillies, D. (2008). Student perspectives on videoconferencing in teacher education at a distance. Distance Education, 29(1), 107-118.

Gong, Y., Yang, F., Huang, L., & Su, S. (2009). Model-based approach to measuring quality of experience. In Emerging Network Intelligence, 2009 First International Conference (pp. 29-32). IEEE.

Hair, J. F., Anderson, R. E., Tatham, R. L., & Black, W. C. (1998). Multivariate data analysis (5th ed.). NY: Prentice Hall International.

Hardre, P. L., & Reeve, J. (2003). A motivational model of rural students’ intentions to persist in, versus drop out of, high school. Journal of Educational Psychology, 95(2), 347.

Helsper, E. J., & Eynon, R. (2010). Digital natives: Where is the evidence? British Educational Research Journal, 36(3), 503-520.

Hew, K. F., & Cheung, W. S. (2010). Use of three‐dimensional (3‐D) immersive virtual worlds in K-12 and higher education settings: A review of the research. British Journal of Educational Technology, 41(1), 33-55.

Hrastinski, S. (2008). Asynchronous and synchronous e-learning. Educause Quarterly, 31(4), 51-55.

Janowski, L., & Papir, Z. (2009). Modeling subjective tests of quality of experience with a generalized linear model. In Quality of Multimedia Experience, 2009. QoMEx 2009. International Workshop (pp. 35-40). IEEE.

Jaques, D., & Salmon, G. (2012). Learning in groups: A handbook for face-to-face and online environments. Routledge.

Johnson, G. (2008). The relative learning benefits of synchronous and asynchronous text-based discussion. British Journal of Educational Technology, 39(1), 166-169.

Joreskog, K. G., & Sorbom, D. (1984). LISREL VI user’s guide. Mooresville, IN: Scientific Software.

Khan, A., Sun, L., & Ifeachor, E. (2012). QoE prediction model and its application in video quality adaptation over UMTS networks. Multimedia, IEEE Transactions, 14(2), 431-442.

Kilkki, K. (2008). Quality of experience in communications ecosystem. J. UCS, 14(5), 615-624.

Kline, R. B. (2005). Principles and practice of structural equation modeling (2nd ed.). New York: Guilford Press.

Knoche, H., & Sasse, M. A. (2008). Getting the big picture on small screens: Quality of experience in mobile TV. Multimedia Transcoding in Mobile and Wireless Networks, 31-46.

Koutropoulos, A. (2011). Digital natives: Ten years after. MERLOT Journal of Online Learning and Teaching, 7(4), 1-21.

Laghari, K. U. R., & Connelly, K. (2012). Toward total quality of experience: A QoE model in a communication ecosystem. Communications Magazine, IEEE, 50(4), 58-65.

Lawson, T., Comber, C., Gage, J., & Cullum‐Hanshaw, A. (2010). Images of the future for education? Videoconferencing: A literature review. Technology, Pedagogy and Education, 19(3), 295-314.

Lee, M. K., Cheung, C. M., & Chen, Z. (2005). Acceptance of Internet-based learning medium: The role of extrinsic and intrinsic motivation. Information & Management, 42(8), 1095-1104.

Li, Y., & Ranieri, M. (2010). Are ‘digital natives’ really digitally competent?—A study on Chinese teenagers. British Journal of Educational Technology, 41(6), 1029-1042.

Liu, I. F., Chen, M. C., Sun, Y. S., Wible, D., & Kuo, C. H. (2010). Extending the TAM model to explore the factors that affect intention to use an online learning community. Computers & Education, 54(2), 600-610.

Malinovski, T., Lazarova, M., & Trajkovik, V. (2012). Learner–content interaction in distance learning models: Students’ experience while using learning management systems. Int. J. Innovation in Education, 1(4), 362–376.

MacCallum, R. C., Browne, M. W., & Sugawara, H. M. (1996). Power analysis and determination of sample size for covariance structure modeling. Psychological Methods, 1(2), 130.

Murphy, E., & Rodríguez-Manzanares, M. A. (2009). Teachers’ perspectives on motivation in high-school distance education. The Journal of Distance Education/Revue de l’Éducation à Distance, 23(3), 1-24.

Murphy, E., Rodríguez‐Manzanares, M. A., & Barbour, M. (2011). Asynchronous and synchronous online teaching: Perspectives of Canadian high school distance education teachers. British Journal of Educational Technology, 42(4), 583-591.

Nunnally, J. C., & Bernstein, I. H. (1994). Psychometric theory (3rd ed.). New York: McGraw-Hill.

Oblinger, D. G. (2005). Learners, learning & technology: The Educause Learning Initiative. Educause Review, 40(5), 66-75.

Oztok, M., Zingaro, D., Brett, C., & Hewitt, J. (2013). Exploring asynchronous and synchronous tool use in online courses. Computers & Education, 60(1), 87-94.

Powell, A., & Patrick, S. (2006). An international perspective of K-12 online learning: A summary of the 2006 NACOL International E-Learning Survey. North American Council for Online Learning.

Prensky, M. (2001). Digital natives, digital immigrants part 1. On the Horizon, 9(5), 1-6.

Robert, L. P., & Dennis, A. R. (2005). Paradox of richness: A cognitive model of media choice. Professional Communication, IEEE Transactions, 48(1), 10-21.

Ryan, R. M., & Deci, E. L. (2000). Self-determination theory and the facilitation of intrinsic motivation, social development, and well-being. American Psychologist, 55(1), 68–78.

Sahin, I., & Shelley, M. (2008). Considering students’ perceptions: The distance education student satisfaction model. Educational Technology & Society, 11(3), 216-223.

Smith, R., Clark, T., & Blomeyer, R. L. (2005). A synthesis of new research on K-12 online learning. Naperville, IL: Learning Point Associates.

Somenarain, L., Akkaraju, S., & Gharbaran, R. (2010). Student perceptions and learning outcomes in asynchronous and synchronous online learning environments in a biology course. MERLOT Journal of Online Learning and Teaching, 6(2), 353-356.

Steinweg, S. B., Williams, S. C., & Stapleton, J. N. (2010). Faculty use of tablet PCs in teacher education and K-12 settings. TechTrends, 54(3), 54-61.

Stricker, D., Weibel, D., & Wissmath, B. (2011). Efficient learning using a virtual learning environment in a university class. Computers & Education, 56(2), 495-504.

Tüzün, H., Yılmaz-Soylu, M., Karakuş, T., İnal, Y., & Kızılkaya, G. (2009). The effects of computer games on primary school students’ achievement and motivation in geography learning. Computers & Education, 52(1), 68-77.

Zapater, M. N., & Bressan, G. (2007, January). A proposed approach for quality of experience assurance of IPTV. In Digital Society, 2007, ICDS’07. First International Conference on the (pp. 25-25). IEEE.

Xie, K., Debacker, T. K., & Ferguson, C. (2006). Extending the traditional classroom through online discussion: The role of student motivation. Journal of Educational Computing Research, 34(1), 67–89.

Wheaton, B., Muthen, B., Alwin, D. F., & Summers, G. (1977). Assessing reliability and stability in panel models. Sociological Methodology, 8(1), 84-136.