Mimi Recker1, Min Yuan1, and Lei Ye2

1Utah State University, USA, 2Pacific Northwest University of Health Sciences, USA

The widespread availability of high-quality Web-based content offers new potential for supporting teachers as designers of curricula and classroom activities. When coupled with a participatory Web culture and infrastructure, teachers can share their creations as well as leverage from the best that their peers have to offer to support a collective intelligence or crowdsourcing community, which we dub crowdteaching. We applied a collective intelligence framework to characterize crowdteaching in the context of a Web-based tool for teachers called the Instructional Architect (IA). The IA enables teachers to find, create, and share instructional activities (called IA projects) for their students using online learning resources. These IA projects can further be viewed, copied, or adapted by other IA users. This study examines the usage activities of two samples of teachers, and also analyzes the characteristics of a subset of their IA projects. Analyses of teacher activities suggest that they are engaging in crowdteaching processes. Teachers, on average, chose to share over half of their IA projects, and copied some directly from other IA projects. Thus, these teachers can be seen as both contributors to and consumers of crowdteaching processes. In addition, IA users preferred to view IA projects rather than to completely copy them. Finally, correlational results based on an analysis of the characteristics of IA projects suggest that several easily computed metrics (number of views, number of copies, and number of words in IA projects) can act as an indirect proxy of instructionally relevant indicators of the content of IA projects.

Keywords: Distributed learning environments; evaluation of CAL systems; interactive learning environments; pedagogical issues

Teachers have long been designing and modifying curricula and lesson plans (Ball & Cohen, 1996; Brown & Edelson, 2003; Fogleman, McNeil, & Krajcik, 2011; Remillard, 2005). More recently, this phenomenon, called teachers as designers, has drawn renewed interest (e.g., Davis & Varma, 2008), prompted in part by the widespread availability of high-quality online resources, called open educational resources (OER), via the Internet. As OER become increasingly and widely available, research is needed to understand how teachers design curricula, lesson plans, and classroom activities using such resources. In particular, in networked computing environments that provide easy sharing and reuse, how do teachers become engaged in designing, sharing, and modifying instructional artifacts, and do these activities impact their resulting quality?

Our approach for supporting teachers as designers is via a free, Web-based authoring tool called the Instructional Architect (IA.usu.edu), which enables teachers to find and design instructional activities for their students using OER (Recker, 2006). Teachers can share these resulting activities, called IA projects, by making them publicly available within the IA. These IA projects can then be viewed, copied, or adapted by other IA users to further support their own teaching activities (Recker et al., 2005; Recker et al., 2007). Viewed in this way, the IA provides an infrastructure for collective intelligence and crowdsourcing, which we dub crowdteaching, in which teachers can create, share, and iteratively adapt instructional activities using OER, leveraging their peers’ work to best serve the needs of their students (Benkler, 2006; Borgman et al., 2008; Porcello & Hsi, 2013).

In collective intelligence communities, loosely organized groups of people connected by the Internet work together to accomplish tasks in ways that appear more intelligent, more effective, and more efficient than working alone (Malone, Laubacher, & Dellarocas, 2009). However, studies of collective intelligence sites, such as Wikipedia, suggest that these peer production models may succeed only when they are aimed at focused tasks and coupled with incentives to harness the work of the best contributors (Malone et al., 2009). Thus for crowdteaching models to succeed, we need more nuanced understandings of how teachers can participate in such environments to create and share instructional activities around OER.

This exploratory article examines how teachers’ activities within the IA may reveal crowdteaching processes. We first provide an overview of the IA system, and then describe how it fits within a framework for examining collective intelligence communities. Next, we examine the usage and creation activities of two teacher samples and analyze the characteristics of a subset of IA projects implemented by teachers in classrooms. In this way, we explore what aspects of these teachers’ design activities may enhance the collective intelligence of the IA community and the nature of the artifacts that are produced.

The collective intelligence context for this work is the Instructional Architect (IA.usu.edu), in use since 2001. The IA is a free, easy-to-use Web-based authoring tool that enables teachers to easily find and assemble OER into learning activities for their students (Recker, 2006). The IA was originally designed for K-12 teachers, and has been widely used in various subject areas (e.g., art, engineering, math) by teachers in both K-12 and higher education settings.

To support teachers’ design and collaboration activities, the IA offers several functions. For example, the “My Resources” area allows teachers to search for and save OER to their personal collections. The “My Projects” area allows teachers to create IA projects using collected OER, and publish (or share) these IA projects. From the perspective of collaboration, teachers can view published IA projects by using the “search” function, and copy their favorite ones to their personal collection. In this way, the IA supports crowdteaching processes in that teachers can create and contribute IA projects, as well as view, copy, and build upon other teachers’ IA projects that have been contributed to the IA community.

Since 2005, the IA has attracted over 7,600 registered users, who have gathered approximately 74,000 OER and created over 17,300 IA projects. Since August 2006, public IA projects have been viewed over 2.5 million times. These IA projects address a range of subject areas and grade levels, rely on a range of pedagogies, and incorporate varying numbers of OER in many different ways.

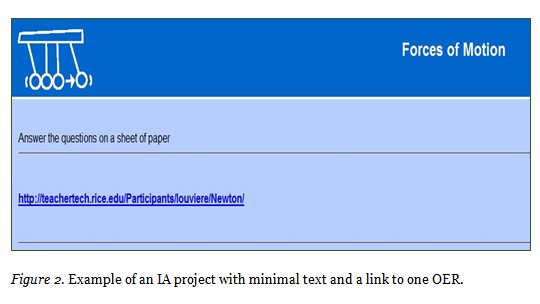

Figure 1 shows an example IA project created by a middle school science teacher. In this example, the teacher has written text that presents a problem, as well as supporting information to help solve the problem. This information includes a link to an online simulation that helps students learn what variables affect density. Here, the OER is included as a support to the primary problem solving activity.

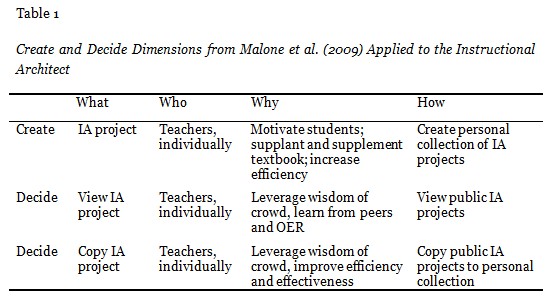

Figure 2 shows a simpler IA project on a science-related topic for 8th grade students. In this example, the teacher directs students to a link, while asking them to complete a worksheet. In this case, the OER plays the major role in instruction.

In this section, we first review a framework for characterizing collective intelligence communities and show how it applies to crowdteaching in the context of the Instructional Architect. We then describe two indicators for examining and characterizing the content of teachers’ IA projects.

In a recent paper, Malone et al. (2009) analyzed over 250 examples of online collective intelligence and crowdsourcing communities, using two sets of related questions. The first set of questions examines “what,” that is, the goals or outcomes of the collective intelligence community. For example, key activities may involve creating artifacts or deciding on winners. These questions also address the primary processes behind these activities, for example, collecting or collaborating.

The second set of questions addresses “who” is engaged in tasks. In a collective intelligence community, either an egalitarian crowd or a hierarchy (where some participants have more decision-making power than others, e.g., editors in Wikipedia) performs the tasks. These questions also address “why,” or user motivations and incentives for engaging in tasks. In some cases, money may be the motivator. For example, in TeachersPayTeachers.com, teachers post lesson plans and activities that then can be purchased and revised by others (Abramovich & Schunn, 2012). In other communities, however, altruism is a key driver. In the Tapped In online community, for example, educational professionals engaged in mentoring and discussions with no concrete reward structures (Farooq, Schank, Harris, Fusco, & Schlager, 2007).

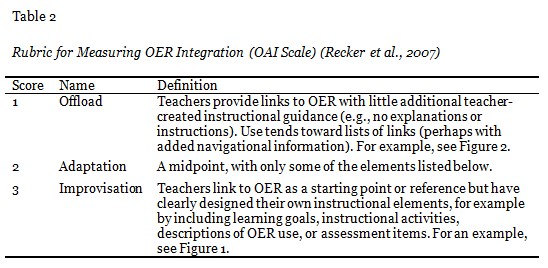

Following the framework of Malone et al. (2009), Table 1 shows key create and decide dimensions in the IA in terms of “what is being accomplished (goal),” “who is performing the task,” “why they are doing it (incentives),” and “how it is done.” For the create dimension in the IA, teachers work independently to design IA projects. As reported elsewhere, we have found that key motivations for teachers in using the IA include the desire to increase student motivation by using interactive content, to supplement their textbook materials, to increase student understanding using interactive resources, and to increase their efficiency as teachers (Recker et al., 2005; Recker et al., 2007). Teachers accomplish these tasks by creating a collection of IA projects, which they can then choose to share with only their students or with anyone using the IA site.

In the decide dimension in the IA, teachers can individually decide to view or copy an existing IA project from the public collection. For example, a teacher might decide to search for and view IA projects on a particular topic to see how other teachers are choosing to teach it and what OER they are using to support student learning. This approach might be more efficient than an unconstrained search of the Internet, which many teachers find highly inefficient (Mardis, ElBasri, Norton, & Newsum, 2012). It might also be more effective in that teachers can learn from other teachers and from the content of the OER they select (Ball & Cohen, 1996; Davis & Krajcik, 2005; Drake & Sherin, 2006). If teachers especially like an IA project, they can decide to copy it to their personal collection for further editing, adaptation, and reuse.

A key objective of the collective intelligence process is to leverage the work of others to improve the effectiveness and/or efficiency of creating artifacts. In education, the rapid rise of repositories of OER, such as those provided by TeacherTube, the National Science Digital Library (NSDL.org), the Khan Academy, the OER Commons, and OpenCourseWare (Atkins, Brown, & Hammond, 2007), has made evaluating the content of OER more pressing (Sumner, Khoo, Recker, & Marlino, 2003; Kastens et al., 2005; Porcello & Hsi, 2013).

Characterizing the content of OER, however, has proven a complex task. Multiple factors can impact a person’s judgment, such as an OER’s availability, credibility, currency, and authority of the content, and the context (e.g., pedagogy, setting) of use of an OER (Custard & Sumner, 2005; Leary, Giersch, Walker, & Recker, 2009; Wetzler et al., 2013). In this work, we adopted strategies used in previous work that assessed teachers’ naturally occurring artifacts (e.g., lesson plans) as a means to measure the quality of students’ learning opportunities (Penuel & Gallagher, 2009). Thus, we examined the content of artifacts created by teachers (i.e., IA projects) using two sets of instructionally relevant indicators. These indicators are described next.

Indicator 1: Problem-based learning (PBL). We applied a rubric developed in previous research (Walker et al., 2012) to score an IA project’s alignment with a form of inquiry learning, specifically problem-based learning (Barrows, 1996). The rubric consisted of 11 elements in four categories (Authentic Problem, Learning Processes, Facilitator, and Group Work), with the presence of each element rated on a 0–2 scale, resulting in a maximum possible score of 22 points. Three raters, randomly selected from a pool of five raters, independently scored the PBL alignment of the IA projects. Overall inter-rater reliability of the rubric as measured by average one-way random effects intra-class correlation (ICC) was high, with ICC = .86 (Walker et al., 2012).
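The scoring arithmetic behind the rubric can be sketched as follows. This is a minimal illustration only: the function names are hypothetical, the rubric's element wording is not reproduced, and how the three raters' scores were combined is not specified in the text, so the sketch stops at a single rater's total.

```python
N_ELEMENTS = 11        # rubric elements, per the text
MAX_PER_ELEMENT = 2    # each element is rated 0, 1, or 2
MAX_SCORE = N_ELEMENTS * MAX_PER_ELEMENT  # 22 points


def pbl_total(ratings):
    """Sum one rater's 11 element ratings into a 0-22 PBL alignment score."""
    if len(ratings) != N_ELEMENTS:
        raise ValueError("expected exactly 11 element ratings")
    if any(r not in (0, 1, 2) for r in ratings):
        raise ValueError("each rating must be 0, 1, or 2")
    return sum(ratings)


def percent_of_max(score):
    """Express a PBL alignment score as a percentage of the 22-point maximum."""
    return 100 * score / MAX_SCORE
```

For instance, `percent_of_max(3.32)` shows that the sample mean of 3.32 reported in the results corresponds to roughly 15% of the maximum possible score.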

Indicator 2: Offload-adaptation-improvisation (OAI). As an outcome of studying teachers’ adaptation of an innovative curriculum, Brown and Edelson (2003) devised the design capacity for enactment (DCE) framework within a “teaching as design” paradigm. As part of the framework, they defined a continuum of curriculum use, ranging from offloads to adaptations to improvisations. This continuum describes the distribution of responsibility for instruction between the teacher and the curriculum. In particular, in offloads, the curriculum is implemented essentially unchanged and the bulk of instructional decisions are contained in the instructional materials. In improvisation, the teacher flexibly borrows and customizes pieces while playing a major role in the decision-making process. The adaptation category represents the midpoint of the continuum.

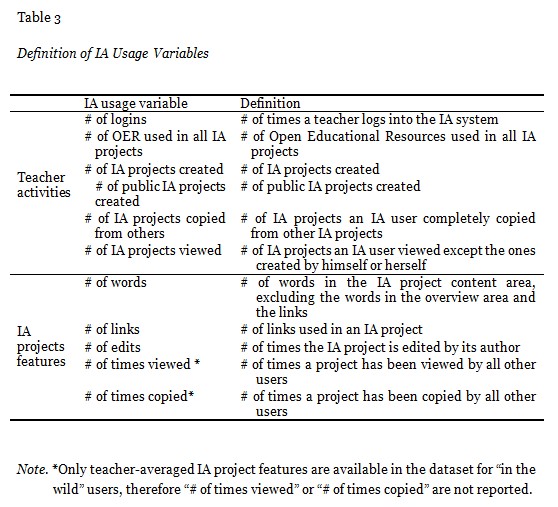

Building on our previous work (Recker et al., 2007), we operationalized aspects of the Brown and Edelson continuum to characterize how IA projects integrate OER (see Table 2). To measure inter-rater reliability, one coder scored all 72 IA projects and a second coder scored a random subset of IA projects. Krippendorff’s alpha suggests moderate to high reliability (Kalpha = .69).
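For readers unfamiliar with the reliability statistic, the following is a minimal sketch of Krippendorff's alpha for two coders with no missing codes. It assumes a nominal difference metric, which is our assumption for illustration; the text does not state which metric was applied to the three OAI categories, and the function name is hypothetical.

```python
from collections import Counter


def krippendorff_alpha_nominal(coder_a, coder_b):
    """Krippendorff's alpha for two coders, nominal metric, no missing data.

    alpha = 1 - D_o / D_e, where D_o is the observed disagreement and
    D_e is the disagreement expected by chance.
    """
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("both coders must rate the same non-empty set of units")
    n = 2 * len(coder_a)  # total number of pairable values
    # Each disagreeing unit contributes two off-diagonal coincidences.
    observed = 2 * sum(a != b for a, b in zip(coder_a, coder_b))
    counts = Counter(coder_a) + Counter(coder_b)
    # Sum over c != k of n_c * n_k equals n^2 minus the sum of squared counts.
    expected = n * n - sum(c * c for c in counts.values())
    if expected == 0:
        raise ValueError("alpha is undefined when only one category is used")
    return 1 - (n - 1) * observed / expected
```

With two coders who agree on every unit the function returns 1.0; values near 0 indicate agreement no better than chance.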

The core research questions guiding this work follow the framework of Malone et al. (2009) and are thus organized around users (teachers) and artifacts (IA projects):

To investigate these two questions, we analyzed two different datasets corresponding to each of the questions. These datasets illustrate IA usage in two different settings. The first sample, “in the wild”, includes users who were not actively recruited to use the IA. The second sample, PD participants, was drawn from a teacher professional development (PD) opportunity centered around use of the IA conducted in a western U.S. state.

To sample “in the wild” teachers, we selected users who created an IA account during the 2009 calendar year and who also indicated their years of teaching experience in the optional portion of their profile when they registered with the IA. These 200 teachers created a total of 520 IA projects.

The second sample, PD participants, comprised 36 middle school mathematics and science teachers who participated in a professional development series lasting three months. The professional development (PD), described elsewhere (Walker et al., 2012), focused on enhancing teachers’ technology skills for finding and selecting OER, and designing classroom activities around these OER using the Instructional Architect. These teachers created a total of 351 IA projects from September 2010 to August 2011. Using teacher journals, we selected two projects from each teacher (72 total) for further analysis using the following criteria: 1) the IA projects were created during the PD training period, 2) they were implemented in their classroom teaching, and 3) they were scored using the PBL alignment and OAI rubrics. As these teachers were all part of a sustained PD experience and those 72 projects were implemented in teaching, teacher behaviors and their IA project features are likely different from those of teachers “in the wild.”

For these two samples of users, several sources of data were analyzed. First, users completed an optional user profile upon creating their free IA account, in which they were asked to rate their comfort level with technology on a Likert scale, and report their years of teaching experience. Second, the IA was instrumented to automatically collect and aggregate detailed usage data and, third, IA project features (Khoo et al., 2008). In addition, two IA projects created by each of the 36 PD participants (72 total IA projects) were hand scored in terms of their PBL alignment score and their OAI score (described above).

To address RQ1, we used descriptive statistics to explore IA usage from both teacher and IA project perspectives. Table 3 shows definitions for the variables examined in the study.

To address RQ2, correlation analyses were conducted to examine the bivariate relationship between the IA project variables (PBL or OAI score) and IA usage variables. Since the PBL alignment scores were not normally distributed and the OAI score was a categorical variable, Spearman rank correlations were used. To further investigate which variable(s) predict the OAI scores, multinomial logistic regression models were fit. Before running the regression model, multicollinearity was examined according to the bivariate correlation analysis results, and no high correlations (> .8) were identified. A series of models and predictor variables were tested using backward elimination. The final models were selected based on their overall significance and parsimony.
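To make the rank-based approach concrete, the following is a minimal standard-library sketch of Spearman's rank correlation, computed as a Pearson correlation on average ranks so that tied values (common in count data such as views or copies) are handled. The function names are hypothetical and this is not the software used in the study.

```python
from math import sqrt


def average_ranks(values):
    """Return 1-based ranks, giving tied values the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j to cover the whole run of values tied with position i.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1  # mean of the 1-based positions i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks


def spearman_rho(x, y):
    """Spearman's rho: Pearson correlation of the average ranks of x and y."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("x and y must have the same length (at least 2)")
    rx, ry = average_ranks(x), average_ranks(y)
    mx, my = sum(rx) / len(rx), sum(ry) / len(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    sx = sqrt(sum((a - mx) ** 2 for a in rx))
    sy = sqrt(sum((b - my) ** 2 for b in ry))
    if sx == 0 or sy == 0:
        raise ValueError("rho is undefined when one variable is constant")
    return cov / (sx * sy)
```

Because only ranks enter the calculation, any monotonic relationship (linear or not) yields a coefficient of 1 or -1, which is why the statistic suits skewed scores and ordered categories.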

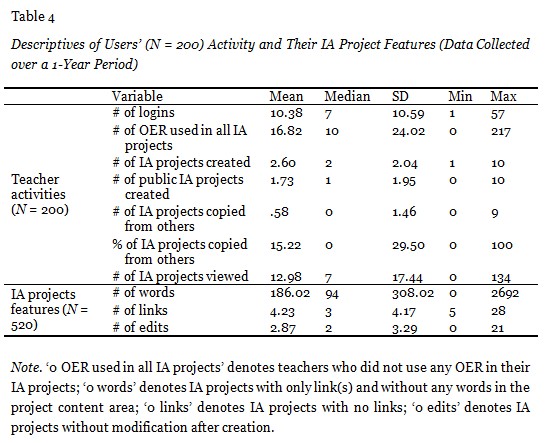

The first research question explored organic teacher activity and how it might relate to teachers’ collective intelligence processes. Table 4 shows summary data for the 200 “in the wild” teachers who created an IA account over the course of one year, and the 520 IA projects they created. On average, these teachers created a small number of IA projects and chose to share almost two thirds of them. In addition, 15% of their IA projects were copied directly from other IA projects. Thus, these teachers can be seen as both contributors to and consumers of crowdteaching.

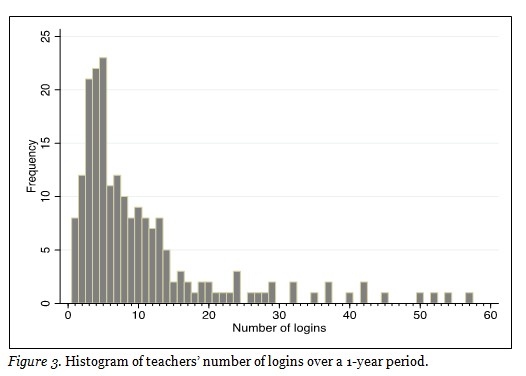

Further, the distribution of most usage values is skewed and follows a Zipf (or long tail) distribution (Anderson, 2006; Recker & Pitkow, 1996). As is common in many Internet-based datasets, a small number of users account for a majority of the activity (see Figure 3 for an example of a histogram of teachers’ number of logins). This has also been called the “90-9-1” rule or “participation inequality,” in that in typical online communities, 90% of participants are lurkers, 9% contribute occasionally, and 1% account for the most contributions (Nielsen, 2006).
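One simple way to quantify such participation inequality is to compute the share of total activity contributed by the most active users. The sketch below is illustrative only; the function name is hypothetical and the login counts in the usage note are invented, not drawn from the study data.

```python
def top_share(counts, top_fraction):
    """Fraction of total activity contributed by the most active users.

    counts: per-user activity counts (e.g., number of logins).
    top_fraction: proportion of users to treat as the "head" (0 < f <= 1).
    """
    if not counts or not 0 < top_fraction <= 1:
        raise ValueError("need a non-empty list and 0 < top_fraction <= 1")
    ranked = sorted(counts, reverse=True)
    k = max(1, round(len(ranked) * top_fraction))  # at least one user
    total = sum(ranked)
    if total == 0:
        return 0.0
    return sum(ranked[:k]) / total
```

For the invented counts `[120, 40, 15, 8, 5, 3, 2, 2, 1, 1]`, `top_share(counts, 0.1)` returns 120/197, meaning that the single most active of ten users accounts for about 61% of all logins.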

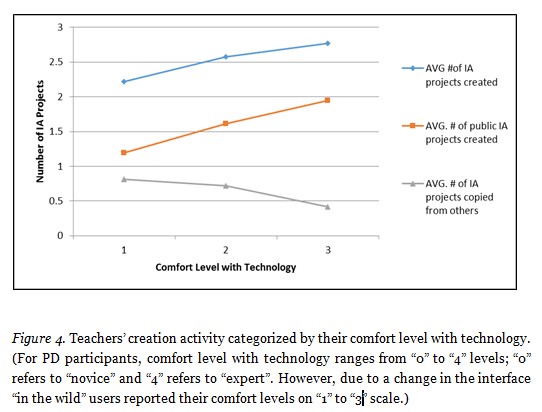

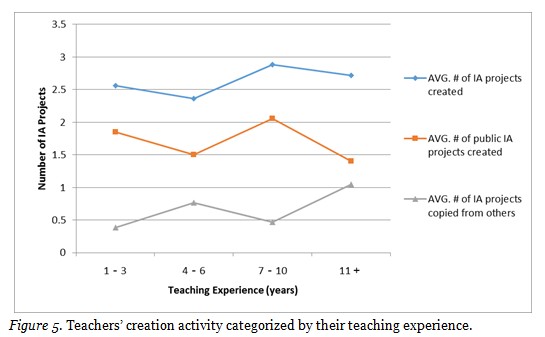

Figures 4 and 5 show these teachers’ IA project creation activity categorized by their self-reported comfort levels with technology and their years of teaching experience. Figure 4 shows that teachers who had a higher comfort level with technology appeared to have more contributor behavior: They created and shared the most IA projects but copied fewer. Teachers who had a lower comfort level with technology tended to display more consumer behavior: They created and shared fewer IA projects but copied more. Figure 5 shows teachers’ usage activity categorized by their self-reported teaching experience. Here, no clear pattern was apparent.

In sum, teachers can be involved in crowdteaching in different ways: offering wisdom by making their IA projects public, and benefiting from others’ wisdom by viewing and copying IA projects. In terms of enhancing the collective intelligence of the community, the challenge then becomes automatically deriving metrics that help identify IA projects that are valued in the community. This challenge is addressed next.

This research question examines the relationship between IA project features, teacher characteristics, and the creation of inquiry-oriented IA projects. Table 5 shows summary data for the 36 teachers and their 72 IA projects for data collected over one year.

In comparison to those IA projects created by teachers “in the wild,” these IA projects showed a much higher average number of edits, suggesting higher levels of effort. In addition, perhaps because these teachers were part of a sustained PD intervention, these teachers also showed, on average, higher levels of IA activity, including number of logins, IA projects created, projects viewed, projects copied, and OER collected.

Of these 72 IA projects, 51 (70.83%) were made public by their authors, meaning they could be viewed and copied by others. Table 5 shows that the 51 public IA projects were viewed more frequently than they were copied, suggesting that the IA users preferred to view IA projects for ideas and OER rather than to completely copy them. Copying an IA project may be an indication that a teacher places a very high value upon it. Table 5 also shows that overall mean scores for PBL alignment were low and their distribution was skewed (M = 3.32 on a 22-point scale). This skew precluded the use of statistics assuming normality, such as Pearson’s correlation and multiple regression.

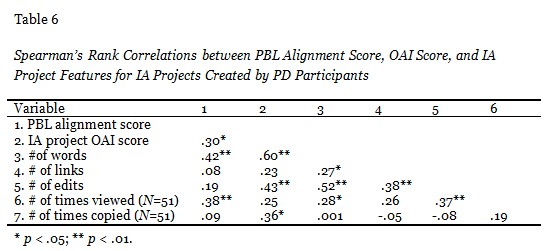

Spearman’s rank order correlation coefficients were calculated to investigate the relationships between various IA project features and PBL alignment scores (see Table 6). Results suggest that PBL alignment scores are positively and moderately correlated with the number of words and the number of views. Thus, if the PBL alignment score is viewed as an indicator of a useful IA project, the number of views and words can act as an indirect proxy of this measure. Table 6 also shows positive and moderate correlations between the number of words and the number of links, edits, and views. Moderate positive correlations were also found between the number of times an IA project was edited and the number of links and views.

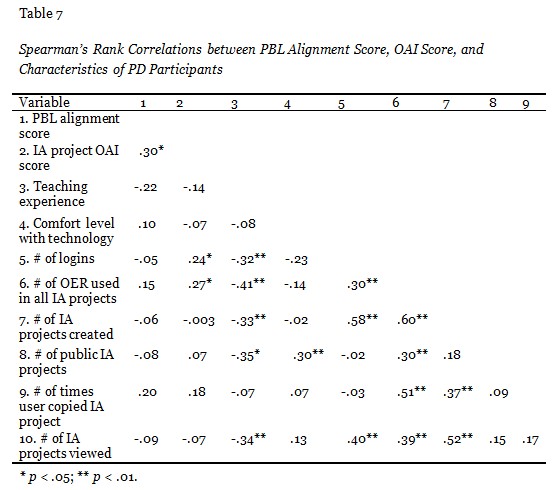

Spearman’s rank correlations were used to explore the relationships between teacher characteristics and the PBL alignment score of their IA projects. Table 7 shows that teachers’ reported teaching experience was moderately and negatively correlated with a variety of IA activities, such as the number of logins, the number of OER used, the number of IA projects created, the number of public IA projects created, and the number of IA projects viewed, suggesting that novice teachers may have found certain features of the IA more useful. Additionally, Table 7 shows a positive correlation between teachers’ comfort level with technology and the number of public IA projects created. Thus, as teachers’ comfort level with technology increases, they tend to publish and share more IA projects. This is similar to the pattern identified in Figure 4.

Other moderate and positive correlation results are not surprising and suggest that an engaged user shows overall higher levels of activity on all IA features. Note, however, that no strong correlations were found between the PBL alignment score and teacher characteristics.

This question examines the relationship between IA project features, teacher characteristics, and how IA projects are designed to integrate OER. For these 72 IA projects, 23 (31.9%) of the IA projects were categorized as an offload, 38 (52.8%) IA projects were in the adaptation category, and 11 (15.3%) IA projects were in the improvisation category (see Table 2 for definitions).

Spearman’s rank order correlation coefficients were calculated between OAI scores and IA project features (see Table 6). Results show that OAI score is positively correlated with the number of words, the number of edits, and the number of times an IA project was copied. Thus, as the number of words, edits, and copies of an IA project increased, its OAI score increased toward the improvisation end of the scale.

Spearman’s rank order correlation was also used to investigate the relationships between OAI scores and teachers’ characteristics (see Table 7). Results suggest that OAI scores are significantly and positively correlated with teachers’ number of logins and number of OER used. Thus, as a teacher’s number of logins and number of OER used increased, the OAI score of their IA projects increased toward the improvisation end of the scale.

As noted, Tables 6 and 7 show that the OAI scores of the 72 IA projects are significantly and positively correlated with the number of words, the number of edits, the number of times an IA project was copied, the number of logins, and the number of OER used. Several multinomial logistic regression models were fit to test whether different combinations of these five variables could significantly predict the OAI scores of IA projects. Note that multicollinearity was examined and eliminated as a concern for this dataset. Two final models are reported as follows.

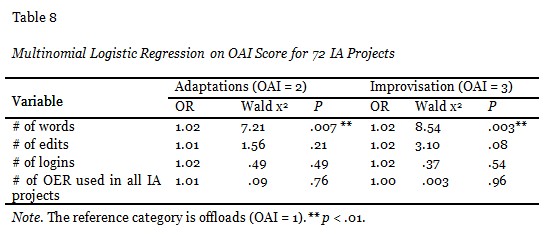

The first multinomial logistic regression model (see Table 8) was based on the 72 IA projects with four predictor candidates. The results show that only the number of words is a significant predictor of OAI score. This means that after holding other variables constant, for each unit increase in the number of words, the multinomial odds ratio for an IA project in the adaptation category (OAI = 2) relative to the offload category (OAI = 1) would be expected to increase by 2%. Similarly, after holding other variables constant, for each unit increase in the number of words, the multinomial odds ratio for an IA project in the improvisation category (OAI = 3) relative to the offload category (OAI = 1) would also be expected to increase by 2%.

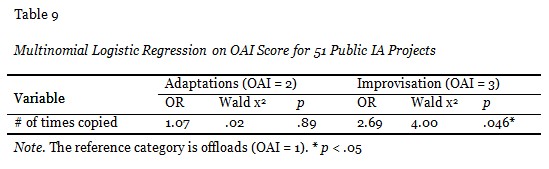

The second multinomial logistic regression (see Table 9) was based on the 51 public IA projects and one predictor was retained in the final model. The results indicate that the number of times users copied IA projects is a significant predictor of OAI score. For each unit increase in the number of times users copied an IA project, the multinomial odds ratio for a project in the improvisation category (OAI = 3) relative to the offload category (OAI = 1) would be expected to increase by 169%. However, the comparison between the adaptation category (OAI = 2) and the offload category (OAI = 1) did not show this trend.
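The percentage interpretations above follow from exponentiating a logistic regression coefficient: a coefficient b corresponds to an odds ratio of exp(b), or a change of (exp(b) - 1) × 100% in the odds per unit increase in the predictor. The sketch below illustrates this conversion. The function names are hypothetical, and the coefficients are back-calculated from the rounded percentages quoted in the text (2% and 169%), not read from Tables 8 and 9.

```python
from math import exp, log


def percent_change_in_odds(coefficient, units=1.0):
    """Percent change in the odds for a given increase in the predictor."""
    return (exp(coefficient * units) - 1) * 100


def coefficient_from_percent(percent):
    """Logit coefficient implied by a per-unit percent change in the odds."""
    return log(1 + percent / 100)


# Implied coefficients (assumption: derived from the rounded percentages).
b_words = coefficient_from_percent(2)     # odds ratio 1.02 per extra word
b_copies = coefficient_from_percent(169)  # odds ratio 2.69 per extra copy
```

Because odds ratios multiply, small per-unit effects compound: under these illustrative values, `percent_change_in_odds(b_words, units=100)` gives the implied change in odds for an IA project that is 100 words longer, which is far larger than 100 × 2%.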

In sum, among the five variables that are significantly correlated with the OAI scores of the IA projects, only the number of words and the number of times an IA project was copied were significant predictors of OAI score.

The evidence suggests that collective intelligence (called crowdteaching) activities occur within the IA. Teachers, on average, chose to share almost two thirds of their created artifacts, while a small proportion of their IA projects were copied directly from other IA projects. Thus, these teachers can be seen as both contributors to and consumers of crowdteaching processes. In addition, IA users preferred to view IA projects rather than to completely copy them. This suggests that they may be browsing for ideas or finding only a smaller set of IA projects that completely meet their needs. Copying an IA project suggests a higher level of endorsement of its content. Finally, PD participants showed overall greater levels of IA activity, compared to those teachers “in the wild.” Thus, like findings from other research of loose online communities (Abramovich & Schunn, 2012; Nielsen, 2006), participation shows deep inequalities, but can be nurtured.

In examining the possible influences of teacher characteristics, we noted similar patterns in the two samples of teachers in terms of their reported comfort level with technology. “In the wild” teachers with lower levels of comfort in technology use appeared to display more consumer behaviors, while teachers with higher comfort levels appeared to show more contributor behaviors. A similar pattern is also evident in the PD participants: Teachers’ comfort level with technology was positively (and moderately) correlated with sharing behaviors. In terms of teaching experience, patterns were different. “In the wild” teachers showed no clear pattern; for PD participants, teaching experience was negatively (and moderately) correlated with IA project creation and sharing. We also acknowledge that as self-reported data, these may not capture the most important underlying constructs.

In addition, a goal was to find proxy variables that could be easily computed and that also aligned to instructionally relevant indicators. Distilling such variables could support IA users (teachers) in quickly identifying useful IA projects to either use or further adapt. We coded IA projects implemented in classrooms by teachers using two indicators, the problem-based learning rubric and the OAI scale, and examined their relationship with several usage metrics. Correlational results suggest that among several metrics related to two instructional indicators, the number of words in IA projects was the best indirect proxy of both indicators.

It is possible that IA projects with more content (i.e., words) would necessarily score higher on these two indicators. However, it is unclear that simply having more textual content would cause raters to find greater evidence of inquiry learning elements, or improvisation around OER. Similarly, a comprehensive study of Wikipedia article quality also found that the number of words was the most robust (and easiest to extract) predictor of quality although there is no reason to expect that lengthy encyclopedia articles are necessarily better (Blumenstock, 2008).

We note that this study had several limitations. The first is the small number of users and IA projects analyzed against the larger backdrop of users and usage. True crowdsourcing environments typically assume an Internet-scale community behind crowdsourcing activities (Estellés-Arolas & González-Ladrón-de-Guevara, 2012). In our case, we restricted our analyses in the first sample to users who clearly identified themselves as teachers, which severely restricted the user base. Because we relied on these users voluntarily completing a brief demographic survey, we may have unintentionally excluded actual teachers. In the second sample, however, we deliberately only included IA projects that teachers told us were used in actual classroom practice. In the future, instead of using a restricted sample, a more systematic sampling process should be employed and more comprehensive teacher demographic information should be collected. Finally, we relied upon two indicators of instructional quality. It is certainly possible that others could be identified that could serve as more robust indicators.

This article explored collective intelligence processes in the context of a Web-based tool for teachers, the Instructional Architect, to support teachers as designers using OER. Our analyses were guided by a framework, which posits a very loose notion of community wherein members may have very diffuse interactions with one another (Malone et al., 2009). The IA community is in that vein.

Results of the study have implications for both research and practice in the OER community. From the research perspective, this study reveals different patterns in terms of how teachers engage with OER: They can be contributors by creating and sharing new content; or they can be consumers by viewing and even copying other teachers’ content. In addition, the study identified various factors that influenced teachers’ engagement with the IA, such as their teaching experiences, comfort levels with technology, and whether or not they attended professional development workshops. Moreover, the analysis of user activities indicates that the frequency of teacher activities in the IA often followed the “long tail” distribution, which provides further evidence for Nielsen’s (2006) participation inequality rule.

In terms of practice, results from the study have implications for system design. For example, the “decide-how” dimension is largely done individually, and supports for this step within the IA are mostly implicit. As such, scaffolds in the IA interface could be designed to better represent user activity (e.g., sorting search results by identified proxies of quality, including number of words, views, or copies) in order to help teachers better leverage crowd wisdom.
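As a rough illustration of the kind of scaffold suggested here, the following sketch sorts a list of IA projects by one of the identified proxies. It is not part of the IA codebase: the `ProjectStats` record, its field names, and the sample values are all hypothetical.

```python
from dataclasses import dataclass

PROXY_FIELDS = ("words", "views", "copies")  # proxies named in the text


@dataclass(frozen=True)
class ProjectStats:
    """Hypothetical summary record for one public IA project."""
    title: str
    words: int
    views: int
    copies: int


def rank_by_proxy(projects, proxy="copies"):
    """Return projects sorted from highest to lowest on the chosen proxy."""
    if proxy not in PROXY_FIELDS:
        raise ValueError(f"proxy must be one of {PROXY_FIELDS}")
    # sorted() is stable, so ties keep their original (e.g., relevance) order.
    return sorted(projects, key=lambda p: getattr(p, proxy), reverse=True)


# Invented sample data, for illustration only.
sample = [
    ProjectStats("Density lab", words=420, views=310, copies=7),
    ProjectStats("Worksheet link", words=60, views=95, copies=0),
    ProjectStats("Plate tectonics inquiry", words=510, views=180, copies=3),
]
```

Calling `rank_by_proxy(sample, "words")` places the longest project first, whereas ranking by `"views"` or `"copies"` surfaces the project most used by peers. Which proxy (or combination) best serves teachers is a design question that the correlational results here can inform but not settle.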

Future work includes developing means for automatically conducting longer-term analyses of the activities of IA users, as well as the evolution of their artifacts. We are also developing computational approaches that can scale to study how the micro-level activities of these users and their designs might affect the macro-level behaviors of the community as a whole (Walker et al., 2011).

The authors would like to thank the study participants for their time and assistance. This material is based upon work supported by the U.S. National Science Foundation under Grant No. 0937630, and Utah State University. Any opinions, findings, and conclusions or recommendations expressed in this material are those of the author(s) and do not necessarily reflect the views of the National Science Foundation. Portions of this research were previously presented at the American Educational Research Association Annual Meeting (AERA 2013) in San Francisco.

Abramovich, S., & Schunn, C. (2012). Studying teacher selection of resources in an ultra-large scale interactive system: Does metadata guide the way? Computers & Education, 58(1), 551–559.

Anderson, C. (2006). The long tail: Why the future of business is selling less of more. New York, NY: Hyperion.

Atkins, D. E., Brown, J. S., & Hammond, A. L. (2007). A review of the open educational resources (OER) movement: Achievements, challenges, and new opportunities. Retrieved from http://www.hewlett.org/Programs/Education/OER/OpenContent/Hewlett+OER+Report.htm

Ball, D. L., & Cohen, D. K. (1996). Reform by the book: What is—or might be—the role of curricular materials in teacher learning and instructional reform? Educational Researcher, 25(9), 6–14.

Barrows, H. S. (1996). Problem-based learning in medicine and beyond: A brief overview. New Directions for Teaching and Learning, 68, 3–16.

Benkler, Y. (2006). The wealth of networks: How social production transforms markets and freedom. New Haven, CT: Yale University Press.

Blumenstock, J. (2008). Size matters: Word count as a measure of quality on Wikipedia. In Proceedings of the 17th International Conference on World Wide Web (WWW ’08) (pp. 1095–1096). New York, NY: ACM. doi:10.1145/1367497.1367673

Borgman, C., Abelson, H., Dirks, L., Johnson, R., Koedinger, K., Linn, M., … Szalay, A. (2008). Fostering learning in the networked world: The cyberlearning opportunity and challenge, a 21st century agenda for the National Science Foundation (Report of the NSF Task Force on Cyberlearning). Retrieved from the National Science Foundation website: http://www.nsf.gov/pubs/2008/nsf08204/nsf08204.pdf

Brown, M., & Edelson, D. (2003). Teaching as design: Can we better understand the ways in which teachers use materials so we can better design materials to support their change in practice? (Design Brief). Evanston, IL: Center for Learning Technologies in Urban Schools.

Custard, M., & Sumner, T. (2005). Using machine learning to support quality judgments. D-Lib Magazine, 11(11).

Davis, E. A., & Krajcik, J. S. (2005). Designing educative curriculum materials to promote teacher learning. Educational Researcher, 34(3), 3–14.

Davis, E. A., & Varma, K. (2008). Supporting teachers in productive adaptation. In Y. Kali, M. C. Linn, M. Koppal, & J. E. Roseman (Eds.), Designing coherent science education: Implications for curriculum, instruction, and policy (pp. 94–122). New York, NY: Teachers College Press.

Drake, C., & Sherin, M. G. (2006). Practicing change: Curriculum adaptation and teacher narrative in the context of mathematics education reform. Curriculum Inquiry, 36(2), 153–187.

Estellés-Arolas, E., & González-Ladrón-de-Guevara, F. (2012). Towards an integrated crowdsourcing definition. Journal of Information Science, 38(2), 189–200.

Farooq, U., Schank, P., Harris, A., Fusco, J., & Schlager, M. (2007). Sustaining a community computing infrastructure for online teacher professional development: A case study of designing Tapped In. Journal of Computer Supported Cooperative Work, 16(4–5), 397–429. Norwell, MA: Kluwer Academic Publishers.

Fogleman, J., McNeil, K., & Krajcik, J. (2011). Examining the effect of teachers’ adaptations of a middle school science inquiry-oriented curriculum unit on student learning. Journal of Research in Science Teaching, 48(2), 149–169.

Kastens, K., Devaul, H., Ginger, K., Mogk, D., DeFelice, B., DiLeonardo, C., … Tahirkheli, S. (2005). Questions & challenges arising in building the collection of a digital library for education: Lessons from five years of DLESE [computer file]. D-Lib Magazine, 11(11). doi:10.1045/november2005-kastens

Khoo, M., Pagano, J., Washington, A. L., Recker, M., Palmer, B., & Donahue, R. A. (2008). Using web metrics to analyze digital libraries. In Proceedings of the 8th ACM/IEEE-CS Joint Conference on Digital Libraries (pp. 375–384). New York, NY: ACM.

Leary, H., Giersch, S., Walker, A., & Recker, M. (2009). Developing a review rubric for learning resources in digital libraries. In Proceedings of the 9th ACM/IEEE-CS Joint Conference on Digital Libraries (pp. 421–422). New York, NY: ACM.

Malone, T., Laubacher, R., & Dellarocas, C. (2009). Harnessing crowds: Mapping the genome of collective intelligence. MIT Sloan Research Paper, No. 4732-09.

Mardis, M., ElBasri, T., Norton, S., & Newsum, J. (2012). The digital lives of U.S. teachers: A research synthesis and trends to watch. School Libraries Worldwide, 18(1), 70–86.

Nielsen, J. (2006). Participation inequality: Lurkers vs. contributors in Internet communities. Retrieved from http://www.nngroup.com/articles/participation-inequality/

Penuel, W. R., & Gallagher, L. P. (2009). Preparing teachers to design instruction for deep understanding in middle school Earth science. The Journal of the Learning Sciences, 18(4), 461–508.

Porcello, D., & Hsi, S. (2013). Crowdsourcing and curating online education resources. Science, 341(6143), 240–241.

Recker, M. (2006). Perspectives on teachers as digital library users: Consumers, contributors, and designers. D-Lib Magazine, 12(9).

Recker, M., Dorward, J., Dawson, D., Halioris, S., Liu, Y., Mao, X., … Park, J. (2005). You can lead a horse to water: Teacher development and use of digital library resources. In Proceedings of the Joint Conference on Digital Libraries (pp. 1–7). New York, NY: ACM.

Recker, M., & Pitkow, J. E. (1996). Predicting document access in large multimedia repositories. ACM Transactions on Computer-Human Interaction (TOCHI), 3(4), 352–375.

Recker, M., Walker, A., Giersch, S., Mao, X., Halioris, S., Palmer, B., … Robertshaw, M. B. (2007). A study of teachers’ use of online learning resources to design classroom activities. New Review of Hypermedia and Multimedia, 13(2), 117–134.

Remillard, J. (2005). Examining key concepts in research on teachers’ use of mathematics curricula. Review of Educational Research, 75(2), 211–246.

Sumner, T., Khoo, M., Recker, M., & Marlino, M. (2003). Understanding educator perceptions of “Quality” in digital libraries. In Proceedings of the Joint Conference on Digital Libraries (pp. 269–279). New York, NY: ACM.

Walker, A., Recker, M., Ye, L., Robertshaw, M. B., Sellers, L., & Leary, H. (2012). Comparing technology-related teacher professional development designs: A multilevel study of teacher and student impacts. Educational Technology Research and Development, 60(3), 421–444.

Wetzler, P., Bethard, S., Leary, H., Butcher, K., Zhao, D., Martin, J. S., & Sumner, T. (2013). Characterizing and predicting the multi-faceted nature of quality in educational web resources. Transactions on Interactive Intelligent Systems, 3(3).