Hatice Sancar Tokmak¹, H. Meltem Baturay², and Peter Fadde³

¹Mersin University, Turkey; ²Ipek University, Turkey; ³Southern Illinois University Carbondale (SIUC), United States

This study aimed to evaluate and redesign an online master’s degree program consisting of 12 courses from the informatics field using a context, input, process, product (CIPP) evaluation model. Research conducted during the redesign of the online program followed a mixed methodology in which data was collected through a CIPP survey, focus-group interview, and open-ended questionnaire. An initial CIPP survey sent to students, which had a response rate of approximately 60%, indicated that the Fuzzy Logic course did not fully meet the needs of students. Based on these findings, the program managers decided to improve this course, and a focus group was organized with the students of the Fuzzy Logic course in order to obtain more information to help in redesigning the course. Accordingly, the course was redesigned to include more examples and visuals, including videos; student-instructor interaction was increased through face-to-face meetings; and extra meetings were arranged before exams so that additional examples could be presented for problem-solving to satisfy students about assessment procedures. Lastly, the modifications to the Fuzzy Logic course were implemented, and the students in the course were sent an open-ended form asking them what they thought about the modifications. The results indicated that most students were pleased with the new version of the course.

Keywords: Online program evaluation; CIPP model; evaluation; mixed methods research

The growth of the Internet, rapid development of technology, and great demand for higher education, lifelong learning, and content-delivery approaches have meant that educational institutions are now equipped with a variety of information and communication technologies (Sancar Tokmak, 2013). In 2000, Moe and Blodget predicted that the number of online education learners could reach as high as 40 million by 2025. One reason for the increased demand for online education is the expectation that in order to be successful, individuals must keep abreast of new technologies and information. Because online instruction offers a viable, more flexible alternative to time-consuming face-to-face education, educational institutions have endeavoured to offer online courses to meet society’s demands for lifelong learning (Lou, 2004). However, online education differs from face-to-face education in many ways and thus requires different strategies to be successful.

Educators and other researchers have expressed numerous concerns about the quality of online education courses (Lou, 2004), and as researchers such as Thompson and Irele (2003) and Kromrey, Hogarty, Hess, Rendina-Gobioff, Hilbelink, and Lang (2005) have noted, as online courses flourish, meaningful assessment is essential for improving the quality of such offerings. Different types of evaluation models address different goals of learners and educators. Eseryel (2002) lists six basic approaches to evaluation – goal-based evaluation, goal-free evaluation, responsive evaluation, systems evaluation, professional review, and quasi-legal evaluation – and points out that researchers and other evaluators should be familiar with the different models and choose the one most appropriate to their aims. Hew et al. (2004) have categorized evaluation models as macro, meso, and micro, with “Context, Input, Process, Product (CIPP)” included in the category of macro-level evaluation as a useful model for answering important questions about online education programs. Bonk (2002) also advocates the CIPP model for examining online learning within a larger system or context.

CIPP is an evaluation model based on decision-making (Boulmetis & Dutwin, 2005). Since this study aimed to make decisions regarding the improvement of an online master’s program, the study used the CIPP model within the framework of a mixed-methodology design. This process involved identifying the needs of stakeholders (learners, managers, and instructors), after which decisions were made as to how to improve the course, and students were surveyed regarding their perceptions about the changes made in the program.

CIPP was developed by the Phi Delta Kappa Committee on Evaluation in 1971 (Smith, 1980). Stufflebeam (1971a) describes evaluation according to the CIPP model as a “process of delineating, obtaining and providing useful information for judging decision alternatives” (p. 267). In other words, CIPP is based on providing information for decisions (Stufflebeam, 1971b). Moreover, Boulmetis and Dutwin (2005) identified the CIPP model as the best decision-making model.

According to Eseryel’s categorization (2002), CIPP is considered a system-based model, while in Hew et al.’s categorization (2004), CIPP is considered a macro model. Each of the four different types of evaluation that comprise CIPP has an important role to play in a larger whole (Williams, 2000; Smith and Freeman, 2002), with the functions of each described by Stufflebeam (1971a) as follows:

This study aimed to evaluate and redesign an online master’s program consisting of 12 courses from the informatics field using the CIPP model. Four main research questions guided the study:

The study was implemented using a mixed methodology research design. According to Creswell and Clark (2007), mixed methodology research “involves philosophical assumptions that guide the direction of the collection and analysis of data and the mixture of qualitative and quantitative approaches in many phases in the research process” (p. 5). The present study consisted of three main phases of research design. Quantitative and qualitative approaches were applied in consecutive phases, with the results of one phase influencing the process and application of subsequent phases. In the first phase, the needs of students in the online master’s program were defined using the open- and closed-ended questions of the CIPP survey. In the second phase, in-depth research was conducted about one course in the online program through focus-group interviews. In the third phase, an open-ended questionnaire was administered to identify students’ perceptions about the new version of the program.

Defining sampling procedures is an important step in research because it indicates the quality of the inferences made by the researcher with regard to the research findings (Collins, Onwuegbuzie, & Jiao, 2006). In this mixed methods research (MMR) study, criterion sampling procedures were applied during all phases, because the aim was to evaluate and redesign an online master’s program. Thus, in Phase 1, the study participants comprised 63 students taking part in this online program in 2010. The majority of students were male (n = 52). Students’ ages ranged from 23 to 39. More than half of the students (60.4%) did not have full-time jobs. In Phase 2, the 10 students enrolled in the Fuzzy Logic course participated in focus-group interviews, and in Phase 3, the 19 students who attended the Fuzzy Logic course during the same semester were sent a form containing open-ended questions about the modifications to the course; of these, 16 students completed and returned the forms.

The online master’s program has been offered by the Institute of Informatics since 2006. The program consists of 12 courses: Fuzzy Logic, Introduction to Mobile Wireless Networks, Object Oriented Programming, Computer Architecture, Computer Networks, Multimedia Systems, Embedded Systems, Data Mining, Web-based Instructional Design and Application I, Human-Computer Interaction, Data Security, and Expert Systems. The content of each course was arranged by the institute’s instructors.

The online master’s program is a non-thesis graduate program that lasts between four and six terms. In order to be accepted into the program, applicants are required to hold an undergraduate degree in Computer Systems Education, Computer Education, or Computer Education and Instructional Technology.

All courses were offered online through a learning management system (LMS). Students communicated with their instructors and peers via asynchronous tools (e-mail correspondence and a discussion board) and via synchronous meetings with instructors (video conferencing sessions via an electronic meeting tool) that lasted for one hour per week.

The Fuzzy Logic course is run using synchronous and asynchronous tools as described above. Course content is accessible through the LMS 24/7 and includes graphics and animation in addition to text.

The study included a pilot as well as the main study. The pilot study was implemented during the 2009 summer school session to check the validity of the survey developed based on the CIPP model. The main study was initiated during the 2009 fall term, with data collection completed at the end of the 2010 spring term. The timeframe of the pilot study and main study is presented in Table 1.

The main study included three phases. In Phase 1, the CIPP survey was sent to all students enrolled in the online master’s program. Of these, 60.3% returned the surveys. The results indicated that two courses in the program – Fuzzy Logic and Object Oriented Programming – did not completely meet the students’ needs. Due to financial constraints, the program directors decided that initial improvements to the program should focus on the Fuzzy Logic course only. Accordingly, in Phase 2, a focus-group interview was conducted with the students of the Fuzzy Logic course in order to evaluate and redesign the course. The results of this interview were discussed with a group of graduate students attending a course on eLearning (CI-554, “Design and Delivery of eLearning”) offered through the Instructional Design and Instructional Technology program at Southern Illinois University (SIU), Carbondale, and their recommendations, along with the focus-group findings, were relayed to the director and coordinator of the online master’s program, who later decided to implement cost-effective modifications. In Phase 3 of the study, modifications to the Fuzzy Logic course were implemented, and the students who took the course were sent an open-ended form to fill out pertaining to their thoughts about the modifications.

The CIPP survey used in Phase 1 of the study was prepared by the researchers based on two surveys in the literature (Stufflebeam, 2007; Shi, 2006) and was checked by two experts. The survey consists of two parts. Part 1 contains five questions pertaining to the demographics of the participants, whereas Part 2 contains 19 statements about the online master’s program rated on a 5-point Likert scale (strongly agree to strongly disagree) as well as an open-ended question asking participants to select one course that needs further analysis and modification.

Wallen and Fraenkel (2001) state that researchers should focus on collecting reliable, valid data using instruments. For this reason, the researchers developed the instruments used in this study in consultation with experts in order to ensure content-related validity. Moreover, the reliability of the instrument was checked by implementing a pilot survey with online master’s program students.

The focus-group interview conducted in Phase 2 of the study consisted of semi-structured interview questions that were checked by two experts. The two main questions used were designed to obtain students’ opinions about the instructional design of the Fuzzy Logic course as well as their suggestions for improving the course.

The questionnaire form used in Phase 3 of the study consisted of five open-ended questions designed to obtain students’ opinions about the modifications to the Fuzzy Logic course. This form was checked by an expert, revised accordingly, and the revised version of the form was used in the study.

Data was collected through a CIPP survey, including closed- and open-ended questions; a focus-group interview; and an open-ended questionnaire. Descriptive analysis was applied to the data collected from the closed-ended questions of the CIPP survey, whereas the data collected from the open-ended question was analyzed using open-coding analysis in line with Ayres, Kavanaugh, and Knafl (2003), with data categories of significant statements presented according to different themes. Intercoder reliability with regard to the emerging themes was calculated according to Miles and Huberman (1994) and found to be 88%.
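The 88% figure above is a percent-agreement statistic. As a minimal sketch, assuming the commonly cited Miles and Huberman (1994) formula (number of agreements divided by the sum of agreements and disagreements), the calculation could look like the following Python snippet. The function name `percent_agreement` and the theme labels are hypothetical illustrations and are not the study's data.

```python
from typing import Sequence


def percent_agreement(coder_a: Sequence[str], coder_b: Sequence[str]) -> float:
    """Return agreements / (agreements + disagreements) for two coders."""
    if len(coder_a) != len(coder_b):
        raise ValueError("Both coders must code the same statements")
    if not coder_a:
        raise ValueError("At least one coded statement is required")
    # Every statement is either an agreement or a disagreement,
    # so the denominator equals the number of coded statements.
    agreements = sum(a == b for a, b in zip(coder_a, coder_b))
    return agreements / len(coder_a)


# Hypothetical theme labels assigned to eight statements (illustration only).
coder_a = ["content", "interaction", "sources", "content",
           "technical", "recordings", "instructors", "content"]
coder_b = ["content", "interaction", "sources", "interaction",
           "technical", "recordings", "instructors", "content"]

reliability = percent_agreement(coder_a, coder_b)
print(f"Intercoder reliability: {reliability:.1%}")
```

In this illustrative case the two coders agree on seven of the eight statements; the study itself reports only the resulting rate, not the underlying counts.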

A focus-group interview was conducted by one of the researchers with 10 volunteer students. A supportive atmosphere for discussion was secured by providing each participant opportunities to participate. Focus-group interview results were discussed with the program managers and used as the basis for decisions regarding modifications to be made to the program.

Once the modifications had been implemented, an open-ended questionnaire was sent to all students taking the Fuzzy Logic course. Responses were iteratively examined for patterns and ideas. Collected data was examined for similarities and differences in student responses, and general themes were identified by one researcher and checked by another researcher.

Efforts to ensure data validity are described below with respect to the different research activities.

Instrumentation: The CIPP questionnaire used in the study was developed by the researchers based on the literature, checked by experts, and implemented in a pilot study. The focus-group interview form and open-ended questionnaire were also verified by experts prior to implementation.

Data collection: In line with design-based research methodology, data was collected in a three-phase procedure in order to redesign the program under study. Data was triangulated through the use of a CIPP survey, focus-group interview, and open-ended questionnaire.

Data interpretation: All of the study findings were discussed with the program managers and course instructors. Moreover, to provide external validity, a group of graduate students enrolled in a course on Design and Delivery of eLearning (CI-554) offered by Southern Illinois University, Carbondale discussed the results of the CIPP survey and the focus-group interview results relating to possible course modifications.

The needs of online master’s program students were identified through a CIPP survey sent to all 63 students in the program. In total, 38 students (30 male, 8 female; age range, 23-29 years) returned the survey. The majority (n = 29) had Bachelor of Science degrees, while the remaining 9 had Bachelor of Arts degrees. When asked what reasons prompted them to register for the online master’s program, the majority (n = 32) gave more than one reason. The most frequently cited reasons were “to improve themselves” (n = 29), “to provide career advancement” (n = 23), “for personal reasons” (n = 18) and “to secure new job opportunities” (n = 18), whereas the least-cited reasons were “a friend’s influence” (n = 3), “family’s influence” (n = 1) and “manager’s influence” (n = 1). Students were also asked to assess their performance in the program, and the majority indicated their performance to be “middle-level” (n = 18) or “good” (n = 17), while a few assessed their performance as either “very good” (n = 2) or “bad” (n = 1). Importantly, no students assessed their performance as “very bad”.

Students were also asked to select one course that they felt required further analysis and modification. Most students (n = 17) selected the Fuzzy Logic course, followed by Object Oriented Programming (n = 9), Data Mining (n = 7), Computer Architecture (n = 3), and Multimedia Systems (n = 1).
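The descriptive figures reported for Phase 1 can be reproduced with a few lines of arithmetic. The following Python sketch is an illustration only, not the authors' analysis procedure; it uses the counts given in the text (63 students surveyed, 38 surveys returned, and the course nominations listed above), and the variable names are hypothetical. Note that the nominations sum to 37, one fewer than the 38 returned surveys; the text does not explain the difference.

```python
# Counts taken from the text for Phase 1 of the study.
students_surveyed = 63
surveys_returned = 38

# Share of surveyed students who returned the CIPP survey.
response_rate = surveys_returned / students_surveyed

# Number of respondents nominating each course for further analysis.
course_nominations = {
    "Fuzzy Logic": 17,
    "Object Oriented Programming": 9,
    "Data Mining": 7,
    "Computer Architecture": 3,
    "Multimedia Systems": 1,
}
total_nominations = sum(course_nominations.values())

# Course nominated most often.
most_nominated = max(course_nominations, key=course_nominations.get)

print(f"Response rate: {response_rate:.1%}")
print(f"Total nominations: {total_nominations}")
for course, count in course_nominations.items():
    share = count / total_nominations
    print(f"{course}: {count} ({share:.1%} of nominations)")
print(f"Most nominated course: {most_nominated}")
```

The computed response rate rounds to 60.3%, matching the figure reported for the main study.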

The survey questions focused on five main areas, namely, course content, practical job training, instructors, feedback, and general issues. With regard to course content, most students (n = 19) reported that the course contained up-to-date information. However, 27 students pointed out that the course content did not place equal emphasis on theory and practice, and 16 students were undecided as to whether or not the course content emphasized personal work habits. With regard to practical job training, most students (n = 31) pointed out that practical preparation exercises helped them obtain expertise in specialized occupations, and 18 students stated that the practical job training activities were suited to their personal characteristics (i.e., abilities, needs, interests, and aptitudes). However, 30 students stated that the practical job preparation exercises were insufficient. With regard to course instructors, most students (n = 33) reported instructors to be helpful, cooperative, and interested in making the course a useful learning experience. However, most students (n = 31) reported that the instructors did not use the most appropriate instructional strategies, and most students (n = 31) also stated that when they encountered a problem related to the program, they did not receive immediate help from instructors and course assistants. With regard to feedback, most students (n = 15) were undecided about the feedback provided by instructors and teaching assistants, and 13 students pointed out they were unsure as to whether or not they were gaining sufficient knowledge and skills through the course. With regard to general aspects of the course (i.e., course materials, course length, student satisfaction), 28 students reported that the course materials were of sufficient interest; however, 25 students found the course to be too short, and 14 were undecided as to whether they were satisfied with the quality of the course.

To obtain suggestions from students regarding improvements to the online master’s program, an open-ended question was included at the end of the CIPP survey, to which 25 out of 38 students responded. Suggestions are presented below according to “themes” and the number of students mentioning them.

Content: Eleven students recommended that the content of the courses should be redesigned. They pointed out that the courses should contain more videos and graphics, and they advised taking into account material design principles when redesigning existing materials. Some students suggested that the courses should include more exercises and detailed information. Finally, students also emphasized that in some courses, the content was not presented in a logical order.

Interaction: Nine students indicated their dissatisfaction with student-student interactions, and they suggested that a social forum or chat room in which students and instructors can share knowledge should be added to the system to enhance these interactions. For the same reason, they advised conducting face-to-face meetings at the beginning, middle, and end of the semesters and extending the length of these meetings.

Sources: Six students pointed out that the system did not present sufficient sourcing, and they suggested that a resource page be provided so that interested students could obtain more detailed information. Moreover, two students suggested that instructors prepare videos and other documents related to the course content and incorporate these tools into the system.

Technical and usability problems: Five students complained about technical and usability problems that they said created distractions. They emphasized visual and audio problems encountered while watching the videos in the system as well as usability problems such as non-functioning buttons, inaccessible pages, and various mistakes in the “I forgot my password” section.

Recordings: Four students emphasized recording synchronous meetings and archiving them in the system. As one student stated, “I am working, and for that reason, I cannot participate in most of the synchronous meetings. The meetings should be recorded.”

Instructors: Three students stated that the instructors did not seem to be interested in teaching in the online program. According to these students, during the synchronous meetings, most instructors just repeated the course content contained in the system and did not assess student performance.

A large number of questions on the CIPP survey were answered as “undecided”; therefore, the program directors agreed that an in-depth study should be conducted. However, because of time limitations and cost-effectiveness, they decided that this study should focus on improving one course only. Since the Fuzzy Logic course was the most frequently cited by students as needing improvement, it was decided that the in-depth study should focus on this course.

A focus-group interview was conducted, and written responses were analyzed and categorized into four groups of themes that were coded as follows: suggested changes in course structure, suggested changes in course content, promoting instructor-student and student-student contact, and solving technology-based problems.

Most students who participated in the focus-group interview sessions pointed out the need for face-to-face meetings to revise and reinforce the course content. They emphasized that these meetings would allow students to ask questions and would increase student-instructor contact. A great majority said that seeing midterm exam questions was essential for their success in the final exam and stated a preference for on-ground midterm exams conducted in a face-to-face format that would enable them to discuss the exam questions with faculty and other students after the exam was over. Some students said they found the requirements of the courses to be extremely high, adding that not having the opportunity to discuss the items covered with their instructors or peers following the weekly sessions put them at a disadvantage and decreased their chances for success.

A great many students implied that the course content should include more examples and applications of the subject matter, stating that they were concerned about the difficulty in transferring the knowledge gained through the program to real life. Some students suggested that projects created by previous students be accessible somewhere in the system as a way of providing guidance in developing their own projects. The need to include detailed information on the subject matter in order to lessen difficulties and enhance comprehension was also mentioned. Moreover, students emphasized certain problems relating to presentation of the course content, namely, that the content was presented mostly in a text-based format, and they suggested that more content-related pictures, animations, and video clips be added. Students also complained about problems accessing existing video clips. Furthermore, students suggested that the course Web site list more resources and provide enhanced opportunities for file-sharing, which would allow them to follow the activities of other students. Students also recommended that they be divided into groups so that they could work cooperatively on projects and assignments.

Students pointed out that faculty-student contact was inadequate, and some suggested arranging face-to-face meetings to augment faculty-student contact, which they felt was essential for succeeding in the course. They claimed that the information presented in the course material and chat sessions was inadequate for their success. Some students emphasized the need for an effective social-sharing environment that would enable them to contact their peers, adding that because they did not know each other, they could not conduct any joint activities (e.g., form groups, study together, share homework, or work collaboratively on projects). A few students stated that a social forum should be formed on the course Web sites and face-to-face meetings should be arranged to encourage student-instructor and student-student contact as a means of motivating them to study more, attend chat sessions, and complete the course. Other students asserted that adding new activities could enrich the course presentation techniques of instructors.

Most students complained of technology-based problems related to loading of course content and materials, namely that an extremely long time was required for content loading.

CIPP survey and focus-group interview findings were discussed by graduate students attending the CI-554 E-Learning Class at Southern Illinois University. The graduate students identified three main issues for evaluators to consider, namely, those relating to lesson content, those relating to student and instructor interaction, and those relating to assessment. The following recommendations were reported to course managers:

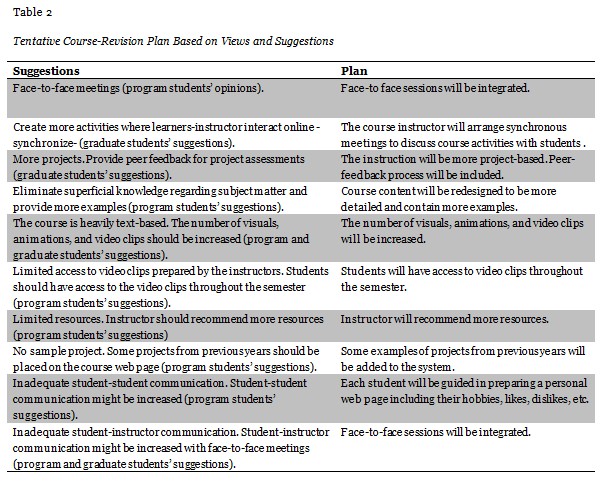

Findings from the focus-group interview and the suggestions of SIU CI-554 eLearning graduate students were discussed in a meeting of the managers of the online master’s program. Instructors and assistants also attended the meeting and provided their own opinions regarding the feedback relating to course improvement. Taking into account all the findings and suggestions, the managers decided to make certain modifications to the Fuzzy Logic course design. Here, it should be emphasized that time limitations and cost effectiveness were two important factors affecting these decisions. Table 2 shows the tentative plan presented to the managers based on the views and suggestions of students in the course, SIU graduate students, and course instructors.

Modifications were implemented in Phase 3 of the study. Course content was revised so that additional examples were embedded and the use of visuals, animations, and video clips was increased. Video clips were also made accessible throughout the whole semester, and, in line with student requests, some of the best projects from the previous year were placed on the course Web site. Student-instructor interaction was increased by arranging face-to-face meetings, and course assistants were charged with providing immediate feedback to students’ email questions. Student-student interaction was increased by guiding students in preparing their own web pages (covering their hobbies, likes, dislikes, and so on) and linking these web pages to the system so that students could interact with each other more. Face-to-face meetings were also arranged at the beginning of the semester and prior to each exam in order to answer students’ questions and provide suggestions regarding assessments. Sample exam questions and a list of study resources were also added to the system. Finally, a midterm exam was added to the course evaluation procedures.

The Fuzzy Logic course was redesigned two months after the start of the spring 2010 semester, in which 19 students were enrolled. Data was collected, and infrastructure modifications were prepared by course assistants and the system administrator, an instructional technologist. The course instructor, an instructional designer, worked with the assistants and the system administrator during the modification process, and one of the researchers, an expert on the usability of web-based systems, provided additional guidance to the system administrator. In the spring 2010 semester, both the old and the newly redesigned versions of the course were presented to students, and changes in course design were announced to the students on an ongoing basis as additional projects, exercises, and homework were incorporated into the system.

At the end of the semester, all students in the course were sent a form with three demographic questions and five open-ended questions about the course. In total, 16 of 19 students completed the form and returned it to one of the researchers, a usability expert. The majority of students returning the form were males (n = 13). Moreover, the majority held Bachelor of Science degrees (n = 14), and the remaining two held Bachelor of Arts degrees. In assessing their performance in the course, 10 students rated their performance as either “not good” or “bad”, and 6 of them rated their performance as “good”. Data was evaluated using open-coding analysis, as follows.

Of the 16 students who returned the forms, 15 answered the question “To what extent did the modifications to the course affect your performance? (Please mention both positive and negative effects.)” Of these, 11 students stated that the additional visuals, examples, and detailed information as well as the reorganization of the course content made the lesson more understandable and that the project-based course design increased their interest in the course content; however, two of these students stated that even though the modifications positively affected their performances, more examples should be included in the system. In addition, two students stated that the course modifications did not affect their performance and two students stated that they did not know whether or not redesigning the course affected their performance.

Thirteen out of 16 students answered the question, “Which modification(s) positively or negatively affected your participation in the Fuzzy Logic course? Could you please provide the reasons?” According to nine students, the inclusion of examples of fuzzy logic used in real life, project-based course design, and additional visuals related to course content increased their motivation and participation in the course; two students stated that although project-based course design was good in terms of increasing participation, the complexity of the projects made them difficult to complete in a limited time; and two students stated that the modifications had neither a negative nor a positive effect on their participation.

Fourteen out of 16 students answered the question, “In your opinion, were the modifications made in the Fuzzy Logic course sufficient or not?” Of these, 11 stated that the modifications were sufficient and that the new system was better than the previous one; however, 10 of these 11 students emphasized that while the new design was adequate in terms of course content, there was insufficient visual material in the system. In addition, two students stated that the modifications were not sufficient, and, of these, one stated that only 10% of the modifications she had requested were implemented, that more visuals and examples were needed and that the informal language used in the content caused problems. Finally, one student stated that she did not want to comment on this question because she was not an expert on instructional design.

Fourteen out of 16 students answered the question, “Do you have any suggestions for improving the Fuzzy Logic course?” Of these, one student stated that the changes were sufficient; 10 stated that the modifications were good, but more visuals and examples could be added and the content could be reorganized to proceed step-by-step from basic to complex topics; and three students criticized the changes, stating that the projects were too complex and there were no clear explanations regarding course expectations.

Thirteen out of 16 students answered the question, “What is your opinion about making similar modifications in the other courses?” Of these, 11 students stated that the other courses should be redesigned with similar modifications. Moreover, they pointed out that although their different backgrounds made it difficult for them to understand the course content in the area of informatics, the modifications to the course – including more visuals, examples, and projects, eliminating jargon, and reorganizing the course content – made the content more understandable to them. In contrast, the other two students responding to this question said they opposed making similar modifications to the other courses; rather, they advised asking for the opinions of students and course instructors before redesigning the other courses, since every course would need specific modifications.

The aim of this study was to evaluate and redesign a representative course from the online master’s program consisting of 12 courses from the informatics field. The researchers chose to conduct an evaluation study in line with the context, input, process, product (CIPP) model, since this model is based on evaluating and redesigning programs by defining the needs of participants in terms of context, strategies, plans, activities, interaction, and assessment. Moreover, the CIPP model aims to help decision makers make improvements in programs (Boulmetis & Dutwin, 2005).

The online master’s program was evaluated in three phases using a mixed-methods approach. In Phase 1, the researchers prepared a CIPP survey instrument in line with the relevant literature, had it vetted by experts, and sent it to all students in the online master’s program in order to define students’ needs (Research Question 1). Analysis of the responses of the 38 students who returned the survey revealed three issues for decision makers to take into consideration in revising the program, namely, course content, interaction, and assessment. According to Willging and Johnson (2004), these issues, which are related to course quality, have an influence on dropout rates.

Since the students responding to the CIPP survey selected the Fuzzy Logic course for redesigning, and since cost-effectiveness is an important issue in e-learning program design, Phase 2 of the research began by defining students’ specific concerns related to the Fuzzy Logic course (Research Question 1) through a focus-group interview conducted with students in the course. The findings indicated that the course content should be redesigned to include more examples, videos, and other visual material and that interaction should be increased through face-to-face meetings. The focus-group interview also made clear that students in the Fuzzy Logic course were not satisfied with the course assessment procedures and wanted more project-based assessments. With regard to the findings on course content, Garrison and Kanuka (2004) have emphasized blending text-based asynchronous Internet technology with face-to-face learning as an emerging trend in higher education that is often referred to simply as “blended learning” (p. 96).

The findings of the focus-group interview were presented to graduate students in an e-learning class at Southern Illinois University, who were asked to provide suggestions as to how the Fuzzy Logic course could be improved. A report prepared by the graduate students and sent to the researchers highlighted three main points to be addressed in redesigning the course, namely, content, interaction, and assessment.

This report and the focus-group interview results were subsequently presented to the managers of the online master’s program, who, based on this information, defined strategies and planned activities to address the needs of the online master’s program students (Research Question 2), as follows: arranging face-to-face meetings; conducting a midterm; making the course more project-based; increasing the number of visuals, including animations and video clips; presenting more detailed information about the course content; recommending more resources; making examples of projects from previous years accessible through the course management system; and increasing student-student interaction by helping students to develop personalized web pages and linking them to the system.

In Phase 3 of the study, the Fuzzy Logic course was redesigned according to the program managers’ decisions. Instructors, course assistants, a usability expert, and the Web site administrator worked together in redesigning the course (Research Question 3). Although not all of the suggestions or findings from the survey and focus-group interview were taken into account in redesigning the program, the instructors, working together with the course assistants, defined the examples, animations, video clips, and other visuals and selected two or three of the best projects from the previous year to be placed on the system. To increase interaction, face-to-face meetings with the instructor were arranged before the exams, and the course assistants were charged with replying immediately to students’ emails. In addition, personalized web pages that included information on students’ hobbies, likes and dislikes, and so on were added to the system to increase interaction between students. To better meet students’ needs in terms of assessment, the instructor redesigned the course to offer more project-based learning and took student performance into account in assessments.

Students were surveyed about the newly designed course using a form that included open-ended questions about the new course design (Research Question 4). A total of 16 out of 19 students returned the form. According to the findings, most students were pleased with the new version of the course. Students indicated that the additional examples and visuals, more detailed information, and reorganized course content made the lesson more understandable and that the project-based course design made the course more interesting than other courses. Moreover, 11 students stated that while they found the modifications sufficient, the course could still be improved through more visuals. When asked, “Do you have any suggestions for improving the Fuzzy Logic course?” most students again advised adding more visuals and examples. Furthermore, most students recommended that modifications similar to those made in the Fuzzy Logic course should be made in the other courses in the online program. In future research, the other courses of the online master’s program will be redesigned using a CIPP model and in line with design-based research.

This study may serve as an example for future research on the systematic evaluation of online courses. The CIPP model used in this study enabled the researchers to focus on the context, input, process, and products of the online master’s program from the perspectives of different stakeholders: students, instructors, and managers. The study may also contribute to the online and distance learning literature as an example of research that combines different perspectives in line with the CIPP model.

Ayres, L., Kavanaugh, K., & Knafl, K. A. (2003). Within-case and across-case approaches to qualitative data analysis. Qualitative Health Research, 13(6), 871-883.

Bonk, C. J. (2002). Online training in an online world. Bloomington, IN: CourseShare.com.

Boulmetis, J., & Dutwin, P. (2005). The ABCs of evaluation: Timeless techniques for program and project managers (2nd ed.). San Francisco: Jossey-Bass.

Collins, K. M. T., Onwuegbuzie, A. J., & Jiao, Q. G. (2006). Prevalence of mixed-methods sampling designs in social science research. Evaluation and Research in Education, 19(2), 83-101.

Creswell, J. W. (1998). Qualitative inquiry and research design: Choosing among five traditions. Thousand Oaks, CA: Sage Publications.

Creswell, J. W., & Clark, V. L. P. (2007). Designing and conducting mixed methods research. Thousand Oaks, CA: Sage.

Eseryel, D. (2002). Approaches to evaluation of training: Theory & practice. Educational Technology & Society, 5(2), 93-99.

Garrison, R. D., & Kanuka, H. (2004). Blended learning: Uncovering its transformative potential in higher education. Internet and Higher Education, 7, 95-105.

Hew, K. F., Liu, S., Martinez, R., Bonk, C., & Lee, J-Y. (2004). Online education evaluation: What should we evaluate? Association for Educational Communications and Technology, 27th, Chicago, IL, October, 19-23.

Kromrey, J. D., Hogarty, K. Y., Hess, M. R., Rendina-Gobioff, G., Hilbelink, A., & Lang, T. R. (2005). A comprehensive system for the evaluation of innovative online instruction at a research university: Foundations, components, and effectiveness. Journal of College Teaching & Learning, 6(2), 1-9.

Lou, Y. (2004). Learning to solve complex problems through between-group collaboration in project-based online courses. Distance Education, 25(1), 49-66.

Miles, M. B., & Huberman, A. M. (1994). Qualitative data analysis: A sourcebook of new methods. Newbury Park, CA: Sage.

Moe, M. T., & Blodget, H. (2000). The knowledge web: Part 1. People power: Fuel for the new economy. New York: Merrill Lynch.

Nevo, D. (1983). The conceptualization of educational evaluation: An analytical review of the literature. Review of Educational Research, 53(1), 117-128.

Sancar Tokmak, H. (2013). TPAB - temelli öğretim teknolojileri ve materyal tasarımı dersi: matematik öğretimi için web-tabanlı uzaktan eğitim ortamı tasarlama. In T. Yanpar Yelken, H. Sancar Tokmak, S. Ozgelen, & L. Incikabi (Eds.), Fen ve matematik eğitiminde TPAB temelli öğretim tasarımları (pp. 239-260). Ankara: Ani Publication.

Shi, Y. (2006). International teaching assistant program evaluation (PhD thesis). Southern Illinois University Carbondale.

Smith, K. M. (1980). An analysis of the practice of educational program in terms of the CIPP model (PhD thesis). Loyola University of Chicago.

Smith, C. L., & Freeman, R. L. (2002). Using continuous system level assessment to build school capacity. American Journal of Evaluation, 23(3), 307-319.

Stufflebeam, D. L. (1971a). The use of experimental design in educational evaluation. Journal of Educational Measurement, 8(4), 267-274.

Stufflebeam, D. L. (1971b). An EEPA interview with Daniel L. Stufflebeam. Educational Evaluation and Policy Analysis, 2(4), 85-90.

Stufflebeam, D. L. (2007). CIPP evaluation model checklist (2nd ed.). Retrieved from http://www.wmich.edu/evalctr/archive_checklists/cippchecklist_mar07.pdf

Thompson, M. M., & Irele, M. E. (2003). Evaluating distance education programs. In M. G. Moore & W. G. Anderson (Eds.), Handbook of distance education (pp. 567-584). Mahwah, NJ: Lawrence Erlbaum.

Wallen, N. E., & Fraenkel, J. R. (2001). Educational research: A guide to the process (2nd ed.). Mahwah, NJ: Erlbaum.

Williams, D. D. (2000). Evaluation of learning objects and instruction using learning objects. In D. Wiley (Ed.), The instructional use of learning objects. Retrieved from http://reusability.org/read/

Willging, P. A., & Johnson, S. D. (2004). Factors that influence students’ decision to dropout of online courses. JALN, 8(4), 105-118.