Peter Shea1, Suzanne Hayes2, Sedef Uzuner Smith3, Jason Vickers1, Temi Bidjerano4, Mary Gozza-Cohen5, Shou-Bang Jian1, Alexandra M. Pickett6, Jane Wilde1, and Chi-Hua Tseng2

1University at Albany – SUNY, USA 2Empire State College – SUNY, USA, 3Lamar University, USA, 4Furman University, USA, 5Widener University, USA, 6SUNY Learning Network, USA

This paper presents an extension of an ongoing study of online learning framed within the community of inquiry (CoI) model (Garrison, Anderson, & Archer, 2001) in which we further examine a new construct labeled as learning presence. We use learning presence to refer to the iterative processes of forethought and planning, monitoring and adapting strategies for learning, and reflecting on results that successful students use to regulate their learning in online, interactive environments. To gain insight into these processes, we present results of a study using quantitative content analysis (QCA) and social network analysis (SNA) in a complementary fashion. First, we used QCA to identify the forms of learning presence reflected in students’ public (class discussions) and more private (learning journals) products of knowledge construction in online, interactive components of a graduate-level blended course. Next, we used SNA to assess how the forms of learning presence we identified through QCA correlated with the network positions students held within those interactional spaces (i.e., discussions and journals). We found that the students who demonstrated better self- and co-regulation (i.e., learning presence) took up more advantageous positions in their knowledge-generating groups. Our results extend and confirm both the CoI framework and previous investigations of online learning using SNA.

Keywords: Community of inquiry; learning presence; social network analysis; self-regulation; online learning; quantitative content analysis; learning journals; online discussions

As online learning continues to grow in higher education, it is critical that we gain a better understanding of the mechanisms by which we can promote its quality. The longstanding community of inquiry (CoI) model (Garrison, Anderson, & Archer, 2000) represents one such mechanism. This model describes the deliberate development of an online learning community, stressing the processes of instructional dialogue likely to lead to successful online learning. It explains formal online knowledge construction through the cultivation of various forms of presence: teaching, social, and cognitive presence (Garrison, Anderson, & Archer, 2001).

The CoI model theorizes online learning in higher education as a byproduct of collaborative work among active participants in learning communities characterized by instructional orchestration appropriate to the online environments (teaching presence) and a supportive, collegial online setting (social presence). The teaching presence construct outlines participant instructional responsibilities such as organization, design, discourse facilitation, and direct instruction (Anderson, Rourke, Garrison, & Archer, 2001) and articulates the specific behaviors likely to result in a productive community of inquiry (e.g., Swan & Shea, 2005). Social presence emphasizes online discourse that promotes positive affect, interaction, and cohesion (Rourke, Anderson, Garrison, & Archer, 1999) that supports a functional, collaborative learning environment. The model also refers to cognitive presence, a cyclical process of interaction intended to lead to significant learning within a community of learners.

More than 10 years of research and a recent two-part edited special issue of The Internet and Higher Education (Swan & Ice, 2010), dedicated to CoI and the advances in our understanding of online learning gained through this theory, are testament to its usefulness. However, with more than 6.7 million college students enrolled in at least one credit-bearing online course during 2012 and an accompanying growth rate of more than 9% (Allen & Seaman, 2013), it is clear that we will continue to need a comprehensive model that helps describe, explain, and predict how people learn online.

Recently, in an effort to make the CoI model more comprehensive, we (Shea & Bidjerano, 2010; Shea et al., 2012) suggested another dimension of presence in this model. In analyzing student contributions to online courses using the CoI model, we were unable to reliably identify instances of student generated discourse found in collaborative learning activities (such as online discussions and other areas used for group work) using indicators of teaching, social, and cognitive presence (see Shea, Hayes, & Vickers, 2010). Upon further investigation, we considered these student contributions to be examples of online learner self- and co-regulation and applied the term learning presence to describe this interaction. In our most recent CoI research, we presented learning presence (discussed in more detail below) as a new construct that is meant to complement and expand upon teaching, social, and cognitive presences contained in the CoI model.

Our conceptualization of learning presence is informed by Zimmerman’s (2008) well-researched theoretical construct of self-regulated learning, which refers to “students’ proactive use of specific processes [such as setting goals, selecting and deploying strategies, and self-monitoring one’s effectiveness] to improve their academic achievement” (p. 167). Self-regulation research conducted in the last two decades has concluded that self-direction (e.g., setting personal goals, using diverse modes of learning, and managing time) is predictive of better learning outcomes in classroom-based education (e.g., Zimmerman, 2000; Zimmerman & Schunk, 2001). In a similar vein, reviewing studies that investigated online learning (e.g., Bixler, 2008; Chang, 2007; Chung, Chung, & Severance, 1999; Cook, Dupras, Thompson, & Pankratz, 2005; Crippen & Earl, 2007; Nelson, 2007; Saito & Miwa, 2007; Shen, Lee, & Tsai, 2007; Wang, Wang, Wang, & Huang, 2006), Means and her colleagues (2009) also concluded that support for enhancing students’ self-regulation (such as initiative, perseverance, and adaptive skill) has a positive impact on their online learning.

Our conceptual framing of learning presence reflects learner self- and co-regulatory processes in online educational environments. The coding scheme we developed to delineate this construct aligns with Zimmerman’s concept of self-regulated learning and includes phases for forethought and planning, performance, and reflection, with emphasis on the goals and activities of online learners specifically. Under the forethought phase, we include planning, coordinating, and delegating or assigning online tasks to self and others in the early stages of the course, course module, or specific activity. In the performance phase, we include monitoring and strategy use. This phase is more elaborate and its monitoring component includes checking with online classmates for understanding, identifying problems or issues, noting completion of tasks, evaluating quality, monitoring during performance of the online activity, and taking corrective action if necessary. The monitoring component of performance also includes appraising personal and group interest or engagement in the online learning activity. The strategy use component of the performance phase includes advocating effort or focus, seeking, offering or providing help to complete the online activity, articulating gaps in knowledge, reviewing, noting outcome expectations, and seeking or offering additional information. Finally, the reflective component includes articulation of changes in thinking and causal attribution of results to individual or group performance in the online activity.

It is important to note that we define learning presence as distinct from the instructional design, facilitation of discourse, and direct instruction associated with teaching presence as well as the dimensions of social presence. Additionally, we define learning presence as distinct from each of the phases of cognitive presence (i.e., triggering event, exploration, integration, and resolution). (See Appendix A for additional details and examples of learning presence.)

Building on this expanded version of the CoI model, we hypothesized that for students who are asked to design and facilitate a portion of an online course (in this case, course discussions), this added responsibility might heighten their self- and co-regulatory behaviors, resulting in higher levels of learning presence. Further, when students collectively focus on knowledge construction in online discussions, they create a network, and the messages they post provide clues to the structure of that network and the relative positions that each student occupies within it. As a result, certain advantageous positions can emerge as indicators of relative prominence among participants (Aviv, Erlich, Ravid, & Geva, 2003; deLaat, Lally, Lipponen, & Simons, 2007a). With this understanding, our second hypothesis was that assigning facilitation roles to students might provide them with increased interaction with their peers, resulting in more prominent roles and network positions influencing the flow of information in the discussions. To test these two hypotheses, we sought to explore online learner self- and co-regulation (learning presence) reflected in quantitative content analysis of student discourse and advantageous positions reflected in social network analysis (descriptions of these methods of analysis are in the sections that follow). With these analyses, we sought to examine the effects of a scaffolded transfer of some instructional roles from the instructor to the learners in online discussions on the expression of learning presence and student location within the resulting network of interaction in those discussions. We theorized that elements of the learning presence construct may possibly be more or less evident in different components of the learning activities designed for the course. For example, we conjectured that we might find more instances of student reflection in activities designed to promote such reflection, such as learning journals. 
As such, the specific questions we asked were as follows:

1) When part of the instructional role is shared with students (elements of design and facilitation of discourse) to what extent is there an impact on the expression of self- and co-regulation (learning presence) as measured through quantitative content analysis of student discussion postings and learning journals?

2) What impact does the shared instructional role (learner design and facilitation of online discussions) have on metrics reflected in social network analysis? Do facilitators occupy more advantageous (e.g., central) locations in the social network?

3) How does student learning presence manifest when we compare more public, interactive forms of online learner self- and co-regulation as documented in student discussions versus more private venues such as individual learning journals? How are the three categories of learning presence and their constructs distributed across these two learning activities?

4) What network positions do students with high levels of combined learning presence in discussions and journals occupy relative to their peers?

5) How do prestige and influence correlate with combined learning presence in discussions and learning journals and in each of these activities when considered separately?

The data for this study consisted of students’ learning journals and transcripts of their online discussions collected from a doctoral-level research methods course that used blended instruction. The course, which was offered during the 2010 fall term at a large state university in the northeastern United States, met face-to-face for three weeks at the start of term and then switched to fully online instruction for the remainder of the semester. There were 18 students enrolled in this blended course. The online components of the course consisted of eight modules, with each module lasting for about two weeks. We report on the results from two sets of three concurrent discussions from one of the modules (Module 6) and the learning journals for that module.

Overall, the discussions we analyzed had an aggregated count of 223 student postings, each of which served as our unit of analysis. In each set of discussions, one discussion was required and there were two others from which students could select to participate. Student postings by discussion were as follows for Weeks 1 and 2 of Module 6: Week 1: Mandatory Discussion: 72; Option One: 30; Option Two: 28; and Week 2: Mandatory Discussion: 43; Option One: 18; Option Two: 32.

In Module 6, there were also a total of 16 journal entries posted to a blog forum. These learning journals were a course requirement and they were available for members of the whole class to read. In their journal entries, students were simply asked to include their comments, questions, insights, concerns, and other reactions to the content of the assigned readings. Although the journal entries were posted to the blog forum, they did not require continuous student interaction. Each student was expected to respond to only one or two other students’ journal posts. There were a total of 19 comments made by students to the journals we analyzed from Module 6.

Our hypothesis was that having students explicitly share the teaching presence role might foster additional expression of the kinds of self- and co-regulatory actions reflected in the learning presence construct. To test this hypothesis, we turned to the online discussion component of the course where students took more responsibility for aspects of teaching presence, specifically the facilitation of the discussions on course topics that they selected.

The online discussions students engaged in (described above) were a requirement in the course and they were scheduled in each of the eight modules. At the beginning of the semester, students divided themselves into teams of two to three students. Each team agreed to be the discussion facilitators for one module of instruction covering one of the course topics. Working with the instructor, each team selected key readings and devised leading questions and activities to facilitate the discussions around these readings. Following instructor guidelines, modeling, and suggestions, facilitators were expected to guide the class discussions, ask questions, raise issues, and state their agreements and disagreements with appropriate support and evidence from the literature.

We employed two methods of inquiry to analyze the data: quantitative content analysis and social network analysis (hereafter referred to as QCA and SNA).

QCA includes the process of searching text for recurring trends to identify frequencies (Adler & Clark, 2011). We conducted QCA using a revised version of the original learning presence coding scheme that was developed for a prior study (Shea et al., 2012). At the start of this study, two researchers who developed the original coding scheme refined it to align it more closely with Zimmerman’s (1998, 2000) three phases of self-regulation: forethought, performance, and self-reflection. This was accomplished by adding several new indicators and a new reflection category and re-categorizing the existing monitoring and strategy-use sections as sub-categories under a more inclusive organizing principle for self-regulation (i.e., performance, see Appendix A). After the refinement of the coding scheme, additional coders were trained to identify and count every occurrence of a learning presence code in the discussion transcripts and learning journals. No instructor posts were coded because the learning presence construct is specific to students.

In studies that employ QCA, rigorous coding protocols are crucial to reliability. To establish reliability, we began our coding with a test sample of learning journals and discussions from the course with the goal of identifying and negotiating our coding differences. Repeating the coding and negotiation processes with sample texts allowed us to establish an adequate level of inter-rater reliability (IRR), which we calculated using Holsti’s coefficient of reliability (CR). This method computes percent agreement using the formula 2M/(N1+N2), where M represents the total agreed-upon observations, N1 represents the total number of observations for coder 1, and N2 represents the total number of observations for coder 2 (Holsti, 1969; Krippendorff, 2004; Neuendorf, 2002). For exploratory research of this nature, an IRR of 0.70 is considered acceptable (Lombard, Snyder-Duch, & Bracken, 2002; Neuendorf, 2002). Although Lombard et al. (2002) recommend multiple matrices for establishing IRR, we chose to use the single measure of IRR, again due to the exploratory nature of our research. To ensure rigor and consistency, we avoided sampling and instead used 100% of the data in calculating IRR, and coders used ongoing negotiation to improve both IRRs and the coding scheme. For student learning journals, the average initial CR was 0.773 and the negotiated CR was 1.0000. For discussions, coders reached an average initial CR of 0.775 and a negotiated CR of 0.991. (See Appendix B for itemized journal and discussion IRR CRs.) All of these are acceptable measures of IRR for the purposes of this research.
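The formula above can be sketched in a few lines of Python. This is a minimal illustration, not the study's tooling: the function name `holsti_cr` and the counts passed to it are hypothetical and are not taken from the study's data.

```python
def holsti_cr(agreements: int, n_coder1: int, n_coder2: int) -> float:
    """Holsti's coefficient of reliability: 2M / (N1 + N2)."""
    total = n_coder1 + n_coder2
    if total == 0:
        raise ValueError("at least one coder must have recorded an observation")
    return 2 * agreements / total


# Hypothetical counts for illustration only (not the study's data):
# coder 1 made 40 coding decisions, coder 2 made 44, and they agreed on 35.
cr = holsti_cr(agreements=35, n_coder1=40, n_coder2=44)
print(f"CR = {cr:.3f}")

# Compare against the 0.70 threshold cited for exploratory research.
print("acceptable" if cr >= 0.70 else "below threshold")
```

Because the denominator sums both coders' observation counts, the coefficient reaches 1.0 only when every observation by either coder is matched by the other.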

We selected SNA as our second inquiry method because it offers the potential to explain the nature of networked relationships resulting from the flow of information and influence found among participants’ interactions. Within networked learning environments, SNA provides both visual and statistical analyses of interactions. Given the importance of interaction in the CoI framework, SNA has been adopted by several researchers as a method to better understand individual and group dimensions of online learning (e.g., Aviv, Erlich, Ravid, & Geva, 2003; Cho, Gay, Davidson, & Ingraffea, 2007; Dawson, 2008, 2010; Dawson, Bakharia, & Heathcote, 2010; Dennen, 2008; Lowes, Lin, & Wang, 2007; Macfadyen & Dawson, 2010; Russo & Koesten, 2005; Yang & Tang, 2003; Zhu, 2006). While previous researchers have employed other constructs from the CoI model with SNA (for example, deLaat, Lally, Lipponen, & Simons, 2007b, used SNA for teaching presence), most previous SNA research in online learning has lacked a comprehensive conceptual framing for knowledge construction that reflects the three core elements of the CoI model (social presence, teaching presence, and cognitive presence) that contribute to a meaningful online learning experience.

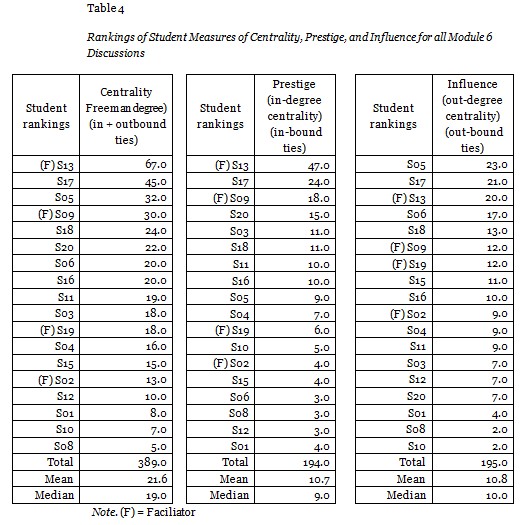

In this study, our purpose was to better understand the nature of the relationship between the fourth and new element of the CoI model, namely, learning presence, and students’ networked positions that may be advantageous in the support of online shared knowledge construction. To accomplish this, we used a key SNA measure: centrality. Centrality is a measure of prominence based on the number of mutual and unreciprocated ties or relations students have with each other. Centrality is an important measure because previous research on online learning has found that it correlates with positive learning outcomes (see Aviv, Erlich, Ravid, & Geva, 2003; deLaat, Lally, Lipponen, & Simons, 2007b; Heo, Lim, & Kim, 2010). We calculated students’ overall network centrality (Freeman degree) by combining measures of in-degree centrality, which are counts of inbound ties with other students, and out-degree centrality, which are counts of outbound ties. These same measures, when considered separately, are indicators of network prestige (in-degree centrality) and influence (out-degree centrality). In online discussions, prestige measures the number of incoming responses directed to a student’s discussion post and represents the degree to which other students seek out that student for interaction (deLaat, et al., 2007a). Students with high prestige are notable because their thoughts and opinions may be considered more important than others in the class. In contrast, students with high influence are in contact with many other students, as evidenced by the large number of discussion posts that they initiate to others. Students with low influence post fewer messages and are not as actively engaged with building or sustaining relationships with other students.
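The three degree-based measures described above can be illustrated with a short Python sketch. It assumes each reply counts as one directed tie from the sender to the recipient; whether the study counted every message or only distinct partners is not stated, so this weighting is an assumption. The function name `degree_measures` and the reply list are hypothetical.

```python
from collections import defaultdict


def degree_measures(replies):
    """Compute prestige, influence, and combined degree per student.

    replies: iterable of (sender, recipient) pairs, one per directed reply.
    Assumption: every reply counts as one tie.
    """
    in_degree = defaultdict(int)   # inbound ties  -> prestige
    out_degree = defaultdict(int)  # outbound ties -> influence
    students = set()
    for sender, recipient in replies:
        students.update((sender, recipient))
        out_degree[sender] += 1
        in_degree[recipient] += 1
    return {
        s: {
            "prestige": in_degree[s],
            "influence": out_degree[s],
            "degree": in_degree[s] + out_degree[s],
        }
        for s in sorted(students)
    }


# Hypothetical reply pairs for illustration only (not the study's data).
replies = [("09", "13"), ("13", "09"), ("17", "09"), ("02", "17"), ("09", "17")]
for student, m in degree_measures(replies).items():
    print(student, m)
```

In this toy network, student 02 sends one reply and receives none, so the student has influence 1 and prestige 0, which is the kind of profile that places a node at the edge of a network graph.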

We used all three measures (Freeman degree centrality, in-degree centrality [prestige], and out-degree centrality [influence]) to quantify students’ interactions in three aggregated online discussions and the learning journal entries. We also developed network graphs to illustrate these relationships and to explore the relative measures of students’ learning presence found in the discussions and learning journals. To this end, we used a new software tool called SNAPP (Social Networks Adapting Pedagogical Practice) (Dawson, 2008, 2010; Dawson et al., 2010; Dawson, Bakharia, & Heathcote, 2010). SNAPP was used to capture student discussion posts from all of the discussions in Module 6. We aggregated these data into adjacency matrices that represented all student interactions across all module discussions, and then we created a separate attribute file containing learning presence frequency counts for each student found in each module’s learning journals and discussion posts, as well as individual measures of prestige and influence calculated using UCINet software. Finally, we imported these files into the NetDraw software package to generate a series of network graphs which are analyzed in the Results section.

Research question 1: When part of the online instructional role is shared with students (elements of design and facilitation of discourse) to what extent is there an impact on the expression of self- and co-regulation (learning presence) as measured through quantitative content analysis of discussion postings and learning journals?

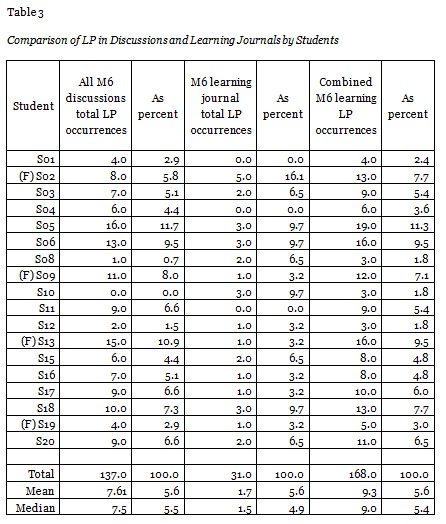

When comparing mean learning presence in the combined averaged discussions and learning journals of the Module 6 student facilitators (02, 09, 13, and 19) and the rest of the class, we found that the facilitator group exceeded their peers with an average of 11.3 versus 8.8 learning presence occurrences across the two learning activities. Thus, the facilitators exhibited 31% more learning presence indicators than their non-facilitating peers (see Table 1).

A Mann-Whitney U test was performed to determine whether student facilitators and non-facilitators differed with respect to levels of learning presence beyond statistical chance. Median combined occurrences of learning presence were 12.50 and 8.50, respectively. Although the student facilitators as a group had a higher average rank (Mrank = 7.0) than the student non-facilitators (Mrank = 10.21), the differences in the distribution of learning presence within the two groups were not statistically significant (Mann–Whitney U = 18.00, n1 = 4, n2 = 14, p = .286 two-tailed).
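As a consistency check on these figures, the U statistic can be recovered from the reported mean ranks. This is a worked sketch under the assumption that the reported U was computed from the facilitator group's rank sum using the standard rank-sum form; the symbols $R_1$, $R_2$, $\bar{R}_1$, and $N$ are introduced here for illustration and do not appear in the original analysis.

```latex
\begin{align*}
R_1 &= n_1 \bar{R}_1 = 4 \times 7.0 = 28 \\
U &= R_1 - \frac{n_1 (n_1 + 1)}{2} = 28 - \frac{4 \times 5}{2} = 18 \\
R_1 + R_2 &= 28 + 14 \times 10.21 = 170.94 \approx \frac{N (N + 1)}{2} = \frac{18 \times 19}{2} = 171
\end{align*}
```

The two mean ranks therefore agree with each other and with the reported U of 18.00, up to rounding of the non-facilitator mean rank.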

Research question 2: What impact does the shared instructional role (learner facilitation of online discussions) have on metrics reflected in social network analysis? Do facilitators occupy more advantageous locations in the social network?

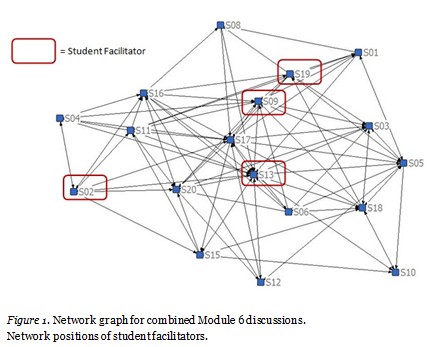

When we examined student interactions using a network graph (see Figure 1) to visualize the ties that emerged between students as a result of their postings in all of the discussions we analyzed, we found the following students were most centrally positioned in the network: 17, 13, and 09. Two members of this group were student facilitators (students 13 and 09). These three students were most active in initiating posts and responding to other students, as evidenced by the number of ties that connected them to their peers. In contrast, student facilitator 19 was somewhat more central, and student 02 was located on the edge of the network, because he had fewer peer relationships.

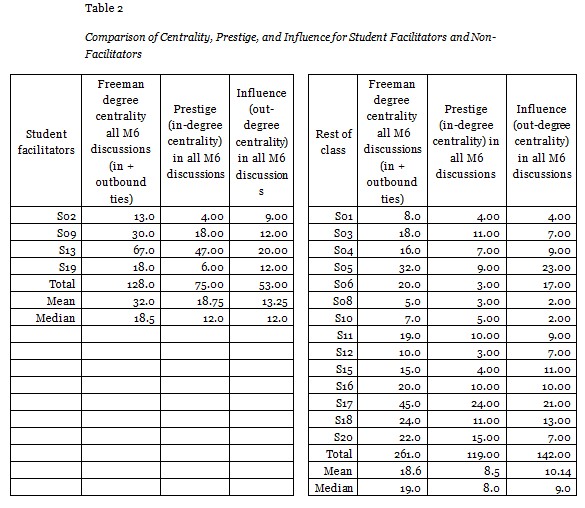

Overall, the student facilitators demonstrated more prominent network positions for prestige (in-degree centrality) and influence (out-degree centrality) than the rest of the class when these two measures were aggregated and averaged across the group (see Table 2). In terms of prestige, the facilitators had a median of 12.0 incoming ties versus 8.0 for the rest of the class. The median of outbound ties (influence) for the facilitator group was 12.0 versus 9.0 for their peers. In both cases, the facilitators had higher measures than non-facilitators.

Mann-Whitney U tests of differences in prestige and influence between student facilitators and non-facilitators indicated that, although the student facilitators had higher medians of inbound and outbound messages than their counterparts, the differences were not statistically significant for influence (Mann–Whitney U = 17.00, n1 = 4, n2 = 14, p = .24 two-tailed) or prestige (Mann–Whitney U = 19.00, n1 = 4, n2 = 14, p = .337 two-tailed).

Research question 3: How does student learning presence manifest when we compare more public, interactive forms of online learner self- and co-regulation as documented in student discussions versus more private venues such as individual learning journals? How are the three categories of learning presence and their constructs distributed across these two learning activities?

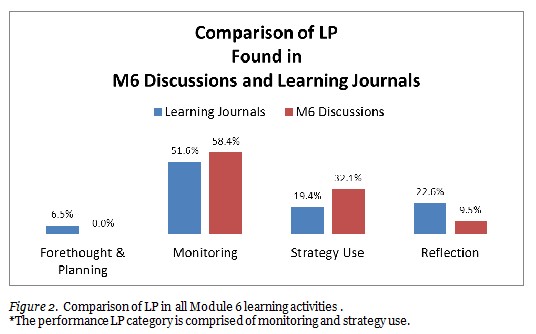

In comparing the distribution of the three learning presence categories (forethought and planning, performance, and reflection) across the two sets of learning activities in Module 6 (discussions and journals), we found that the monitoring component of performance was the most frequently coded in both discussions (58.4%) and learning journals (51.6%) (see Figure 2). From here, patterns diverged. In the six discussions, strategy use accounted for 32.1% of coded learning presence and reflection for only 9.5%, with no evidence of forethought and planning. In contrast, student learning journals demonstrated more evidence of reflection (22.6%), which occurred more frequently than strategy use (19.4%) and forethought and planning (6.5%). This provides evidence that the categories reflect the intended constructs; one would expect to see more reflection in activities such as learning journals in which students are asked to think about their learning.

A Wilcoxon signed-rank test was used to examine whether occurrences of learning presence differed overall between discussion posts and learning journal entries. The results indicated that 14 participants had higher learning presence occurrences in the discussion posts and four participants had higher occurrences of learning presence in the learning journals. The median occurrence of learning presence in discussions (Mdn = 7.50) was significantly higher than in learning journals (Mdn = 1.50), z = -3.51, p < .001.

Research question 4: What network positions do students with high levels of combined learning presence in discussions and journals occupy relative to their peers?

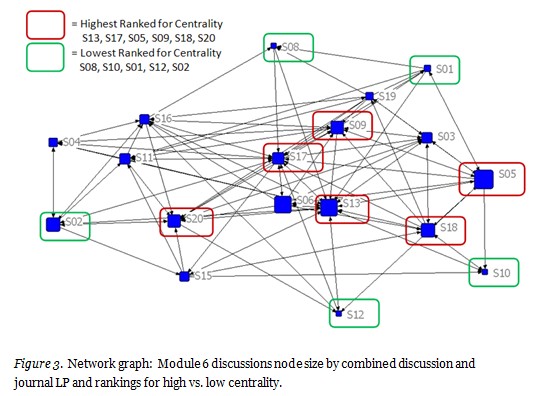

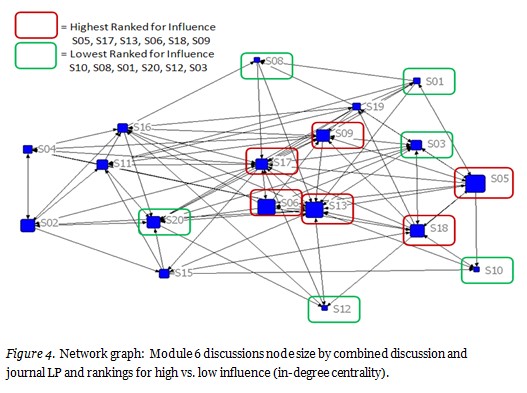

The network graphs in Figures 3 and 4 use scaling to change the node size to correspond to the relative percentages of each student’s combined learning presence occurrences based on all of the analyzed discussions and learning journals. With one exception, all of the students who were ranked with highest learning presence were near the center of the network, indicating they had the greatest interaction with their peers. All of the students with the lowest learning presence were found at the periphery of the network.

To further analyze the effect of learning presence on online activity, a median split was used to identify students with high and low levels of combined learning presence from both discussions and journals (see Table 3). The newly created variable served as grouping to examine differences in centrality, prestige, and influence. As mentioned earlier, we calculated Freeman degree centrality by combining measures of in-degree centrality, which are counts of inbound ties with other students, and out-degree centrality, which are counts of outbound ties. These same measures, when considered individually, are indicators of network prestige (in-degree centrality) and influence (out-degree centrality) (see Table 4). With students’ ranks as a dependent measure, learning presence levels (high vs. low) had an effect on the overall centrality of student positions on the network (Mann–Whitney U = 6.50, n1 = 8, n2 = 10, p =.003 two-tailed).
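A median split of this kind can be sketched as follows. The study does not state how scores tied at the median were assigned, so this sketch assumes that only scores strictly above the median count as "high"; the function name `median_split` and the scores are hypothetical.

```python
from statistics import median


def median_split(scores):
    """Label each student 'high' or 'low' relative to the group median.

    Assumption: scores equal to the median are labeled 'low'.
    """
    cut = median(scores.values())
    return {sid: ("high" if value > cut else "low") for sid, value in scores.items()}


# Hypothetical combined learning presence counts (not the study's data).
scores = {"01": 4, "02": 12, "03": 9, "04": 9, "05": 15, "06": 2}
groups = median_split(scores)
print(groups)
print(sum(1 for g in groups.values() if g == "high"), "high;",
      sum(1 for g in groups.values() if g == "low"), "low")
```

With tied scores at the median, the two groups need not be equal in size, which is one way a split of 18 students can yield groups of 8 and 10.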

With students’ ranks in terms of influence as a dependent measure, the results indicated that students with high learning presence ranked higher on influence (Mann–Whitney U = 10.50, n1 = 8, n2 = 10, p = .008 two-tailed) (see Figure 4). A pattern of network positions somewhat similar to that in Figure 3 appears in Figure 4, with a core group comprised of students 05, 09, 13, 17, and 18, all ranking among the highest in both graphs for centrality and influence. An independent-samples test with prestige ranks as the criterion showed no statistically significant difference in students’ prestige ranks between high and low levels of LP (Mann–Whitney U = 19.50, n1 = 10, n2 = 8, p = .068 two-tailed).

Research question 5: How do prestige and influence correlate with combined learning presence in discussions and learning journals and in each of these activities when considered separately?

When we examined combined learning presence found in discussions and learning journals, results from correlation analysis indicated that, as a whole, this measure has a positive and moderate correlation with prestige (Spearman rho (18) = .451, p = .06) and a positive and large correlation with influence (Spearman rho (18) = .737, p < .001).
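For readers less familiar with the statistic, Spearman's rho is the Pearson correlation computed on ranks, with tied values sharing their average rank. The sketch below is a plain-Python illustration under that definition; the helper names and the data are hypothetical, and it does not compute a p-value.

```python
from math import sqrt


def average_ranks(values):
    """Return 1-based ranks, giving tied values the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1  # mean of 1-based positions i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks


def spearman_rho(x, y):
    """Spearman rank correlation: Pearson correlation of the rank vectors."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("x and y must have the same length (at least 2)")
    rx, ry = average_ranks(x), average_ranks(y)
    n = len(rx)
    mx, my = sum(rx) / n, sum(ry) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    sx = sqrt(sum((a - mx) ** 2 for a in rx))
    sy = sqrt(sum((b - my) ** 2 for b in ry))
    if sx == 0 or sy == 0:
        raise ValueError("rho is undefined when one variable is constant")
    return cov / (sx * sy)


# Hypothetical per-student values for illustration only (not the study's data).
learning_presence = [12, 7, 9, 3, 15]
influence = [10, 6, 11, 2, 14]
print(round(spearman_rho(learning_presence, influence), 3))
```

Working on ranks rather than raw counts makes the measure robust to the skewed frequency data typical of small classes, which is consistent with the nonparametric tests used elsewhere in the analysis.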

When discussions were considered separately from learning journals, the relationship between learning presence in discussion posts and prestige was moderate, Spearman rho (18) = .569, p = .014. Even though the results from direct group comparisons were not statistically significant, the students with prominent positions on the variable prestige tended to also have higher ranks on LP in discussion, Mann–Whitney U = 7.00, n1 = 3, n2 = 15, p = .065 two-tailed. Further, the relationship between influence and learning presence in discussion posts was large and statistically significant, Spearman rho (18) = .781, p < .001. Furthermore, when grouped based on influence, students with higher positions also tended to have higher ranks on the variable LP in discussion, Mann–Whitney U = 3.00, n1 = 4, n2 = 14, p = .008 two-tailed.

Non-significant correlations between journal learning presence and prestige (Spearman rho (18) = -.211, p = .40) and between journal learning presence and influence (Spearman rho (18) = .081, p = .75) provided no evidence that journal learning presence is related to prestige or influence. The results from Mann-Whitney tests showed that high and low prestige within the network cannot be reliably linked to levels of journal learning presence, Mann–Whitney U = 15.00, n1 = 3, n2 = 15, p = .363 two-tailed. Also, journal learning presence did not differ between students with high and low influence in the network, Mann–Whitney U = 20.00, n1 = 4, n2 = 14, p = .385 two-tailed. Again, this suggests that certain students, perhaps those who are less active in public forums, do nonetheless exhibit elements of learning presence in more private forums, and that asking them to facilitate a module may result in higher expressions of learning presence.

With regard to results for our first research question, we found patterns that were suggestive, yet not statistically significant. While student facilitators expressed more evidence of learning presence than their peers, these patterns within a single module were not significant. It seems possible that with a larger sample size, more definitive conclusions could be reached, and further research is warranted. In response to our second research question, regarding the network positions of facilitators, we found similarly suggestive patterns of centrality. However, although facilitators occupied more central locations within the network, associated metrics were not significantly different. When we consider our third research question, it is not surprising that students engaged in more reflection in the learning journals than in the discussions. The journals asked students to reflect on their learning processes and they did so. It is somewhat illuminating that students engaged in more learning presence overall in the discussions and that the most frequent form of self-regulation in both journals and discussions was monitoring. Finally, results for our last research question indicated that metrics of self-regulation evidenced in QCA appear to identify students who are both influential and prestigious as measured by SNA. It seems probable that the capacity to self-regulate in online environments results in more relevant or more sophisticated discourse, making students with better learning presence more attractive interlocutors for their classmates.

As noted by previous researchers (e.g., deLaat, Lally, Lipponen, & Simons, 2007b) the combination of QCA and SNA may allow for a compatible research approach illuminating some of the qualities of both form and content of interactions in online learning environments. Through the combination of these kinds of analysis, we are able to uncover important patterns bearing on the effects of approaches to new online pedagogy generated from the CoI framework. We have also extended the use of SNA in analyzing a new construct (learning presence) within the CoI framework.

Facilitating learner self-regulation has been shown to have advantageous outcomes in much research in classrooms (e.g., Zimmerman, 2000) and in emergent research in online environments (Means et al., 2009). In past research, it has been suggested that providing students with more complex collaborative tasks results in higher levels of self- and co-regulatory performance (Shea et al., 2012). This study sought to extend previous findings by implementing learner-centered forms of instruction in which we analyzed levels of learning presence of student facilitators and non-facilitators in online discussions and journals through QCA and SNA.

Specifically, in this paper, we analyzed a new element in the CoI model reflecting online learner co- and self-regulatory processes – learning presence. We examined the impact of providing a scaffolded shift in instructional roles in which learners were supported to take on more of the responsibility for design and facilitation of discourse (elements of teaching presence) and observed the resulting variation in associated indicators of self- and co-regulatory performance (learning presence) reflected through QCA of different learning activities. Through research questions 1, 2, and 4 we discovered that lead student facilitators exhibit higher levels of learning presence and occupy more advantageous locations reflected in SNA.

Through the results for our third research question, we uncovered significant and illuminating patterns in categories of learning presence in different learning activities. Perhaps not surprisingly, forethought and planning are not very evident in either online discussions or learning journals, where strategy use and reflection are more common. That learners exhibit more strategy use during performance (online discussion) and greater monitoring and reflection in journal activities validates the intended categories within the learning presence construct. We would expect to see these patterns, that is, more reflection and monitoring in journals and greater strategy use during performance, and we found them.

Research question 5 is significant in that results suggest that students with high discussion learning presence also have high in-degree centrality, indicating that other students sense that they are valuable partners for interaction and the knowledge building meant to result from it. These results suggest that higher levels of learning presence in online discussions are reflected in important metrics associated with SNA. Also of note is the finding that learning presence dimensions that are evident in certain activities (learning journals) are not automatically associated with metrics important in SNA.
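
To make the in-degree measure discussed above concrete, the following is a minimal sketch of how normalized in-degree and out-degree centrality can be derived from a directed reply network. It is an illustration under stated assumptions rather than the procedure used in this study: ties are treated as binary (repeated replies between the same pair count once), self-replies are ignored, and the function name and reply log are hypothetical. Reading in-degree as a prestige-like measure and out-degree as an influence-like measure is likewise an assumption made for this sketch.

```python
from collections import defaultdict

def degree_centrality(edges):
    """Return normalized in- and out-degree for each node in a directed network.

    edges: iterable of (sender, receiver) pairs; repeated pairs count once
    and self-loops are dropped (both are simplifying assumptions).
    """
    unique = {(s, r) for s, r in edges if s != r}
    nodes = {n for pair in unique for n in pair}
    in_deg = defaultdict(int)
    out_deg = defaultdict(int)
    for sender, receiver in unique:
        out_deg[sender] += 1   # ties the student initiates
        in_deg[receiver] += 1  # ties directed at the student
    # Normalize by the maximum possible number of partners (n - 1).
    denom = max(len(nodes) - 1, 1)
    in_c = {n: in_deg[n] / denom for n in nodes}
    out_c = {n: out_deg[n] / denom for n in nodes}
    return in_c, out_c

# Hypothetical reply log: (author of reply, author replied to).
replies = [
    ("ana", "ben"), ("cal", "ben"), ("dee", "ben"),
    ("ben", "ana"), ("cal", "ana"),
]
in_c, out_c = degree_centrality(replies)
```

In this invented log, "ben" receives replies from every other participant and so has the highest in-degree, the pattern that the discussion above associates with being sought out as a partner for interaction.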

Overall, these findings are significant in that they support and extend previous research seeking to enhance one of the dominant theories (the CoI framework) that describes, explains, and predicts learning in online environments. Results here represent important support for the validity of learning presence as a complementary construct to this framework. Findings indicating that learning presence can be fostered through shared instructional roles and that this form of self- and co-regulatory performance is associated with advantageous locations in social networks suggest that the construct is useful. We conclude that the long-standing belief that online learners require greater self-direction, time management, and the like is supported and better explained through the more inclusive theoretical construct of self-regulated learning and the related construct of online learning presence. We further conclude that the online environment creates demands for new forms of self-regulation that are under-articulated in the current CoI model. We believe that the model can be enhanced through additional research into the specific roles of learners qua learners in collaborative online education.

This paper contributes to the literature on constructivist online learning and on SNA. Specifically, the paper contributes to SNA by adding analysis of a new theoretical construct, learning presence, to it. A weakness of SNA in online educational research has been its lack of a relevant theoretical framing for metrics of centrality. We don’t know, for example, based on the numbers of ties between participants in online learning contexts, whether such connections reflect the quality of the discourse or other processes important to learning. We assume that through interaction, learners increase their opportunity to activate processes known to support knowledge construction. For example, in line with constructivist theories of online learning, Chi (2009) explains that interaction involves co-construction of knowledge and enhances understanding by allowing learners to do things like build upon each other’s contributions, defend and argue positions, challenge and criticize each other on the same concepts or points, and ask and answer each other’s questions. Chi argues that such interaction is constructive in nature, because learners are generating knowledge that goes beyond the information that would typically be provided in learning materials. The cognitive benefits of such interaction include that a partner’s contributions can provide additional information, new perspectives, corrective feedback, reminders, or a new line of reasoning, which can enhance learning through added guidance, hints, and/or scaffolds that either enrich knowledge or support additional inferencing. Given our results with regard to SNA metrics of influence and prestige, it seems probable that the capacity to self-regulate in online environments leads to more relevant or sophisticated discourse, making students with better learning presence more attractive interlocutors for their classmates. Chi’s rationale for the importance of interaction thus lends weight to the significance of learning presence in courses that depend on online discourse to promote learning.

Through the analysis of learning presence within SNA, we sought to understand whether learners who evince higher levels of online self-regulated learning (learning presence) in their discourse also occupy more central locations within the interaction networks reflected through SNA. In other words, do indicators of learning presence correlate with indicators of prestige and influence measured through SNA, which are meant to indicate richer interactive opportunities of the type that support knowledge creation? Is SNA a promising research method for examining theoretically grounded explanations of online learning? Results reported here suggest that SNA does reflect constructs that are grounded in theories of how people learn, as adapted for online environments. Specifically, these results indicate that students with higher levels of learning presence occupy more advantageous positions, indicating that they are more active and more sought after in networks of interaction. This represents a promising conclusion, and additional research into the relationship between learning presence and interaction is warranted.

Finally, we believe that this research continues to provide evidence for the validity of the learning presence construct. Learning presence patterns revealed in this study indicate that student self-regulation as defined here is both logical (the learning presence patterns make sense) and important (learning presence correlates with metrics assumed to be advantageous for interaction). We, therefore, suggest that the inclusion of learning presence in the CoI model may be warranted.

Adler, E. S., & Clark, R. (2011). An invitation to social research: How it’s done. Belmont, CA: Wadsworth.

Allen, I. E., & Seaman, J. (2013). Changing course: Ten years of tracking online education in the United States. Babson Park, MA: Babson Survey Research Group.

Anderson, T., Rourke, L., Garrison, D. R., & Archer, W. (2001). Assessing teaching presence in a computer conferencing context. Journal of Asynchronous Learning Networks, 5(2), 1–17.

Aviv, R., Erlich, Z., Ravid, G., & Geva, A. (2003). Network analysis of knowledge construction in asynchronous learning networks. Journal of Asynchronous Learning Networks, 7(3), 1-23.

Bixler, B. A. (2008). The effects of scaffolding student’s problem-solving process via question prompts on problem solving and intrinsic motivation in an online learning environment (Doctoral dissertation). The Pennsylvania State University, State College, PA.

Chang, M. M. (2007). Enhancing web-based language learning through self-monitoring. Journal of Computer Assisted Learning, 23(3), 187–196. doi: 10.1111/j.1365-2729.2006.00203.x

Chi, M. T. H. (2009). Active-constructive-interactive: A conceptual framework for differentiating learning activities. Topics in Cognitive Science, 1, 73-105. doi: 10.1111/j.1756-8765.2008.01005.x

Cho, H., Stefanone, M., & Gay, G. (2002). Social network analysis of information sharing networks in a CSCL community. Paper presented at the Proceedings of Computer Support for Collaborative Learning (CSCL) 2002 Conference, Boulder, CO.

Cho, H., Gay, G., Davidson, B., & Ingraffea, A. (2007). Social networks, communication styles, and learning performance in a CSCL community. Computers & Education, 49(2), 309–329.

Chung, S., Chung, M.-J., & Severance, C. (1999). Design of support tools and knowledge building in a virtual university course: Effect of reflection and self-explanation prompts. Paper presented at the WebNet 99 World Conference on the WWW and Internet Proceedings, Honolulu, Hawaii. (ERIC Document Reproduction Service No. ED448706)

Cook, D. A., Dupras, D. M., Thompson, W. G., & Pankratz, V. S. (2005). Web-based learning in residents’ continuity clinics: A randomized, controlled trial. Academic Medicine, 80(1), 90–97. doi: 10.1007/s11606-008-0541-0

Crippen, K. J., & Earl, B. L. (2007). The impact of web-based worked examples and self-explanation on performance, problem solving, and self-efficacy. Computers & Education, 49(3), 809–821. doi: 10.1016/j.compedu.2005.11.018

Dawson, S. (2008). A study of the relationship between student social networks and sense of community. Educational Technology & Society, 11(3), 224-238.

Dawson, S., Bakharia, A., & Heathcote, E. (2010). SNAPP: Realizing the affordances of real-time SNA within networked learning environments. Proceedings of the 7th International Conference on Networked Learning.

Dawson, S. (2010). ‘Seeing’ the learning community: An exploration of the development of a resource for monitoring online student networking. British Journal of Educational Technology, 41(5), 736-53. doi: 10.1111/j.1467-8535.2009.00970.x

deLaat, M., Lally, V., Lipponen, L., & Simons, R. J. (2007a). Investigating patterns of interaction in networked learning and computer-supported collaborative learning. International Journal of Computer-Supported Collaborative Learning, 2, 87-103.

deLaat, M., Lally, V., Lipponen, L., & Simons, R. (2007b). Online teaching in networked learning communities: A multi-method approach to studying the role of the teacher. Instructional Science, 35(3), 257-286.

Dennen, V. P. (2008). Looking for evidence of learning: Assessment and analysis methods for online discourse. Computers in Human Behavior, 24(2), 205-219. doi: 10.1016/j.chb.2007.01.010

Garrison, D. R., Anderson, T., & Archer, W. (2000). Critical inquiry in a text-based environment: Computer conferencing in higher education. The Internet and Higher Education, 2(2-3), 87–105. doi: 10.1016/S1096-7516(00)00016-6

Garrison, D. R., Anderson, T., & Archer, W. (2001). Critical thinking, cognitive presence, and computer conferencing in distance education. American Journal of Distance Education, 15(1), 7–23.

Heo, H., Lim, K. Y., & Kim, Y. (2010). Exploratory study on the patterns of online interaction and knowledge co-construction in project-based learning. Computers & Education, 55(3), 1383–1392.

Holsti, O. R. (1969). Content analysis for the social sciences and humanities. Reading, MA: Addison-Wesley.

Krippendorff, K. (2004). Content analysis: An introduction to its methodology (2nd ed.). Thousand Oaks, CA: Sage Publications.

Lombard, M., Snyder-Duch, J., & Bracken, C. C. (2002). Content analysis in mass communication: Assessment and reporting of intercoder reliability. Human Communication Research, 28(4), 587-604. doi: 10.1111/j.1468-2958.2002.tb00826.x

Lowes, S., Lin, P., & Wang, Y. (2007). Studying the effectiveness of the discussion forum in online professional development courses. Journal of Interactive Online Learning, 6(3), 181-210.

Macfadyen, L. P., & Dawson, S. (2010). Mining LMS data to develop an “early warning system” for educators: A proof of concept. Computers & Education, 54, 588-599. doi: 10.1016/j.compedu.2009.09.008

Means, B., Toyama, Y., Murphy, R., Bakia, M., & Jones, K. (2009). Evaluation of evidence-based practices in online learning: A meta-analysis and review of online learning studies. Washington, D.C.: U.S. Department of Education, Office of Planning, Evaluation, and Policy Development.

Nelson, B. C. (2007). Exploring the use of individualized, reflective guidance in an educational multi-user virtual environment. Journal of Science Education and Technology, 16(1), 83–97. doi: 10.1007/s10956-006-9039-x

Neuendorf, K. A. (2002). The content analysis guidebook. Thousand Oaks, CA: Sage Publications.

Rourke, L., Anderson, T., Garrison, D. R., & Archer, W. (1999). Assessing social presence in asynchronous text-based computer conferencing. Journal of Distance Education, 14(2), 50–71.

Russo, T. C., & Koesten, J. (2005). Prestige, centrality and learning: A social network analysis of an online class. Communication Education, 54(3), 254-261. doi: 10.1080/03634520500356394

Saito, H., & Miwa, K. (2007). Construction of a learning environment supporting learners’ reflection: A case of information seeking on the Web. Computers & Education, 49(2), 214–229. doi: 10.1016/j.compedu.2005.07.001

Shea, P., & Bidjerano, T. (2010). Learning presence: Towards a theory of self-efficacy, self-regulation, and the development of a communities of inquiry in online and blended learning environments. Computers & Education, 55(4), 1721–1731. doi:10.1016/j.compedu.2010.07.017

Shea, P., Hayes, S., Uzuner-Smith, S., Vickers, J., Bidjerano, T., Gozza-Cohen, M., Jian, S., Pickett, A., Wilde, J., & Tseng, C. (2012). Online learner self-regulation: Learning presence, viewed through quantitative content- and social network analysis. Paper presented at the American Educational Research Association annual meeting, Vancouver, Canada.

Shea, P., Hayes, S., & Vickers, J. (2010). Online instructional effort measured through the lens of teaching presence in the community of inquiry framework: A re-examination of measures and approach. International Review of Research in Open and Distance Learning, 11(3), 127-154.

Shen, P. D., Lee, T. H., & Tsai, C. W. (2007). Applying web-enabled problem-based learning and self-regulated learning to enhance computing skills of Taiwan’s vocational students: A quasi-experimental study of a short-term module. Electronic Journal of e-Learning, 5(2), 147–156.

Swan, K., & Ice, P. (2010). The community of inquiry framework ten years later: Introduction to the special issue. Internet and Higher Education, 13(1-2), 1-4.

Swan, K., & Shea, P. J. (2005). The development of virtual learning communities. Asynchronous learning networks: The research frontier (pp. 239–260). New York: Hampton Press.

Wang, K. H., Wang, T. H., Wang, W. L., & Huang, S. C. (2006). Learning styles and formative assessment strategy: Enhancing student achievement in web-based learning. Journal of Computer Assisted Learning, 22(3), 207–217. doi: 10.1111/j.1365-2729.2006.00166.x

Yang, H.-L., & Tang, J.-H. (2003). Effects of social network on students’ performance: A web-based forum study in Taiwan. Journal of Asynchronous Learning Networks, 7(3), 93-107.

Zhu, E. (2006). Interaction and cognitive engagement: An analysis of four asynchronous online discussions. Instructional Science, 34(6), 451-480. doi: 10.1007/s11251-006-0004-0

Zimmerman, B. J. (1998). Developing self-fulfilling cycles of academic regulation: An analysis of exemplary instructional models. In D. H. Schunk & B. J. Zimmerman (Eds.), Self-regulated learning: From teaching to self-reflective practice (pp. 1–19). New York: Guilford.

Zimmerman, B. J. (2000). Attaining self-regulation: A social cognitive perspective. In M. Boekaerts, P. R. Pintrich & M. Zeidner (Eds.), Handbook of self-regulation (pp. 13–39). New York: Academic Press.

Zimmerman, B. J., & Schunk, D. H. (2001). Theories of self-regulated learning and academic achievement: An overview and analysis. In B. J. Zimmerman & D. H. Schunk (Eds.), Self-regulated learning and academic achievement: Theoretical perspectives (pp. 1-36). Mahwah, NJ: Lawrence Erlbaum.

Zimmerman, B. J. (2008). Investigating self-regulation and motivation: Historical background, methodological developments, and future prospects. American Educational Research Journal, 45(1), 166–183. doi:10.3102/0002831207312909
