Chantorn Chaiprasurt and Vatcharaporn Esichaikul

Asian Institute of Technology, Thailand

Mobile technologies have helped establish new channels of communication among learners and instructors, potentially providing greater access to course information, and promoting easier access to course activities and learner motivation in online learning environments. The paper compares motivation between groups of learners being taught through an online course based on an e-learning system with and without the support of mobile communication tools, respectively. These tools, which are implemented on a mobile phone, extend the use of the existing Moodle learning management system (LMS) under the guidance of a mobile communication tools framework. This framework is considered to be effective in promoting learner motivation and encouraging interaction between learners and instructors as well as among learner peers in online learning environments. A quasi-experimental research design was used to empirically investigate the influence of these tools on learner motivation using subjective assessment (for attention, relevance, confidence, satisfaction, and social ability) and objective assessment (for disengagement, engagement, and academic performance). The results indicate that the use of the tools was effective in improving learner motivation, especially in terms of the attention and engagement variables. Overall, there were statistically significant differences in subjective motivation, with a higher level achieved by experimental-group learners (supported by the tools) than control-group learners (unsupported by the tools).

Keywords: e-learning; mobile communication tools; motivation; online courses; online learning

Online learning increases learners’ ability to learn at their own convenience; however, the physical separation from their peers and instructors that online learning involves may result in a lack of communication and interaction and a weaker sense of belonging to a classroom community. These affect learners’ motivation and can lead to poor performance, dissatisfaction, and dropout (Balaban-Sali, 2008; Hirumi, 2002; Rau, Gao, & Wu, 2008). Effective interaction can positively impact learners’ motivation, engagement, and interest in learning. To address learners’ problems and needs in relation to motivation during online courses, new communication technology, particularly mobile technology, seems to be effective by virtue of its ability to encourage interaction between learners and instructors (Rau et al., 2008; Shih & Mills, 2007).

Mobile learning gives students the ability to interact with their instructors and fellow learners immediately, at any time and place, and to adapt content to their individual needs, which in turn facilitates sustained connections between learners and instructors. Suitable uses include content or processes where appropriate knowledge needs to be quickly and easily accessible and where a large volume of introduction or context is not needed; topics that draw on the viewpoints and opinions of recognizable instructors with whom the students have had the opportunity to have fulfilling interactions; topics where advice, tips, and best practices can be simply presented and packaged, for example in areas such as recruitment and coaching; topics where the capability to locate them at a certain place or time adds value, for example, location-specific access; and topics where the student will almost certainly benefit from access to learning on the move, as with learners such as field engineers and salespeople (Ufi & Kineo, 2007).

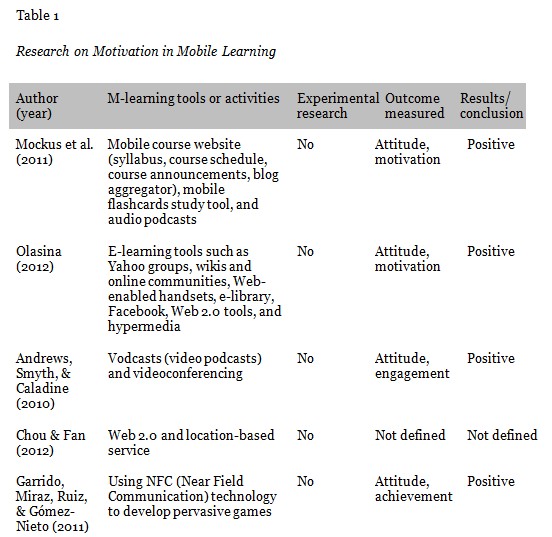

Recent innovations incorporating m-learning activities in various online courses are reportedly effective in encouraging the motivation to learn (e.g., Mockus et al., 2011; Olasina, 2012). However, m-learning studies that explore motivation to learn in online environments remain limited in number. Table 1 summarises research on motivation in m-learning settings in recent years.

A review of the current literature indicates that there are various m-learning tools or activities presently used to motivate online learners. Web 2.0 tools and audio and video podcasts seem more popular than other tools. However, these research studies have tended to develop mobile-based learning applications without identifying any design strategy for stimulating and supporting learner motivation. Apparently, none of these studies conducted an experiment to investigate whether motivation changed or improved as a result of the proposed m-learning tools.

Previous approaches to motivational design in online learning environments have mostly been based on Keller’s (1987) ARCS model (e.g., Bae, Lim, & Lee, 2005; Jones, Issroff, Scanlon, Clough, & McAndrew, 2006; Shih & Mills, 2007). The ARCS model identifies four essential strategic components for instructors to enhance learning motivation. These components are attention, where the instructor gains and sustains the learners’ attention and interest throughout the session; relevance, which is conceptualized as a matter of how learning activities are depicted to the learners as reflecting their needs, interests, and motives, rather than actually referring to content; confidence, which focuses on learner performance, and helps learners develop a positive expectation that they will be able to achieve a successful learning experience; and satisfaction, which provides learners with positive reinforcement for their efforts (Keller, 1987).

In addition, collaborative learning—learning through social interaction—has been extensively acknowledged for its ability to encourage a spirit of learning and to foster knowledge about the learning process (Sharan & Shaulov, 1990). Since cooperative learning groups provide each member with essential praise and recognition for their positive effort, cooperative learning activity can raise learners’ motivation. Miyake (2007) and Slavin (1995) have shown as well that learners are more motivated to learn in a collaborative situation and that this has a positive effect on academic, social, and attitudinal outcomes.

Although a significant number of studies have examined the motivational requirements of online learners and on that basis created models for motivational design in online instruction, very little research has been conducted on learners’ motivation in relation to the use of mobile devices as a complement to existing e-learning systems. Therefore, this study attempts to show that online learners’ motivation can be enhanced when the proposed mobile communication tools are used as part of an existing e-learning system in accordance with a motivational design model. This study is designed to examine whether the proposed mobile communication tools have the effect of stimulating and maintaining learners’ motivation to use the e-learning system, and also whether any motivational differences exist across different conditions of online courses (i.e., those using mobile tools vs. not using them).

The quasi-experimental study presented in this paper was designed to compare learning motivation between learners in a course being taught using Moodle LMS supported by mobile communication tools (the experimental group) and those being taught using regular Moodle LMS only (control group). An experimental comparison of the two groups’ learning motivation was carried out in the first semester of 2011 in the Faculty of Information Technology at a university in Thailand. The initial sample consisted of 193 undergraduate students (68% female, 93% aged 18–21) enrolled that term in a course called IT for Learning. These initial participants were assigned to control (n = 92) and experimental (n = 101) groups on the basis of their demographic profile in order to ensure homogeneity across the groups, thus reducing bias.

Learners in both the experimental and control groups learned the same content and used the same online course materials. The courses consisted of weekly modules, wherein the instructor required the learners to regularly take part in a variety of online class activities, for example, to read a given text online, post a short essay, engage in debate on the discussion forums, create their own blogs on course topics, and vote on class social activities. During the courses, learners were assessed regularly by means of formative assessments, including discussion forum posts and individual assignments, and summative assessments, including assignment grades and midterm and final exams.

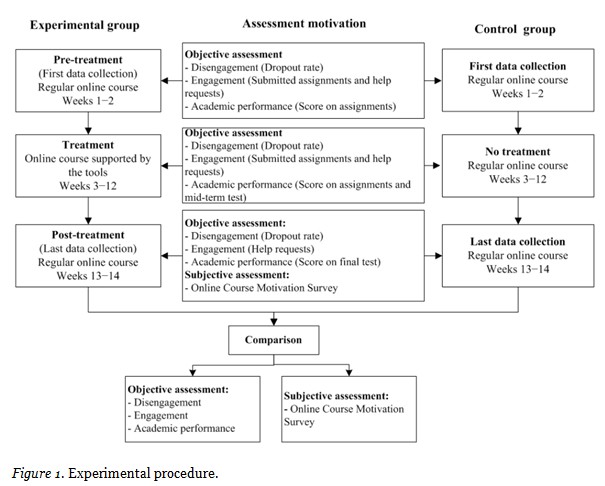

The quasi-experimental design employed by this study can be separated into three periods: the pre-treatment period (first and second weeks), the treatment period (third to twelfth weeks), and the post-treatment period (thirteenth and fourteenth weeks). Figure 1 describes the procedure used in the experiment. Learners from both groups began in the pre-treatment period (the first two weeks, in which no treatment had yet been applied). They took part in regular online courses using Moodle, and objective assessment data (on disengagement, engagement, and academic performance) were collected on the basis of their actions. Data from this pre-treatment period were collected and analysed in relation to those from the treatment and post-treatment periods. During the treatment period, the learners in the experimental group were provided access to mobile communication tools integrated with Moodle, whereas the learners in the control group were able to access regular Moodle only. Scores on the midterm test were added to treatment assessments to assess learners’ academic progress and performance. During the post-treatment period, learners in both groups learned through the regular Moodle LMS, unsupported by the mobile tools. In this period, the objective assessment measures of engagement and academic performance were, respectively, the number of help requests made by the learners and their scores on the final test. Learners were also asked to complete the Online Course Motivation Survey.

This study used the procedure shown in Figure 1 to develop appropriate mobile communication tools integrated with Moodle LMS for online learners in the experimental group.

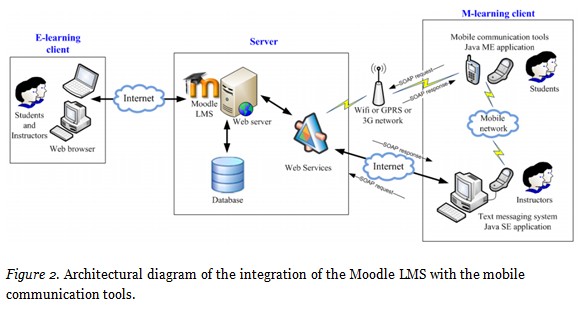

The mobile communication tools used for the experiment are an extension of the Moodle LMS that takes advantage of interactive mobile applications. Figure 2 presents an overview of the whole system, including the e-learning client, server, and m-learning client. The e-learning client consisted of learning products delivered via a web browser over a network. This study also employed some user interfaces customised to suit the proposed tools in the presentation layer of Moodle. The second component was the server, which delivered course information from a database to the (desktop or laptop) browser and hosted the required web services (translating LMS requests for the mobile devices). The last component, the m-learning client, was composed of the text messaging system and mobile communication tools. The former was used only by the instructors, to send text messages retrieved from Moodle to individual learners, while the latter were used by learners on their mobile phones to access Moodle.

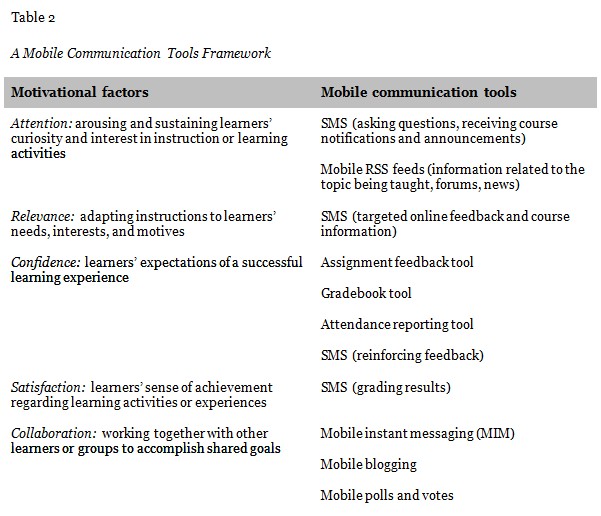

This study’s development of mobile communication tools to enhance motivation in e-learning is guided by a combination of Keller’s ARCS motivation model and the collaboration research of Chaiprasurt, Esichaikul, and Wishart (2011), which presented a framework for tool design and showed that the tools developed were effective in improving learner motivation.

Table 2 illustrates how the ARCS model and the collaboration factors were utilised as a framework for developing mobile communication tools into existing e-learning systems. The approach to designing the tools based on the framework is discussed in more detail in the following sections, focusing on collaboration factor by factor and tool by tool.

Strategies to gain and sustain learners’ attention and interest include capturing interest, stimulating curiosity, and maintaining attention (Keller, 1987).

Relevance in this context refers to a quality of furthering personal understanding and competence, linking instruction to learners’ interests, and tying it to learners’ experiences (Keller, 1987).

To help learners gain self-confidence, instructors need to consider their anxieties and provide instruction that fosters positive expectations for success and belief in competence based upon the learners’ efforts and abilities (Keller, 1987).

These factors help learners feel satisfied with their accomplishments, and include encouraging and supporting learners’ intrinsic enjoyment of the learning experience, providing rewards as incentives, and building learners’ perception that they are treated fairly (Keller, 1987).

Collaborative learning is learning through social interaction.

On this basis, we proceed to evaluate improvement in learners’ motivation when they use the proposed tools and compare motivation outcomes using objective measurements between groups of learners being taught in an online course based on an e-learning system with and without the support of the proposed tools, respectively.

To assess motivation, this study measured learner motivation towards an e-learning system using subjective (self-assessment) and objective (learners’ actions) measures. For the subjective assessment, a questionnaire was constructed to identify learner motivation and carefully designed and tested for validity and reliability. This instrument is based mainly on three questionnaires: Keller and Subhiyah’s Course Interest Survey (CIS) (1993), Keller’s Instructional Material Motivational Survey (IMMS, 1999), and Laffey, Lin, and Lin’s Social Presence Questionnaire (2006). The CIS and IMMS are designed in accordance with the ARCS model and are widely used to measure learner motivation with regard to a specific course. The former assesses learner motivation in relation to teacher-led instruction, and the latter, motivation in relation to instructional materials. The Social Presence Questionnaire, in contrast, covers social ability (the ability of the learner to situate his or her experience and perception of social interaction, use online social tools, and undertake activities) in online learning contexts.

The Online Course Motivation Survey developed for this study on the basis of these previous instruments consists of 45 items grouped into five motivation scales, namely attention, relevance, confidence, satisfaction, and social ability. These scales each contain three constructs, and each construct consists of three items. The following is a list of three constructs related to each scale:

Five experts who have worked extensively with the online learning environment, the ARCS model, and/or psychological measurement completed the content validation survey. Items with an item objective congruence (IOC) of < .75 were examined and revised to achieve acceptable IOC scores. The draft questionnaire was trialled in a pilot study in the first semester of 2010, and the questionnaire was subsequently refined. The refined questionnaire consists of 45 items.
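The content validation step can be illustrated with a short sketch. It assumes the commonly used simplified form of the IOC index, in which each expert rates an item as +1 (congruent), 0 (unsure), or -1 (not congruent) and the item's IOC is the mean rating; the source does not state the exact formula, and the function names and ratings below are hypothetical.

```python
def ioc(ratings):
    """Item-objective congruence for one item: the mean of expert ratings,
    where each rating is +1 (congruent), 0 (unsure), or -1 (not congruent).
    Assumption: simplified IOC form; the study's exact formula is not given."""
    if not ratings:
        raise ValueError("at least one expert rating is required")
    if any(r not in (-1, 0, 1) for r in ratings):
        raise ValueError("ratings must be -1, 0, or +1")
    return sum(ratings) / len(ratings)


def items_to_revise(item_ratings, threshold=0.75):
    """Return the ids of items whose IOC falls below the threshold."""
    return [item for item, ratings in item_ratings.items()
            if ioc(ratings) < threshold]


# Hypothetical ratings from five experts (not data from the study).
example = {
    "A1": [1, 1, 1, 1, 1],  # IOC = 1.0
    "A2": [1, 1, 1, 1, 0],  # IOC = 0.8
    "A3": [1, 1, 0, 0, 1],  # IOC = 0.6, below the .75 cut-off
}
print(items_to_revise(example))
```

Under this assumption, with five experts an item passes the .75 cut-off only if at most one expert withholds a +1 rating.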

Reliability is often estimated by Cronbach’s alpha. The alpha internal consistency values separately applied to the five scales were 0.81, 0.82, 0.77, 0.91, and 0.90 for attention, relevance, confidence, satisfaction, and social ability, respectively. These values were consistently > .75, showing good reliability.
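For readers unfamiliar with the statistic, Cronbach's alpha for a scale of k items is k/(k-1) multiplied by one minus the ratio of the summed item variances to the variance of respondents' total scores. A minimal sketch follows; the function name and the response matrix are illustrative assumptions, not the study's data or code.

```python
from statistics import variance


def cronbach_alpha(responses):
    """Cronbach's alpha for one scale.

    responses: list of respondents, each a list of k item scores
    (e.g. 1-5 Likert ratings). Uses sample variances throughout.
    """
    k = len(responses[0])
    if k < 2 or len(responses) < 2:
        raise ValueError("need at least two items and two respondents")
    # Variance of each item (column) across respondents.
    item_variances = [variance(column) for column in zip(*responses)]
    # Variance of each respondent's total score on the scale.
    total_variance = variance([sum(row) for row in responses])
    return k / (k - 1) * (1 - sum(item_variances) / total_variance)


# Hypothetical responses of four learners to a three-item construct.
demo = [
    [4, 5, 4],
    [2, 2, 3],
    [5, 5, 5],
    [3, 4, 3],
]
print(round(cronbach_alpha(demo), 3))
```

Applied separately to the items of each scale, a function like this would yield one alpha per scale, which is how the five values above are reported.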

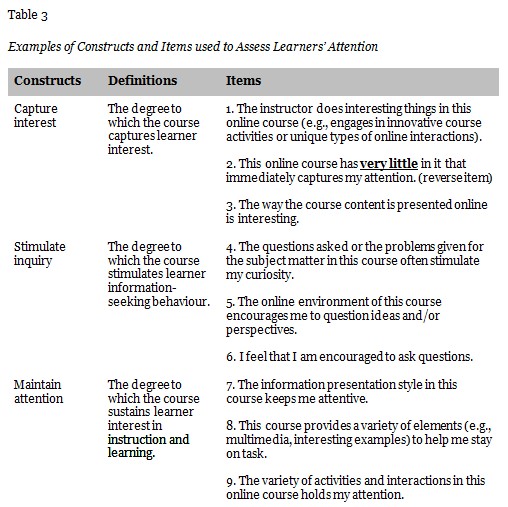

Table 3 lists examples of the constructs and of items used to assess learners’ motivation levels in response to the attention scale. A five-point Likert-type scale was used, with the choices (1) strongly disagree, (2) disagree, (3) neutral, (4) agree, and (5) strongly agree.

For objective assessment, learner motivation was captured by analysing learners’ actions when interacting with the LMS, based on logged information and without interrupting their activities. According to Russell, Ainley, and Frydenburg (2005), motivation is frequently inferred from learners’ engagement in learning activities. Under this assumption, engagement relates directly to behaviour and indicates a strong connection between person and activity, whereas disengagement indicates the lack of a connection. If the learner is motivated to perform the activity, he/she is likely to be objectively engaged. The term engagement in the present study is thus used to refer to learners’ focus on the learning activity. Fredricks, Blumenfeld, and Paris (2004) conclude that behavioural disengagement is often a precursor to dropping out. This is confirmed by Martinez (2003), who contends that “low-motivational” behaviour is usually associated with dropout. Thus, a higher proportion of highly disengaged (low-motivated) learners would lead to higher rates of dropout.

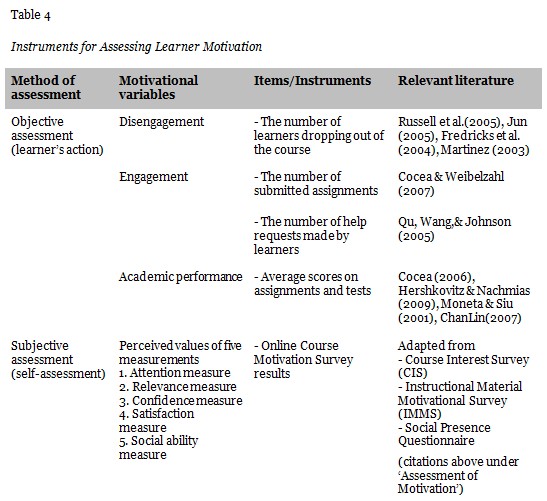

Moreover, the impact of motivation on the learner’s academic performance is also an important aspect of effective learning. Therefore, the objective measurement of motivation in this study used engagement, disengagement, and academic performance as the indicators of motivation. Table 4 summarises the assessment methods, motivational variables, and items or instruments used to assess learners’ motivation.

To evaluate the impact of the proposed tools, learners’ motivation was compared between regular and mobile-added modes of online learning using objective and subjective measurements in three periods along the course timeframe: before, during, and after implementing the tools. Chi-square contingency analysis revealed no difference in the demographic makeup of the treatment and control groups by gender, year of study, computer skill, the number of prior e-learning courses, and place of accessing the course.

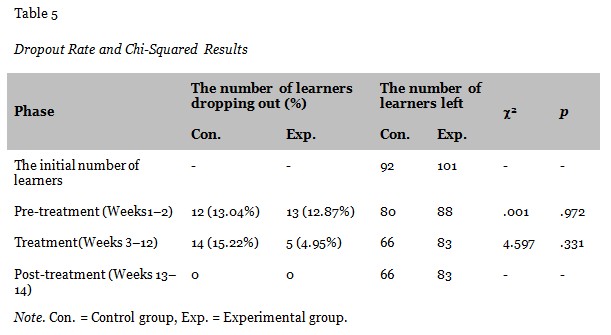

The number of learners dropping out of the courses (with dropout rates in parentheses) was analysed for group comparison using the Pearson chi-squared test. The dropout rate during the treatment period in the control group (15.22%) was higher than that in the experimental group (4.95%). However, the difference was not significant in any period. Results from the analysis in each period are presented in Table 5. (There was no learner dropout during the post-treatment period.)
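The dropout comparison can be sketched as a Pearson chi-squared test on a 2 × 2 table of group by outcome. The sketch below assumes no continuity correction (the source does not say whether one was applied), uses the identity that the upper-tail probability of a chi-squared variable with one degree of freedom equals erfc(√(χ²/2)), and runs on made-up counts rather than the values in Table 5.

```python
import math


def chi_square_2x2(table):
    """Pearson chi-squared test for a 2x2 table, without continuity correction.

    table: ((a, b), (c, d)), e.g. rows = groups,
    columns = (dropped out, stayed).
    Returns (chi2, p) with one degree of freedom.
    """
    (a, b), (c, d) = table
    n = a + b + c + d
    row_totals = (a + b, c + d)
    col_totals = (a + c, b + d)
    chi2 = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            expected = row_totals[i] * col_totals[j] / n
            chi2 += (observed - expected) ** 2 / expected
    # Upper-tail probability of chi-squared with df = 1.
    p = math.erfc(math.sqrt(chi2 / 2))
    return chi2, p


# Hypothetical counts (NOT the study's data): 10 of 100 vs. 6 of 100 dropouts.
chi2, p = chi_square_2x2(((10, 90), (6, 94)))
print(f"chi2 = {chi2:.3f}, p = {p:.3f}")
```

A difference is conventionally called significant when p falls below .05; with small expected cell counts, an exact test may be more appropriate than this approximation.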

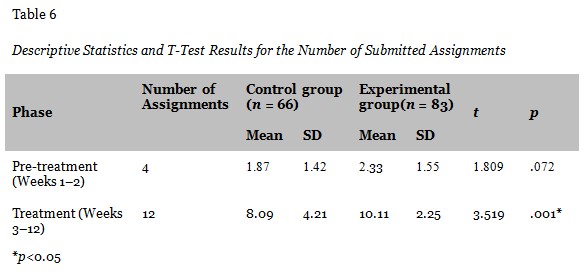

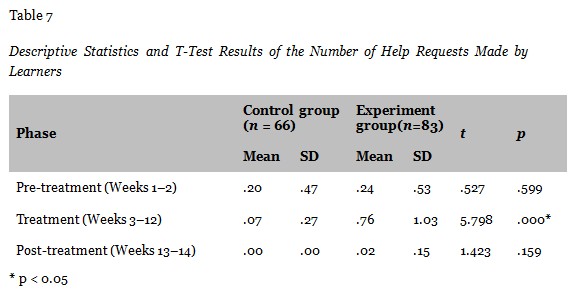

The numbers of submitted assignments and help requests made by learners, according to system records, were counted and compared. It can be observed from Table 6 that for the number of submitted assignments, the means of the experimental group were higher than those of the control group; furthermore, t-test results show a significant difference between the means of the two groups during the treatment period. Similarly, for the number of help requests made by learners, as shown in Table 7, the means of the experimental group were significantly greater than those of the control group during the treatment period. This shows that there were statistically significant differences in learners’ engagement between the control and experimental groups during the treatment portion of the online course.
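The group comparisons of engagement counts rely on an independent-samples t-test. The source does not say whether the pooled-variance (Student) or Welch form was used; the sketch below assumes the pooled form, returns only the t statistic and degrees of freedom (the p value would then be read from a t distribution), and uses invented counts.

```python
import math
from statistics import mean, variance


def pooled_t(sample_a, sample_b):
    """Student's independent-samples t statistic with pooled variance.

    Returns (t, df). Assumes both groups have similar variances.
    """
    n_a, n_b = len(sample_a), len(sample_b)
    if n_a < 2 or n_b < 2:
        raise ValueError("each group needs at least two observations")
    df = n_a + n_b - 2
    # Weighted average of the two sample variances.
    pooled_var = ((n_a - 1) * variance(sample_a)
                  + (n_b - 1) * variance(sample_b)) / df
    standard_error = math.sqrt(pooled_var * (1 / n_a + 1 / n_b))
    t = (mean(sample_a) - mean(sample_b)) / standard_error
    return t, df


# Hypothetical numbers of submitted assignments per learner (not study data).
experimental = [9, 10, 8, 10, 9, 10]
control = [7, 8, 9, 6, 8, 7]
t, df = pooled_t(experimental, control)
print(f"t({df}) = {t:.3f}")
```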

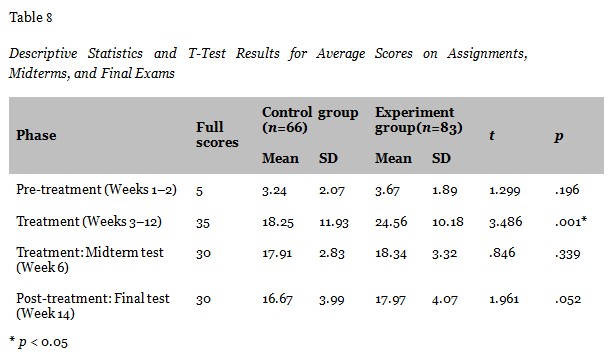

To gauge academic performance, learners’ scores on tests and assignments were evaluated. During pre-treatment and treatment periods, learners were asked to submit assignments weekly, and their average scores on these assignments were evaluated, whereas the average scores on midterm and final tests were evaluated during treatment and post-treatment, respectively. The learners in the experimental group achieved better learning scores with the proposed tools (see Table 8); however, they had a significantly higher mean score on assignments than learners in the control group only during the treatment period (t = 3.486, p < 0.05). In contrast, the average scores on the midterm and final tests, assessed during treatment and post-treatment respectively, were not significantly different between the two groups.

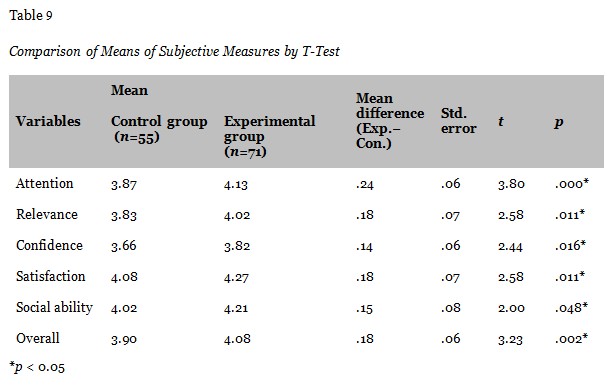

A one-way MANOVA was performed to determine whether there are any differences in motivation between students in the online course with and without the proposed tools on the five dependent measures (attention, relevance, confidence, satisfaction, and social ability). Significant main effects were found for the use of the proposed tools, Wilks’ λ = .89, F(5, 120) = 2.90, p = .016, η² = 0.108. A mean comparison test between the two groups was conducted on each variable and overall due to these significant MANOVA effects. Table 9 presents the mean values and the results of the pairwise comparison (t-test) performed on the two groups obtained from the Online Course Motivation Survey.
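The reported MANOVA statistics can be cross-checked against one another. For a two-group design, Wilks' λ converts exactly to F = ((1 − λ)/λ) · (N − p − 1)/p with (p, N − p − 1) degrees of freedom, and the multivariate effect size is 1 − λ. The sketch below assumes this two-group transformation and a total of N = 126 respondents, a figure inferred from the reported error degrees of freedom rather than stated in the text; λ = .892 is likewise inferred from the reported effect size of 0.108.

```python
def wilks_two_group(wilks_lambda, n_dependent, n_total):
    """Convert Wilks' lambda to an exact F for a two-group one-way MANOVA.

    Returns (F, df1, df2, eta_squared), where eta_squared = 1 - lambda.
    """
    if not 0 < wilks_lambda <= 1:
        raise ValueError("Wilks' lambda must lie in (0, 1]")
    df1 = n_dependent
    df2 = n_total - n_dependent - 1
    f_value = (1 - wilks_lambda) / wilks_lambda * df2 / df1
    eta_squared = 1 - wilks_lambda
    return f_value, df1, df2, eta_squared


# lambda = .892 and N = 126 are inferred values (assumptions), see lead-in.
f_value, df1, df2, eta_sq = wilks_two_group(0.892, n_dependent=5, n_total=126)
print(f"F({df1}, {df2}) = {f_value:.2f}, eta squared = {eta_sq:.3f}")
```

Under these assumptions the computed F is about 2.91 on (5, 120) degrees of freedom, in line with the reported F(5, 120) = 2.90 once rounding of λ is taken into account.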

The results show a significant mean difference between learners in the control and experimental groups: attention (p < .001), relevance (p = .011), confidence (p = .016), satisfaction (p = .011), social ability (p = .048), and the overall measure of motivation (p = .002). The means for all motivation measures of learners in the experimental group, with the support of mobile tools, were higher than those in the control group. A pairwise comparison test also revealed that the experimental group’s gain scores in all motivational variables were significantly higher than those of the control group. The attention variable shows the biggest difference in means between the two groups.

The findings confirm that the proposed mobile communication tools are consistent with ARCS and collaboration factors and can be used in existing e-learning systems to enhance the learner’s motivation in the online learning environment. These findings are based on both objective data on learners’ actions as recorded during the experiment and subjective (questionnaire) data assessing learner preferences and opinion. The subjective measurement technique provided significant insights not available by means of the objective method; however, attitudinal measures may be distorted by biasing factors such as the halo effect and acquiescence (Rubinstein & Hersh, 1984, as cited in Cushman & Rosenberg, 1991). Consequently, this study emphasises the need for both objective and subjective assessments so that they can complement and reinforce each other.

In the objective assessment, the results for the disengagement variable showed that learners’ dropout rate in the experimental group was lower than that in the control group; however, this difference was not statistically significant. There was a high dropout rate during the pre-treatment period, in which the university allowed students to withdraw from the course without penalty. For the engagement variable, there was a significant difference between the two groups in all measures, but this was found only during the treatment period. These results indicate that using the proposed tools can help learners to increase engagement and enhance learning.

Academic performance on assignments of learners in the experimental group was significantly higher than that in the control group during the treatment period but not the post-treatment period (that is, in the class assignments but not the midterm and final exam). There are several feasible explanations of why the post-treatment results showed no significant differences. As Amabile, Hill, Hennessey, and Tighe (1994) discovered, trait-intrinsic motivation (motivation to do something because it is enjoyable) correlates positively with course performance, while trait-extrinsic motivation (to do something due to the external reward) has no effect. Further, Moneta and Siu (2001) found that the effects of both intrinsic and extrinsic motivation on course performance can be changed by the educational or institutional environment. They also claimed that there are four main reasons why learners’ academic performance may not correlate with their motivation: course interestingness-complexity (course not sufficiently challenging), heuristic value of assignments (assignments require execution of step-by-step procedures and do not allow sufficient exploration or creative approaches), completeness-validity of assessments (assessments are not validly recognised), and shortcuts-to-grades (shortcuts to good grades allowing surface learning only instead of a deeper understanding).

Pooling these findings together with the results of this study suggests that using academic performance to measure motivation should involve formative assessment, intertwined with teaching, as well as summative assessment and that this should happen at the end of appropriate course subunits throughout the semester. Furthermore, it will be necessary to measure learners’ prior knowledge and skills in order to find out what they know coming in so that the advantages in academic performance they have gained during the online course can be more reliably identified.

In terms of subjective assessment, the Online Course Motivation Survey was carried out to assess learners’ perceived levels of motivation with regard to online courses. The results revealed that the means of all motivation measures (attention, relevance, confidence, satisfaction, and social ability) in learners who used the proposed tools as part of the online course were higher than in those who did not. There was also a statistically significant difference in means between the two groups across all motivation measures. The attention measure showed the largest difference. The tools used for intervention in the attention factor consisted of SMS (asking questions, course notifications, and announcements) and RSS (forums and news updates); the results indicate that these tools seem to be more effective than the tools proposed for the other factors.

The technologies used in this study, namely Java ME and SMS, may be regarded as rather dated by newer-generation developers, who prefer the Android or iOS platforms and social network service-based mobile applications, respectively. However, these technologies serve the purpose of this study. Java ME was the most popular technology that participants’ mobile phones could support during the experiment period, and SMS was the only one of the proposed tools that could reach 100% of participants. The practical implications of these findings should be helpful for instructors concerned with how to encourage more communication among their students and between the students and the instructor so as to enhance their motivation. Learners can use the proposed tools on their own mobile phones to interact both synchronously and asynchronously. Instructors can send SMSs on a system integrated with existing e-learning systems. Instructors using the tools proposed here should know what particular outcomes, feedback, course information, notifications, and announcements are essential to their specific group of online learners, as different people have different needs. It is also important that everyone be seen to be treated equitably and fairly. Moreover, individual learners must have control over their learning process and be able to control the amount of effort they expend and the way in which they learn; otherwise, motivation will be difficult to maintain.

This study examines the potential of several mobile communication tools (SMS, mobile RSS feeds, assignment feedback tool, gradebook tool, attendance reporting tool, MIM, mobile blogging, and mobile polls and votes) to stimulate and enhance the learner’s motivation in existing e-learning settings. The quasi-experimental study was designed to compare learners’ motivation in online courses with and without the support of mobile communication tools. Both subjective and objective assessments were carried out in order to evaluate the role of the proposed tools in enhancing learner motivation. Disengagement, engagement, and academic performance were subjected to objective assessment. The Online Course Motivation Survey, developed for this study to assess learners’ subjective motivation levels, consists of five measures: attention, relevance, confidence, satisfaction, and social ability. This survey is robust in terms of content validity and reliability, providing clear and substantiated insight into the causal relationship between the online course approach and the learner’s motivation.

The study revealed significant differences in motivation between the control and experimental groups on the objective assessment items of engagement (the number of submitted assignments and help requests made by learners) and average scores on assignments, and on the subjective assessment item, ARCS-Social ability (perceived value of all motivation measurements). However, there were no significant differences for disengagement (the number of learners dropping out) or for average scores on midterm and final tests. Nevertheless, learners who had the support of the tools in their online courses were less likely to drop out, and they gained more on their test scores (midterm and final) than the control group. The tools can have a favourable impact on learners’ engagement, level of interaction, and completion rate, and improve learning efficiency in the online environment. As the results of the study show a significant effect of m-learning on online learners’ motivation, the proposed mobile communication tools are shown to be a valuable extension of online learning for the improvement of motivation.

In future research, other forms of objective assessment may be useful to assess online learners’ motivation, such as the number of page views and posts for each task, the number of votes made and blog posts produced, or average session duration and time between sessions. In addition, future work should further investigate the impact of online learning incorporating the proposed mobile tools on learning outcomes, using a range of methods, participants, and courses. Another area for future research concerns the ways in which learners use Web 2.0 technologies such as podcasts, audio discussion boards, and social networks (e.g., Facebook and Twitter) as communication tools in tandem with online learning and how these tools can be used to increase interaction among learners and instructors in order to improve motivation and learning outcomes.

Amabile, T. M., Hill, K. G., Hennessey, B. A., & Tighe, E. M. (1994). The work preference inventory: Assessing intrinsic and extrinsic motivational orientations. Journal of Personality and Social Psychology, 66(5), 950-967. doi: 10.1037/0022-3514.66.5.950

Andrews, T., Smyth, R., & Caladine, R. (2010, February). Utilizing students’ own mobile devices and rich media: Two case studies from the health sciences. Paper presented at the Second International Conference on Mobile, Hybrid and On-Line Learning, 2010. ELML ‘10. Second International Conference (pp. 71–76). doi: 10.1109/eLmL.2010.15

Chaiprasurt, C., Esichaikul, V., & Wishart, J. (2011). Designing mobile communication tools: A framework to enhance motivation in an online learning environment. In Proceedings of the 10th World Conference on Mobile and Contextual Learning, Beijing (pp. 112–120).

Tung-Hsiang, C., & Che-Wei, F. (24-26 April 2012). Using LBS to construct an e-learning environment. Paper presented at the Computing Technology and Information Management (ICCM), 2012, 8th International Conference (Vol. 1, pp. 395–399).

Balaban-Sali, J. (2008). Designing motivational learning systems in distance education. Turkish Online Journal of Distance Education, 9(3), 149-161.

Bae, Y. K., Lim, J. S., & Lee, T. W. (2005). Mobile learning system using the ARCS strategies. In Advanced Learning Technologies, 2005, ICALT 2005, Fifth IEEE International Conference (pp. 600-602). IEEE.

ChanLin, L. (2007). Motivation assessment for a web-based computer ergonomics course. In C. Montgomerie & J. Seale (Eds.), Proceedings of World Conference on Educational Multimedia, Hypermedia and Telecommunications 2007 (pp. 633-638). Chesapeake, VA: AACE.

Cocea, M. (2006). Assessment of motivation in online learning environments. In V. Wade, V. Ashman & B. Smyth (Eds.), Proceedings of Adaptive Hypermedia and Adaptive Web-Based Systems 2006 (pp. 414-418). Berlin & Heidelberg: Springer.

Cocea, M., & Weibelzahl, S. (2007). Cross-system validation of engagement prediction from log files. In E. Duval, R. Klamma & M. Wolpers (Eds.), Creating new learning experiences on a global scale (pp. 14-25). Second European Conference on Technology Enhanced Learning, EC-TEL 2007. Heidelberg: Springer.

Cushman, W. H., & Rosenberg, D. J. (1991). Human factors in product design. Advances in human factors/ergonomics (Vol. 14). New York: Elsevier Science.

Fredricks, J., Blumenfeld, P., & Paris, A. (2004). School engagement: Potential of the concept, state of the evidence. Review of Educational Research, 74(1), 59-109. doi: 10.3102/00346543074001059

Garrido, P. C., Miraz, G. M., Ruiz, I. L., & Gomez-Nieto, M. A. (2011, February). Use of NFC-based pervasive games for encouraging learning and student motivation. Paper presented at the 3rd International Workshop on Near Field Communication (NFC 2011) (pp. 32-37). doi: 10.1109/NFC.2011.13

Hershkovitz, A., & Nachmias, R. (2009). Learning about online learning processes and students’ motivation through web usage mining. Interdisciplinary Journal of e-Learning and Learning Objects, 5, 197-214.

Hirumi, A. (2002). The design and sequencing of e-learning interactions: A grounded approach. International Journal of E-Learning, 1, 19-27.

Jones, A., Issroff, K., Scanlon, E., Clough, G., & McAndrew, P. (2006, July). Using mobile devices for learning in informal settings: Is it motivating? In Proceedings of IADIS International Conference Mobile Learning Dublin, IADIS Press, Barcelona, Spain (pp. 251-255). Halsted Press.

Jun, J. (2005). Understanding dropout of adult learners in e-learning (Unpublished doctoral dissertation). University of Georgia, Athens, GA.

Keller, J. M. (1987). Development and use of the ARCS model of instructional design. Journal of Instructional Development, 10(3), 2-10.

Keller, J. M. (1999). Motivation in cyber learning environments. International Journal of Educational Technology, 1(1), 7-30.

Keller, J. M., & Subhiyah, R. (1993). Course interest survey. Tallahassee, FL: Instructional Systems Program, Florida State University.

Laffey, J., Lin, G., & Lin, Y. (2006). Assessing social ability in online learning environments. Journal of Interactive Learning Research, 17(2), 163-177.

Martinez, M. (2003). High attrition rates in e-learning: Challenges, predictors, and solutions. The E-Learning Developers Journal, 17(11).

Miyake, N. (2007). Computer supported collaborative learning. In R. Andrews & C. Haythornthwaite (Eds.), Handbook of e-learning research (pp. 263-280). London: Sage.

Mockus, L., Dawson, H., Edel-Malizia, S., Shaffer, D., An, J., & Swaggerty, A. (2011, November). The impact of mobile access on motivation: Distance education student perceptions. Paper presented at the 17th Annual Sloan-C Consortium International Conference on Online Learning, Lake Buena Vista, FL. Available at http://wc.psu.edu/sloan/

Moneta, G. B., & Siu, M. Y. (2001). Intrinsic motivation, academic performance, and creativity in Hong Kong college students. Journal of College Student Development, 43, 664-683.

Olasina, G. (2012). Student’s e-learning/m-learning experiences and impact on motivation in Nigeria. Retrieved from http://docs.lib.purdue.edu/iatul/2012/papers/31/

Qu, L., Wang, N., & Johnson, W. L. (2005). Using learner focus of attention to detect learner motivation factors. In User Modeling (Lecture Notes in Computer Science, Vol. 3538, pp. 70-73). Berlin & Heidelberg: Springer. doi: 10.1007/11527886_10

Rau, P. L. P., Gao, Q., & Wu, L. M. (2008). Using mobile communication technology in high school education: Motivation, pressure, and learning performance. Computers & Education, 50(1), 1-22. doi: 10.1016/j.compedu.2006.03.008

Russell, V. J., Ainley, M., & Frydenberg, E. (2005). Schooling issues digest: Student motivation and engagement. Retrieved from http://www.dest.gov.au/sectors/school_education/publications_resources/schooling_issues_digest/schooling_issues_digest_motivation_engagement.htm

Sharan, S., & Shaulov, A. (1990). Cooperative learning, motivation to learn, and academic achievement. In S. Sharan (Ed.), Cooperative learning: Theory and research (pp. 173-202). New York: Praeger.

Shih, Y., & Mills, D. (2007). Setting the new standard with mobile computing in online learning. The International Review of Research in Open and Distance Learning, 8(2), 1-15.

Slavin, R. E. (1995). Cooperative learning: Theory, research, and practice (2nd ed.). Boston: Allyn & Bacon.

Ufi & Kineo (2007). Mobile learning reviewed. Retrieved from http://www.kineo.com/documents/Mobile_learning_reviewed_final.pdf