Tekeisha Denise Zimmerman

The University of North Texas, United States

Interaction plays a critical role in the learning process. For online course participants, interaction with the course content (learner-content interaction) is especially important because it can contribute to successful learning outcomes and course completion. This study examines the relationship between learner-content interaction and course grade to determine whether this interaction type is a contributing success factor. Data related to student interaction with course content, including time spent reviewing online course materials (such as module PowerPoint presentations and course videos) and time spent completing weekly quizzes, were collected for students in three sections of an online course (N = 139). The data were then correlated against grades achieved in the course to determine whether there was any relationship. Findings indicate a statistically significant relationship between the amount of time the learner spent completing weekly quizzes and weekly quiz grades (r = -.72). The study concludes that learners who spent more time interacting with course content achieved higher grades than those who spent less time with the content.

Keywords: Interaction; online course; success; grades

As the number of online course offerings in higher education institutions continues to grow, research continues to try to determine educational success factors for learners participating in these courses. Interaction has been identified as an important factor affecting educational success in online courses (Tsui & Ki, 1996; Beaudoin, 2002). Prior to 1989, dimensions of interaction in online courses had yet to be defined. In his editorial in The American Journal of Distance Education, Moore (1989) closed this gap by identifying a three-dimensional construct that characterized interaction as either learner to content, learner to instructor, or learner to learner. Moore’s framework has been widely accepted in the literature and has sparked extensive studies and empirical research on the learner-instructor (Dennen, Darabi, & Smith, 2007; Garrison, 2005; Garrison & Cleveland-Innes, 2005; Grandzol & Grandzol, 2010) and learner-learner (Bain, 2006; Burnett, 2007) dimensions of interaction, but learner-content interaction and its impact on course success have not been a focus of the research. Part of the reason for this lack of attention may be that content is such a broad term and that content interaction can vary widely depending on course structure, design, and format. Further, although course management systems (CMS) can track the amount of time a student spends online with the course open, they cannot tell us whether this time is truly spent reviewing course materials.

Although these challenges exist, researchers continue to discuss the importance of understanding learner-content interaction. Vrasidas (2000) states that learner-content interaction is “the fundamental form of interaction on which all education is based” (p. 2). Tuovinen (2000) calls learner-content interaction the most critical form of interaction because it is here that student learning takes place. To date, very few empirical studies have attempted to examine the role that learner-content interaction plays in course success outcomes. Because a learner’s interaction with course content is so important in education, the body of research is incomplete without a deeper exploration of its impact on course success. The academic and practitioner communities need rigorous studies that examine learner-content interaction.

To address these gaps in the literature, this study examined learner-content interaction as a contributing success factor for students in an online course. Using Moore’s (1989) theory of interaction as a framework, this study contributes to our understanding of interaction by analyzing learner-content interaction through the dimensions of timing and quantity specifically for online courses. To support this purpose, three steps are presented. The first step is to review the recent online learning and interaction studies that shaped various interaction definitions and points of view. Next, two hypotheses that guided this research are explored empirically and an analysis of the results is discussed. Finally, implications for instructors and online course designers are provided.

The purpose of this study was to determine whether there was a relationship between learner-content interaction and course grades. In many courses, grades are the tangible evidence of the quality and quantity of work completed. Therefore, grades were used as the tangible measurement of the course outcome in each part of the study. The study looked at the amount of time each student spent completing the quizzes and the total amount of time each student spent reviewing the content in the course, and correlated this information against grades achieved. It is hypothesized that more time spent up front with the content will decrease the amount of time needed to complete quizzes because students will be familiar with the information. Therefore:

H1: The amount of time a student spends completing course quizzes will negatively correlate with the grade achieved on the quiz;

H2: Students who spend larger amounts of time with the overall course content (quantity of discussion postings read, total number of files read, and the total amount of time spent in the CMS reviewing overall content) will achieve higher final course grades than those who spend less time with the content.

According to the Sloan Consortium (2011), of the 19.7 million students enrolled in college overall, 5.6 million (28.4%) college students in the continental United States reported taking at least one online course in 2010. This represented a 20% increase over the 2009 numbers. Online courses offer more flexibility, thus allowing for increased enrollment by the nontraditional college student. Some institutions offer degree programs where students never have to step foot on a traditional campus. With these types of course and degree program offerings on the rise, it is no surprise that the literature is saturated with research related to online learning.

Early studies sought to explore the legitimacy of online course learning by examining differences in learning outcomes for students taking online versus traditional courses (Hannay & Newvine, 2006; Mullen & Tallent-Runnels, 2006; Salter, 2003). Mullen and Tallent-Runnels (2006) used interviews to examine differences between online and traditional courses by focusing on instructor support, student motivation, and self-regulation, while Salter (2003) used an integrative literature review approach to study the same topic. Both studies suggested a slight difference in the formats themselves, but could not definitively state that one format or the other led to better learning outcomes. Other studies provided evidence suggesting that student achievement and perceived skill development were higher in online teaching formats (Hacker & Sova, 1998; Shneiderman, Borkowski, Alavi, & Norman, 1998), while opposing studies suggested that no significant differences existed (Jones, 1999; Navarro & Shoemaker, 1999; Schulman & Sims, 1999). Here again, more successful learning outcomes could not be attributed to either format. For example, Hacker and Sova (1998) tested 43 students to determine whether the efficacy of computer-mediated courses was significantly different from that of traditional university delivery methods. Twenty-two of the students were taught in a traditional lecture course and 21 were taught via the Internet in an online course. Results of this study showed that achievement gains were 15% higher for students in the online course than for those participating in the traditional lecture course. Conversely, Navarro and Shoemaker (1999), in a similar study using 63 students (31 traditional, 32 online), found no statistical difference in course achievement between students taking the same course online and those taking it in the traditional setting. In both studies, GPAs and GMAT scores were similar for the online and traditional students. Though the abovementioned studies suffered from small sample sizes, thus limiting the generalizability of the results, a key takeaway was that online courses could not be dismissed as a viable form of education in the higher learning arena.

In more recent research, there is agreement that no noticeable differences exist in learning outcomes for students who completed traditional versus online courses (Liu, 2008). However, a common thread found among all research streams discussed is that many of the conclusions drawn about the outcomes hinged on the amount and types of interaction that led to learning success for students taking online courses. This research provides a strong foundation for viewing all kinds of interaction as a factor affecting potential course outcomes, especially in online course formats.

Interaction in general has been discussed as central to the educational experience and a primary focus in the study of learning outcomes in online classes (Garrison & Cleveland-Innes, 2005). Prior to 1989, dimensions of interaction in educational courses had yet to be defined. Moore (1989) closed this gap by identifying a three-dimensional framework that characterized interaction as either learner-content, learner-instructor, or learner-learner. Learner-instructor interaction is communication between students and the instructor in a course, while learner-learner interaction is communication between the learner and peers in the same course. For online courses, this interaction can take place using both synchronous (video-conferencing, online chat sessions) and asynchronous (e-mail, discussion boards) methods (Kearsley, 1995). In its most basic definition, learner-content interaction refers to the time spent with course content including textbooks, PowerPoint, web pages, and discussion forums (Su, Bonk, Magjuka, Liu, & Lee, 2005).

Though Moore’s (1989) theory of interaction can be applied to any educational format, more recent research using the framework has been related to online courses. This is an obvious direction because of the growing trend of online course offerings in higher education. As the learning forum moved from face-to-face classes to online courses, the completeness of the three-dimensional construct as the most comprehensive way to view interaction began to come into question (Anderson, 1998; Hillman, Willis, & Gunawardena, 1994; Soo & Bonk, 1998; Tuovinen, 2000). To address these concerns, scholars began to revisit the original theory, and additional dimensions of interaction were introduced. Hillman, Willis, and Gunawardena (1994) introduced learner-interface interaction as an additional dimension to the construct. Learner-interface interaction suggests that for online courses, the learner has to interact with some form of technology medium as part of the course requirements. This interaction is crucial to the online experience because it enhances cognition and is the interaction that makes online learning possible (Tuovinen, 2000). Learner-interface interaction has emerged as a fourth dimension of the interaction construct and has been explored theoretically and empirically in the literature (Dunlap, Sobel, & Sands, 2007; Jung & Choi, 2002; Rhode, 2009). From a theoretical perspective, Dunlap, Sobel, and Sands (2007) built a conceptual taxonomy of student-to-content interface interaction strategies. In it, they compared the cognitive interactions between the student and the technology to the cognitive dimensions of Bloom’s taxonomy. Jung and Choi (2002) empirically tested learner-interface interaction with 124 participants in an online course. The study investigated the effect that learning in a web-based training (WBT) environment had on the students’ satisfaction, participation, and attitude towards online learning. It was concluded that “regardless of the type of interaction, WBT experiences resulted in a more positive view of online learning” (Jung & Choi, 2002, p. 160). In both studies, the authors asserted that learner-interface interaction would help involve students in deep and meaningful learner-content interactions in online courses. At the core of these studies are the structure and design of the course. These factors take on increased importance when eye contact and direct conversation are removed from the learning process.

Because interaction with an interface can take on many complex forms, subdimensions exist within learner-interface interaction. Anderson (1998) introduced teacher-content, content-content, and teacher-teacher interaction as ways of examining challenges that instructors have with course technology. Teacher-content examines the structure and flexibility of the course. Unlike learner-content, this looks at how teachers connect with each other and use this connection to enhance their comfort in interacting with the course. This element also explores the role that professional development plays in the teaching of online classes. Anderson mentioned teacher-teacher interaction as a way of further enhancing the comfort level and recommended that teachers attend virtual conferences and use other World Wide Web options to develop their comfort level with and knowledge of technology. Finally, content-content interaction is used to discuss the ways in which the course can be structured to have the CMS deliver the various types of content (PowerPoint, wikis, etc.) to students in the course.

Soo and Bonk (1998) also suggested learner-self interaction as an additional dimension. Learner-self interaction examines the learner’s reaction to the content and asserts that their reflections and inner-dialogue (called “self-talk”) are related to the learning process. Their study sought to clarify the interaction types that were essential to online learning and rank them in order of significance. Learner-self interaction is often treated as part of the learner-content dimension.

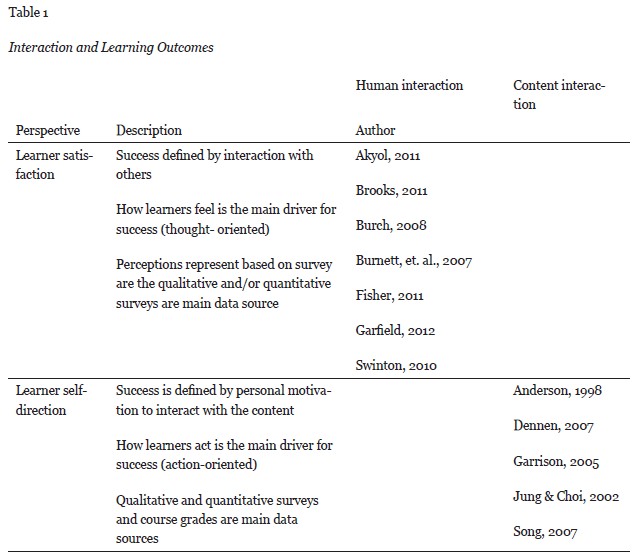

While there is general agreement in the literature on the validity of Moore’s interaction framework, scholars have presented different perspectives on how interaction relates to the success of learning outcomes. The literature revealed two main streams of thought related to how learning outcomes were measured in online courses. In the first stream, scholars have placed more emphasis on the human interaction components and measured success in terms of learner satisfaction (Akyol & Garrison, 2011; Rhode, 2009). Learner satisfaction is closely related to learner perceptions and deals with cognitive viewpoints. It is deduced from the literature that this is a critical component because the way a learner processes the information in a course can be an outcome of the amount of learning they feel has taken place. Success in the course is measured by satisfaction with others and how much knowledge learners felt they took away from the course. From an alternate viewpoint, others have focused on the course structure and measured success in terms of the level of self-direction the learner takes in interacting holistically with the course content (Anderson, 1998; Song, 2007). This lens focuses more on the way the learner completes the assignments and is tied more to their personal, intrinsic motivation to learn. With learner self-direction, cognition is still a factor, but the drivers for success are viewed as outcomes of interaction with the content. Success is measured in terms of the learner’s motivation to interact and tangible outcomes such as course grades. These differing perspectives, along with the names of authors related to the research, are summarized in Table 1.

Though many factors can influence the way students perform in a course, interaction in some form has been shown to affect their feelings and thoughts on what has been learned. It is clear from the research that learner-content interaction is an important factor in successful learning outcomes for online courses. Despite the thoroughness of the studies discussed, it remains a fact that limited research exists to measure course success in terms of learner-content interaction. The literature has led to a proposed definition of content interaction, which is one major factor towards more research in this area. Additionally, the need for such studies has been put forth as necessary to fully understand the impact that learner-content interaction has on this stream of research.

This study was carried out at a large higher education institution in the Southwestern United States. Students were enrolled in one of three sections of the same management course. All sections were taught during the same term using the same format and materials and by the same instructor. A total of 185 students were originally enrolled across the three sections, but after the add-drop period, only 139 remained (N = 139). The course was taught asynchronously using the Blackboard CMS, and students relied completely on materials posted online to complete the requirements. Students were required to complete both a discussion assignment and a five-question quiz each week. No make-ups were allowed for either. Students had a total of seven days between assignments and were allowed to complete them at any point during the seven-day period. The content was made available to all students at the same time. There was no direct interaction between the author and the students during the term. All data were collected from the grade book or the statistical reports sections of the CMS. There were no synchronous meetings held during the semester.

Weekly quizzes were timed and learners had to complete the assessments during the allotted time to receive a grade. Students had the option to complete them using an open-book format. There were no mandatory requirements given to the students about the number of discussion postings they either needed to post or review, nor was any direction given by the instructor on the amount of time they should spend reviewing the PowerPoints and other course material provided each week.

Data on the quizzes were pulled and recorded from the CMS each week. These data included the amount of time spent completing the quiz and the grade achieved on the quiz. Since the quizzes were timed, there is confidence that the time recorded for each student on a quiz was actually dedicated to this activity. At the end of the course, the total amount of time spent reviewing content (i.e., PowerPoints and course videos), the number of discussion postings reviewed by each student, the cumulative time spent completing quizzes, and the final grade were also recorded. The CMS used in this study automatically recorded the amount of time spent reviewing any of the content tied to the course upon access. For example, when a student opened one of the course videos, the CMS began recording time. The system stopped recording when the student closed the video and calculated the total time for that session. Data analysis was performed using the Statistical Package for the Social Sciences for Windows (SPSS ver. 20.0). Frequencies, descriptive statistics, and histograms were run to examine the distribution of the data. Visual inspection revealed no problems with normality, and there were no outliers. Data were analyzed using correlation and multiple regression analysis. The level of significance used for the analysis was .05.
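The session-based timing described above can be illustrated with a minimal sketch. It assumes a hypothetical export of access-log rows (student ID, time opened, time closed); the row layout, the timestamp format, and the function name `total_minutes` are illustrative assumptions and do not reflect the actual Blackboard report format or the study's data.

```python
from collections import defaultdict
from datetime import datetime

# Hypothetical access-log rows: (student_id, opened_at, closed_at).
# These values are made up for illustration; they are not study data.
sessions = [
    ("s01", "2012-02-01 09:00", "2012-02-01 09:25"),
    ("s01", "2012-02-03 20:10", "2012-02-03 20:40"),
    ("s02", "2012-02-02 14:00", "2012-02-02 14:12"),
]


def total_minutes(rows, fmt="%Y-%m-%d %H:%M"):
    """Sum the length of every open-to-close session, per student, in minutes."""
    totals = defaultdict(float)
    for student_id, opened_at, closed_at in rows:
        start = datetime.strptime(opened_at, fmt)
        end = datetime.strptime(closed_at, fmt)
        totals[student_id] += (end - start).total_seconds() / 60
    return dict(totals)


minutes_by_student = total_minutes(sessions)
print(minutes_by_student)
```

A total computed this way only measures how long the content was open, which is the same caveat raised in the limitations: it cannot show whether the student was actively reviewing the material during the session.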

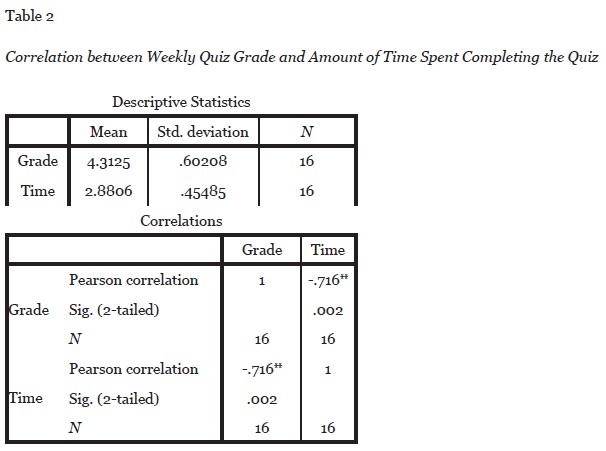

Data were recorded each week on the amount of time a student spent completing the weekly quiz and the grade received. These data were considered learner-content interaction for two reasons. First, the students were allowed to use an online textbook, PowerPoints, and videos when completing the quizzes, and, second, the quiz questions were derived directly from the online textbook, PowerPoints, and videos. For these reasons, it can be deduced that the amount of time the students spent reviewing the course content both before and during the quiz contributes to learner-content interaction and the resulting outcomes. The correlation between the grade received on the quiz and the amount of time spent completing the quiz was r = -.716. This is statistically significant and means that the more time a student spent on the quiz, the lower the grade received. Grades achieved were higher for those students who completed the quiz in less time. The data are shown in Table 2.
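For readers who want to reproduce this kind of analysis outside SPSS, the sketch below computes a Pearson product-moment correlation between quiz completion time and quiz score. The study itself used SPSS; the function `pearson_r` and the two small lists are hypothetical illustrations, not the study's data, and the sketch omits the significance test.

```python
from math import sqrt


def pearson_r(xs, ys):
    """Pearson product-moment correlation of two equal-length sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two equal-length sequences with at least 2 values")
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Sum of cross-products and sums of squares of deviations from the means.
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    ss_x = sum((x - mean_x) ** 2 for x in xs)
    ss_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(ss_x * ss_y)


# Hypothetical values for illustration only (minutes on quiz, score out of 5).
quiz_minutes = [4.0, 6.5, 8.0, 10.0, 12.5, 15.0]
quiz_scores = [5, 5, 4, 4, 3, 2]

r = pearson_r(quiz_minutes, quiz_scores)
print(f"r = {r:.3f}")
```

A negative r in this setup means that longer completion times go with lower scores, which is the direction stated in hypothesis 1.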

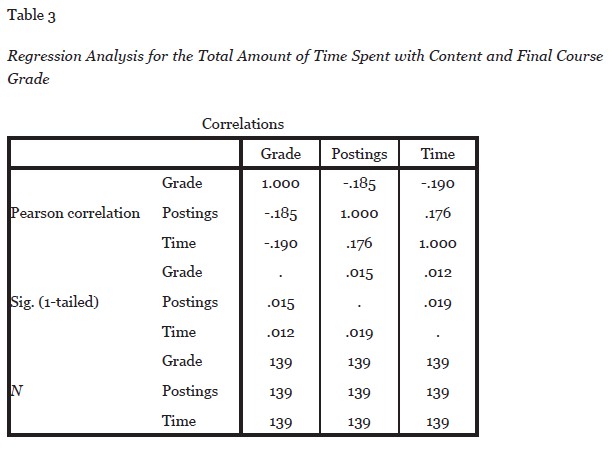

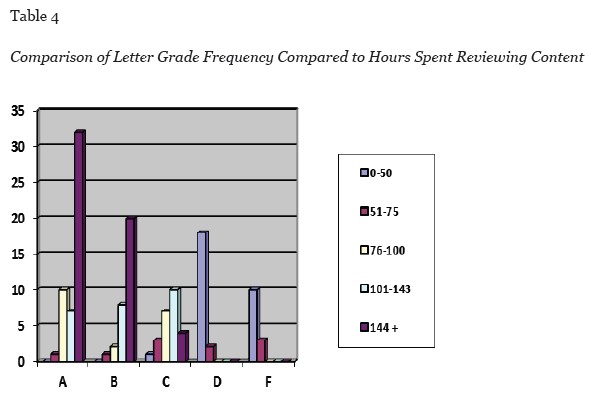

To answer the question of whether there was a grade difference among students who spent more time reviewing overall course content, multiple regression analysis was performed, including descriptive statistics. The number of discussions posted was counted, and the total amount of time spent reviewing all content was tallied and recorded based on the CMS records. Results revealed no statistically significant difference between the students, but the frequency of passing grades (A, B, or C) was noticeably higher among students who spent more time reviewing the course content, indicating practical significance in this area. Statistical results of the analysis for hypothesis 2 are shown in Table 3, and frequency counts for each letter grade are shown in Table 4.
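The frequency comparison described above can be sketched as follows. The paper does not state how students were grouped into "more" and "less" time, so the median split used here is an assumption, as are the records, the function names, and the group labels; none of this is the study's data or its SPSS procedure.

```python
from collections import Counter
from statistics import median

# Hypothetical records: (total_content_minutes, final_letter_grade).
records = [
    (120, "D"), (150, "C"), (200, "C"), (260, "B"),
    (310, "B"), (400, "A"), (90, "F"), (450, "A"),
]

PASSING = {"A", "B", "C"}  # passing grades as defined in the text


def grade_counts_by_time(rows):
    """Split students at the median content time (an assumed rule) and count grades."""
    cutoff = median(minutes for minutes, _ in rows)
    groups = {"less_time": Counter(), "more_time": Counter()}
    for minutes, grade in rows:
        key = "more_time" if minutes > cutoff else "less_time"
        groups[key][grade] += 1
    return cutoff, groups


def pass_rate(counter):
    """Share of passing grades in one non-empty group."""
    total = sum(counter.values())
    return sum(n for grade, n in counter.items() if grade in PASSING) / total


cutoff, groups = grade_counts_by_time(records)
for name, counts in groups.items():
    print(name, dict(counts), f"pass rate = {pass_rate(counts):.2f}")
```

A table of counts like this supports only a descriptive comparison; it does not replace the regression reported in Table 3.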

Additional research will be needed to determine the full relationship between learner-content interaction and course success. However, the results of this study suggest that learners who interact with the content more frequently achieve higher success in online courses. The results for hypothesis 1 indicate that students who spent more time with the content overall required less time to complete the quiz. This supports the hypothesis, and a strong statistical correlation was shown. Further, results of the initial correlation analysis of the weekly quizzes revealed that those who spent less time completing the timed quizzes scored higher. This suggests that these students may have known the answers and thus did not need to search for them during the open-book quiz. This assumption is supported by the higher frequency of passing grades achieved by students who spent more time overall with the content.

Implications for online course instructors lie in these findings. First, instructors are encouraged to discuss the importance of interacting with the content as a way to achieve success. Although this should be intuitive to most students, their perceptions of online courses might lead them to believe that the only requirements are the quizzes and potentially the book. Since online courses are often accompanied by additional content such as blogs and PowerPoint lectures, this should be expressly discussed with the students. From a course design perspective, CMS designers should work to ensure that the content is easy to access and engaging. This could heighten learners’ motivation to spend time with the materials.

This study is not without its limitations. First, the small sample size and the fact that all participants were in the same course limit the generalizability of this study. Also, the quantitative nature of the study limited additional findings. For example, it is not known whether students were actually reviewing content for the full amount of time that they were recorded as being “in class” based on the CMS. It is also not known what contributed to the quantity of discussion postings and why some students chose to participate more than others. In future studies, these limitations could be addressed through a mixed-methods approach that includes student interviews or feedback surveys. For this study, interaction with the students was not possible, so interviews and surveys were not completed. Future research should consider these limitations and account for them in empirical studies involving learner-content interaction.

Akyol, Z., & Garrison, R. (2011). Understanding cognitive presence in an online and blended community of inquiry: Assessing outcomes and processes for deep approaches to learning. British Journal of Educational Technology, 42, 233-250.

Anderson, T., & Garrison, D. R. (1998). Learning in a networked world: New roles and responsibilities. In C. Gibson (Ed.), Distance learners in higher education (pp. 97-112). Madison, WI: Atwood Publishing.

Bain, Y. (2006). Do online discussions foster collaboration? Views from the literature. PICTAL (pp. 1-11). Aberdeen: PICTAL Conference Proceedings.

Dennen, V. P., Darabi, A. A., & Smith, K. J. (2007). Instructor-learner interaction in online courses: The relative perceived importance of particular instructor actions on performance and satisfaction. Distance Education, 28, 65-79.

Dunlap, J. C., Sobel, D., & Sands, D. I. (2007). Designing for deep and meaningful student to content interactions. Tech Trends, 51, 20-31.

Garrison, D. R. (1993). A cognitive constructivist view of distance education: An analysis of teaching-learning assumptions. Distance Education, 14, 199-211.

Garrison, D. R., & Cleveland-Innes, M. (2005). Facilitating cognitive presence in online learning: Interaction is not enough. American Journal of Distance Education, 19, 133-148.

Grandzol, C. J., & Grandzol, J. R. (2010). Interaction in online courses: More is not always better. Online Journal of Distance Learning Administration, 13, 1-18.

Hacker, R., & Sova, B. (1998). Initial teacher education: A study of the efficacy of computer mediated courseware delivery in a partnership context. British Journal of Educational Technology, 29, 3312-3341.

Hannay, M., & Newvine, T. (2006). Perceptions of distance learning: A comparison of online and traditional. Journal of Online Learning and Teaching, 2(1), 1-6.

Hillman, D. C., Willis, D. J., & Gunawardena, C. N. (1994). Learner-interface interaction in distance education: An extension of contemporary models and strategies for practitioners. The American Journal of Distance Education, 8(2), 30-43.

Jones, E. (1999). A comparison of all web-based class to a traditional class. Texas: ERIC Document Reproduction Service.

Jung, I., & Choi, S. (2002). Effects of different types of interaction on learning achievement, satisfaction and participation in web-based instruction. Innovations in Education and Teaching International, 39(2), 153-162.

Kearsley, G. (1995). The nature and value of interaction in distance learning. Distance Education, 12, 83-92.

Liu, Y. (2008). Effects of online instruction vs. traditional instruction on students’ learning. International Journal of Instructional Technology and Distance Learning, 2(3), 57-65.

Moore, M. G. (1989). Three types of interaction. American Journal of Distance Education, 3(2), 1-7.

Mullen, G. E., & Tallent-Runnels, M. K. (2006). Student outcomes and perceptions of instructors’ demands and support in online and traditional classrooms. The Internet and Higher Education, 9, 257-266.

Navarro, P., & Shoemaker, J. (1999). The power of cyberlearning: An empirical test. Journal of Computing in Higher Education, 11, 33-46.

Rhode, J. F. (2009). Interaction equivalency in self-paced online learning environments: An exploration of learner preferences. International Review of Research in Open and Distance Learning, 10(1), 1-23.

Salter, G. (2003). Comparing online and traditional teaching-a different approach. Campus-Wide Information Systems, 20, 137-145.

Schulman, A. H., & Sims, R. L. (1999). Learning in an online format vs. an in-class format: An experimental study. T.H.E. Journal, 26, 54-56.

Shneiderman, B., Borkowski, E., Alavi, M., & Norman, K. (1999, January 26). Emergent patterns on teaching/learning in electronic classrooms. Retrieved from Electronic Classrooms: ftp://ftp.cs.umd.edu/pub/hcil/Reports-Abstracts-Bibliography/98-04HTML/98-04.html

Song, L. (2007). A conceptual model for understanding self-directed learning in online environments. Journal of Interactive Online Learning, 6, 27-42.

Soo, K. S., & Bonk, C. J. (1998). Interaction: What does it mean in online distance education? ED Media & ED-Telecom. Freiburg, Germany.

Su, B., Bonk, C. J., Magjuka, R. J., Liu, Z., & Lee, S.-h. (2005). The importance of interaction in web-based education: A program-level case study of online MBA courses. Journal of Interactive Online Learning, 4, 1-19.

Torraco, R. J. (2005). Writing integrative literature reviews: Guidelines and examples. Human Resource Development Review, 4, 356-367.

Tuovinen, J. E. (2000). Multimedia distance education interactions. Educational Media International, 37(1), 16-24.

Vrasidas, C. (2000). Constructivism versus objectivism: Implications for interaction, course design, and evaluation in distance education. International Journal of Educational Telecommunications, 6, 339-362.